Ever since LCDs replaced CRTs, I have been acutely aware of blur. While my friends are happy to game on any old 60Hz panel, I find that even 144Hz TNs cause nasty blurring of objects or backgrounds in any sort of game with motion. This has constantly vexed me in games to this day, with one exception.

I tried the PG279Q in 2017 with its ULMB and...wow. Games that could sustain a high refresh rate felt like something I could reach out and touch, showing crisp environments in motion! Sadly, the tech was too new for a daily driver: brightness was incredibly low with ULMB on, and toggling ULMB required two OSD changes (brightness/ULMB) and one visit to the NVIDIA Control Panel. But I felt that the tech was truly the next big thing, and that refinements to monitors would surely produce displays that could be used seamlessly with strobing.

It is now six years later and...what happened? BFI is an ignored niche, with many displays either leaving the feature out or sloppily butchering their implementation to check the box. New OLED displays - including the 240Hz LG - are perfect candidates for strobeless BFI, but the feature isn't in last year's OLED gaming displays or this year's LG TVs. Finding any sort of reliable reviewer of strobing implementations is almost impossible, as even RTings and TFT Central often devote a single sentence to their strobing assessments. The only company that seems to care about strobing is Zowie, and they are firmly planted in 1080P, which is a non-starter in the days of hybrid WFH.

Even Blur Busters' own certification program only lists one 1080P model, and the site's list of blur reduction monitors is pretty old without any sort of qualitative assessment. (This isn't a criticism, just more a reflection of the tech's level of attention.) Recent game and GPU releases have me despairing of ever hitting 1000FPS/Hz at a reasonable resolution, as eye candy increases and AI upscaling has killed the impetus for well-optimized titles. So BFI is the only way to beat blur in the medium term.

I feel like all the pieces are there: LCDs have gotten quite fast from a G2G perspective and are now bright enough to allow 200+ nits while strobing, and OLEDs are hitting higher refresh rates while being bright enough for BFI, at least in a dark room. But the tech lies fallow and forgotten, serving a niche of consumers who will never move the needle on marketing projections.

Does anyone have any news on upcoming displays or good sites for strobing tests?

What is the Future of BFI as a Feature?

- Chief Blur Buster

- Site Admin

- Posts: 11714

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: What is the Future of BFI as a Feature?

HappyHubris wrote: ↑02 Mar 2023, 03:15
Even Blur Busters' own certification program only lists one 1080P model, and the site's list of blur reduction monitors is pretty old without any sort of qualitative assessment. (This isn't a criticism, just more a reflection of the tech's level of attention.)

Blur Busters is currently more focussed on inventing new display tests as well as doing services for businesses (services.blurbusters.com) and doing research (www.blurbusters.com/area51). The pandemic knocked the Blur Busters Approved programme off the rails -- I had hoped to have way more models by now. I should have way more announcements this year than 2022 had.

With the increased post-pandemic income, I need to hire more writers this year to light up the Blur Busters cover page again.

Also, have you ever tried VR? They have the most superlatively zero-blur behavior. Quest 2 has 0.3ms MPRT strobing. You can even virtualize a zero-blur display in VR, so you can play 2D and yesteryear stereoscopic 3D games onto a VR screen (virtualized computer monitor), using things like Virtual Desktop or BigScreen.

HappyHubris wrote: ↑02 Mar 2023, 03:15
Recent game and GPU releases have me despairing of ever hitting 1000FPS/Hz at a reasonable resolution, as eye candy increases and AI upscaling has killed the impetus for well-optimized titles. So BFI is the only way to beat blur in the medium term.

By the 2030s, I think 1000fps 1000Hz will be practical. We are going to press very hard for 1000fps advocacy, since the technology for 10:1 reprojection exists today. I downloaded the demo and converted 30fps to 360fps (12:1 ratio) on a mere Razer RTX 2080 using only a fraction of the GPU.

In theory, a GPU could spend 90% of its time rendering UE5-level detail and 10% on reprojection. There's enough memory bandwidth on the RTX 4090 to do 4K 1000fps 1000Hz reprojection, so hopefully by the 5000 or 6000 series, DLSS 4.0 or 5.0 will allow quadruple-digit frame rates via large-ratio reprojection technologies. We've got several projects in the pipeline, including a 1000fps white paper to educate game developers.
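The frame-rate arithmetic here can be sketched in a few lines of Python. This is only an illustration: the function name `reprojection_plan` and the fixed 90/10 split of GPU time are assumptions based on the rough figures in this post, not a description of how any real reprojection pipeline schedules its work.

```python
from fractions import Fraction


def reprojection_plan(render_fps, display_hz, reproject_share=0.10):
    """Return (ratio, render_budget_ms, reproject_budget_ms).

    ratio: displayed frames per originally rendered frame
    render_budget_ms: GPU time available to render one original frame
    reproject_budget_ms: GPU time available to produce one displayed frame
    """
    ratio = Fraction(display_hz, render_fps)
    render_interval_ms = 1000.0 / render_fps
    display_interval_ms = 1000.0 / display_hz
    # Rendering gets whatever share of GPU time reprojection does not use.
    render_budget_ms = render_interval_ms * (1.0 - reproject_share)
    reproject_budget_ms = display_interval_ms * reproject_share
    return ratio, render_budget_ms, reproject_budget_ms


if __name__ == "__main__":
    for fps, hz in [(30, 360), (100, 1000)]:
        ratio, render_ms, reproject_ms = reprojection_plan(fps, hz)
        print(f"{fps}fps -> {hz}Hz: {ratio}:1, "
              f"{render_ms:.2f} ms per rendered frame, "
              f"{reproject_ms:.3f} ms per displayed frame")
```

Under these assumptions, 100fps reprojected to 1000Hz leaves about 9 ms to render each original frame but only about 0.1 ms of GPU time per displayed frame, which is why memory bandwidth is the constraint mentioned above.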

Some good news: there is some OLED BFI work in progress for 240Hz panels, according to some of my inside contacts. It's not in the first models AFAIK, so give it time. I am working very hard behind the scenes to keep BFI alive, even if I am a big fan of 1000fps 1000Hz nowadays.

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on Twitter

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

- HappyHubris

- Posts: 15

- Joined: 02 Mar 2023, 02:56

Re: What is the Future of BFI as a Feature?

Thank you for the disturbingly prompt reply in the small hours of the morning! It's good to hear that OLED BFI is coming along well!

1. Are you talking about the 240Hz OLED panel used by LG in December or an upcoming panel release?

2. I'm a bit averse to headsets. Would the S3422DWG be a decent stopgap @ $350 with strobing (supposedly pretty good per Rtings) and HDR400? I'm nervous about VA black smearing as a new form of "blur."

- Chief Blur Buster

Re: What is the Future of BFI as a Feature?

VA is usually terrible for strobing/BFI. Incomplete GtG transitions show up as strobe crosstalk, because the pixel transition does not fully fit in the dark period between strobe flashes. VA strobing usually has bad strobe crosstalk (duplicate image effects even at framerate=Hz), especially at colder temperatures.
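The "does GtG fit in the dark period" idea can be expressed as a rough timing budget. The sketch below is a simplified model, not a measurement method: it assumes a single global backlight flash per refresh and that scanout, the slowest pixel transition, and the flash must all fit within one refresh period. The function names and the example numbers are hypothetical.

```python
def strobe_margin_ms(refresh_hz, scanout_ms, gtg_ms, pulse_ms):
    """Spare time (ms) in one refresh cycle after scanout, GtG and the flash.

    A negative result means the slowest transitions are still in progress
    when the backlight flashes, which this model treats as strobe crosstalk.
    """
    refresh_period_ms = 1000.0 / refresh_hz
    return refresh_period_ms - (scanout_ms + gtg_ms + pulse_ms)


def has_crosstalk(refresh_hz, scanout_ms, gtg_ms, pulse_ms):
    """True when the timing budget is exceeded under this simplified model."""
    return strobe_margin_ms(refresh_hz, scanout_ms, gtg_ms, pulse_ms) < 0


if __name__ == "__main__":
    # Illustrative numbers only, not measurements of any real panel.
    print(has_crosstalk(120, scanout_ms=4.0, gtg_ms=3.0, pulse_ms=1.0))
    print(has_crosstalk(120, scanout_ms=4.0, gtg_ms=12.0, pulse_ms=1.0))
```

With these made-up figures, a 3 ms transition just fits inside a 120Hz refresh cycle, while a 12 ms dark transition of the kind VA panels are known for does not.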

I'd stick to TN or "Fast IPS" screens if you want good quality strobing.

The new headset LCDs are so good that they produce less eyestrain than even cinema 3D glasses (Real3D, Disney3D), and are perfect zero-crosstalk at perfect framerate=Hz with zero stutters. I haven't seen any LCDs that beat VR LCDs, which now have less motion blur than CRT tubes! Ignore the Google Cardboard and ignore the nauseating demos, stick to 1:1 sync content (e.g. VR and real world stay in sync, no moving vehicles, no rollercoasters) and then the headsets can be quite comfortable and non-motionsicky. A Best Buy demo isn't representative of the great comfort that some headsets now offer, in a stationary-VR-world (not a rollercoaster app) in a stationary-sitting environment. If you're averse because of the dorkiness, fine. But I'm just mythbusting other common VR myths caused by people who try rollercoasters as the #1 first VR thing they try, etc, or seeing a headache stutterfest in a cheap Google Cardboard VR headset.

As for 240Hz OLED, BFI is not in any of the OLEDs released so far, but it is apparently coming from a future manufacturer using the same panel (e.g. BFI added to the firmware of a future model that is not yet on the market).

- thatoneguy

- Posts: 195

- Joined: 06 Aug 2015, 17:16

Re: What is the Future of BFI as a Feature?

Chief Blur Buster wrote: ↑02 Mar 2023, 03:31
We've got several projects in the pipeline including a 1000fps white paper to educate game developers;

This especially needs to be made clear to indie developers (just because you're working on a 2D pixel art game doesn't mean 60fps is enough ffs) and ESPECIALLY Japanese developers, who are so stuck in their old framerate-lock ways that it's a miracle to get a 60fps game out of them, let alone 120fps (apart from some outliers like Capcom, who are pushing for 144Hz for SF6 and offer 120fps support for DMC5).

- GammaLyrae

- Posts: 119

- Joined: 28 Mar 2018, 01:44

Re: What is the Future of BFI as a Feature?

The prospect of a Blur Busters-assisted OLED BFI is intriguing. I know it won't hit cert, but I'm confident time and attention would still be paid to image quality with BFI, including color volume and black crush / white crush / perceived contrast with it on.

Looking forward to it

Re: What is the Future of BFI as a Feature?

It's good and happy news that the Chief is going to help.

It's bad news that there was no movement towards any LP/BFI work done by manufacturers on their own.

@Chief

Can we hope for a perfect monitor? Or would a 240Hz OLED monitor only get a somewhat usable 120Hz mode, for example, and nothing else? Personally I find 60, 90 and 120Hz modes mandatory before I even consider a monitor. OK, maybe 60+120, but that's the absolute minimum.

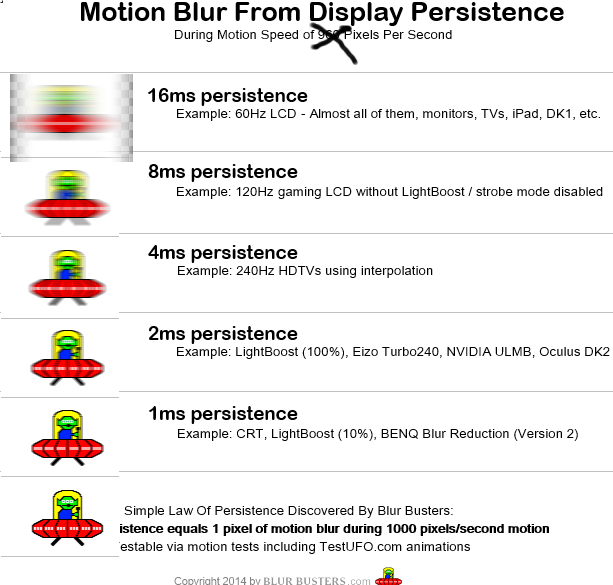

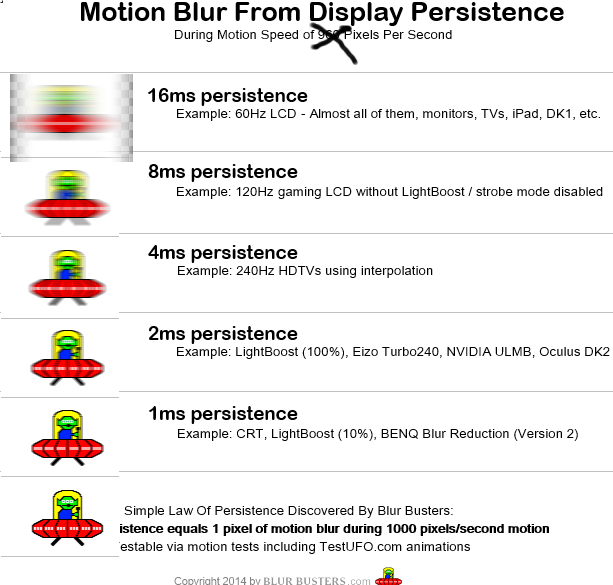

BTW, may I suggest a change like this? I always had to add a note when showing this to people on the internet that it applies only to slow motion. I've made a quick and crappy modification by simply moving everything down one row (and then doing a bad edit using the first blur filter I found, to replace the now missing slot). After this modification it much more accurately resembles what I see in real life. The speed used for the original one was 960 pixels. I think it could easily be 1920.

As it was, in the original version, some people may wrongly conclude that 240Hz without low persistence is good enough and acceptable, so they can continue to just ignore that issue.

Also, TAA throws the Donkey Kong's barrels on BFI. For people who were not aware this exists, here's a link to a subreddit where people talk about methods of disabling TAA:

https://www.reddit.com/r/FuckTAA/

TAA ruins the advantages of good low persistence. I tried playing 2D games with it. 3D games. PS5 games. Nah. No matter how many ignorant fools say "TAA is improved, it's great and doesn't blur anything!" it still is blurring and always will be.

I also advise checking mod websites like Nexus Mods and https://helixmod.blogspot.com/ where the awesome stereoscopic 3D (3D Vision) community made a lot of patches for 3D Vision. Some of the fixes include removing TAA in games where switching it off was previously not possible. Not sure if and how helpful it may be for people not using 3D, but probably.

- Chief Blur Buster

Re: What is the Future of BFI as a Feature?

RonsonPL wrote: ↑02 Mar 2023, 15:35
BTW, may I suggest a change like this? I always had to add a note when showing this to people on the internet that it applies only to slow motion. I've made a quick and crappy modification by simply moving everything down one row (and then doing a bad edit using the first blur filter I found, to replace the now missing slot). After this modification it much more accurately resembles what I see in real life. The speed used for the original one was 960 pixels. I think it could easily be 1920.

This is accurate.

Double speed = double motion blur = retina refresh rate goes higher.

This is important because higher-resolution displays put more pixels across the same eye-tracking angle: at the same pixels/sec the motion is physically slower, and at the same physical speed the motion covers more pixels/sec. So an 8K display has a much higher retina refresh rate than a 1080p display.
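The relationship between speed, persistence and blur can be worked through numerically. The sketch below assumes the usual rule of thumb that tracked-motion blur in pixels is roughly speed (pixels/sec) times persistence (seconds), and that a sample-and-hold display has persistence equal to one refresh period. The function names, and the choice of 1 pixel of blur as the target, are assumptions for illustration rather than an official definition of "retina refresh rate".

```python
def blur_px(speed_px_per_s, persistence_ms):
    """Approximate motion blur width in pixels for eye-tracked motion."""
    return speed_px_per_s * persistence_ms / 1000.0


def sample_and_hold_blur_px(speed_px_per_s, refresh_hz):
    """Blur on a non-strobed display, where persistence is one refresh period."""
    return blur_px(speed_px_per_s, 1000.0 / refresh_hz)


def hz_for_blur(speed_px_per_s, max_blur_px=1.0):
    """Sample-and-hold refresh rate needed to keep blur at or below max_blur_px."""
    return speed_px_per_s / max_blur_px


if __name__ == "__main__":
    # Doubling the speed doubles the blur at the same refresh rate.
    print(sample_and_hold_blur_px(960, 240), sample_and_hold_blur_px(1920, 240))
    # One screen width per second on a 1080p-wide vs an 8K-wide display.
    print(hz_for_blur(1920), hz_for_blur(7680))
```

At 240Hz without strobing, 960 pixels/sec gives about 4 pixels of blur and 1920 pixels/sec about 8. Moving one screen width per second needs four times the refresh rate on a 7680-pixel-wide display compared with a 1920-pixel-wide one to hold the same blur in pixels.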

Yep, I will be redesigning those images in a new motion blur education article at some point.

Perhaps they will be generated algorithmically by the open source Blurinator (a software-based MPRT + GtG emulator).

RonsonPL wrote: ↑02 Mar 2023, 15:35
I also advise checking mod websites like Mod Nexus and https://helixmod.blogspot.com/ where the awesome stereoscopic 3D (3D Vision) community made a lot of patches for 3D Vision. Some of the fixes include removing TAA in games where switching it off was previously not possible. Not sure if and how helpful it may be for people not using 3D, but probably.

Very interesting!

One of my problems is I need to hire more part time helpers/writers/freelancers to take over some of the writing while I do some of the more important/technical stuff. Blur Busters can only do so much solo.

- HappyHubris

- Posts: 15

- Joined: 02 Mar 2023, 02:56

Re: What is the Future of BFI as a Feature?

Chief Blur Buster wrote: ↑02 Mar 2023, 05:05
VA is usually very terrible for strobing/BFI. Incomplete GtG's show up as strobe crosstalk as GtG isn't fully fitting in the black cycle between strobe flashes. VA strobing usually has terrible strobe crosstalk (duplicate image effects even at framerate=Hz), especially at colder temps.

I'd stick to TN or "Fast IPS" screens if you want good quality strobing.

The new headset LCDs are so good that they produce less eyestrain than even cinema 3D glasses (Real3D, Disney3D), and are perfect zero-crosstalk at perfect framerate=Hz with zero stutters. I haven't seen any LCDs that beat VR LCDs which now have less motion blur than CRT tubes! Ignore the Google Cardboard and ignore the nauseating demos, stick to 1:1 sync content (e.g. VR and real world stays in sync, no moving vehicles, no rollercoasters) and then the headsets can be quite comfortable and non-motionsicky. A Best Buy demo isn't representative of the great comfort that some headsets now offer, in a stationary-VR-world (not a rollercoaster app) in a stationary-sitting environment. If you're averse because of the dorkiness, fine. But I'm just mythbusting other common VR myths caused by people who try rollercoasters as the #1 first VR thing they try, etc, or seeing a headache stutterfest in a cheap Google Cardboard VR headset.

As for 240Hz OLED, it's not in any of the released OLEDs on the market, but apparently in a future manufacturer of the same panel (e.g. BFI added to firmware of a future model that is not yet on the market).

Thank you for clarifying! I'm not looking at VR headsets at this time due to past nausea, and because my computer monitor is also my primary working and creative display. So ideally the next upgrade will check as many boxes for as many uses as possible. I appreciate your in-depth explanation!

Re: What is the Future of BFI as a Feature?

I don't think BFI will ever become more than a niche feature. Some people are just too sensitive to flickering, and with the increasing adoption of HDR, strobing will become more niche because it reduces brightness. Still, I think there's good news: in the future, we may have mainstream 1000Hz displays, which could simulate BFI. I hope there'll be a place for BFI, as I can't stand motion blur anymore since I discovered the magic of strobing.
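To make the "1000Hz display simulating BFI" idea and its brightness cost concrete, here is a rough sketch. It assumes an idealized display with instant pixel response, content running at an integer fraction of the refresh rate, and each content frame shown for a few refresh cycles followed by black ones. The function name `software_bfi` and its parameters are hypothetical.

```python
def software_bfi(display_hz, content_fps, lit_refreshes=1):
    """Return (pattern, persistence_ms, brightness_fraction) for one content frame.

    pattern lists each refresh cycle of the frame: True = image shown, False = black.
    """
    if display_hz % content_fps != 0:
        raise ValueError("display_hz must be an integer multiple of content_fps")
    slots = display_hz // content_fps
    if not 1 <= lit_refreshes <= slots:
        raise ValueError("lit_refreshes must be between 1 and display_hz // content_fps")
    pattern = [i < lit_refreshes for i in range(slots)]
    persistence_ms = lit_refreshes * 1000.0 / display_hz
    brightness_fraction = lit_refreshes / slots
    return pattern, persistence_ms, brightness_fraction


if __name__ == "__main__":
    pattern, persistence_ms, brightness = software_bfi(1000, 100, lit_refreshes=1)
    print(pattern)
    print(f"persistence {persistence_ms} ms, brightness {brightness:.0%} of full")
```

In this model, 100fps content on a 1000Hz display with one lit refresh per frame has 1 ms persistence but only a tenth of the display's full brightness, which is the same trade-off against HDR raised above.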