anothergol wrote:But you can't track something that's moving rapidly, just try it. Move your finger around in front of your eyes, tracked or not, it's not gonna be sharp. From the moment you move your finger fast enough for it to become a "blur trail" instead of a finger, there is just no way you can eye track it anymore.

Correct, that said, we need to cover both bases: Fixed gaze versus eye tracking.

You have mentioned precisely the point.

The maximum refresh rate needed (for a specific use case) is the threshold matching your maximum eye tracking speed. Beyond that, it's unnecessary. However, getting past all the "points of diminishing returns" extends surprisingly far.

Note: eye "tracking" can also apply to a physically moving screen while your eyes stay stationary in their sockets (the moving screen forces your gaze to travel across the display surface). For example, move your OLED Galaxy smartphone horizontally while watching http://www.testufo.com/eyetracking with a stationary gaze. Try it on your phone or tablet: load http://www.testufo.com/eyetracking and use your hand to move the phone horizontally to keep the 2nd UFO in a fixed-gaze position. The exact same display motion occurs, and the same tracking motion blur occurs.

This is commonly called "eye tracking" motion blur, but it applies to any vision/camera motion relative to a display's plane. No matter how you track -- pursuit cameras, tracking cameras photographing fast-moving objects (sports, Olympics), physically moving displays, eye tracking on displays, turning your head while keeping your eyeballs stationary in their sockets, head turning in virtual reality, etc. -- all of them create the same tracking-based motion blur, above-and-beyond human brain limitations. It's akin to waving a camera while taking a picture at a slow shutter speed: you get a blurry photo.

In blur-comparison tests, 60fps@60Hz and 240fps@240Hz on sample-and-hold displays (OLEDs, fast TN LCDs with good overdrive, or other display tech where GtG is an insignificant % of the refresh cycle), at the same relative angle-per-second tracking motionspeed, show motion blur (angle of blurring) that looks just like the blur difference between a 1/60sec and a 1/240sec camera shutter photograph. Slower shutters mean blurrier photographs, and the same thing happens when eye tracking smears frames across your retinas.

Scientists, vision researchers, display engineers and VR scientists finally realized that once GtG limitations were removed, this motion blur limitation still remained, and that it is not fixable without going to formerly-laughable ultrahigh refresh rates. Using static images to represent continuously moving (analog) objects creates motion blur limitations for tracking cases.

Yes, yes, human eyes aren't cameras. However, the principle of static refresh cycles being smeared across your retinas is still the principle of motion blur (that the TestUFO animations so eloquently demonstrate). As the TestUFO Thin-versus-Thick-Lines animations already demonstrate -- this specific type of blur animation is designed to highlight tracking blur instead of GtG. The longer the static refresh cycles are on for, the more time a refresh cycle can smear across your retinas. Once GtG is eliminated (or insignificant), the blur distance is exactly the same distance your eyes tracked between the beginning part of a refresh cycle and the end part of a refresh cycle.

Human eyes "cannot see 240fps" directly. We are simply seeing side effects. 240 blurred frames (whether blurred by tracking or blurred by pre-blending) in one second still shows up as sustained blurring that's now noticed by humans. Simple. That's it.

We just currently solve that by impulsing/flickering like a CRT. That acts like a fast shutter to prevent tracking based motion blurring. Like the special "GearVR Low Persistence Mode" on your phone which flickers your phone's OLED screen like a CRT to fix the motion blur from long frame visibility times.

Turn that mode off, and your OLED is no better in motion blur than a 1ms TN LCD! Yes, the OLED will have better colors and blacks, but, you get "LCD-like motion blur" even at 0ms GtG.

GtG and MPRT must simultaneously be low to eliminate tracking-based display motion blur.

At 960 pixels per second at 60Hz, that's (960/60) = 16 pixels of thickness for http://www.testufo.com/blurtrail (configured to Pixel Per Frame = 16). At 960 pixels per second at 240Hz, that's (960/240) = 4 pixels of thickness for http://www.testufo.com/blurtrail (configured to Pixel Per Frame = 4). Try it now: change the speed of http://www.testufo.com/blurtrail -- the line becomes thicker on your Samsung Galaxy OLED or your LCD desktop monitor during fast line motion. With a fixed gaze, the line is still 1 pixel thick, but the faster you move the line, the thicker it becomes when you track it.
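The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `blur_trail_px` is made up here, and it assumes an ideal sample-and-hold display (negligible GtG, frame rate equal to refresh rate) with perfect eye tracking.

```python
def blur_trail_px(speed_px_per_sec: float, refresh_hz: float) -> float:
    """Tracking blur thickness in pixels on an ideal sample-and-hold display.

    Assumption: GtG is negligible and one unique frame is shown per refresh,
    so each frame smears across the retina for one full refresh cycle.
    """
    return speed_px_per_sec / refresh_hz


# Same 960 px/s motion at different refresh rates.
for hz in (60, 120, 240):
    print(f"{hz} Hz: {blur_trail_px(960, hz):g} px of tracking blur")
# 60 Hz: 16 px of tracking blur
# 120 Hz: 8 px of tracking blur
# 240 Hz: 4 px of tracking blur
```

The result is the same number as the "Pixel Per Frame" setting in the test, which is why the pixel step and the tracked line thickness match.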

Try this on your Galaxy OLED smartphone or on a fast LCD monitor (preferably one with GtG far less than refresh cycle).

If your display uses PWM for dimming, adjust to maximum brightness to eliminate PWM-stepping artifacts.

(Note, some newer OLED phones may have a low-persistence mode ("GearVR Low Persistence") to produce CRT-like clarity for Google Cardboard VR mode -- flickering the screen to avoid motion blur. Disable this low-persistence mode during the below test.)

TestUFO Blur Trail at 4 pixel step

TestUFO Blur Trail at 8 pixel step

TestUFO Blur Trail at 16 pixel step

Twice the speed = Twice the tracking-based motion blur (on non-strobed/non-impulsed/non-CRT displays)

Note: you might see shimmering if scaled above 1:1 pixel mapping; e.g. pinch zoomed Galaxy smartphone (or zoomed desktop browser) causing 1-pixel variations in blur trail thickness -- that's simply scaling/aliasing artifact. However, the motion blur should actually outweigh the shimmering artifacts. For the sake of educational exercise, ignore scaling/aliasing artifacts and focus only on the thickness of the overall motion blur.

You'll see that doubling the pixel step will double the tracking-based motion blur. (You can still tell all lines are the same thickness in a fixed-gaze-at-display-center situation.) The only now-scientifically-proven way to avoid tracking-based motion blur is to shorten frame visibility time, whether that is the shutter in a camera, the flicker of a CRT/strobed LCD/strobed OLED, or the frame duration on a continuously-illuminated sample-and-hold display (which means more frames per second, and thus more refresh cycles per second to display each frame in its original razor-sharp form).

So, thusly:

1ms of frame visibility time = 1 added pixel of motion blur during 1000 pixels/sec motion.

So if you're doing a 240Hz display, sample-and-hold, 4ms frame duration time, that is 4 pixels of motion blurring during 960 pixels/sec. That will still cause TestUFO Panning Map Readability test to fail.

Now if you strobe instead (CRT phosphor, LCD strobe, OLED rolling scan), e.g. 1ms strobe flash length, the TestUFO Panning Map Readability Test stays sharp, avoiding tracking-based blur.

Another way to achieve 1ms frame visibility time is to fill all intermediate eye-tracking spots with sharp frames. (aka 1000fps@1000Hz). That can do essentially the same thing (assuming GtG isn't a limitation preventing such, basically good sub-refresh-cycle GtG).
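To make the "1ms of frame visibility time = 1 pixel of blur at 1000 pixels/sec" rule concrete, here is a small Python sketch comparing the three cases just described. The helper names are hypothetical, and the model assumes perfect eye tracking and GtG that is insignificant next to the frame visibility time.

```python
def blur_px(persistence_ms: float, speed_px_per_sec: float) -> float:
    """Tracking blur in pixels: 1 ms of visibility = 1 px at 1000 px/s."""
    return persistence_ms * speed_px_per_sec / 1000.0


def sample_and_hold_persistence_ms(refresh_hz: float) -> float:
    """On sample-and-hold, a frame stays visible for the whole refresh cycle."""
    return 1000.0 / refresh_hz


SPEED = 960  # pixels per second, as in the examples above

cases = {
    "240Hz sample-and-hold": sample_and_hold_persistence_ms(240),
    "1ms strobe flash": 1.0,
    "1000fps@1000Hz sample-and-hold": sample_and_hold_persistence_ms(1000),
}
for name, persistence in cases.items():
    print(f"{name}: {persistence:.2f} ms visible -> "
          f"{blur_px(persistence, SPEED):.2f} px blur at {SPEED} px/s")
```

Under these assumptions the 1ms strobe and 1000fps@1000Hz both land just under 1 pixel of blur, while 240Hz sample-and-hold lands at about 4 pixels.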

1ms vs 2ms vs 4ms GtG makes almost no difference to tracking motion blur at 60Hz, but it can be a chasm at 240Hz, where GtG really encroaches on the full refresh cycle length (or even beyond, like 33ms LCDs in the Bad Old Days, where LCD refreshes simply ghosted well into each other).
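One rough way to see why the same GtG figure matters so differently at 60Hz and 240Hz is to express it as a fraction of the refresh cycle. The Python sketch below does only that; the function name is an assumption for illustration, and a single GtG number is a simplification of real panels, where GtG varies per color transition.

```python
def gtg_fraction_of_refresh(gtg_ms: float, refresh_hz: float) -> float:
    """GtG time as a fraction of one refresh cycle (1.0 = the whole cycle)."""
    refresh_cycle_ms = 1000.0 / refresh_hz
    return gtg_ms / refresh_cycle_ms


for refresh_hz in (60, 240):
    for gtg_ms in (1, 2, 4, 33):
        share = gtg_fraction_of_refresh(gtg_ms, refresh_hz)
        print(f"{gtg_ms:>2} ms GtG at {refresh_hz:>3} Hz = {share:6.0%} of a refresh cycle")
```

At 60Hz, 1ms to 4ms covers roughly 6% to 24% of the cycle. At 240Hz, 4ms is about 96% of it, and 33ms is longer than a full cycle at either rate, which is the ghosting-into-the-next-refresh case.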

Yes, that's true -- 240fps@240Hz (at 0ms GtG, or GtG that's an insignificant percentage of the refresh cycle) has the same motion blur at the same speed as a 1/240sec camera shutter. That still creates blurry photographs during fast camera panning, like photographing a ski jumper. If you track perfectly, the skier will be sharp but the background will be blurry. If you don't track, the skier will be blurry but the background will be sharp. Now imagine a skiing game or skiing video. You can only do EITHER (sharp background or sharp skier) but not BOTH simultaneously IF you also need to avoid the stroboscopic stepping effect TOO.

A human can randomly decide to track the skier on a screen, or track the background behind the skier. Strobing (and CRT) allows tracking to remain sharp, but that adds a stroboscopic stepping effect. Stepping effects in the background can be seen when tracking the skier (if the background is not pre-blurred by a slow camera shutter). For a game (GPU effects), you can do essentially the same by pre-blurring/pre-blending the scrolling background to compensate for the stepping effect, but that spoils the situation when the human decides to track the background instead of the skier: the background will look blurry. (That's also often what happens on a real TV: the background is still blurry when you eye-track it, even on a CRT, if the video camera used a slower shutter than needed to be crisp & clear at your eye's maximum tracking speed.)

Moral of the story: you cannot control whether a human decides to track their eyes across a display's plane, unless you're using some high-speed eye tracking sensor and can physically (or via projection) flit the display around to keep the center of the display at your gaze center. That has the advantage of being able to display a higher resolution only where it matters (instead of in peripheral vision), possibly making 8K unnecessary. Research is already being done on this in VR -- e.g. eye-tracking displays that actually move a projected display around to match your eye tracking (e.g. a high-resolution smaller display in the middle of a bigger low-resolution display) -- like this startup. This could be a way to solve both the tracking situation and the fixed-gaze situation simultaneously, without unobtainium refresh rates/frame rates, since it's analog movement of a display staying in sync with eye tracking -- problem solved in a framerateless manner. It could be done with a projector and a high-speed mirror to do the same thing on a wall, giving CRT-quality panning without needing impulsing. However, that doesn't help desktop displays, which can't physically move to keep in sync with eye tracking.

So, how do you solve everything in the above paragraphs simultaneously? Yep -- the only way to make it look like reality is to remove the 240 limitation, and go to refresh rates/frame rates that match your maximum eye tracking speed.

In fixed gaze, you can tell there's a stepping effect if you flick fast enough. If the mouse is going 50,000 pixels per second in the fastest arm-flick, then even at a 10 kHz refresh rate the mouse arrow tip jumps in 5-pixel steps (50,000 pixels/sec divided by 10,000 Hz = 5 pixels per refresh). This is a situation where frame-blending is recommended.
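The stepping arithmetic for the fixed-gaze case is the same division, just read as the gap between successive positions instead of a blur width. A minimal Python sketch, with a made-up function name and assuming one unique frame per refresh and no frame blending:

```python
def step_gap_px(speed_px_per_sec: float, refresh_hz: float) -> float:
    """Distance an object jumps between consecutive refreshes (fixed gaze).

    Assumption: one unique frame per refresh cycle and no pre-blending,
    so the object is drawn at discrete positions this many pixels apart.
    """
    return speed_px_per_sec / refresh_hz


FLICK_SPEED = 50_000  # px/s, the fast arm-flick figure used above

for hz in (240, 1_000, 10_000):
    print(f"{hz:>6} Hz: cursor jumps {step_gap_px(FLICK_SPEED, hz):.1f} px per refresh")
```

Even at 10,000Hz the gap is still 5 pixels, which is why blending is suggested for this case instead of chasing refresh rate alone.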

You want a refresh rate "high enough" for a human's eye tracking speed limit. The more pixels, the more pixels to be blurred. If the image is razor-sharp enough (e.g. retina display at huge scales -- a 4K or 8K TV, monitor, or VR, covering 30+, 45+ or more degrees of FOV), the defocussing effect of motion blur can be indirectly noticed. At low resolutions OR narrow FOV, it's not noticeable. But at super-high resolution AND very wide FOV, the effect becomes a problem: panning scenery defocusses itself (above-and-beyond natural human brain blur). You are probably familiar with how panning scenery on a CRT looks as sharp as stationary scenery, thanks to the CRT's short visibility time (phosphor). To do that without flicker requires analog-like motion (insanely high frame rates @ high refresh rates).

With your CRT, load the TestUFO Panning Map Street Label Readability Test. That will test your maximum eye tracking speed. Most people have said that they are still able to read the street name labels at 960 pixels per second on a CRT. Some can go up to 3000 pixels/second or more -- sometimes the label is not onscreen long enough to read it (limited FOV of CRTs, alas), but with a wider FOV you have more time to read during eye tracking. Think giant retina monitors on a desk, 4K/8K wall-size TVs (10-foot-size images from projectors etc), or VR. (CRTs can't get big & sharp enough simultaneously for that, so we need other display technologies to take up those mantles.)

But it's actually not that important whether you can read the labels in the TestUFO Panning Map Test or not: you'll still notice whether the labels are sharp or blurry. What's important is that you can see the text is razor sharp on your CRT even at 960 pixels per second. There isn't the "1-2 pixels of motion blurring" that would be enough to obscure the small text into non-readability. (Less than 1-2 pixels of blurring = frame visibility time is less than 1/960th or 2/960ths of a second = roughly 1 to 2ms persistence = your CRT phosphor is going essentially dark in less than 1ms or 2ms.) You can't keep the street name labels readable if you shine a frame for longer than that. (And blending won't help here, either.)
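The persistence figures in that parenthetical come from running the blur rule backwards: given a blur budget in pixels and a tracking speed, solve for the longest allowed frame visibility time. A short Python sketch, with a hypothetical function name and assuming perfect eye tracking:

```python
def max_persistence_ms(blur_budget_px: float, speed_px_per_sec: float) -> float:
    """Longest frame visibility time (ms) that keeps blur within the budget."""
    return blur_budget_px / speed_px_per_sec * 1000.0


for budget_px in (1, 2):
    limit = max_persistence_ms(budget_px, 960)
    print(f"{budget_px} px blur budget at 960 px/s -> frame visible for at most {limit:.2f} ms")
```

That gives roughly 1.04ms and 2.08ms, i.e. the "1 to 2ms persistence" quoted above.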

The defocussing effect (of display motion blur) is something that doesn't happen to physical material being scrolled at the same speed (inches per second at the same physical distance) -- you can tell that a book's text stays crystal sharp if you wave a book slowly across your face -- you won't have time to read text (maybe just one word) on a moving book but you can immediately tell the text is sharp instead of unfocussed (if you eye-track the slowly moving book being waved across your face).

So, naturally, you want a display to be crystal sharp at your eye's fastest tracking speed.

The variables vary (e.g. Smartphone -> small monitor -> big monitor -> huge wall size TV -> narrow FOV VR -> wide FOV VR -> Holodeck) but let's assume a huge HDTV on a desktop.

If you sit only 2 feet from a 4K HDTV (using it like a computer monitor), you will be able to see pixels; it won't be quite "retina" sharp anymore at this distance (as proven by http://www.testufo.com/aliasing-visibility ). You'll definitely notice 1 pixel of motion blurring at 960 pixels/sec easily. However, 960 pixels/sec is slow -- it takes 4 seconds for a moving object to go from the left edge to the right edge of a 4K HDTV. It takes only roughly 1 second of eye tracking (usually less) to notice if an image is not fully crystal sharp (e.g. reading just one word scrolling past the screen). So, take 3840 pixels/sec for this specific situation: that motionspeed still takes 1 second to go from the left edge to the right edge of a 4K display occupying about 30-40 degrees of FOV. At that FOV and 40-degrees-per-second eye tracking, eye tracking is sufficiently accurate in most humans to detect whether something is in focus or out of focus (motion-blurred) at non-retina pixel densities.
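The edge-to-edge times and the degrees-per-second figure can be checked with a quick Python sketch. The function names are illustrative only, and the pixels-per-degree conversion assumes the 3840-pixel width is spread evenly over a 40-degree FOV, which is a flat approximation that ignores the geometry of a wide flat screen viewed up close.

```python
def crossing_time_sec(width_px: float, speed_px_per_sec: float) -> float:
    """Time for an object to travel the full display width."""
    return width_px / speed_px_per_sec


def angular_speed_deg_per_sec(speed_px_per_sec: float, width_px: float, fov_deg: float) -> float:
    """Convert px/s to deg/s, assuming pixels are spread evenly over the FOV."""
    px_per_degree = width_px / fov_deg
    return speed_px_per_sec / px_per_degree


WIDTH_PX = 3840  # 4K horizontal resolution
FOV_DEG = 40     # upper end of the 30-40 degree range mentioned above

for speed in (960, 3840):
    print(f"{speed} px/s: {crossing_time_sec(WIDTH_PX, speed):g} s edge to edge, "
          f"{angular_speed_deg_per_sec(speed, WIDTH_PX, FOV_DEG):g} deg/s")
# 960 px/s: 4 s edge to edge, 10 deg/s
# 3840 px/s: 1 s edge to edge, 40 deg/s
```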

Obviously, some humans will have better vision than others, and some will have better eye tracking than others, but for the sake of simplicity, we're using the "sitting close enough to a 4K TV that it's no longer retina sharp" case -- like when some people repurpose 40-48" 4K 2160p HDTVs as a desktop computer monitor instead of using a 2x2 array of 20"-24" 1080p monitors in multimonitor mode (similar screen surface area, but it becomes a single surface).

So, we're going with a conservative 3840 pixels/sec eye tracking speed for this particular use case (4K at 40 degree FOV) as an eye-tracking-speed limit (you can turn your head to keep up, too) -- you will be able to tell whether there's 1 pixel of motion blur added on top of CRT motion clarity (zero motion blur during tracking situations).

Now, if you want CRT quality with no impulsing, you need to maintain no blurring at all tracking speeds up to your eye tracking speed limit. So, for that particular display (resolution, FOV, and fairly slow eye tracking speed) to show "real life analog motion" (without impulsing / flicker / etc), you'd need 3840fps @ 3840Hz to avoid tracking-based blurring. Your eyes are not digital stepper motors; your eyes move continuously as you track. Yes, there are erratic movements/saccades, but those only determine your maximum accurate tracking speed; you can still read moving text, like driving past road signs or walking past signs, etc. Display motion blur (eye tracking across statically displayed refresh cycles, even if they're only 1/240sec each) can affect the clarity of that sort of thing.

However, suppose we're using a much smaller display, e.g. a 10" LCD at 10 degrees FOV and a lower resolution of 1024x768 -- say, the original iPad. Then a human may only be able to track at roughly 500 or 1000 pixels/second before being able to tell whether there's blurring or not (narrow FOV = less time to track eyes to notice blur). In this case, you'd only need 500fps @ 500Hz for that specific FOV / resolution.
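Both examples (3840Hz for the close-up 4K TV, 500Hz for the small tablet) follow from one rule of thumb: on a flicker-free sample-and-hold display, the refresh rate needed to stay within a blur budget is the tracking speed divided by that budget. A minimal Python sketch, where the function name and the 1-pixel default budget are assumptions for illustration and GtG is again treated as negligible:

```python
def required_refresh_hz(max_tracking_speed_px_per_sec: float, blur_budget_px: float = 1.0) -> float:
    """Refresh rate (with matching frame rate) needed on sample-and-hold
    so that tracking blur stays within the budget at the top tracking speed."""
    return max_tracking_speed_px_per_sec / blur_budget_px


use_cases = {
    "4K TV at ~40 deg FOV, 3840 px/s tracking": 3840,
    "10-inch 1024x768 tablet, 500 px/s tracking": 500,
    "10-inch 1024x768 tablet, 1000 px/s tracking": 1000,
}
for name, speed in use_cases.items():
    print(f"{name}: about {required_refresh_hz(speed):g} fps @ {required_refresh_hz(speed):g} Hz")
```

Loosening the budget to 2 pixels halves the number, which is one way to see where the diminishing returns come from.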

The wider the FOV, the more time your eyes have to track (and to notice that things are blurry because of display (tracking) motion blur).

The bigger the display, the bigger the FOV and the pixels become, so you need more pixels (more resolution).

The higher the resolution, the more pixels to be blurred at the same angles-per-second eye tracking (up to your eye gaze resolution limit, and tracking speed limit). That's where the diminishing returns finally stop (finally, finally, finally ...LOL)

So at the end of the day, the bigger/sharper/wider FOV, the more problems are created by a display's long refresh cycle duration time (whether it's blended or not). With Microsoft's 1000Hz display, NVIDIA's 16,000Hz Augmented Reality display, Viewpixx 1440Hz lab DLP, etc, many scientists have learned quite a lot since then about display limitations.

Does it matter? Usually, no. We view handheld phones, 40" HDTVs all the way across a big living room, and 24" monitors sitting at the back of a desk. Often 60-70Hz can be good enough and people don't care about display motion blur. It's not important for a lot of use cases like writing emails, reading Internet news and writing documents, anyway! But the bigger/sharper/wider FOV, the bigger the problem becomes when trying to represent reality onscreen. (HDTV "seeing through a window of a moving train" test, or VR "it's just like real life" test, etc).

Once we've solved tracking motion blur (e.g. zero blur at the human eye's fastest tracking speed for a particular display/use case), the remainder of the solving can be done by blending: first go to (X)fps @ (X)Hz to solve the motion blur problem, then pre-blend to solve the stepping effect problem without needing to go above (X)fps @ (X)Hz. A margin is likely needed too, since the pre-blending may bump X slightly higher to compensate for the blur it adds. The variables vary a lot, but for sample-and-hold (no black periods, no flicker, no impulsing), the number X can end up being 100-200 for a specific small handheld display and above 1000 for a specific desktop display, and use cases already exist where certain "different-from-real-life" imperfections become visible below ~10,000Hz in wide-FOV retina VR situations for a percentage of people.

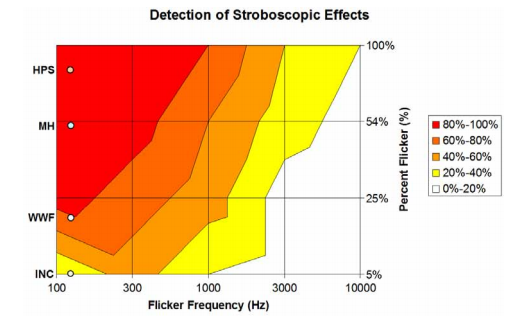

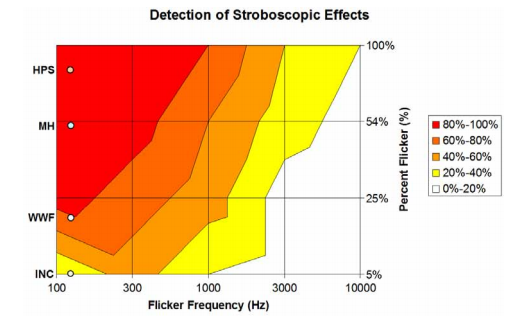

And the display industry is not the ONLY place where imperfections show up. The lighting industry has also found this out, e.g. 5000 Hz fluorescent ballasts still don't make five-sigma people happy.

From lighting industry paper

The numbers are apparently uncannily similar to the numbers that many VR scientists have discovered, in terms of stroboscopic stepping effects and/or motion blur limitations (at least at the "near final frontier" league of surround-retina).

TL;DR:

- Your maximum eye-tracking speed definitely affects the ideal maximum refresh rate needed to get past the "points of diminishing returns". However, display angular resolution relative to your eyes (and tracking time available) matters a great deal in determining the "perfect final frontier refresh rate where the diminishing returns disappear".

- Size/FOV/resolution matters a lot.

e.g. Smartphone -> small monitor -> big monitor -> IMAX or wall height TV -> narrow FOV VR -> wide FOV VR -> Holodeck.

- The bigger/sharper/wider FOV, the more time for tracking over more pixels across the display plane, the easier it is to notice imperfections such as blur (tracking across display plane) or stroboscopic stepping effects (gaze in fixed point of display plane).

- You cannot control whether a human decides to track or gaze. Thus, doing only blending isn't always a fix-all.

- This all doesn't matter if you're just doing email, reading news, editing source code, or writing docs.

- Heck, this might not even matter for you, on displays you play on, running specific games (& gameplay tactics) you play today.