Post #8 of a Series

Graham J wrote: mdrejhon wrote:

Yes.

The jelly effect is simply a perfect storm of factors. See my earlier post, as well as Post #1 and Post #2.

I work in the display industry with credit/references in over 20 peer-reviewed industry papers.

The tilt effect is from eye movement while the screen is mid-refresh. The frame is global, but the refresh is not global. To make the scan skew effect disappear, you need either a time-relative sync’d global refresh (instant frame + instant refresh) or sync’d beam racing (beam raced GPU refresh of each pixel row in sync with screen scanout).

Makes sense. I wonder, what would it look like if you rendered the first half of the frame scanout from a delayed buffer? So on each refresh you scan out half the frame from the delay buffer, then the other half from the current buffer, then copy the current buffer into the delay buffer.

Would that halve the effect?

No, it would not fix scan skewing.

Concurrent scanouts can worsen other kinds of artifacts or add saw-toothing. There’s a good late 90s paper by Mr. Poyton, from tests on LED marquee refreshing behaviours. See, he knew about scanout skewing in the 1990s! It shows some side effects of subdividing the scanouts: there’s a tearing artifact between the subdivided screens. To avoid that, the refresh has to be one continuous sweep of the same frame.

This is an artifact on a LED billboard that refreshes top/bottom halves concurrently.

It’s like two scanskewed screens flush edge-to-edge.

Artifacts occur from any form of temporally subdividing refresh behaviors:

(For best effect, do the TestUFO animations on a desktop monitor)

- Slow scan-out sweep = tilting effect (Demo: TestUFO Scan Skew)

- Interlacing = combing / venetian blinds effect (Demo: TestUFO Interlace Simulation)

- DLP color wheel = rainbow effect (Demo: TestUFO Rainbow Effect)

(Epilepsy warning: Flicker for TestUFO on 60Hz screen. DLP color wheels run at 240-360+ color flashes per second)

- Temporal dithering = noise and contouring artifacts (DLP & plasma)

- Concurrent scanouts = sawtoothing with stationary tearing artifact at scanout boundaries (like multiple misaligned scan-skewed screens)

There is a

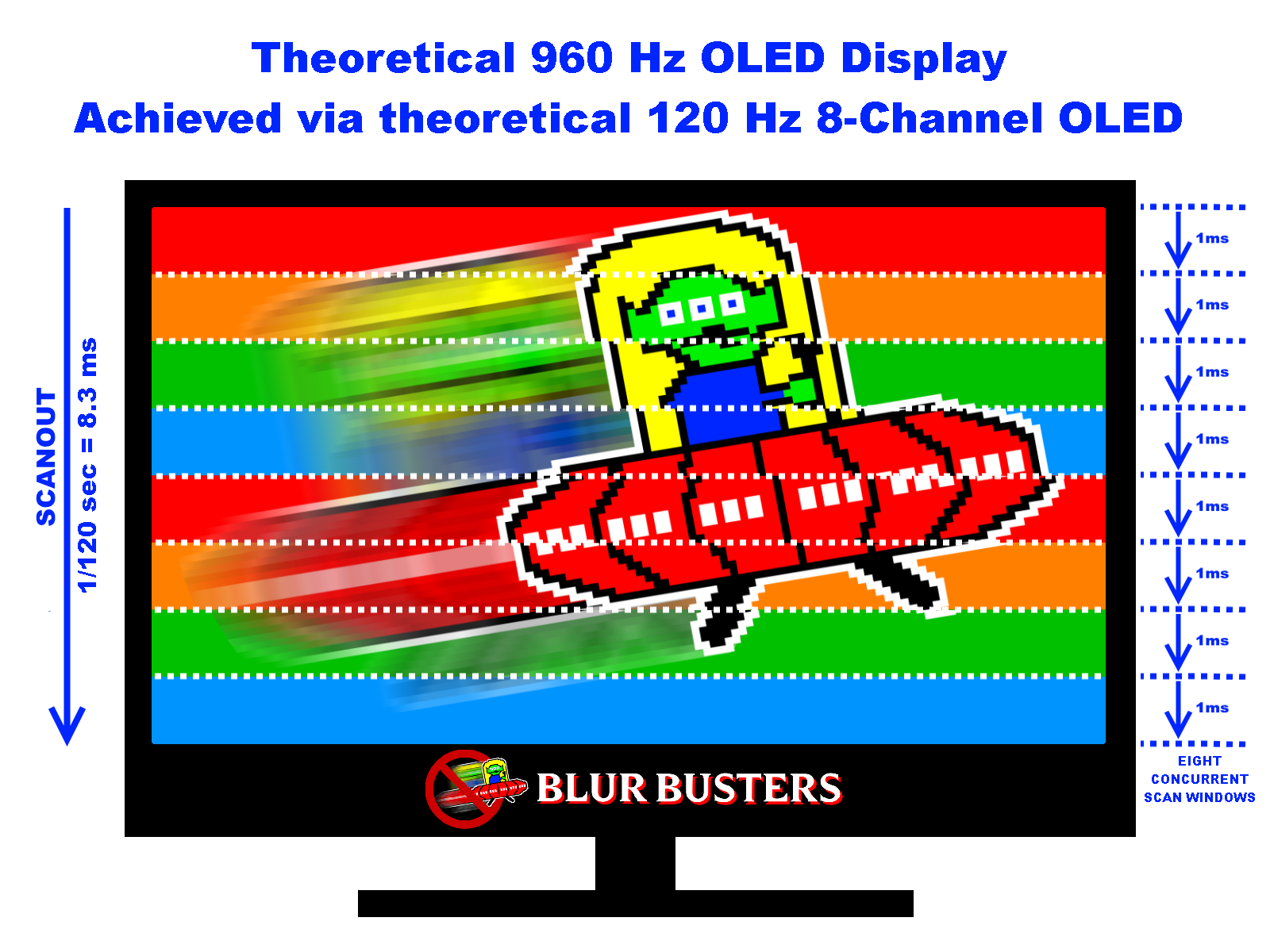

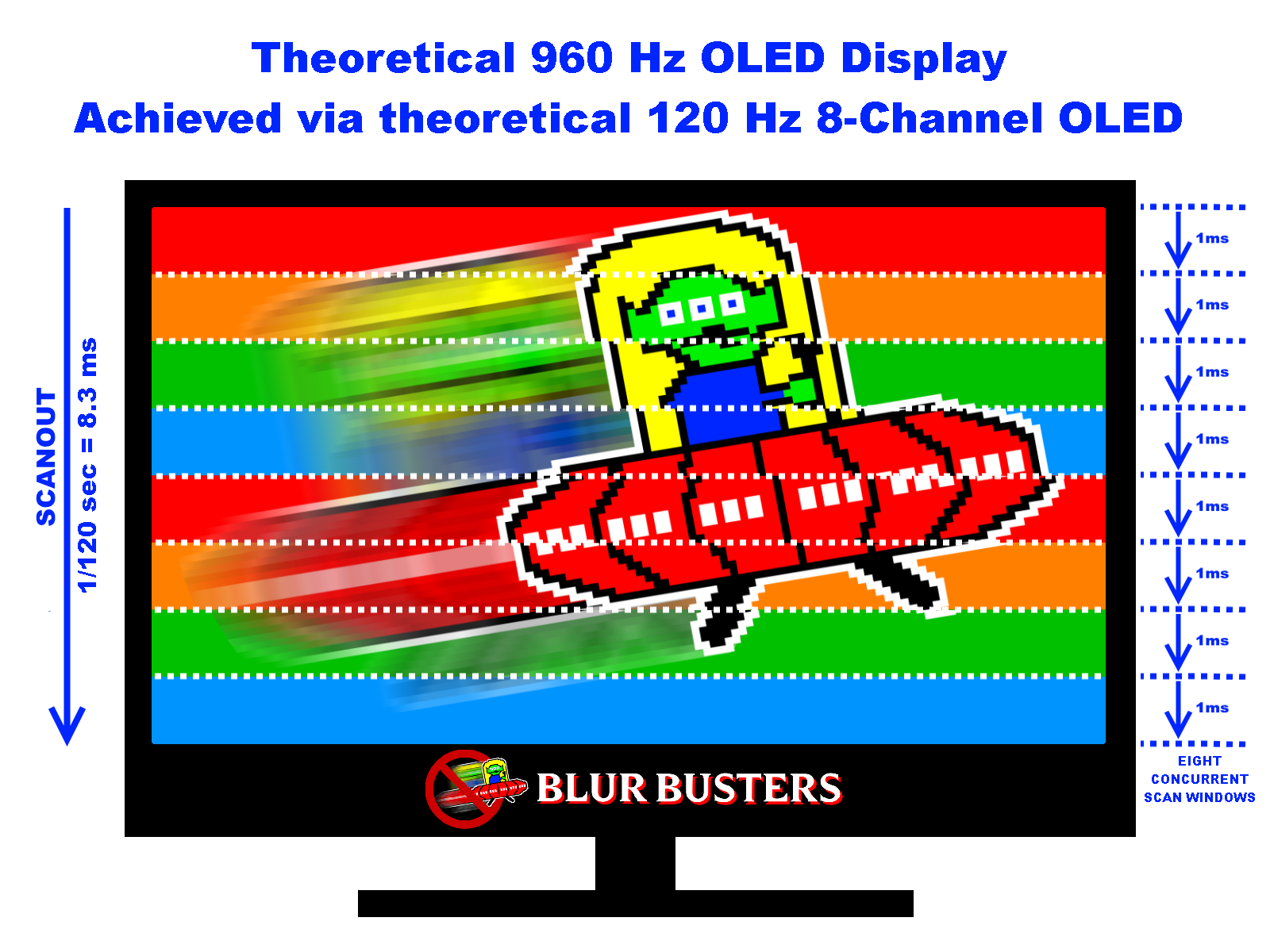

complex delayed-buffer multiscan algorithm that will fix the sawtooth+tearing artifact issue of subdivided scanouts, but it won’t fix scanskew, as the scanskew is linked to the velocity of the scanout. It is also described in this Blur Busters Forums thread of

theoretical concurrent scanouts for a theoretical native true 960 Hz OLED. To avoid tearing during multiscanning, the subdivided zone would need to inherit the subdivided screen’s framebuffer from the zone above. So that the scanout sweep is at the same velocity on the same global full-screen framebuffer, sweeping the same frame in one contiguous scanout sweep (even if there’s multiple concurrent sweeps going on). Essentially emulating a theoretical multiple-electron-gun CRT where each beam is assigned its own unique contiguous refresh cycle frame buffer in one continuous sweep per beam, despite multiple beams going on at the same time. For a serially-rendered and serially-delivered series of framebuffers from a GPU, it definitely would require buffer delays (as you suggest), but it doesn’t solve the skew, just the tearing. Basically the scanouts would “cascade” to the zone below (subdivided screen module/partitioning), in order to eliminate the zigzag/tearing artifact. It doesn’t fix scan skew, but it would fix the tearing.
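To make the cascade idea concrete, here is a rough Python sketch of the timing only (not a real display driver). The function names `row_time` and `boundary_jump` are made up for illustration, and it assumes equal-height zones, one sweep per zone per refresh interval, and no blanking interval. It compares the time step across the zone boundary with and without cascading:

```python
from fractions import Fraction

def row_time(frame, row, rows, zones, refresh_hz, cascade):
    """Time in seconds at which `row` begins showing `frame`.

    Simplifying assumptions: equal-height zones, each swept top-to-bottom
    once per refresh interval, and no blanking interval.
    """
    interval = Fraction(1, refresh_hz)
    rows_per_zone = rows // zones
    zone, row_in_zone = divmod(row, rows_per_zone)
    # Plain multiscan: every zone starts the frame in the same refresh cycle.
    # Cascaded: zone z inherits the frame one refresh cycle after zone z-1.
    refresh_index = frame + (zone if cascade else 0)
    return refresh_index * interval + interval * row_in_zone / rows_per_zone

def boundary_jump(rows, zones, refresh_hz, cascade):
    """Time step from the last row of zone 0 to the first row of zone 1."""
    rows_per_zone = rows // zones
    above = row_time(0, rows_per_zone - 1, rows, zones, refresh_hz, cascade)
    below = row_time(0, rows_per_zone, rows, zones, refresh_hz, cascade)
    return below - above

if __name__ == "__main__":
    for cascade in (False, True):
        jump = boundary_jump(1080, 2, 60, cascade)
        print(f"cascade={cascade}: boundary step {float(jump) * 1000:+.3f} ms")
```

Without cascading, the rows on either side of the boundary are almost a full refresh interval apart, which is the stationary tearline. With cascading, the step across the boundary is the same as any ordinary row-to-row step, but the frame now needs roughly two refresh intervals to get from top to bottom, so the skew is not improved.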

Tearing artifact is because of a large temporal difference between adjacent pixel rows. For one form of software tearing (VSYNC OFF), it’s a new frame, with its respective sudden increase in game clock, spliced mid-scanout into the GPU transmission (signal / cable) because frame delivery is not synchronized to refresh cycles; see tearing diagram created by 432 frames per second on 144 Hz. For one form of hardware tearing, it’s a sudden temporal difference in pixel refreshing between adjacent rows.

For all forms of tearing (software side and hardware-design side) it is caused by the large time interval (of the render or of the refresh) in the pixel rows above/below the tearline. So it is a big problem in concurrent scanouts (i.e. treating the screen as multiple subdivided screens) unless mitigated.
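As a rough illustration of the VSYNC OFF case (432 frames per second on 144 Hz), this small Python sketch estimates where the splices land within one refresh cycle. The helper name `tearline_rows` is hypothetical, and it assumes perfectly even frame pacing, a frame boundary aligned with the top of the refresh, a 1080-row panel, and no blanking interval. Real tearlines drift around because none of those assumptions hold exactly.

```python
from fractions import Fraction

def tearline_rows(fps, refresh_hz, rows):
    """Rows at which a new frame gets spliced in during one refresh cycle.

    Assumes even frame pacing, a frame starting exactly at the top of the
    refresh, and no blanking interval (all simplifications).
    """
    frame_time = Fraction(1, fps)
    refresh_time = Fraction(1, refresh_hz)
    row_time = refresh_time / rows
    positions = []
    t = frame_time
    while t < refresh_time:
        positions.append(int(t / row_time))  # row being scanned at time t
        t += frame_time
    return positions

if __name__ == "__main__":
    print(tearline_rows(432, 144, 1080))  # [360, 720]
```

Each tearline separates rows that come from frames rendered 1/432sec apart, which is the temporal difference between adjacent rows that the eye picks up as a tear.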

Regardless, even if tearing is fixed — concurrent scanouts never help scan skewing, as concurrent scanouts don’t speed up the scanout sweep.

In fact, it can make scanout skewing worse. Cascaded concurrent scanouts of the two halves of a 60Hz screen mean the full scanout sweep takes 1/30sec (1/60sec for the top half, then cascaded to the bottom half for another 1/60sec, for a grand total of 1/30sec in a slower scanout sweep). In other words, multiscanning can worsen scanskew because you’re reducing the global top-to-bottom scanout sweep velocity via the narrower-height stacked equivalents of multiple screens.

To understand better how scanout subdivision creates slower scanouts:

Although each subdivided screen slice (1/8th height) is scanned out in 1/960sec, the 8 cascaded slices mean a total top-to-bottom global sweep takes 1/120sec instead of 1/960sec. So scanout subdivision is mainly useful for future ultra high Hz screens made of modular technology (like an array of many tiny screens). Jelly effect would be super-nasty for 60Hz and 120Hz screens doing concurrent scanouts.
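The arithmetic behind both examples (two halves at 60Hz, and eight slices at 1/960sec each) is just the number of cascaded zones multiplied by the per-zone scan time. A minimal Python sketch, with `global_sweep_time` as an illustrative name and equal-height cascaded zones assumed:

```python
from fractions import Fraction

def global_sweep_time(zones, zone_scan_time):
    """Top-to-bottom sweep time of one frame across cascaded zones.

    Assumes equal-height zones and that each zone inherits the frame
    only after the zone above has finished scanning it.
    """
    return zones * zone_scan_time

if __name__ == "__main__":
    print(global_sweep_time(2, Fraction(1, 60)))   # 1/30
    print(global_sweep_time(8, Fraction(1, 960)))  # 1/120
```

So subdividing buys a higher per-zone refresh rate, but the global sweep for any single frame only gets slower as zones are added.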

Some screens necessarily use subdivided mini-screens (e.g. modular LED screens like Jumbotrons), and many of those LED JumboTron/Daktronics screen modules concurrently refresh at 600Hz-1920Hz (repeating each 60Hz refresh cycle between 10 and 32 times) as a skew/tearing/sawtoothing solution.

The modular “equivalent of many stitched-together tiny screens refreshing simultaneously” nature of giant LED screens can also in theory be commandeered to create a native 600Hz-1920Hz frame rate + refresh rate as a motion-blur-elimination method (brute Hz to create low-persistence sample-and-hold display) with some relatively minor modifications to the electronics — for ultra high refresh rate giant screens in the future. So the modularity actually helps the technological work-subdivision necessary to make ultra high refresh rates possible in a non-unobtainium way.

Multiscanning is a known shortcut to achieving retina refresh rates using today’s technology. Retina refresh rates (where the curve of diminishing returns flattens out and more Hz no longer yields human-visible benefits) are scientifically well in excess of 1000fps 1000Hz for a wide-FOV high resolution sample-and-hold display, due to the Vicious Cycle Effect of higher resolutions and wider FOV amplifying refresh rate limitations (from motion blur effects, stroboscopic stepping effects, or other motion artifacts generated from a finite refresh rate, as demonstrated by TestUFO Persistence, which only stops looking horizontally pixellated at ultra high refresh rates). This is well known in VR, but also applies to FOV-filling wall sized physical screens, some of which also happen to be built modular like many tiny screens running concurrent scanouts.

Global refresh displays in the past still required sequential behaviours (e.g. multiple fast low-color-precision scanouts like plasma/DLP, or things like refreshing/priming pixels in the dark before a delayed screen illumination pulse). So past low-Hz global refresh screens have always had worse lag and/or worse artifacts than good LCD screens.

Metaphorical technological thought exercise: If a screen truly refreshed globally and instantly without lag, then it’s fully idling between refresh cycles. Then ask oneself, why is the screen 60Hz instead of a higher refresh rate such as 120Hz? In other words, why not put an idling screen to more work doing more refresh cycles? Theoretical question to ask oneself. Modern color processing on GPUs can handle thousands of framebuffers per second nowadays, even on a midrange desktop GPU, for 2D graphics such as scrolling or panning. Even modern Apple/Samsung mobile GPUs can now browser-scroll at a few hundred composited framebuffers per second, and still only hit 50% GPU utilization (for scrolling overhead) on a retina screen for simple scrolling of render-light content like static web pages. The point being, there are no zero-lag global refresh displays. Otherwise, we’d be milking the extra Hz sooner and more cheaply. The only true zero-lag displays are displays that stream the signal directly onto the screen like a CRT in a fully raster synchronized manner, and that’s never a global refresh. Global refresh displays historically always had more lag than even a 60Hz CRT or modern low-lag fast-GtG 60Hz LCD.

High Hz single scanout is now ultimately the holy grail method of approaching global refresh in a compromises-free way (low lag, and very fast scanouts that behave as de facto global refresh). Combining global refresh and low/zero lag requires a display to do all of its processing during the practically global refresh. So the screen is effectively idling between refresh cycles. At that stage, it’s almost no extra cost to allow the display to at least optionally be able to accept more refresh cycles. Naturally, the converse is also true: If you’ve already designed a high-Hz single-scanout screen, it’s already de facto closer to a low/zero-lag global refresh.

Currently, the most scanout-motion-artifact-free technologies (ignoring ghosting differences) are high refresh rate LCD, OLED, LED, MiniLED and MicroLED displays that do a single-pass scanout per refresh cycle:

- Pixels refresh directly to final pixel color with no temporal tricks.

Fixes rainbows/noise/contouring artifacts

- Refreshing uses only one scanout sweep for minimum temporal difference between adjacent pixels, while also synchronizing framerate=Hz.

Fixes tearing artifacts

- High refresh rates mean an ultrafast scanout sweep (from the panel’s shorter refresh interval capability).

Fixes scan skewing (jelly effect) — even for worst jelly effect visibility conditions
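To put rough numbers on that last point, here is a back-of-envelope Python sketch. It assumes the top-to-bottom skew is roughly the eye-tracking speed multiplied by the scanout sweep time, and that the sweep takes one full refresh interval (real panels can scan faster than that). The 1000 pixels/sec tracking speed and the name `scan_skew_px` are just illustrative choices.

```python
def scan_skew_px(eye_speed_px_per_s, sweep_time_s):
    """Approximate horizontal offset between top and bottom rows.

    Assumption: the eye moves at constant speed while the scanout sweeps
    the screen, so the offset is simply speed multiplied by sweep time.
    """
    return eye_speed_px_per_s * sweep_time_s

if __name__ == "__main__":
    speed = 1000  # pixels/sec, an arbitrary example tracking speed
    for hz in (60, 120, 240):
        skew = scan_skew_px(speed, 1 / hz)
        print(f"{hz} Hz single scanout: about {skew:.1f} px of skew")
```

Under these assumptions the skew shrinks in direct proportion to the sweep time, which is why a faster single scanout helps and a slower cascaded multiscan sweep hurts.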