Eonds wrote: ↑28 Jun 2022, 11:19

Boop wrote: ↑27 Jun 2022, 11:31

Eonds wrote: ↑27 Jun 2022, 00:50

That's incorrect. It's not about FPS. FPS isn't latency.

1000fps = 1ms

500fps = 2ms

Higher FPS changes your overall system latency. If you're talking about comparing CPU A to CPU B with a framerate cap then I can see your point.

Obviously.... but FPS isn't latency. It's called Frames Per Second. If you comprehend exactly what that means then you wouldn't have written that reply. An average 10-20% fps boost doesn't mean anything. I'd like to see the 0.1% lows if anything. We're talking about architectural latency & improvements.

FPS does affect lag.

Higher frame rate is lower lag. The GPU takes 1/240sec to render a frame when a game is 240fps.
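For anyone who wants to check the arithmetic, here is a minimal Python sketch of the frame-rate-to-frame-time conversion. The function name `frame_time_ms` is made up for illustration, and it assumes perfectly even frame pacing, which real games don't have.

```python
def frame_time_ms(fps: float) -> float:
    """Average time per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Illustrative frame rates only; assumes evenly paced frames.
for fps in (60, 240, 500, 1000):
    print(f"{fps:>4} fps -> {frame_time_ms(fps):.2f} ms per frame")
```

This only covers the render-time part of lag, not anything that happens before or after the GPU.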

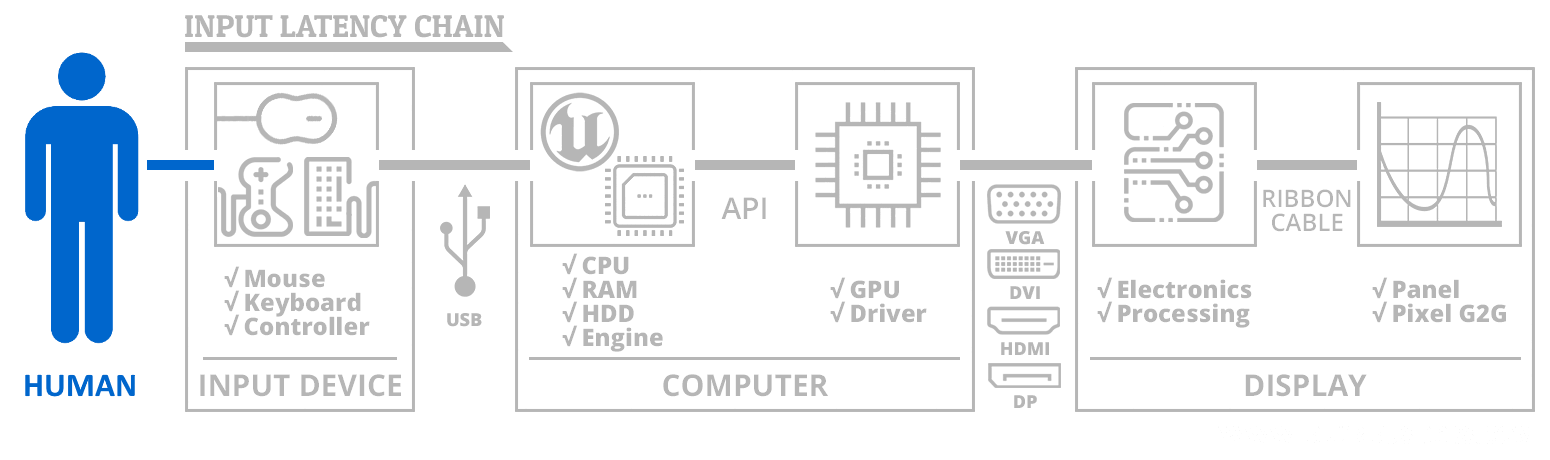

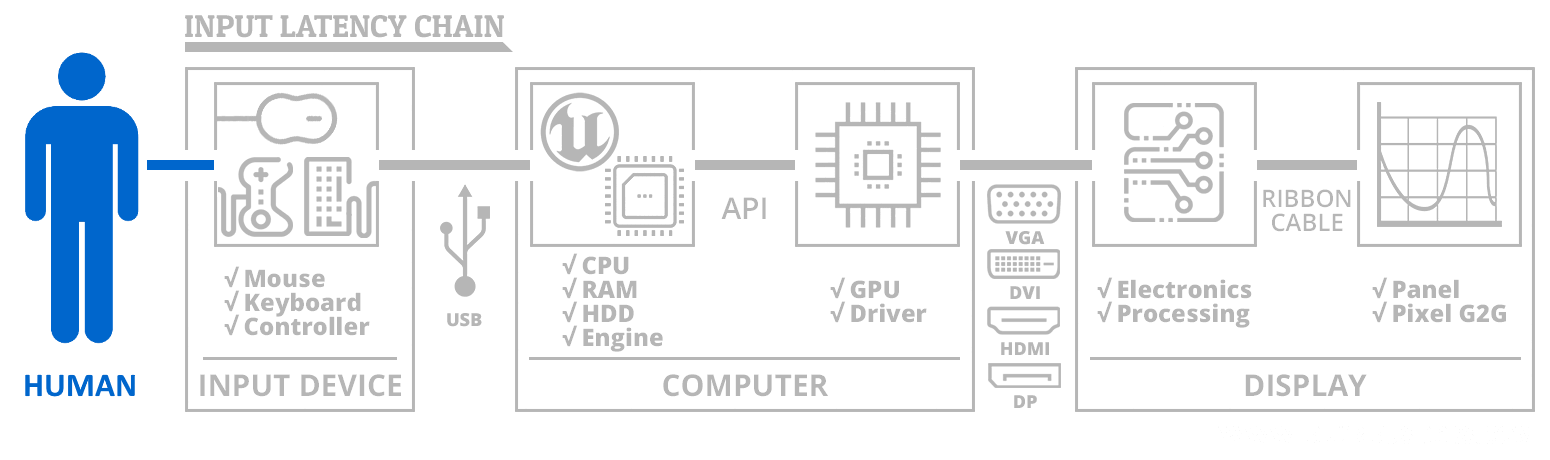

Latency is a chain:

Frames per second is a weak link in the latency chain, since lower frame rate = GPU took more time to paint a new frame.

<ELI5>

Wonder why they call it "frame"?

Wonder why they call it "paint a polygon"?

Wonder why they call it "draw a frame?"

Etc. They use drawing/painting/artist metaphors, for good reason.

So let's expand the metaphor.

Metaphorically, the GPU is like a high speed artist that draws (paints) a new frame, and 67fps means the GPU took 1/67sec to paint the frame (painting is something that is hidden inside the GPU chip and GPU memory) before it could be delivered to the screen. Drawing all those textures, triangles/squares/polygons, etc.

The math inside a GPU is shockingly similar to what was done on a drafting table in the 1950s: billions of trigonometry calculations per second are used to perspective-correct all the pixels, textures, and polygons that make up a 3D-rendered scene. And thanks to how things are parallelized, an RTX 3080 Ti is capable of over 1 trillion floating-point (numbers with a decimal) math operations per second. Math driving an automated artist called a GPU.

1 teraflops = 1 trillion flops

F.L.O.P.S. = Floating Point Operations Per Second

Floating Point = a math decimal point that moves left and right along a number,

such as 67.31243752 versus 67312437.52
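If you want to see the "moving decimal point" for yourself, here is a rough sketch using Python's standard `decimal` module. The helper `digits_and_exponent` is a made-up name. Note the assumption: GPUs use binary floating point, not decimal, so this only illustrates the idea of "same digits, different exponent".

```python
from decimal import Decimal


def digits_and_exponent(text: str):
    """Split a decimal literal into its digit string and power-of-ten exponent."""
    sign, digits, exponent = Decimal(text).as_tuple()
    return "".join(str(d) for d in digits), exponent


# The two example numbers from the post: identical digits, only the exponent differs.
for text in ("67.31243752", "67312437.52"):
    digits, exponent = digits_and_exponent(text)
    print(f"{text} = {digits} x 10^{exponent}")
```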

All RTX GPUs on the market do many hundreds of billions of math calculations per second in order to do their art. Figuring out where to begin drawing, from (X,Y,Z) to (X,Y,Z), and using trigonometry to rotate things. The GPU is blasting through millions and billions of tangents, sines, cosines, and arc equivalents, just to help you enjoy your game.

This works out to many thousands of math calculations for just ONE PIXEL

Read again:

Thousands of math calculations for just ONE PIXEL

Of just one frame.

GPUs are fast, but math takes a finite amount of time.

With the GPU being a math artist, it takes time for the GPU to paint-by-math.

So it takes a finite time to finish all the math and draw everything, long before it even thinks of sending the pixels to the DisplayPort output (also a finite-time maneuver). One frame in Cyberpunk 2077 is impossible at less than approximately 8 billion math calculations, at minimum detail level. Ultra detail requires a lot more. One CS:GO frame still takes many dozens of millions of calculations to finish, before doing the next frame. Try doing that with a pocket calculator! Even though the GPU is fast, it's not infinitely fast.
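To put a rough number on "finite time", here is a back-of-envelope Python sketch. It plugs in the approximate figures from this post (about 8 billion calculations per frame, about 1 trillion operations per second). The function name `min_render_time_ms` is made up, and it assumes the GPU sustains its peak rate with zero overhead, which never happens in practice, so treat the result as a lower bound.

```python
def min_render_time_ms(operations_per_frame: float, ops_per_second: float) -> float:
    """Idealized lower bound on render time: work divided by peak throughput."""
    if ops_per_second <= 0:
        raise ValueError("ops_per_second must be positive")
    return operations_per_frame * 1000.0 / ops_per_second


# Assumed inputs, taken from the rough figures in the post, not measured.
OPS_PER_FRAME = 8e9    # ~8 billion calculations for one frame
PEAK_OPS = 1e12        # ~1 trillion operations per second

best_case = min_render_time_ms(OPS_PER_FRAME, PEAK_OPS)
print(f"best-case render time: {best_case:.1f} ms per frame")
```

Real frame times are longer because memory access, scheduling, and the CPU side all add time on top of the raw math.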

Yes, you can cap the frames, which means you're idling the GPU before letting it draw a new frame.

But in general, if you're not capping, the GPU will paint the next painting (Frame #2) right after it finishes painting the previous one (Frame #1). The GPU is a serial artist that finishes a lot of paintings per second.

The more time the GPU spends painting a frame = the fewer frames per second. It can't spray as many picture frames per second if it takes too long to draw a single frame.

If a GPU can't finish a frame in less than 1/50sec, it is impossible for the GPU to do more than 50 frames per second (50 times 1/50sec = 1 second).
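Here is a small Python sketch of that ceiling, plus what a frame cap does to it. The function names are made up for illustration, and the model is simplified: it assumes a constant render time and a cap that simply makes the GPU wait out the remainder of each frame interval.

```python
def uncapped_max_fps(render_time_s: float) -> float:
    """Frame rate ceiling when the GPU starts the next frame immediately."""
    return 1.0 / render_time_s


def capped(render_time_s: float, cap_fps: float):
    """Return (effective fps, GPU idle seconds per frame) under a frame cap."""
    frame_interval = max(render_time_s, 1.0 / cap_fps)
    idle = frame_interval - render_time_s
    return 1.0 / frame_interval, idle


# A GPU needing 1/50 sec per frame cannot exceed 50 fps, cap or no cap.
print(uncapped_max_fps(1 / 50))
print(capped(1 / 50, cap_fps=120))

# A GPU needing 1/240 sec per frame, capped at 120 fps, idles about half the time.
print(capped(1 / 240, cap_fps=120))
```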

The boss is the game engine. It's the manager that orders the GPU (bosses your GPU around) to tell your GPU to paint "this and that", polygons, textures, pixels, sprites, and whatever you want. The framebuffer is the artist canvas the GPU is drawing to (hidden inside your GPU).

The software developer creates a game engine. The game engine is bossing the artist (GPU) around like a Frankenstein merger of your math teacher and your art teacher (into one entity) talking in binary. The game engine is the manager for your GPU, telling it what math it needs to do: the billions of math calculations per second that tell the GPU how to be the artist of a single frame. But it can only do one frame at a time. And it takes a finite amount of time for the GPU to draw the frame.

The more time the GPU spends painting a frame = the more lag before that frame gets delivered to the display pipeline (via the currently configured sync technology, VSYNC OFF, VSYNC ON, GSYNC, double buffer, triple buffer, fast sync, enhanced sync, RTSS Scanline sync, whateversync, etc). The sync technology happens AFTER the GPU finishes painting a frame.

But wait, it gets complicated: 100% GPU utilization can sometimes lag because it is too busy to do other things (e.g. garbage collection, or accepting new mouse deltas) and then the workflow lags badly from small software interruptions.

Metaphor version: Remember, the boss is still the game engine. And sometimes, if the GPU is 100% busy, it's hard for the boss to interrupt the GPU with instructions, so lag sometimes spikes a little when the GPU is at 100%. When the GPU is rushing frames out that fast, the sync technology can fall behind (e.g. buffering lag), and the CPU spends more time impatiently waiting for an overloaded GPU, because a boss can't easily interrupt a worker that's hurrying at 100% workload without increasing lag.

You can have bad display lag with good GPU lag (240fps on a super-laggy 60Hz display). You can have good display lag with bad GPU lag (37fps on an ultra-fast 240Hz display). But it's true that uncapped 240fps 240Hz has less lag than uncapped 37fps 240Hz, because the GPU took less time to paint each frame. Yes, you want to lower the GPU portion of your input lag by increasing your frame rate, without overloading your GPU.

You have to realize when people talk about lag, the lag is not one item. The lag is a total of multiple things in a latency chain. Yes, GPU lag (framerate lag) is not the same thing as display lag, but it's part of the button-to-photons lag.
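A toy Python sketch of the chain idea, if it helps. Everything here is a made-up illustration: the stage names, the 2 ms input/engine figure, and the 5 ms display figure are placeholder assumptions, not measurements, and the GPU stage is simplified to exactly one frame time.

```python
def latency_chain(fps: float, display_ms: float) -> dict:
    """Build a simplified latency chain; all stage values are illustrative."""
    return {
        "input + game engine": 2.0,    # hypothetical placeholder value
        "GPU render": 1000.0 / fps,    # simplified: one frame time
        "display": display_ms,         # hypothetical placeholder value
    }


def button_to_photons_ms(stages: dict) -> float:
    """Total lag is the sum of every link in the chain."""
    return sum(stages.values())


# Same display, same everything else, only the frame rate differs.
fast = button_to_photons_ms(latency_chain(fps=240, display_ms=5.0))
slow = button_to_photons_ms(latency_chain(fps=37, display_ms=5.0))
print(f"240 fps chain: {fast:.1f} ms, 37 fps chain: {slow:.1f} ms")
```

The point is only that changing one link changes the total, even when the display link stays the same.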

</ELI5>

TL;DR: More frames per second is generally less latency*

*Only if the GPU is not overworked