MatrixQW wrote:
Chief Blur Buster wrote: So, in other words, it can have a Rube Goldberg effect in reducing input lag.

Reduced cpu usage doesn't necessarily mean it will give more fps in games. The operating system could actually work more efficiently without that translating into the game fps.

What I'm trying to point out is that people confuse performance with input lag.

We are talking about input lag that affects games and these tweaks will do nothing like when we turn vsync on/off.

I just saw a blog with more than 100 tweaks to tune the operating system for gamers.

I take my chances saying that those things overall will not result in lower input lag or even a 2fps increase.

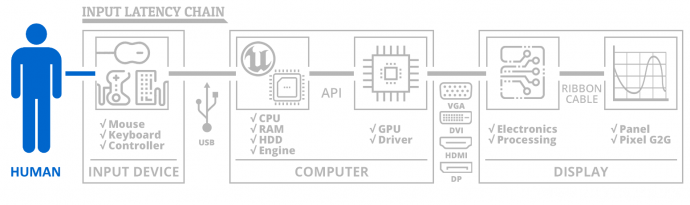

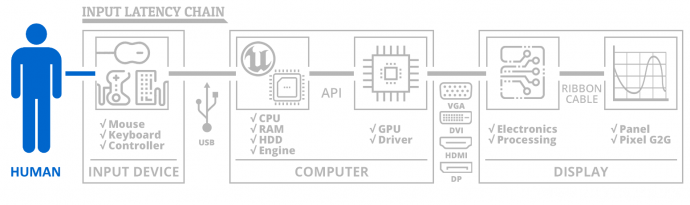

Mostly just semantics at this stage, but the input lag chain is a huge one.

Let's consider button to photons -- the entire lag chain.

Even this diagram is oversimplified:

See, still complex despite being a heavily simplified diagram. Whether it's USB cable latency, monitor processing latency, or pixel response latency (very slow GtG adds lag before photons hit human eyeballs). I even omit lots of subtleties, such as mouse antibounce filtering latency, or how different games process input reads relative to rendering. Those are complexities beyond the scope of this diagram. But the diagram generalizes the fact that latency occurs at many different parts of the "button-to-photons" chain.

Within this, there's also frametime latency: the amount of time it takes to generate 1 frame of graphics (as a co-operation between CPU and GPU).

Frametime latency is one tiny piece of the latency chain.

-- If performance improves, frametime latency can go down. For example, a game runs 110fps instead of 100fps.

-- The higher framerate often means frametime latency has gone down: input reads are often synchronized to frame rendering, so they now occur 1/110sec (about 9.1ms) before the new frame finishes rendering, instead of 1/100sec (10ms).

-- So better performance = higher framerate = lower latency, in many games.
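
To put numbers on the 100fps vs 110fps example, here is a rough Python sketch. It assumes the simplification used above: the input read happens exactly one frametime before the frame finishes rendering. Real games vary, and `frametime_ms` is just an illustrative helper name, not something from any game engine.

```python
def frametime_ms(fps: float) -> float:
    """Time to generate one frame, in milliseconds, at a steady framerate."""
    return 1000.0 / fps


# Assumption: input is read once per frame, one full frametime before
# the frame finishes rendering (a simplification, not true of every game).
before = frametime_ms(100)  # frametime at 100fps
after = frametime_ms(110)   # frametime at 110fps
saved = before - after      # reduction in this one piece of the lag chain

print(f"{before:.2f} ms -> {after:.2f} ms (saves {saved:.2f} ms)")
# prints "10.00 ms -> 9.09 ms (saves 0.91 ms)"
```

So the 10fps gain shaves a bit under 1ms off this single link of the chain. Small, but it is a real latency change and not just a performance number.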

It depends on the game and how it executes the input reads from the input devices, and how quickly "button-to-photons" occur.

So... that's why I call it "Rube Goldberg". The "button-to-photons" input latency is a chain so complex that it resembles a Rube Goldberg machine.

Often, better framerate performance = lower latency

Certainly, not always. But it's very commonly the case.

(Exceptions apply, such as SLI, since doubling graphics cards doesn't typically reduce frametime latency, but that's a whole different Pandora's box to explain.)