Casual “simple barely surface-scratch” answer:

It is correct that input lag is a misnomer in some ways here. Latency is a horridly complicated topic, and it is easy to make mistaken assumptions, especially when you are not an engineer. Sometimes those nanoseconds are super important (e.g. LIDAR) and other times less so.

But it gets WAY more complex than this.

Here is a very brief surface scratching:

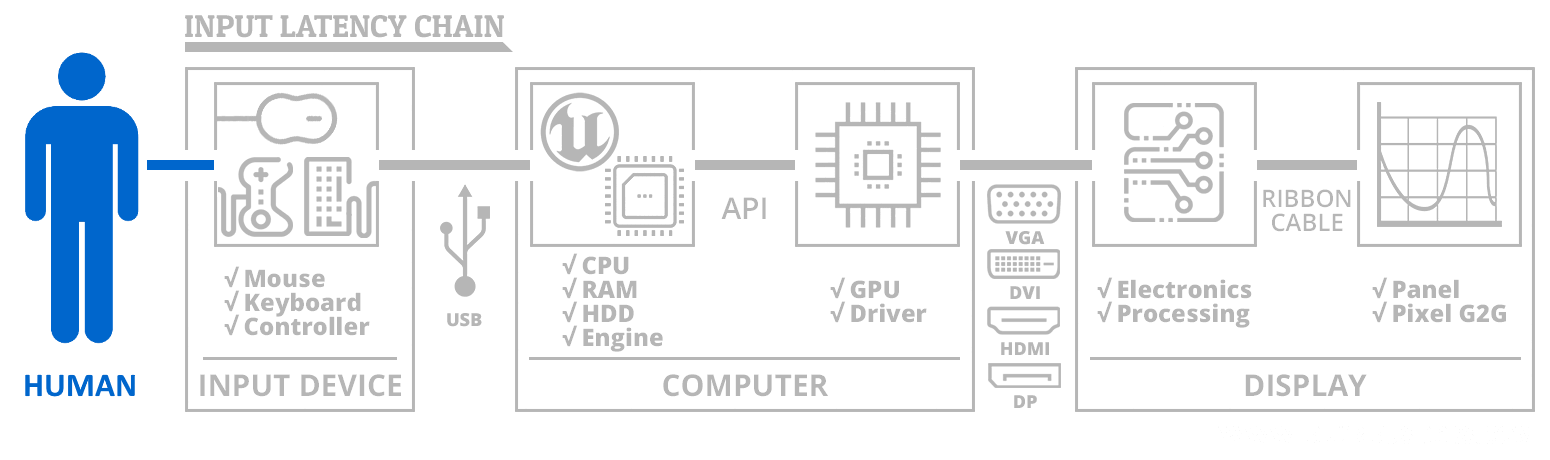

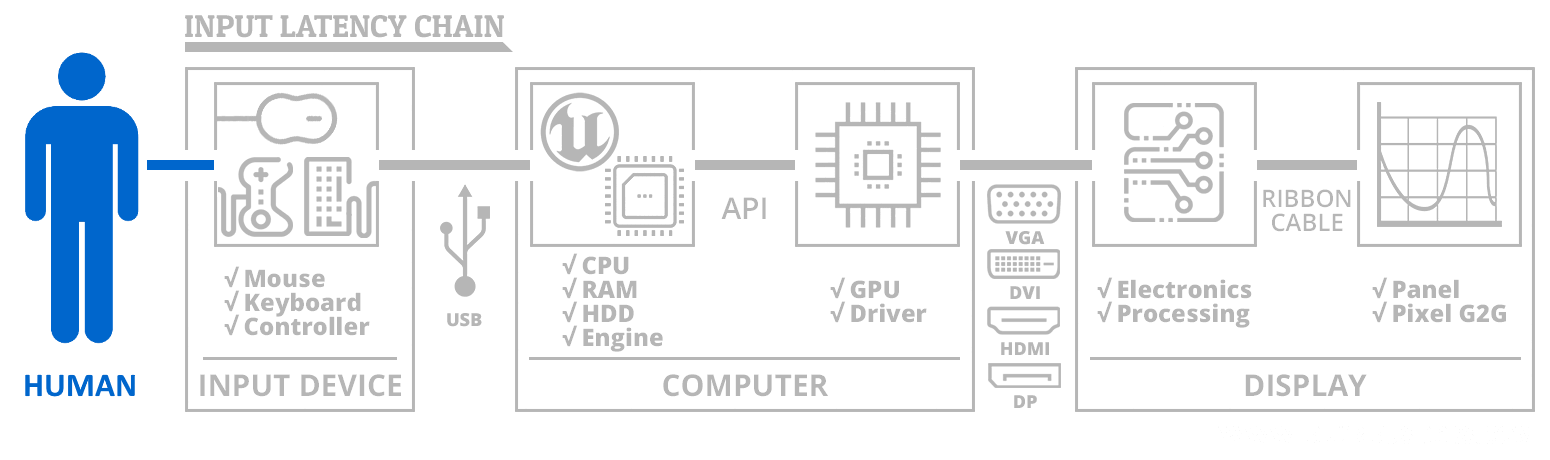

— There are multiple kinds of latency, such as latency jitter (variable latency), absolute latency (tape-delay-style latency), and sampling latency (a higher Hz at the same absolute lag feels less laggy), and they can hit different parts of the latency chain.

— One lag can appear while another lag disappears, for lag that is parallelized internally in the CPU, in the GPU, or in the interaction between CPU and GPU. For example, SLI gives higher frame rates but laggier frames. Likewise, a higher frame rate might occur simultaneously with more lagged USB processing, lagged main RAM processing, or lagged asset streaming. You might, for example, get a higher frame rate but a slightly laggier mouse. There are many possible scenarios.

— Etc. There is a practically endless number of causes of latency, whether a single line of computer code, a single transistor on a CPU, or the new latencies generated when many of them cooperate with each other. There are millions of lines of code and billions of transistors, and practically endless combinations in which latency can go wrong. I know I’m absurdly oversimplifying things, but it helps gain an appreciation.
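To make the first bullet a bit more concrete, here is a tiny Python sketch. It is only an illustration under my own assumptions: the function names are hypothetical, the numbers are made up, jitter is modeled as the standard deviation of the measurements, and sampling latency is modeled as the average wait for the next sample (half the sampling interval). Real measurements are much messier than this.

```python
import statistics


def summarize_latency(samples_ms):
    """Return (absolute, jitter): the mean latency and its spread, in ms.

    Assumption: "absolute latency" is approximated by the mean and
    "latency jitter" by the population standard deviation.
    """
    absolute = statistics.fmean(samples_ms)
    jitter = statistics.pstdev(samples_ms)
    return absolute, jitter


def average_sampling_latency_ms(rate_hz):
    """Average wait until the next sample for an event at a random time.

    Assumption: events arrive uniformly at random between samples, so the
    average wait is half of the sampling interval (1000 / rate_hz ms).
    """
    return 0.5 * 1000.0 / rate_hz


# Made-up measurements: same average lag, very different jitter.
steady = [20.0, 20.0, 20.0, 20.0]
jittery = [10.0, 30.0, 12.0, 28.0]

if __name__ == "__main__":
    print("steady :", summarize_latency(steady))
    print("jittery:", summarize_latency(jittery))
    for hz in (125, 1000, 8000):
        print(hz, "Hz ->", average_sampling_latency_ms(hz), "ms average sampling wait")
```

Both made-up runs have the same average lag, yet only one of them jitters, and the sampling wait shrinks as the Hz goes up even if everything else in the chain stays the same.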

Going into further detail about the nuances of latency ends up in engineer-speak, but this reply is meant to give an appreciation of the complexity of the latency subject. Those who are fascinated by this should consider looking into the appropriate education and work fields.

Needless to say, Ryzen vs Intel is not a simple yes/no answer. Each is superior at different things depending on the software and on what is being done in the hardware/software combination. More lag in one place and less lag in another are fighting against each other throughout the whole machinery of a system.

I know some people went to university because of reading Blur Busters, to learn more about electronics, computing, and engineering, because they were so fascinated. This topic is one of these; it is definitely very hard to distill into layman’s terms, but questions are always welcome even if not easily answered in forum posts.

One game may be superior on Intel and a different game may be superior on Ryzen, simply because of how they optimize for the respective virtues of the different architectures. Some gamers prioritize smoother, higher-Hz sampling over absolute latency, while others want the lowest absolute latency.

We all know how deliciously massively multicore a Threadripper is, and it is really great for heavily multithreaded workloads. When used with certain heavily multithreaded games or other software, those intercore latencies can actually make things worse or better (lag-wise, performance-wise, etc.) depending on how you force thread affinity. For example, if a game uses 2, 3 or 4 threads, they should be thread-affinity allocated to adjacent cores on the Threadripper to keep lag lower than if they were spread throughout the Threadripper. Optimizations like these make big differences for multithreaded processing that uses frequent inter-thread communication, but not all software needs frequent inter-thread communication. But I’m cherrypicking “one nuance”.
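As a rough illustration of forcing affinity onto neighbouring cores, here is a minimal Python sketch. Big caveats: `pick_adjacent_cores` and `pin_current_process` are hypothetical helpers I made up for this post, `os.sched_setaffinity` only exists on Linux, and I am assuming that consecutively numbered logical CPUs sit next to each other. That last assumption is often not true (SMT siblings and per-chip core layouts vary), so check your actual topology before trusting it.

```python
import os


def pick_adjacent_cores(available, count):
    """Return a set of `count` consecutively numbered CPU IDs, or None.

    Assumption: consecutive logical CPU numbers are physically adjacent,
    which depends on the CPU and OS and should be verified separately.
    """
    if count < 1:
        return None
    cores = sorted(available)
    for i in range(len(cores) - count + 1):
        window = cores[i:i + count]
        # IDs are sorted and unique, so this means they are consecutive.
        if window[-1] - window[0] == count - 1:
            return set(window)
    return None


def pin_current_process(count):
    """Pin the calling process to `count` adjacent CPUs (Linux only)."""
    if not hasattr(os, "sched_setaffinity"):
        return None  # Not supported on this platform.
    allowed = os.sched_getaffinity(0)
    chosen = pick_adjacent_cores(allowed, count)
    if chosen:
        os.sched_setaffinity(0, chosen)
    return chosen


if __name__ == "__main__":
    print("pinned to:", pin_current_process(2))
```

Whether pinning like this helps or hurts depends entirely on how chatty the threads are with each other, which is exactly the "one nuance" being cherrypicked here.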

As CPUs get more massively abstracted (pipelined, multicore, virtualized, hyperthreaded, etc.) we’re getting lots and lots more untraceable latency noise. Ever tinier buildups of imprecision (whether latency, jitter or otherwise) matter in the refresh rate race to retina refresh rates, where sub-millisecond latencies slowly start to show up as human-visible jitter as the Vicious Cycle Effect (higher rez, higher Hz, clearer motion) pulls back the curtain and lowers noise floors, Rube Goldberging its way to The Amazing Human Visible Milliseconds.

Cross-core latencies are way too diffuse to worry about on their own, but in the picture of trying to simultaneously process an 8000Hz device, run dozens of threads, and run a game engine, all on various random cores, we get that intercore latency in the noise. How it bears out as human-visible degradation requires a lot more research that is far more specialized than what Blur Busters does.

I know this does not answer your question, and I know that the Ryzen vs Intel debate will continue. But it brings an appreciation of the delicious complexity of the latency subject.