Binaries: https://github.com/NotThat/Benchmark-Di ... s/tag/v1.0

Benchmark.DX9.Black.White.exe - changes screen color to white when pressing 'w' button, changes to black when pressing 'b'. Esc to quit.

Benchmark.DX9.Human.exe - Uses the mouse buttons (and Esc to quit). The first click turns the screen red; after a random 3-4 second delay it turns green, similar to the popular Human Benchmark website. It keeps stats of min/max/avg delay as well as how many early clicks you had. Needless to say, it is much faster than the web version.
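For illustration, the bookkeeping described above could look roughly like the sketch below. This is not the actual source: `ReactionStats`, `record`, `record_early` and `random_delay_seconds` are made-up names, and drawing the delay uniformly from the 3-4 second range is an assumption.

```cpp
#include <algorithm>
#include <cassert>
#include <limits>
#include <random>

// Hypothetical stats tracker (names are illustrative, not from the program).
struct ReactionStats {
    double min_ms = std::numeric_limits<double>::infinity();
    double max_ms = 0.0;
    double sum_ms = 0.0;
    int samples = 0;
    int early_clicks = 0;

    // Record one valid reaction time, in milliseconds.
    void record(double ms) {
        assert(ms >= 0.0);
        min_ms = std::min(min_ms, ms);
        max_ms = std::max(max_ms, ms);
        sum_ms += ms;
        ++samples;
    }

    // A click before the screen turns green counts as early and is not timed.
    void record_early() { ++early_clicks; }

    // Average over the valid samples; 0 if there are none yet.
    double avg_ms() const { return samples > 0 ? sum_ms / samples : 0.0; }
};

// Assumption: the red-to-green delay is drawn uniformly between 3 and 4 seconds.
template <class Rng>
double random_delay_seconds(Rng& rng) {
    std::uniform_real_distribution<double> dist(3.0, 4.0);
    return dist(rng);
}
```

Early clicks are kept out of the min/max/avg on purpose, so one mistimed click does not skew the timing numbers.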

My original goal was to implement a program that changes the color of the screen on a keypress. I first implemented it in Love2D; when I wasn't happy with the performance, I implemented it in DirectX9, DirectX12, OpenGL, and Vulkan to see which one is fastest. DirectX9 ended up giving me the best performance, so that's what I went with.

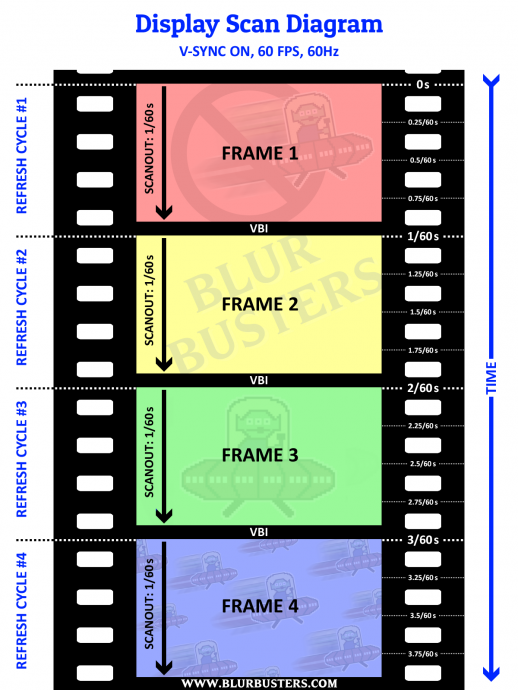

The program runs at ~6.7k-6.9k FPS on my machine, producing full-color, full-screen-resolution frames of a single color. My graphics card is showing 82% usage and I am CPU bound. Quite interesting, since I have a very strong CPU (i9-9900K) and a relatively weak graphics card (GTX 960). At lower resolutions it goes up to 16k FPS or so.
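To put those FPS numbers in terms of time per frame, here is a tiny conversion helper. `frame_time_us` is just an illustrative name, and the figures in the note below are plain arithmetic on the FPS values quoted above, not separate measurements.

```cpp
#include <cassert>

// Convert a frame rate into the duration of one frame, in microseconds.
double frame_time_us(double fps) {
    assert(fps > 0.0);
    return 1e6 / fps;
}
```

With this, 6,700 FPS works out to roughly 149 µs per frame, 6,900 FPS to roughly 145 µs, and 16,000 FPS to 62.5 µs.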

Once I had the program going, implementing the human benchmark on top of it was trivial, and since I figured people might be interested in it, I am sharing it here.

My focus was on getting everything to run as fast as I can. If anyone has ideas on how to get an increase in speed I would love to hear them. Some ideas:

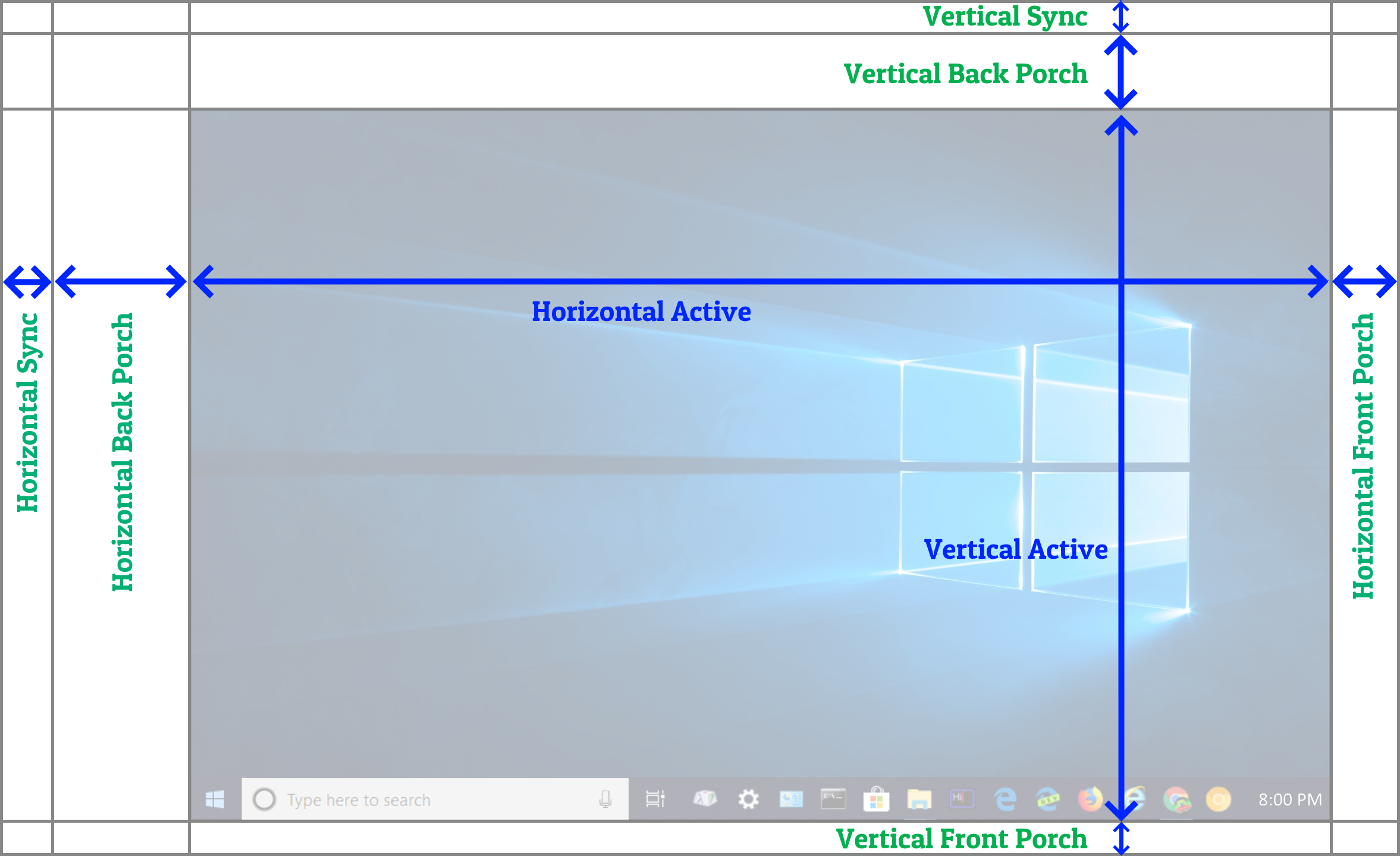

- Lowering resolution and/or color space. I rather like 1080p and feel as though deviating from that makes the task more synthetic and less applicable to real-world situations. It does have its uses, however.

- Different graphical implementation; which one?

- Using threads. I've implemented an option to use separate threads for input and rendering, but the results are about the same. Almost all of the run time is spent in the Present() call, which is out of my control.

- Not producing frames at all and simply waiting for the input before calling Present(). This does make sense and I have tried it, but empirically the best-case results were somehow worse. I suspect a power-saving issue in the graphics card; probably not in the CPU, as the CPU should remain active due to the busy input listening. However, I do not have an Arduino/Teensy yet to test this myself, and the numbers are from somebody else who does. Until I do, the only metric I have to judge by is FPS, and I know that if my implementation ends up producing more FPS, it should generally perform better as well.

- It is my understanding that at least some of the CPU overhead in calling Present() comes from the context switch when Windows has to go driver-side in kernel mode. I wonder if it's possible to do something about this, perhaps by writing the program to run in kernel mode like a driver? O_o
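For anyone who wants to experiment with the threading idea from the list above, one way to hand the color from an input thread to a render thread is a single atomic value. This is only a sketch under my own assumptions, not the code in the binaries: `SharedColor`, `on_key` and `color_for_next_frame` are hypothetical names, and the 32-bit ARGB color layout is assumed.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>

// Hypothetical state shared between an input thread and a render thread.
struct SharedColor {
    std::atomic<std::uint32_t> argb{0xFF000000u};  // start with opaque black
};

// Input side: publish the new color as soon as the key press is seen.
inline void on_key(SharedColor& s, char key) {
    if (key == 'w') s.argb.store(0xFFFFFFFFu, std::memory_order_release);
    if (key == 'b') s.argb.store(0xFF000000u, std::memory_order_release);
}

// Render side: read the latest color right before clearing and presenting.
inline std::uint32_t color_for_next_frame(const SharedColor& s) {
    return s.argb.load(std::memory_order_acquire);
}
```

The render loop would call `color_for_next_frame` once per frame, so a key press is picked up by the next frame that gets submitted. No lock is needed because the whole shared state fits in one atomic word.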

I'm curious to see some results, both from this and from the web one. Be sure to use a sample size of at least 5 clicks. Here's mine: