The embargo has lifted, so I can reveal something cool. I'm currently testing an amazing gaming mouse with an official 8000 Hz poll rate: the Razer Avalon. It is not overclocked -- this is a genuine, official 8000 Hz mouse sensor and poll rate. Razer clearly took my "More than 1000Hz" pleas, and the Overclock.net Mouse Overclocking Thread, to heart and actually built it.

I am creating an article about this, along with Mouse Guide Reloaded (Followup to previous Mouse Guide by sharknice).

If any of you want to tell me additional tests to try with the mouse for this week's article, let me know!

Yes, yes, the difference IS perceptible with fast GPUs on 240Hz, 280Hz or 360Hz.

Blur Busters article coming.

TL;DR Version

1000Hz is a 15-year-old pollrate that worked fine in the 60Hz and 120Hz days. But 1000Hz is showing weak links at 360Hz refresh rates.

Going higher than a 1000Hz poll rate is very clearly human visible on 360Hz+ gaming monitors. Are you familiar with how audio beat frequencies become more noticeable as two frequencies get closer together? Metaphorically, a similar thing is happening with mouse jitter: refresh rates are now getting too close to the mouse poll rate.

Long Version

I am now crossposting a reply from OCN Forums...

Crosspost #1 of 2

And that's not it. The 1000 Hz mouse poll rate came out in official mice about 15 years ago. Here's another crosspost:

Chief Blur Buster wrote:
FORE! ...I swing a golf club at a microphone sitting on my tee. <loud thwack>

ToTheSun!, post: 28644744, member: 216216 wrote:
Now, how that relates to how people would be able to even begin to benefit from 8000 Hz mice, I do not know. It's pretty self-explanatory how you would NOT SEE the difference on-screen, so invoking your seemingly superior ocular system is a bit irrelevant.

It is not placebo.

....But you need 360Hz to really easily see the >1000Hz issue.

Remember my old photograph of 125Hz vs 500Hz vs 1000Hz a few years ago? That was taken at 120Hz.

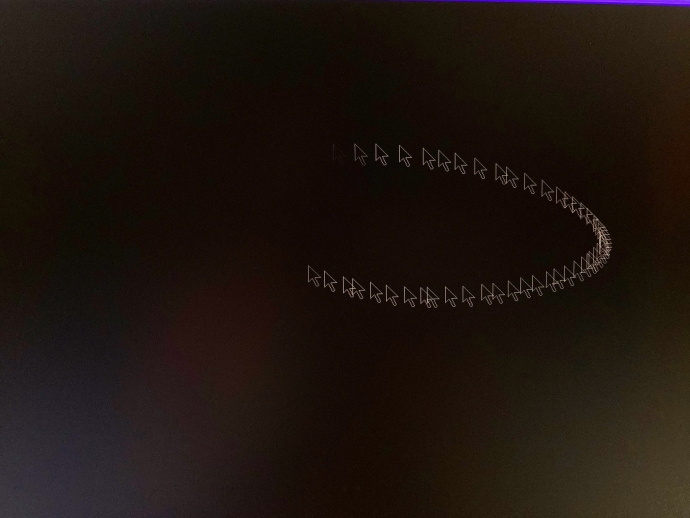

Remember my 480Hz MouseArrow Test? The phantom array effect means retina refresh rates are really high.

Photo Proof:

The 1000Hz pollrate limitation problem is much bigger at 360Hz on the PG259QN.

I have a 360Hz ASUS PG259QN and it's night and day. Long-exposure photographs below:

1000Hz mouse at 360 Hz

8000Hz mouse at 360 Hz

120Hz display... I couldn't tell 1000Hz from 8000Hz in nonstrobed operation. (Some others may, but I'm not THAT sensitive.)

240Hz display... It's subtle. Differences start to show, especially if I turn on DyAc or ELMB and use 3200dpi at the Windows desktop.

360Hz display... WOW, you definitely want a 2000Hz+ pollrate, even nonstrobed. I can still see the 2000Hz vs 8000Hz pollrate difference with the mouse-arrow circling test; I notice it within 1-2 seconds of circling the mouse arrow.

Just like audio beat frequencies interfere more as they get closer together, increasing display refresh rates start to jitter-interfere with the mouse pollrate. Move your eyeballs upwards and look at the photo proof above! If that's not a microphone drop, what is it?
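To put rough numbers on the beat-frequency analogy, here is a minimal Python sketch. It assumes an idealized mouse that reports at perfectly even intervals and a display that refreshes at perfectly even intervals, and it simply counts how many mouse reports land in each refresh cycle. The function name `polls_per_frame` is made up for this illustration, and real USB timing, operating systems, and game engines add their own noise on top.

```python
def polls_per_frame(poll_hz, refresh_hz, frames):
    """Count idealized mouse reports landing in each of `frames` refresh cycles.

    Assumption: report k arrives at exactly k/poll_hz seconds and refresh n
    starts at exactly n/refresh_hz seconds (no USB or OS timing noise).
    """
    counts = []
    for n in range(frames):
        # Integer math avoids floating-point rounding at frame boundaries.
        reports_before_start = (n * poll_hz) // refresh_hz
        reports_before_end = ((n + 1) * poll_hz) // refresh_hz
        counts.append(reports_before_end - reports_before_start)
    return counts


if __name__ == "__main__":
    refresh_hz = 360
    for poll_hz in (1000, 2000, 4000, 8000):
        counts = polls_per_frame(poll_hz, refresh_hz, frames=refresh_hz)
        mean = sum(counts) / len(counts)
        spread = (max(counts) - min(counts)) / mean
        print(f"{poll_hz} Hz poll @ {refresh_hz} Hz: "
              f"{min(counts)}-{max(counts)} reports per refresh, "
              f"frame-to-frame variation ~{spread:.0%}")
```

Under these assumptions, a 1000Hz mouse delivers either 2 or 3 reports per 360Hz refresh, so at a constant hand speed the on-screen step keeps switching between two clearly different sizes. At 8000Hz it is 22 or 23 reports per refresh, so the same one-report difference is a much smaller fraction of each step.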

<ShamelessBoast>

I (Mark Rejhon) and my temporal business Blur Busters/TestUFO are now mentioned in over 20 peer-reviewed science/research papers -- largely because things I said or researched 5 years ago really became true today, and my writings tend to be tomorrow's textbook study material for today's refresh rate race disbelievers. Trust me, I know this [bleep]. Rinse, repeat, all those descendant myths of "Humans Can't See 30fps Versus 60fps" that I've been mythbusting since 1993. Or even the oft-parroted "255Hz Fighter Pilot Limit" (that one was a single-frame silhouette test that doesn't consider motion blur or stroboscopics). My Hertz mythbusting continues all the way up to my recent display-industry textbook reads, the Area 51: Display Science, Research & Engineering articles. I've already been paid to fly over both the Atlantic and Pacific oceans to teach classrooms at display manufacturers. I think my rep already speaks for itself, 8000Hz mouse disbelievers. Blur Busters knows all display temporal subjects (GtG, MPRT, latency, Hz, poll, jitter, stutter, strobing, VRR, etc).

</ShamelessBoast>

Vicious Cycle Effect is the fuel that powers refresh rate race to retina refresh rates

The refresh rate race to retina refresh rates has a Vicious Cycle Effect problem. The higher the display Hz becomes, the more visible the remaining limitations become. Higher resolutions amplify refresh rate limitations, and higher refresh rates amplify resolution limitations. They all interact with each other in a vicious cycle. That's why 60fps@60Hz looks more motion-blurry on an 8K display than 60fps@60Hz on a 1920x1080 LCD: there is a bigger delta between stationary resolution and motion resolution (at the same physical motion speed of 1 screenwidth per second). People who only incrementally upgrade refresh rates (144Hz->165Hz or 240Hz->280Hz) and go "ho-hum" do not realize they need to upgrade refresh rates and frame rates geometrically to punch through the diminishing curve of returns: 60Hz->120Hz->240Hz->480Hz->960Hz. Those who don't understand the diminishing curve need to see Pixel Response FAQ: GtG Versus MPRT as well as the Amazing Journey To Future 1000Hz Displays; the 60Hz->144Hz upgrade (2.4x) is a very similar upgrade magnitude to the 144Hz->360Hz upgrade (2.5x). Thanks to the Vicious Cycle Effect, even 0.5ms MPRT versus 1.0ms MPRT is now human visible for fast panning at higher resolutions (See: The Amazing Human Visible Feats of the Millisecond), whereas it never mattered in the VGA days.

Many Reasons Why 240Hz-vs-360Hz is More Diminished Than It Should Be

Remember, most websites such as LinusTechTips only tested a 1000Hz mouse with their 360Hz monitor. The mouse is now a problematic weak link that further erases the 240Hz-vs-360Hz difference in some cases, on top of the difference already partially lost to GtG slowness (which turns it into a 1.3x blur difference instead of a 1.5x blur difference). Then you add mouse microstutter, partially thanks to the stutter-to-blur continuum. If you click that TestUFO link, you see that low-frequency is vibratey (visible stutter) and high-frequency is blurry (frametime persistence motion blur), much like a slowly vibrating guitar string versus a fast, blurry guitar string. That's why stutter and blur are the same "persistence" thing: they're just different frequencies below/above the flicker fusion threshold. But blur comes from many sources. High-frequency jitter beyond the flicker fusion threshold can blend into motion blur: 1-pixel high-frequency mouse microstutter blends into 1 pixel of extra motion blur, secretly and sadly adding +1ms MPRT to the monitor's MPRT rating during mouseturns or mousepans.

When you upgrade from 240Hz to 360Hz, the 1.5x blur improvement of your upgrade (the ideal motion blur improvement, assuming 0ms GtG) becomes only a 1.3x blur improvement (GtG bottleneck) and then only a 1.1x blur improvement (1000Hz pollrate bottleneck: the mouse jitter/microstutter contribution to display motion blur). Note that these blur-degradation numbers are approximate, but they're both real blur degradations. As the blur weak links build up, you're NOT getting your money's worth out of your ASUS PG259QN or other 360Hz monitor. NO WONDER LinusTechTips could not tell a difference. Pollrates need to go up. GtG needs to go faster (real-world GtG should be a tiny fraction of half a refresh cycle to prevent bottlenecking display motion blur; that's hard at 360Hz). You need to optimize to milk your monitor's Hertz!
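The way a fixed weak link eats into the ideal 1.5x can be illustrated with some rough arithmetic. The sketch below assumes a deliberately crude additive model, which is a simplification for illustration and not a measured formula: each refresh contributes 1000/Hz milliseconds of sample-and-hold persistence, and the other weak links (GtG, mouse microstutter) add a fixed number of extra milliseconds on top. The function name and the extra-blur values are hypothetical, and the output is not meant to reproduce the approximate 1.3x and 1.1x figures above.

```python
def blur_improvement(old_hz, new_hz, extra_blur_ms=0.0):
    """Ratio of old to new perceived blur under a crude additive model.

    Assumption: blur (ms) = frame persistence (1000 / Hz) + a fixed extra
    term standing in for GtG slowness and mouse jitter. Illustrative only.
    """
    old_blur_ms = 1000.0 / old_hz + extra_blur_ms
    new_blur_ms = 1000.0 / new_hz + extra_blur_ms
    return old_blur_ms / new_blur_ms


if __name__ == "__main__":
    for extra_ms in (0.0, 1.0, 2.0):  # hypothetical weak-link contributions
        ratio = blur_improvement(240, 360, extra_ms)
        print(f"240Hz -> 360Hz with +{extra_ms:.0f}ms extra blur: {ratio:.2f}x")
```

The point is only the direction: any blur source that does not shrink along with the refresh cycle pulls the real-world improvement below the ideal Hz ratio.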

Some Stuff Obvious To Me Are Tomorrow's Researcher Material

Some of those are just duh-o-matic obvious stuff to me, but jawdropping to other testers and researchers. I just show stuff, which then becomes new research study material. I've got an unusual form of motion-emulating memory (like photographic memory, but for testing motion algorithms in my brain) -- in other words, my brain has the capability to emulate TestUFO tests long before I write the HTML5 of TestUFO. That's how I invented TestUFO Pursuit Camera (many testers now use this invention), TestUFO Eyetracking Optical Illusion (most researchers can't immediately explain why this illusion appears until I explain the blur physics), TestUFO Persistence of Vision (a similar, more advanced version), TestUFO BFI Pulse Width Comparison (software simulation of LightBoost 10% / ULMB Pulse Width adjustments), TestUFO BFI Double Image (software simulation of CRT 30fps@60Hz duplicate images), TestUFO Variable Refresh Rate Simulator (great stutter-to-blur continuum demo), and TestUFO Blue Frame Insertion (motion blur reduction doesn't necessarily need black color), amongst other tests. Make sure to view all of these animation demos on a 144Hz+ desktop monitor, if possible. (Some of these are part of my TestUFO powerpoints for my classroom training service.) This is how what I said 5 years ago becomes researcher textbook stuff in the future. Patience, padawans...

Yes, 8000Hz is Overkill Sometimes

True, 8000Hz is sometimes overkill, but you can just select 1000Hz/2000Hz/4000Hz/8000Hz and find the sweet spot for your game. Flexible 8000 Hz mice will be staple equipment for circa-2025 esports at >240Hz.

Special Optimizations Are Needed For CRT-to-LCD Upgraders

Also, if you want to maximize motion blur reduction nirvana, pollrate optimization (2000Hz / 4000Hz / 8000Hz) apparently helps (see CRT Nirvana Guide for Disappointed CRT-to-LCD Upgraders), since reduced display motion blur makes mouse microstutter/jittering even more visible to the eyes. Back in the CRT days we were playing at SVGA or XGA resolutions, which were too low to show many weak links. But today we're trying to cram CRT motion clarity into 1080p, 1440p or 4K displays (and soon 8K). Making 1080p/1440p/4K look like a proper CRT requires special optimization that was not done in the CRT days. It may seem a sheer wonder that I've seen LCDs with less blur than a CRT, but others can't reproduce it without following the more recently written Blur Busters guides, because those people aren't optimizing properly or only have experience with the crappy colors of antique LightBoost or early ULMB. Some blur-killer modes have 0.25ms MPRT while staying above 100 nits, faster and brighter than most CRT phosphor. But flicker to eliminate blur is going to be obsolete eventually. That is why Blur Busters is a fan of strobeless blur reduction (ETA 2030) via flickerless low-persistence sample-and-hold (helped by Frame Rate Amplification Technologies).

Yes, Some Games Will Work Better at Lower Poll Hz

True, some games work better at 8KHz than others. For example, Valorant works so much better than CS:GO at high pollrate + high DPI. The 8000Hz mouse appears to have no smoothing at 3200dpi in Valorant, which means that for games that support high DPI, 8000Hz at 3200dpi with 0.08 sensitivity now feels identical to 400dpi with 0.64 sensitivity at fast aimtrack speeds, but feels much better at slow aimtrack speeds. That is unlike older games such as CS:GO, which are apparently high-DPI unfriendly. There are a lot of Pandora's boxes to open with 1000Hz / 2000Hz / 4000Hz / 8000Hz.
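The two settings quoted above multiply out to the same overall turn speed, which is why they feel identical at fast speeds. Here is a small Python check, assuming the common convention that a game turns the view by an amount proportional to its sensitivity setting for every count the mouse reports. The function names are made up for this illustration, and no game-specific constants are used.

```python
import math


def effective_dpi(dpi, sensitivity):
    """Mouse DPI multiplied by in-game sensitivity (often called eDPI)."""
    return dpi * sensitivity


def turn_per_count_ratio(sens_a, sens_b):
    """How many times smaller each count's turn is with sens_a than with sens_b.

    Assumption: the game turns by an amount proportional to its sensitivity
    setting for every count the mouse reports.
    """
    return sens_b / sens_a


if __name__ == "__main__":
    high = effective_dpi(3200, 0.08)
    low = effective_dpi(400, 0.64)
    print(f"3200dpi x 0.08 = {high:.0f}, 400dpi x 0.64 = {low:.0f}")
    print("Same overall turn speed:", math.isclose(high, low))
    print(f"Each count turns {turn_per_count_ratio(0.08, 0.64):.0f}x less at 3200dpi")
```

Both settings come out to the same product, so a given hand movement turns the view by the same amount. The 3200dpi setup gets there in steps that are eight times finer, which is consistent with it feeling better at slow aimtrack speeds.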

Article Coming on Dream Team Combo: 360Hz+8000Hz+RTX3080

Finally, a major tick-tock in the refresh rate race to retina refresh rates that punches the diminishing curve of returns a little more than average. I have a 360Hz (display) + 8000Hz (mouse) + RTX3080 (GPU) combo. It pushes lots of limits; only a few games need apply. Article coming in November. Patience, I tend to write top-shelf Blur Busters stuff on Valve Time, but it's worth the wait!

....klang goes the sound of my golfed microphone hitting the hole, a 400 yard drive, a hole-in-one. Blur Busters doesn't drop microphones anymore, we golf them 400 yards into hole-in-ones!

Crosspost #2 of 2

loopy750, post: 28657842, member: 510430 wrote:
As for 8000Hz mice, I don't think anyone necessarily disagrees that this can be a good thing (any potential CPU usage issues aside), but I'm guessing many are curious as to how much better this can be, in both desktop and gaming scenarios. And I see nothing at all wrong with questioning that. How much better is "better", and is it worth the cost? A 400Hz monitor (if one existed) would be better than 360Hz, but would the cost outweigh the benefit? I think these are fair questions.

Chief Blur Buster wrote:
Right on, loopy750! +Rep

Even if 8000Hz is overkill, you can see the parallels happening in this new computer upgrade supercycle (rare confluence of multiple interacting worthy upgrades -- pollrate, refreshrate, and GPU power -- my 360Hz+8000Hz+3080 piece is expected in November).

The diminishing curve of returns is why Blur Busters isn't a big fan of incrementalism (144Hz->165Hz, or 240Hz->280Hz).

Likewise, our standard Average User upgrade recommendation is to at least double your refresh rate so the upgrade remains noticeable during gaming (assuming sufficient GPU horsepower): 60Hz->120Hz->240Hz->480Hz->960Hz

60Hz to 144Hz = 2.4x upgrade

144Hz to 360Hz = 2.5x upgrade

Not everyone can tell 144Hz->240Hz immediately (without spending time getting used to it), or 240Hz->360Hz immediately (without spending time getting used to it), but a lot more people can clearly see 144Hz->360Hz quickly when the two are compared side by side.

For better human-visible upgrade feel for the Average User (i.e. less experienced than OCN people), resolutions need to increase geometrically until retina'd out (240p->480p->1080p->4K->8K), and refresh rates need to increase geometrically until retina'd out, wherever GPU horsepower allows.

Small upgrades aren't human-visible to most people, except power users or esports professionals. As soon as a refresh rate becomes cheap/free, it becomes the popular mainstream recommendation. Professionals and people with lots of money can leap at the small incrementals, but for the Average User to benefit, a big upgrade jump is needed (like the 60Hz iPad to the 120Hz iPad, as well as the future 240Hz tablets). Doubling Hz is needed to noticeably halve scrolling motion blur (e.g. web browsers) on sample-and-hold displays.
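The halving rule can be shown with a quick calculation. This Python sketch assumes an ideal sample-and-hold display where the frame rate equals the refresh rate, each frame stays visible for the whole refresh cycle, and GtG is ignored, so the blur width is simply motion speed divided by Hz. The function name and the 1920 pixels/second pan speed are hypothetical choices for illustration.

```python
def sample_and_hold_blur_px(speed_px_per_s, hz):
    """Approximate motion blur width in pixels on a sample-and-hold display.

    Assumptions: frame rate equals refresh rate, each frame stays lit for
    the whole refresh cycle, and GtG is ignored.
    """
    return speed_px_per_s / hz


if __name__ == "__main__":
    speed = 1920  # hypothetical pan: one 1920-pixel screen width per second
    for hz in (60, 120, 240, 480, 960):
        print(f"{hz:>3} Hz: ~{sample_and_hold_blur_px(speed, hz):.0f} px of blur")
    for old, new in ((144, 165), (240, 280), (60, 144), (144, 360)):
        print(f"{old}Hz -> {new}Hz is a {new / old:.2f}x step")
```

Each doubling in the first loop halves the blur width, while the second loop shows how small the incremental steps (144Hz->165Hz, 240Hz->280Hz) are next to the 2.4x and 2.5x jumps.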

...First official 1000Hz mice were approximately 2005-ish back in the ~60Hz LCD dregs.

...First official 120Hz LCD gaming monitor came out in 2009 (ASUS VG236H, Samsung 2233rz)

Back in the mid-aughts, that was an 8x pollrate upgrade (125Hz->1000Hz), which was quite human-visible even at 60Hz, with less mouse jittering and better mouseturn smoothness, especially if you were still on a CRT (many were). That said, most found 500Hz to be the sweet spot for 60Hz monitors in the less optimized games of the time.

Today, we're finally seeing a new 8x pollrate upgrade (1000Hz->8000Hz), which is human visible in the 360Hz+ era. But most will probably set it to 2000Hz or 4000Hz at first, until game frame rates and refresh rates go up further.

Just like we often settled at 500Hz in the 60Hz days of 2005, we'll probably settle at 2000Hz or 4000Hz at 360Hz in 2020, but start pushing the needle to 8000Hz when 1000fps & 1000Hz becomes an esports reality in the 2030s. The goal is to keep the mouse pollrate a healthy margin above the refresh rate, to prevent mouse jittering.

At small poll-vs-display Hz separations (only 3x difference or less), the jittering goes above the human-visibility noise floor. A 2000Hz pollrate is already a human-visible improvement for 360Hz monitors; the 8000Hz headroom is a bonus.

Whether the "benefit" is cosmetic (pleasing visibility), a latency benefit, another performance benefit, or all of the above is subject to different user priorities and will be for others to decide. But one checkbox of worthiness is already immediately apparent to a few of us: a human-visible benefit at the Windows desktop. It is much like 2005-2007, if you were there: you got your first 1000Hz mouse, circled the cursor or dragged a window, and immediately noticed the smoother cursor on your 60Hz Windows desktop going from 125Hz to 500Hz/1000Hz.

Gaming mice were stuck at 1000Hz for so freakishly long (15 years) that a pollrate upgrade was already clearly worthy to society when 360Hz monitors finally came out.