Razer_TheFiend wrote: 02 Oct 2020, 08:05

2. The development prototypes have the framerate locked to 20,000fps at the moment. Outside of the sensor's physical limits, we have no restrictions on what sensor framerates to run. We've played with several options: 1-20k dynamic, 8k-20k dynamic, 8k locked, 16k locked, 20k locked.

[This post is mainly for Blur Busters naysayers, but it's also a useful read]

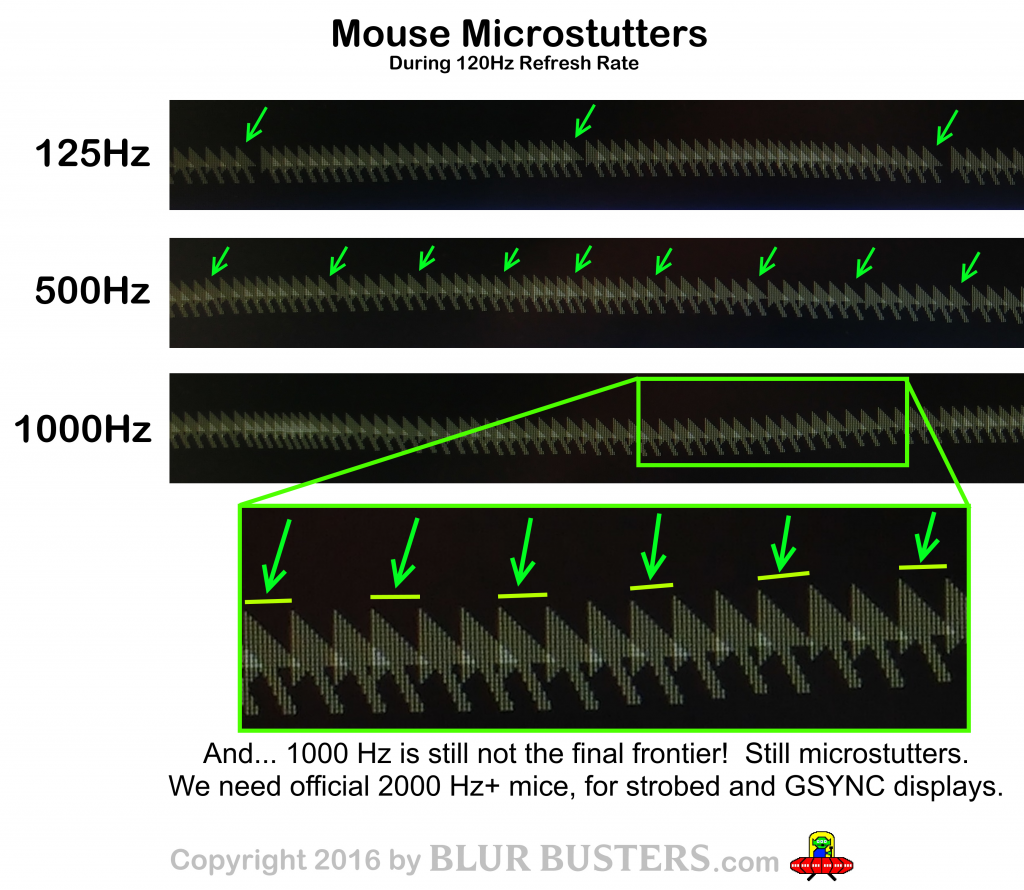

Almost overkill, but camera Hz versus poll Hz beat-frequencying (single-pixel jittering) is definitely well within the realm of human perception in a VERY specific, rare case: at 8000 pixels/sec with 8kHz polling, a locked camera rate sampled by infrequent frames (lower framerates) may produce single-pixel jittering/microstuttering that may be visible on a 0.25ms or 0.5ms MPRT strobed display. 0.25ms MPRT means only 1/4 pixel of motion blur per 1000 pixels/sec of motion (about 2 pixels at 8000 pixels/sec), which is no longer enough display motion blur to hide the mouse jitter from an odd-Hz sensor poll.
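For anyone who wants to check the blur numbers, here's a minimal sketch of the usual rule of thumb (1ms of MPRT is roughly 1 pixel of motion blur per 1000 pixels/sec of eye-tracked motion). The function name `motion_blur_px` is just an illustrative helper, not part of any real tool, and it ignores real-world effects like strobe crosstalk and pixel response.

```python
def motion_blur_px(speed_px_per_s: float, mprt_ms: float) -> float:
    """Approximate motion blur trail length in pixels.

    Rule of thumb: 1 ms of MPRT (persistence) produces about 1 pixel of
    blur per 1000 pixels/sec of eye-tracked motion.
    """
    return speed_px_per_s * mprt_ms / 1000.0


if __name__ == "__main__":
    # Compare a few strobe persistences at a slow and a fast motion speed.
    for mprt_ms in (0.25, 0.5, 1.0):
        for speed in (1000, 8000):
            blur = motion_blur_px(speed, mprt_ms)
            print(f"{mprt_ms} ms MPRT at {speed} px/s -> {blur:.2f} px of blur")
```

At 0.25ms MPRT this prints 0.25 px of blur at 1000 px/s and 2.00 px at 8000 px/s.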

So it's within the realm of possibility for this to be human-visible if the generated beat frequencies fall within human flicker-detection thresholds (approximately 1Hz-70Hz), since stutter-to-blur is a complete continuum: slow stutter is like a slowly, visibly vibrating guitar string, and fast stutter blurs into motion blur like a fast-vibrating, blurry guitar string.

Anyone who's seen a framerate-ramping animation on a high-Hz VRR display has correctly learned that stutter and motion blur are caused by exactly the same thing, whether it's regular stutter (low-Hz sample-and-hold) or erratic stutter (fluctuating game framerates). Today, you bypass motion blur via ultra-high Hz (e.g. >1000Hz) or via strobing (e.g. ULMB, LightBoost, DyAc, PureXP).

You know music science? I'm coming full circle back to it now.

Scientifically, stutter is a complex layering of all causes of stutter (game stutter, mouse stutter, OS stutter, disk stutter, Hz-vs-fps stutter, etc.), so you have to peel the stutter onion layer by layer until the weak links are progressively revealed. It's a cacophony, like metal music or TV static made of many stutters, so you peel, peel, peel away the components until you're down to the equivalent of two major tuning forks (two frequencies) and can hear the beat frequency between them. You might still have quiet noise elsewhere (e.g. DPC behaviors, 0.125us jitter), but once you're down to the two human-detectable tuning forks (weak links), they can still be detected amongst the other "white noise" (0.125us jitter). Then you know it's time to start playing whac-a-mole with at least one of them. That's what happened to 1000Hz polling (on 360Hz+ displays), and that's why 8000Hz polling now needs to exist.

The detectability of stutter at various harmonics/beat frequencies is directly related to the human flicker-detection threshold. For example, with 350fps at 360Hz, both frequencies are far beyond flicker thresholds, but together they generate a visibly vibrating 10 stutters per second at sufficient motion speeds, such as 2000 or 3000 pixels per second.
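Here's a rough sketch of that tuning-fork arithmetic. It assumes the beat is simply the difference between the two rates, and that each beat causes one repeated (or skipped) frame, so the hitch size is about one refresh's worth of motion. The helper names and the three buckets are my own illustrative choices built on the approximate 1Hz-70Hz range above, not a formal model of human vision.

```python
def beat_hz(f1_hz: float, f2_hz: float) -> float:
    """Beat frequency between two rates, e.g. frame rate vs refresh rate."""
    return abs(f1_hz - f2_hz)


def classify_beat(f1_hz: float, f2_hz: float, flicker_limit_hz: float = 70.0) -> str:
    """Rough bucket based on the ~1Hz-70Hz flicker-detection range."""
    beat = beat_hz(f1_hz, f2_hz)
    if beat == 0:
        return "no beat"
    if beat < 1.0:
        return "occasional stutter"
    if beat <= flicker_limit_hz:
        return "visible vibration"
    return "blends into motion blur"


def step_px(speed_px_per_s: float, refresh_hz: float) -> float:
    """Motion per refresh; assumes each beat costs one repeated frame of this size."""
    return speed_px_per_s / refresh_hz


if __name__ == "__main__":
    print(beat_hz(350, 360), classify_beat(350, 360))  # 10 stutters per second
    for speed in (2000, 3000):
        print(f"{speed} px/s at 360Hz: ~{step_px(speed, 360):.1f} px per hitch")
```

The faster the motion, the bigger each hitch (about 5.6 px at 2000 px/s and 8.3 px at 3000 px/s on 360Hz), which is why the 10Hz vibration only becomes obvious at high motion speeds.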

But with a 20kHz camera and an 8kHz sensor poll, it will jitter at literally 4kHz, far beyond the human flicker fusion threshold. However, when sampled at low frame rates (e.g. 100fps at 100Hz strobed), and since display Hz is not perfectly in sync with poll Hz, the jitter of randomly selected polls might become human-visible on 0.25ms-0.4ms MPRT strobed displays (e.g. ULMB Pulse Width 30, BenQ Strobe Length 20, or ViewSonic PureXP+ Extreme, all capable of 0.4ms MPRT or less). When the display motion blur amplitude is smaller than the mouse jitter amplitude, mouse movement just feels "grainy" (no longer TestUFO-smooth mouseturns).
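To show where the 4kHz comes from, here's a minimal idealized sketch that counts how many camera frames land inside each poll interval. It assumes both clocks are perfectly stable, start in phase, and that the sensor reports motion per completed camera frame; real sensors have phase drift and other processing, so treat it as an illustration only. The function names are hypothetical.

```python
from math import gcd


def frames_per_poll(camera_hz: int, poll_hz: int, n_polls: int) -> list[int]:
    """Camera frames completed inside each of the first n_polls poll intervals.

    Idealized model: both clocks are perfectly stable and start in phase.
    """
    return [
        ((n + 1) * camera_hz) // poll_hz - (n * camera_hz) // poll_hz
        for n in range(n_polls)
    ]


def pattern_repeat_hz(camera_hz: int, poll_hz: int) -> int:
    """How often the frames-per-poll pattern repeats; 0 means every poll is identical."""
    if camera_hz % poll_hz == 0:
        return 0  # even division: same frame count every poll, no beat jitter
    return gcd(camera_hz, poll_hz)


if __name__ == "__main__":
    for camera_hz in (16000, 20000, 24000):
        counts = frames_per_poll(camera_hz, 8000, 6)
        print(camera_hz, counts, pattern_repeat_hz(camera_hz, 8000))
```

At 20kHz camera and 8kHz poll, the polls alternate between 2 and 3 camera frames (2.5 on average, so each poll's reported motion swings about 20% either way), and that 2-3-2-3 pattern repeats 4000 times per second. At 16kHz or 24kHz every poll sees the same count.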

Thus, I'd recommend a 16kHz camera setting, although it's probably way overkill. It's very far from being a weak link IMHO, just saying that it's definitely within the realm of human detection in certain cases, but only in very special cases (high-resolution, ultralow-MPRT strobed displays).

And since strobed 4K and 8K displays are not yet out, motion speeds of 8000 pixels per second are too fast on lower-resolution displays, beyond the limits of an average human's eye-tracking speed. But at 4K and 8K, 8000 pixels/sec is just an eye-followable TestUFO speed. If low MPRT converges with ultrahigh resolutions, then the harmonics of camera Hz and sensor Hz will begin to become human-visible/feelable as a faint potential coarseness ("jitter": random one-pixel microstuttering). Then 4000Hz and 8000Hz polling will have roughly the same human benefits, due to the camera-Hz-versus-poll-Hz jitter. To make sure 8000Hz is better than 4000Hz, the camera Hz may need adjusting downwards to 16kHz or upwards to 24kHz.
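A quick way to see why resolution matters here: at a fixed 8000 pixels/sec, count how long a moving object takes to cross the screen. The horizontal widths below are the common values for each class of display, assumed for illustration; no specific eye-tracking limit is claimed.

```python
# Common horizontal resolutions (assumed for illustration).
WIDTHS_PX = {"1080p": 1920, "1440p": 2560, "4K": 3840, "8K": 7680}


def seconds_to_cross(width_px: int, speed_px_per_s: float) -> float:
    """Time for an eye-tracked object to travel the full screen width."""
    return width_px / speed_px_per_s


if __name__ == "__main__":
    for name, width in WIDTHS_PX.items():
        t = seconds_to_cross(width, 8000)
        print(f"{name}: 8000 px/s crosses the screen in {t:.2f} s")
```

On 1080p the object is gone in about a quarter of a second (0.24 s), while on 8K it stays on screen for nearly a full second (0.96 s), which is much easier to follow with your eyes.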

I'd rate this camera-Hz sync configurability as priority 2 on a scale of 1 through 5 (unimportant through highest). Useful, but focus on higher priorities first. If it's easy to go to 24kHz, do that and call it a day: 24kHz is conveniently divisible by 500, 1000, 2000, 4000 and 8000.
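The divisibility check is easy to script. This is a small sketch using the poll rates listed above; `mismatched_polls` is a hypothetical helper name, and "uneven" here just means the camera rate isn't an integer multiple of that poll rate.

```python
POLL_RATES_HZ = (500, 1000, 2000, 4000, 8000)


def mismatched_polls(camera_hz: int, poll_rates=POLL_RATES_HZ) -> list[int]:
    """Poll rates that do not divide evenly into the camera rate (candidates for beat jitter)."""
    return [p for p in poll_rates if camera_hz % p != 0]


if __name__ == "__main__":
    for camera_hz in (16000, 20000, 24000):
        bad = mismatched_polls(camera_hz)
        status = "divides evenly by all poll rates" if not bad else f"uneven at {bad} Hz"
        print(f"{camera_hz} Hz camera: {status}")
```

Both 16kHz and 24kHz divide evenly by every listed poll rate, while 20kHz is only uneven at 8000Hz. That's consistent with 4000Hz and 8000Hz polling ending up with similar benefits on a 20kHz camera.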

But achieving the poll Hz is WAY more important, and system timing jitter (0.125us imprecisions) will be a bigger source of jitter noise.

All part of the textbook Vicious Cycle Effect, which is a huge raison d'être of the refresh rate race bringing visible human benefits.