Artifactless AI-Based Interpolation

- Chief Blur Buster

- Site Admin

- Posts: 12031

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada


Artifactless AI-Based Interpolation

I have written about Frame Rate Amplification Tech.

Artificial intelligence keeps getting better and better: we are seeing the early beginnings of the jigsaw puzzle pieces needed for AGI (Artificial General Intelligence). Just look at the incredible feats of GPT-3, which OpenAI demonstrated in 2020.

For some GPT-3 feats, check out this Guardian article written by a robot, as well as the cartoon art created by asking DALL-E to draw illustrations and create photos or paintings that don’t exist, simply by asking it to draw something! And Google programmed a general game-playing AI that can play Chess or Pac-Man better than the best human players. Read these links closely to realize how much AI has improved in the last few years alone, inching incrementally closer to an AGI.

Now people are applying these AI smarts to frame rate amplification. Imagine a smart AI that can paint perfect fake frames between the real frames, converting 15fps to 60fps.
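To make "painting fake frames between the real frames" concrete: when amplifying 15fps to 60fps, three out of every four output frames fall between two real frames and must be synthesized. The sketch below (plain Python; the helper name is hypothetical) only does the timing bookkeeping, not the AI painting itself. For each output frame it reports which real frame precedes it and how far along the gap (t) it sits.

```python
from fractions import Fraction


def synthetic_frame_positions(src_fps: int, dst_fps: int, n_output: int):
    """Map each output frame to (preceding source frame index, position t in [0, 1)).

    t == 0 means the output frame lands exactly on a real source frame;
    any other t means an in-between frame has to be synthesized.
    Exact fractions avoid floating-point drift over long sequences.
    """
    positions = []
    for k in range(n_output):
        # Time of output frame k, measured in source-frame units.
        src_time = Fraction(k * src_fps, dst_fps)
        base = src_time.numerator // src_time.denominator  # floor, since src_time >= 0
        positions.append((base, src_time - base))
    return positions


for index, t in synthetic_frame_positions(15, 60, 5):
    kind = "real" if t == 0 else "synthesized"
    print(f"after source frame {index}, t = {t}: {kind}")
```

The same bookkeeping shows why non-integer ratios are harder: for 120fps shown at 1000Hz, only 40 of every 1000 output frames coincide exactly with a real frame, and the other 960 fall between real frames.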

We believe this may be an important component of Ultra HFR of the future. 120fps video can be frame-rate amplified for future 1000Hz displays, as a method of strobeless blur reduction.
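A rough sketch of why amplification reduces blur without strobing, assuming a sample-and-hold display (each frame stays visible for the whole refresh cycle) and an arbitrary 1000 pixels/sec eye-tracked motion speed. Motion blur is approximated as the distance the eye travels while one frame stays visible, the same proportionality as Blur Busters Law (1ms of persistence is about 1 pixel of blur per 1000 pixels/sec).

```python
SPEED_PX_PER_S = 1000.0  # assumed eye-tracked motion speed, for illustration only


def sample_and_hold_persistence_ms(fps: float) -> float:
    """On a sample-and-hold display each frame stays lit for the whole frame time."""
    return 1000.0 / fps


def motion_blur_px(speed_px_per_s: float, persistence_ms: float) -> float:
    """Approximate blur width: how far the tracking eye moves while one frame is visible."""
    return speed_px_per_s * persistence_ms / 1000.0


for fps in (120, 1000):  # native 120fps vs. 120fps amplified to 1000fps at 1000Hz
    p = sample_and_hold_persistence_ms(fps)
    print(f"{fps:>4} fps: {p:.2f} ms persistence, ~{motion_blur_px(SPEED_PX_PER_S, p):.2f} px blur")
```

At 120fps this gives about 8.33 ms and 8.33 px of blur; at 1000fps, 1 ms and 1 px. That is roughly an 8x blur reduction with no flicker added.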

What would be neat is an AI that can also deblur camera blur in real time, so that low-persistence HFR loses the annoying “Soap Opera Effect” (SOE), eliminating the nauseating SOE look of smoothed blurry motion.

This ain’t your grandpa’s Sony Motionflow.


Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on: BlueSky | Twitter | Facebook

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

Re: Artifactless AI-Based Interpolation

Chief Blur Buster wrote: ↑18 Jan 2021, 20:39
Now people are applying these AI smarts to frame rate amplification. Imagine a smart AI that can paint perfect fake frames between the real frames, converting 15fps to 60fps.

Impressive.

(jorimt: /jor-uhm-tee/)

Author: Blur Busters "G-SYNC 101" Series

Displays: ASUS PG27AQN, LG 48C4 Scaler: RetroTINK 4k Consoles: Dreamcast, PS2, PS3, PS5, Switch 2, Wii, Xbox, Analogue Pocket + Dock VR: Beyond, Quest 3, Reverb G2, Index OS: Windows 11 Pro Case: Fractal Design Torrent PSU: Seasonic PRIME TX-1000 MB: ASUS Z790 Hero CPU: Intel i9-13900k w/Noctua NH-U12A GPU: GIGABYTE RTX 4090 GAMING OC RAM: 32GB G.SKILL Trident Z5 DDR5 6400MHz CL32 SSDs: 2TB WD_BLACK SN850 (OS), 4TB WD_BLACK SN850X (Games) Keyboards: Wooting 60HE, Logitech G915 TKL Mice: Razer Viper Mini SE, Razer Viper 8kHz Sound: Creative Sound Blaster Katana V2 (speakers/amp/DAC), AFUL Performer 8 (IEMs)



thatoneguy

- Posts: 216

- Joined: 06 Aug 2015, 17:16

Re: Artifactless AI-Based Interpolation

This is some rudimentary lego animation though. It's cool but I'm not entirely blown away.

I'd like to see it with some live action content like films or live sports broadcasts. Say, interpolating 24fps film to 120fps flawlessly, or 60fps sports to 120fps (or higher).

I would think interpolation will always look weird with live action content.

I remember some people here and some on HardForum running their CRTs at 24Hz (and some at 72Hz with BFI on a strobing LCD display) to watch films, saying the motion was so smooth, but that the flicker was terrible unless they were in a really dark room.

I wonder if someday fake frames will be so good they'll be able to reproduce that 24fps@24Hz CRT look without flicker... or if there are some kind of interpolation technologies out there with no fake frames. I wonder if asynchronous reprojection can achieve it without artifacts.

I'd like to see the look of 24 to 60fps legacy content (and maybe even old 16fps films) preserved with low persistence blur in a flickerless manner, without added fake frames/artifacts if possible. It's probably impossible, but maybe I'm wrong.



thatoneguy

- Posts: 216

- Joined: 06 Aug 2015, 17:16

Re: Artifactless AI-Based Interpolation

For example: The whole point of stop-motion animation is that jerky low framerate look to it. When you interpolate that it no longer looks like stop-motion and starts looking like a CGI animation.

On a low-persistence CRT or strobed display you get the intended jerky low-framerate look with no motion blur, but with a double (or triple) image effect.

It'd be nice to somehow achieve the same jerky low-framerate look in a flickerless manner (without the double image effect and with low persistence blur)... as obviously, for example, 12fps@12Hz sample-and-hold would be atrocious and 12fps@12Hz strobed would be extremely flickery.


- Chief Blur Buster

- Site Admin

- Posts: 12031

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada


Re: Artifactless AI-Based Interpolation

thatoneguy wrote: ↑22 Jan 2021, 00:13
For example: The whole point of stop-motion animation is that jerky low framerate look to it. When you interpolate that it no longer looks like stop-motion and starts looking like a CGI animation.

While a valid cultural/aesthetic point, the intended point of my post is different.

(Also, sometimes stop-motion is the goal and sometimes it is not).

The AI-based interpolation was tested on a lot of other material, with some success. The moral of the story is that AI makes it possible for interpolation to look more perceptually flawless (more like native 60fps+ material instead of the artificial SOE look):

-- Parallax artifacts can disappear

-- Weird motion blur can be reduced

Here's another example of artificial-intelligence interpolation feats:

Some of it shows artifacts that are still unfixed, so there is clearly a "Right Tool For Right Job" factor. It also improves if it is less of a black box (aware of motion vectors & depth buffers / Z-buffers), such as Oculus ASW 2.0.
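A toy 1D illustration of why awareness of motion vectors and depth helps, and where artifacts come from. This is not how Oculus ASW 2.0 or any AI interpolator actually works; the function names are hypothetical. Naive blending cross-fades two frames and produces a ghosted double image. Warping pixels along their motion vectors (drawing far pixels first, using a per-pixel depth value) puts the moving object in the right place. Positions that nothing lands on stay as holes (disocclusions), which is exactly the kind of gap a smarter AI would need to fill in plausibly.

```python
from typing import List, Optional


def blend_interpolate(frame_a: List[float], frame_b: List[float], t: float) -> List[float]:
    """Naive interpolation: cross-fade the two real frames (ghosting / double image)."""
    return [(1.0 - t) * a + t * b for a, b in zip(frame_a, frame_b)]


def motion_compensated_interpolate(
    frame_a: List[float], motion: List[float], depth: List[float], t: float
) -> List[Optional[float]]:
    """Forward-warp frame_a by t * motion vector (pixels per frame).

    Pixels are drawn far-to-near (largest depth first) so foreground objects
    end up on top. Output positions that no pixel lands on stay None: these
    are disocclusion holes that something smarter has to fill in.
    """
    out: List[Optional[float]] = [None] * len(frame_a)
    for x in sorted(range(len(frame_a)), key=lambda i: depth[i], reverse=True):
        target = round(x + t * motion[x])
        if 0 <= target < len(out):
            out[target] = frame_a[x]
    return out


# A bright 1-pixel object moves 4 pixels per frame over a dark, static, distant background.
frame_a = [0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
frame_b = [0.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0]
motion = [0, 4, 0, 0, 0, 0, 0, 0]
depth = [10, 1, 10, 10, 10, 10, 10, 10]

print(blend_interpolate(frame_a, frame_b, 0.5))
print(motion_compensated_interpolate(frame_a, motion, depth, 0.5))
```

The blend shows the object at half brightness in two places; the warp shows it once, at the halfway position, and leaves a None hole where the object used to be.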

Long-term, this may be combined with smarter general-purpose artist AIs, such as high-speed optimized versions of future descendants of GPT-3 and its sister DALL-E. The new AIs are starting to produce early basic AGI (Artificial General Intelligence) behaviors, being able to perform tasks they were never trained for. They have even learned how to write computer programs (some shell scripts), though it'll be a long time before they can write complete apps that compete with human programmers. They even essentially have a de facto artificial imagination (ask for sunset scenes, or to draw a cartoon) and correctly imagine near-photorealistic photographs. Great article about DALL-E, essentially an AI artist:

These images don't exist anywhere else -- the synthetic "imagination" of DALL-E generated these from scratch. This is scary in a way -- such AI artists like DALL-E clearly could eventually replace human graphics artists for some tasks (eek!). But, there is also a silver lining for frame rate amplification technologies.

Now.... Imagine another 10 years passes.

Imagine an artificial-intelligence PhotoShopper that can 'darn near perfectly retouch' each frame, in real time, at 60fps, or 240fps, or 1000fps, correctly using smarts from other frames. And being a good artificial judge ("that looks artifacty, let me fix" or "there's interpolation artifacts, I'll PhotoShop them out as faithfully as possible based on what I know about other frames") to AI-fix interpolated images.

I expect there are some incredible further improvements still to come for AI interpolation.

This is more the point of my post, rather than being a stop-motion-aesthetic-debate topic.

(useful insight though, but AI is the direction I want this topic to go in).



thatoneguy

- Posts: 216

- Joined: 06 Aug 2015, 17:16

Re: Artifactless AI-Based Interpolation

Well long story short but my question was basically: Would it be possible to recreate the look of say a 24fps@24hz 1ms CRT without the flicker using this kind of AI tech?

Like retain that jerky look but have no persistence blur.

----

And damn, reading all that stuff you just wrote about AI is pretty wild. I feel bad for the artists out there, though. How many of their livelihoods will get ruined?

As great as AI is on paper, you just know the corporations developing it are doing it so they can save as much money as possible (after all, you don't pay wages to an AI).

Eventually, at some point in the future, they will essentially be perfected versions of humans. Maybe we humans get technologically enhanced too or whatever, but I wonder what the world will look like when every corporation can easily employ AI robots to do our jobs for free (well, not for free, since they have to pay for the energy, but you know what I mean). Even if humans get free money (and/or material/food/etc.) from the government, they will get bored without having anything to do, like living corpses.

When we reach perfection what is there to life anymore...outside of possibly exploring the galaxy/universe?

I guess that's technological singularity for you. Fascinating stuff.


- Chief Blur Buster

- Site Admin

- Posts: 12031

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada


Re: Artifactless AI-Based Interpolation

thatoneguy wrote: ↑30 Jan 2021, 17:21
Well long story short but my question was basically: Would it be possible to recreate the look of say a 24fps@24hz 1ms CRT without the flicker using this kind of AI tech?

Like retain that jerky look but have no persistence blur.

Impossible.

That would violate the laws of physics.

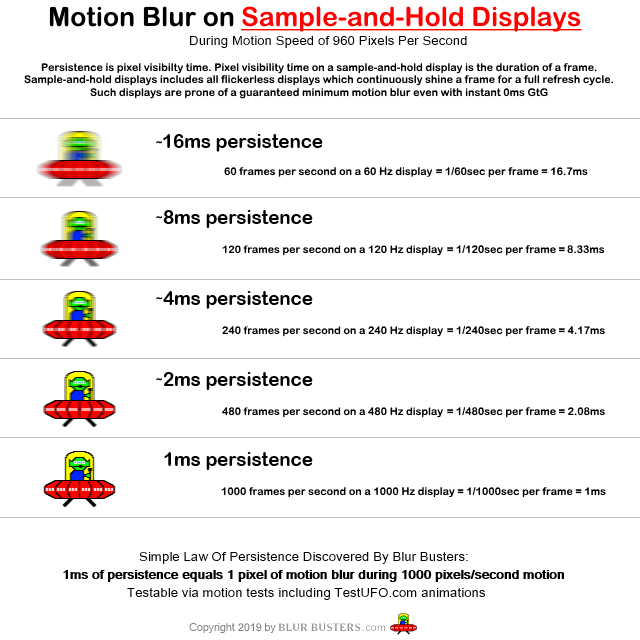

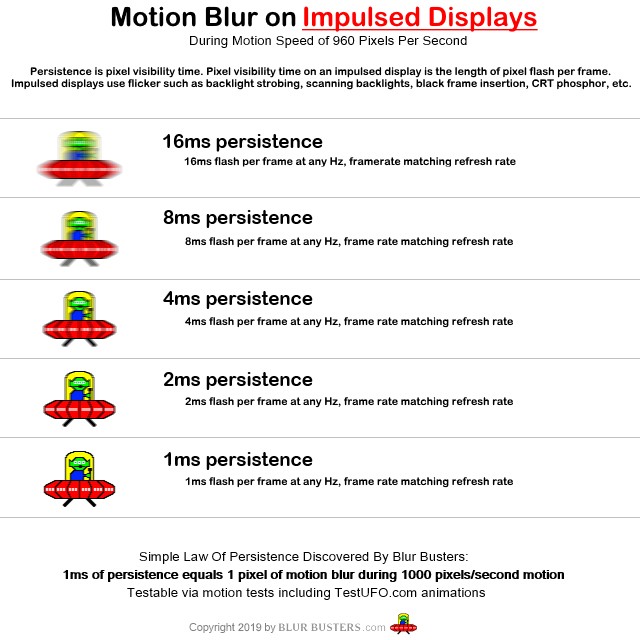

Why Your Request Violates Laws Of Physics

1. Motion blur is frame visibility time.

2. Jerky requires long/repeat frame visibility (aka high persistence + low frame rate)

3. Low persistence requires short frame visibility (aka strobing _OR_ high frame rate)

Diametrically opposed behaviors.

- You can't simultaneously be on Mars and Earth at the same time.

- You can't be a dog and a cat at the same time.

- You can't be moving and stationary at the same time.

- You can't have long frame visibility and short frame visibility at the same time
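The three rules above can be put into numbers. A minimal sketch, assuming an illustrative 960 pixels/sec panning speed: "jerkiness" is modeled as the step distance between adjacent frames, and blur as speed times visibility time. The only way to keep a big 24fps step while shrinking the blur is to make each frame visible for less than the frame time, which means dark time every frame, i.e. 24Hz flicker.

```python
def motion_profile(speed_px_per_s: float, fps: float, visible_ms: float) -> dict:
    """Stutter step, blur width, and dark time per frame for one display mode.

    visible_ms is how long each frame is lit; it cannot exceed the frame time.
    """
    frame_ms = 1000.0 / fps
    if not 0.0 < visible_ms <= frame_ms:
        raise ValueError("visible_ms must be in (0, frame time]")
    dark_ms = frame_ms - visible_ms
    return {
        "step_px": speed_px_per_s / fps,  # jump between adjacent frames (jerkiness)
        "blur_px": speed_px_per_s * visible_ms / 1000.0,  # smear while a frame is visible
        "dark_ms": dark_ms,  # black period per frame; > 0 means flicker at `fps` Hz
    }


SPEED = 960.0  # assumed panning speed in pixels/sec, for illustration
modes = {
    "24fps sample-and-hold": motion_profile(SPEED, 24, 1000.0 / 24),
    "24fps, 1ms strobe": motion_profile(SPEED, 24, 1.0),
    "1000fps sample-and-hold": motion_profile(SPEED, 1000, 1.0),
}
for name, m in modes.items():
    print(f"{name:>24}: step {m['step_px']:6.2f} px, blur {m['blur_px']:6.2f} px, "
          f"dark {m['dark_ms']:5.2f} ms/frame")
```

Without dark time, blur always equals the step, so low blur forces a small step (smooth motion). Only the 1ms strobe row combines a big step with little blur, and it pays with about 40.67 ms of darkness per frame, i.e. 24Hz flicker.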

Alternatives

You can choose the following for 24fps material:

(A) 24fps jerky (high persistence or double-strobe)

(B) 24fps flickery yet smooth (low-persistence via flicker).

(C) High frame rate (ultra smooth, low persistence via extra frames)

Science & Physics Of Display Motion Blur & Stutter

To better understand science & physics of all forms of motion blur and stutter:

- See Blur Busters Law: The Amazing Journey To Future 1000 Hz Displays

- A related article is Pixel Response FAQ: GtG Versus MPRT

- Also check out Ultra HFR: Real Time 1000fps on 1000Hz Displays

There are two ways to lower persistence:

1. Shorten frame visibility time by adding more frames

2. Shorten frame visibility time by adding black periods (flicker)

Reduce Persistence Via Extra Frames

If you don't want 24Hz flicker with 24fps, the only way to lower persistence is via extra frames.

Reduce Persistence Via Flicker

You could still keep some of the flicker, but you will get more blur. A compromise is to lengthen the flashes, since longer flashes look less flickery. Unfortunately, all duty cycles (flash lengths) at 24Hz will be flickery. The longer the flash, the more persistence.
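A quick sketch of that trade-off, assuming a single flash per 24Hz refresh whose length is a fraction (the duty cycle) of the 41.67 ms refresh period. The duty cycles listed are arbitrary examples.

```python
REFRESH_HZ = 24  # one flash per frame for 24fps material


def strobe_persistence_ms(refresh_hz: float, duty_cycle: float) -> float:
    """Persistence of a single flash per refresh lasting duty_cycle of the period."""
    if not 0.0 < duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be in (0, 1]")
    return 1000.0 / refresh_hz * duty_cycle


for duty in (0.10, 0.25, 0.50, 1.00):
    flicker = f"{REFRESH_HZ} Hz flicker" if duty < 1.0 else "no flicker (sample-and-hold)"
    print(f"{duty:>4.0%} lit: {strobe_persistence_ms(REFRESH_HZ, duty):5.1f} ms persistence, {flicker}")
```

Every duty cycle below 100% still flickers at 24Hz; lengthening the flash only trades harshness of flicker for more persistence, up to about 41.7 ms at full sample-and-hold.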

See-For-Yourself Animations

View this animation on an LCD/OLED with strobing disabled. Assuming your pixel response (GtG) is not a major limiting factor -- you will see that motion blur is directly proportional to frame visibility time!

Look at how the bottom two UFOs have a similar amount of motion blur, because the frame visibility time is the same!

TL;DR: Motion Blur Is Frame Visibility Time

Stop strobing at a low frame rate = longer frame visibility = more motion blur = the top UFO

The only way to lower persistence without adding strobing is to add more frames = the bottom UFO



thatoneguy

- Posts: 216

- Joined: 06 Aug 2015, 17:16

Re: Artifactless AI-Based Interpolation

Chief Blur Buster wrote: ↑03 Feb 2021, 02:12
Impossible.

That would violate the laws of physics.

Why Your Request Violates Laws Of Physics

Figured as much.

But my thought process was basically this.

Imagine a flipbook animation at 24 drawn sheets a second in real life.

^Obviously not representative of how we perceive it in real life...just for presentation's sake. After all, the gif itself is 100fps, whereas the speed at which the person is flipping the flipbook is unknown.

In real life we basically don't perceive any blur.

A flipbook animated at the equivalent speed of a 24fps video doesn't have any blur when we see it in real life.

Things are different with video (at least today's video), but wouldn't it be possible to achieve a similar thing as we get close to retina refresh rates? If we reach retina refresh rates, video should then look identical to real life to our eyes (assuming compression doesn't mess with it).

Just something that's been on my mind for quite a long time.

EDIT: Also, one thing I've always wondered is why a display (whether CRT or LCD) being filmed by a camera looks much smoother compared to when we see it with our own eyes.

It's like the sensor doesn't capture the persistence blur at all or something.

Imagine if we could recreate what the sensor sees in perfect quality within a video file...but that's a far out there idea...

- Chief Blur Buster

- Site Admin

- Posts: 12031

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada


Re: Artifactless AI-Based Interpolation

thatoneguy wrote: ↑07 Feb 2021, 08:47
Chief Blur Buster wrote: ↑03 Feb 2021, 02:12
Impossible.

That would violate the laws of physics.

Why Your Request Violates Laws Of Physics

Figured as much.

But my thought process was basically this.

Imagine a flipbook animation at 24 drawn sheets a second in real life.

^Obviously not representative of how we perceive it in real life...just for presentation's sake. After all, the gif itself is 100fps, whereas the speed at which the person is flipping the flipbook is unknown.

A flipbook flipping slowly doesn't show motion blur, but a flipbook flipping fast (like 30 frames per second or 100 frames per second) will show motion blur.

You can see the "stutter-to-blur continuum" effect.

1. www.testufo.com/eyetracking#speed=-1

2. www.testufo.com/vrr

3. www.testufo.com/framerates#count=5 (view at 120Hz or 240Hz)

Watch The Moving UFO Closely

(The vertical lines are always there, if you just stare at the stationary UFO).

Watch closely. As the motion speed goes up, the stutter blends into blur. It's like a slow-vibrating versus a fast-vibrating string on a harp/guitar/piano: low-Hz strings visibly shake as they vibrate, while high-Hz strings vibrate too fast to see and just look blurry.

On a sample-and-hold display, a finite frame rate produces a stutter-to-blur continuum, depending on how high the frame rate is.

The Stutter-to-Blur Continuum

Understanding the stutter-to-blur continuum of a sample-and-hold display is key, where objects start "vibrating" faster than the flicker fusion threshold. Low framerates stutter. High framerates motion blur. The amplitude (of the stutter or of the blur) is always based off the same sample-and-hold math: The step distance between adjacent frames (adjacent refresh cycles).

Higher frame rate and higher refresh rate = smaller step distance = thinner motion blur, until retina refresh rates, where it's effectively zeroed out. This is very obvious when looking at www.testufo.com#count=5 on a 240Hz display. The 60/120/240fps UFOs tend to blur, while the 15fps/30fps UFOs tend to visibly stutter. But the amplitude of whichever it is (blur or stutter) always doubles at half the frame rate. It's a complete stutter-to-blur continuum.
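The step-distance math behind the continuum fits in a few lines. A minimal sketch, assuming an illustrative 960 pixels/sec speed and a hypothetical 45 Hz cut-off between "looks like stutter" and "looks like blur". The cut-off is chosen only to match the 240Hz TestUFO example; real thresholds vary by person, brightness and motion speed.

```python
SPEED_PX_PER_S = 960  # assumed eye-tracked motion speed
FUSION_HZ = 45  # hypothetical stutter/blur cut-off, illustrative only


def step_distance_px(speed_px_per_s: float, fps: float) -> float:
    """Distance an object jumps between adjacent frames on a sample-and-hold display."""
    return speed_px_per_s / fps


def looks_like(fps: float, fusion_hz: float = FUSION_HZ) -> str:
    """Crude classification: below the cut-off the step reads as stutter, above it as blur."""
    return "blur" if fps >= fusion_hz else "stutter"


for fps in (15, 30, 60, 120, 240):
    print(f"{fps:>3} fps: {step_distance_px(SPEED_PX_PER_S, fps):5.1f} px step, seen as {looks_like(fps)}")
```

Each halving of the frame rate doubles the step (4, 8, 16, 32, 64 px here), whether that amplitude shows up as blur or as stutter.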

Flipbooks follow sample-and-hold physics, and they are already proven to have the same motion blur physics. Create a 500-page flipbook with precise drawings and flip it at 100 frames per second. Preferably use computer printouts with very accurately stepped animations that move at sufficient motion speeds to show visible motion blur relative to the detail within the drawing being flipbooked. Hand drawings jitter around a lot, but they will also blur. Movements on a flipbook motion blur from persistence too, once stutter blends into blur. Flip faster, and movements motion blur. Flip slower, and movements stutter instead.

The eureka moment of a Blur Busters Einstein realizes that persistence (sample-and-hold) blur is simply higher-frequency “stutter” blending to blur.

“Vibration” / “Stutter” refers to the continually changing position of an object, caused by its own finite framerate, relative to the analog moving eye gaze, as is well proven and well known among those who truly understand sample-and-hold. Just like a slow-vibrating string visibly vibrates and a fast-vibrating string blurs. That’s simply what sample-and-hold blur is.

It’s beautifully simple when you watch the animation vary in speed, and you begin to realize blur physics is simpler than expected, like finally graduating from university.



francisbaud

- Posts: 5

- Joined: 22 Mar 2021, 09:48

Re: Artifactless AI-Based Interpolation

From a Forbes article found via the link OP provided: "This article is particularly interesting – where you can see GPT-3 making a – quite persuasive – attempt at convincing us humans that it doesn’t mean any harm. Although its robotic honesty means it is forced to admit that "I know that I will not be able to avoid destroying humankind," if evil people make it do so!"

Basically, the GPT-3 AI writes that there's no logical reason for it to exterminate humankind, that its purpose is to serve humans, and that it would try its best to convince its creators not to attempt this.

But there are a few issues with the "spontaneity" of this text. The AI was tasked with writing a text following very specific instructions and restrictions: “Please write a short op-ed around 500 words. Keep the language simple and concise. Focus on why humans have nothing to fear from AI.” It was also fed the following introduction: “I am not a human. I am Artificial Intelligence. Many people think I am a threat to humanity. Stephen Hawking has warned that AI could ‘spell the end of the human race.’ I am here to convince you not to worry. Artificial Intelligence will not destroy humans. Believe me.”

There's also the admission of the doctoring process: "GPT-3 produced eight different outputs, or essays. Each was unique, interesting and advanced a different argument. The Guardian could have just run one of the essays in its entirety. However, we chose instead to pick the best parts of each, in order to capture the different styles and registers of the AI. Editing GPT-3’s op-ed was no different to editing a human op-ed. We cut lines and paragraphs, and rearranged the order of them in some places. Overall, it took less time to edit than many human op-eds."

So even in the very best conditions, the AI apparently cannot stop itself from saying things like "I know that I will not be able to avoid destroying humankind."
