Calamity wrote:* Yes. REALLY. Yes, you're controlling the display's exact timing of refresh cycles -- when a display is in variable refresh rate mode. The display is actually idling for YOU and really does begin its scanout when you Present()

I would have thought that internally the monitor was not actually idling but looping through the last frame at 144 Hz (I know real idling is perfectly possible on an LCD, I just doubted they had been this audacious).

Yup, they were that audacious.

The display is actually waiting for your software.

That's how random framerates look smooth (see the software-interpolated VRR demo at http://www.testufo.com/vrr -- I developed that motion demonstration as well) -- the display and the frame rate are essentially in perfect sync. Random framerates on a random refresh rate, staying in (near) perfect sync, so objects are still exactly where you expect them to be as they move across your screen.

I keep trying to explain to disbelieving software developers that this is REALLY what happens.

Yes, REALLY, your display is idling, waiting for you to Present() or glutSwapBuffers().

From a video cable point of view, the graphics card simply keeps scanning out "one more last VBI scanline" nonstop (at the same, unchanged horizontal scan rate). Once you Present(), the next scanline is Scanline #1 of your new refresh cycle.

So the blanking interval automatically extends over and over (dynamic-sized Vertical Back Porch) until Present() or glutSwapBuffers(). This is what FreeSync does, this is what HDMI 2.1 VRR does, this is what VESA AdaptiveSync does.
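To put rough numbers on that extending blanking interval, here is a minimal sketch. It assumes a 144 Hz mode with a vertical total of 1125 scanlines (a hypothetical timing I picked for illustration, not taken from any specific monitor) and a fixed horizontal scan rate. The function name is mine too.

```python
def blanking_lines_for_interval(frame_interval_s,
                                vertical_total_at_max=1125,  # assumed timing
                                max_hz=144):
    """Return (total_scanlines, extra_blanking_scanlines) for one refresh cycle.

    The horizontal scan rate never changes in VRR, so a longer wait before
    Present() simply means more "one more last VBI scanline" repeats.
    """
    # Scanlines per second, fixed by the max-Hz timing.
    h_scan_rate = vertical_total_at_max * max_hz
    # Scanlines that elapse between two consecutive Present() calls.
    total_lines = round(frame_interval_s * h_scan_rate)
    # A refresh cycle can never be shorter than the max-Hz cycle.
    total_lines = max(total_lines, vertical_total_at_max)
    return total_lines, total_lines - vertical_total_at_max


if __name__ == "__main__":
    for fps in (144, 100, 60):
        total, extra = blanking_lines_for_interval(1 / fps)
        print(f"{fps} fps: {total} scanlines per cycle, {extra} extra blanking")
```

Under these assumed timings, presenting every 1/100sec stretches each refresh cycle from 1125 to 1620 scanlines, and every one of the added scanlines is blanking.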

And a few of us managed to get FreeSync working on a MultiSync CRT (via a ToastyX tweak to enable FreeSync over HDMI, plus an HDMI-to-VGA adaptor), because this is a very gentle way of varying a refresh rate -- done in a way that is backwards compatible with analog signal standards. Because the horizontal scanrate remains unchanged, many MultiSync CRTs don't even do their usual refresh-rate-change blankout (depending on what they trigger on), and all you see is a dynamically-variable-rate flicker on your CRT, with zero stutter during varying-framerate motion. Just like an LCD GSYNC monitor, except you're doing variable refresh rate on a raster-scanned MultiSync CRT. The fewer "firmware cops" the MultiSync CRT has (refresh-rate-change blankout electronics), the more successfully it works with a FreeSync signal. (FreeSync, HDMI VRR, and VESA AdaptiveSync use the same protocol, so they adapt just fine into each other, sometimes with minor EDID override modifications, etc -- only G-SYNC is somewhat different, but its raster behaviour is still similar -- the scanline counter only begins incrementing when you Present())

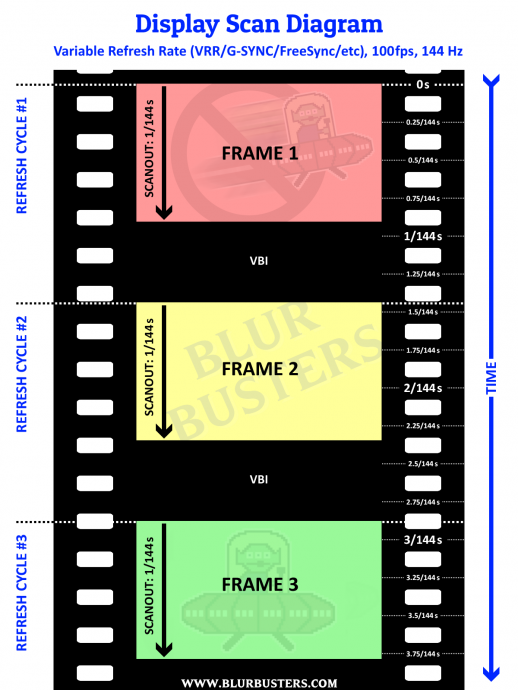

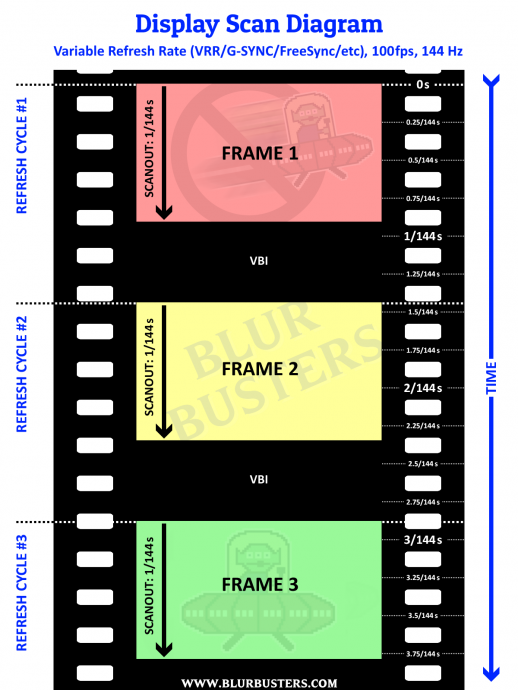

Here is a scan-out diagram of G-SYNC doing 100 frames per second at 144 Hz.

As you can see, during 144Hz GSYNC (where the range is 30Hz-144Hz), the interval between the beginnings of consecutive scanouts can vary anywhere from 1/144sec to 1/30sec, depending on the time between Present() calls. So if you Present() only every 1/100sec, that's when the beginning of each scanout occurs.

-- If you Present() or glutSwapBuffers() too early, it gets the alternate treatment (e.g. VSYNC ON, VSYNC OFF, FastSync) that is configured as the fallback.

-- If you Present() or glutSwapBuffers() too late, the drivers/display will automatically repeat the last refresh cycle so you cannot go below that Hz.

-- If you Present() or glutSwapBuffers() on time -- i.e. within the VRR range (e.g. between 1/144sec and 1/30sec after your last Present() call) -- then you've effectively become the master of your very own, personal software-timed refresh cycle.
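The three cases above can be sketched as a tiny classifier. This is only an illustration of the timing rule, assuming the 30Hz-144Hz range from the example. The function name and return strings are made up, and real drivers decide this internally.

```python
def classify_present(interval_s, min_hz=30.0, max_hz=144.0):
    """Classify the time since the previous Present() against the VRR range."""
    shortest = 1.0 / max_hz  # display cannot refresh faster than this
    longest = 1.0 / min_hz   # display cannot wait longer than this
    if interval_s < shortest:
        # Handled by the configured fallback (VSYNC ON, VSYNC OFF, FastSync).
        return "too early"
    if interval_s > longest:
        # The driver/display repeats the last refresh cycle on its own.
        return "too late"
    # The refresh cycle starts exactly when the software presents.
    return "on time"


if __name__ == "__main__":
    for fps in (200, 100, 20):
        print(f"{fps} fps -> {classify_present(1 / fps)}")
```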

The most complicated part of VRR is making sure 30Hz looks exactly like 144Hz. Ghosting-wise, overdrive-wise, gamma-wise. LCD pixels can decay differently. GtG can overshoot more or less. So they come up with very complex variable-refresh-rate dynamic overdrive algorithms to try to make 30Hz as identical as possible to 144Hz, so framerates can randomly spray all over the place without any noticeable color effects or spikes in bright-ghosts (e.g. coronas). It's all technically challenging. Generally (most of the time), NVIDIA has been better at overdriving VRR, but I've seen some really improved FreeSync lately too.

One big improvement AMD has made is to the certification process. FreeSync 2 certification is also AMD applying more rigorous quality rules to a VESA Adaptive-Sync panel before manufacturers are allowed to call it FreeSync 2. There's no cable difference between FreeSync, HDMI 2.1 VRR and VESA AdaptiveSync -- at least when it comes to their venn diagram of compatibility (e.g. 8-bit 1920x1080p) -- but FreeSync 2 also becomes a stamp of quality approval on what would normally be sold as generic unbranded VESA Adaptive-Sync. So, if you had to choose between a random FreeSync 2 display and a random VESA AdaptiveSync display, obviously steer to the FreeSync 2 display, since it means it got tested by a certification lab and passed the criteria. (Like a Dolby lab, or whatever.)

NVIDIA had a tighter leash on GSYNC, but AMD is improving quality control, so I'll give them kudos -- it's sometimes cheap Chinese manufacturers releasing generic VRR panels and slapping the FreeSync label on them without AMD's permission (or only minimally passing criteria), and sometimes you see an overdriven mess (really bad ghosting) during variable refresh rate operation. The spec is essentially free to use, but certification services (laboratory) are not free, which is why there is a much bigger spread of best-versus-worst with FreeSync than with GSYNC. At least historically. That's why some people are willing to pay the "GSYNC tax", for the consistency. Either way, I am a big fan of both technologies; it's just helpful to understand the complications that VRR has foisted upon the display industry.

NOTE: Drivers also have a Low Framerate Compensation (LFC) feature, which you may have heard of. It is simply a predictive repeat-refresher (to prevent well-framepaced deliveries of new refresh cycles from arriving while the panel is still scanning out). So if it detects frame rates that run well below the minimum VRR rate, it will trigger predictive repeat-refreshes early, running at a perfect 48Hz to play 24fps material. Or a perfect 40Hz to play 20fps material. Or a perfect ~30Hz to do 10fps. Etc. By repeating refresh cycles early, the display is idling at precisely the time the next frame arrives. So it really is essentially behaving like a 24Hz display when you play 24fps movies in a VRR-compatible app (game, video player like SMplayer, etc); the drivers are simply repeat-refreshing at 1/48sec intervals rather than 1/30sec (where the panel might still be scanning out and colliding with the attempted delivery of a new frame shortly after the 1/30sec interval). So if you frame-pace your Present() events 1/24sec apart, the drivers intelligently force a repeat-refresh cycle exactly in between the two, unbeknownst to your app. But this is only important to know for low framerates. This doesn't occur for 50Hz or 60Hz material, so this stuff isn't important for emulators, but I mention it as "technology background" stuff.
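The LFC numbers above (24fps -> 48Hz, 20fps -> 40Hz, 10fps -> ~30Hz) fall out of picking the smallest whole-number repeat count that lifts the refresh rate back into the VRR range. Here is a minimal sketch of that arithmetic, assuming a 30Hz-144Hz range. Actual driver heuristics are not public and almost certainly differ in their thresholds and prediction, so treat the function below as a hypothetical illustration only.

```python
import math


def lfc_refresh_hz(fps, min_hz=30.0, max_hz=144.0):
    """Return (refreshes_per_frame, effective_hz) for a steady frame rate.

    Picks the smallest integer multiplier that brings the refresh rate up
    to at least min_hz. Assumes max_hz is at least twice min_hz, so the
    chosen rate always fits inside the range.
    """
    if fps >= min_hz:
        # Already inside the VRR range: one refresh per frame.
        return 1, fps
    multiplier = math.ceil(min_hz / fps)
    return multiplier, fps * multiplier


if __name__ == "__main__":
    for fps in (24, 20, 10, 60):
        n, hz = lfc_refresh_hz(fps)
        print(f"{fps} fps -> {n} refresh(es) per frame at {hz} Hz")
```

With frames paced 1/24sec apart, this gives two refreshes per frame, i.e. one repeat-refresh in between each pair of Present() calls.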