Assume a monitor's variable refresh range is 20-60Hz, and suppose my application renders frames every 40ms exactly (25Hz). At the end, an exceptional frame occurs that takes only 20ms to render (50Hz). Is this frame presented as soon as it's ready, or does the monitor take time to change its refresh rate, thus delaying the frame by 20ms? And if the exceptional frame takes 50ms to render instead of 20ms (20Hz), how does the behavior change? None of the FreeSync explanations I've looked through have answered this question.

Here are two possibilities that I might expect. The first is that the monitor scans at its highest rate no matter what, and treats the remaining time as interruptible downtime. In this case, the exceptional frame will be presented immediately after it's done rendering, since 50Hz and 20Hz are still within its allowable range.

The second possibility is that the monitor requires its full period to improve color accuracy. That makes frames non-interruptible; the computer must inform the monitor how long the next frame will be displayed, and the monitor uses the entire promised period for optimal picture quality. After the last frame finishes in 20ms, the previous 40ms frame is still halfway through presenting. Thus, the frame will be delayed. If the monitor isn't able to hold an existing frame for an arbitrary time (and they aren't, since otherwise the variable refresh range wouldn't have a lower limit), the graphics card must predict how long the next frame should take, and will introduce some delay before each frame to handle variation in framerate.

Neither AMD nor Nvidia have been willing to describe these basic technical details after three years, as far as I can tell.

How do FreeSync's technical details work? [Programmer POV]


ad8e


- Joined: 18 Sep 2018, 00:29

- RealNC

- Site Admin


- Joined: 24 Dec 2013, 18:32


Re: How do FreeSync's technical details work?

There is no "refresh rate change."

In VRR mode, the monitor can be prevented from starting a new scanout at the end of the current scanout. As soon as a new frame is available to be scanned out, the monitor is then allowed to continue. The speed at which the monitor is doing the scanout is constant and controlled by the selected refresh rate.

The scanout speed is the velocity that you would measure if you look at how pixels are updated on the monitor. This was the speed of the electron beam back in CRT times, but now there's no electron beam. So the scanout speed is just the rate at which the pixels are updated, from left to right, line by line, from top to bottom, as if it was an electron beam racing across the screen, hitting the pixels.

So the scanout speed is constant. When a full scanout has been completed, meaning the last pixel in the lower right corner of the panel has been updated, the GPU will stop the scanout with some signal trickery. The monitor will not start scanning out again from the beginning. The way this is done is by increasing the vertical blanking interval in real-time, so the monitor thinks it's still not done with the current scanout period. So the monitor just "hangs" indefinitely, until the GPU changes the signal.

So, as soon as the next frame is available in the GPU's frame buffer, the GPU will change the display signal so that the monitor will start scanning out from the top-left again.

If a new frame comes too soon (meaning the monitor still hasn't finished scanning out the previous frame), then the GPU has two choices: either delay the new frame just enough until the monitor is finished, or don't delay it and let the monitor scan out the new frame from its current scanout position. The former happens when you use vsync ON, the latter when you use vsync OFF. The monitor doesn't know anything about this stuff; that's purely a GPU driver decision. The only thing the monitor does is scan out pixels at a constant speed and get "tricked" into freezing at the end of the scanout.
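To make the vsync ON/OFF choice concrete, here is a toy Python model of the driver-side decision described above. It's a sketch under simplifying assumptions, not actual driver code: time is in milliseconds, every scanout takes the same fixed time (1 / max Hz; a round 16 ms is used below for easy arithmetic), and the names `present` and `Presentation` are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Presentation:
    start_ms: float            # when the new frame starts scanning out
    tear_line: Optional[int]   # scanline where the tear lands (None = no tear)


def present(ready_ms: float, prev_start_ms: float, scanout_ms: float,
            active_lines: int, vsync_on: bool) -> Presentation:
    """Toy model of the driver's choice when a new frame becomes ready.

    prev_start_ms: when the previous refresh cycle began scanning out.
    scanout_ms:    fixed time for one full scanout (1 / max Hz).
    """
    prev_end_ms = prev_start_ms + scanout_ms
    if ready_ms >= prev_end_ms:
        # Previous scanout is done; the monitor is waiting in the blanking
        # interval, so the new refresh cycle can start right away.
        return Presentation(ready_ms, None)
    if vsync_on:
        # vsync ON: hold the frame until the current scanout finishes.
        return Presentation(prev_end_ms, None)
    # vsync OFF: splice the new frame in at the current raster position.
    fraction = (ready_ms - prev_start_ms) / scanout_ms
    return Presentation(ready_ms, int(fraction * active_lines))


# 1080 visible lines, 16 ms scanout, new frame ready 8 ms into the scanout.
print(present(8.0, 0.0, 16.0, 1080, vsync_on=True))   # waits until 16.0 ms
print(present(8.0, 0.0, 16.0, 1080, vsync_on=False))  # tears at line 540
```

Either way the monitor just keeps scanning at the same speed; only the moment the GPU switches to the new frame differs.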

There are lots of other things needed to make all this happen, of course, like fail-safes for when a frame takes too long, in which case the monitor is unfrozen again in order not to damage the panel (if you don't refresh LCD pixels for too long, they can get damaged). It's not only damage, but also visual artifacts: if you don't refresh for too long, the pixels start to lose their color. This is the reason why LCD panels have a VRR range. It's also the reason why some monitors, especially TN panels, can show flickering when they're near the bottom end of the VRR range. Even when there's no change in color (like when displaying a static image), LCD pixels need to be refreshed with a different voltage, otherwise they get damaged. (The need for a voltage change is the source of pixel inversion artifacts, but that's another story entirely.)

In any event, FreeSync is just an AMD label. The actual spec for this is defined by the DisplayPort standard (Adaptive-Sync), and by the HDMI standard for HDMI VRR. I don't know if the specs for these are public or not.

NVIDIA of course does not publish a G-SYNC spec, but it's pretty clear that it works the same as DisplayPort VRR. The "secret sauce" of G-SYNC involves other things, like VRR overdrive, LFC (Low Framerate Compensation) and such. The actual way a monitor is driven during VRR operation is almost certainly exactly the same between G-SYNC and DisplayPort VRR (FreeSync).



The views and opinions expressed in my posts are my own and do not necessarily reflect the official policy or position of Blur Busters.


- jorimt


- Joined: 04 Nov 2016, 10:44

- Location: USA

Re: How do FreeSync's technical details work?

Reinforcing some of what RealNC has already posted: while FreeSync is more software-based and G-SYNC is more hardware-based, their core functionality is very similar. Both manipulate the scanout "rate" (how many times a single scanout cycle is repeated per second), not the scanout "speed" (how long it takes a single scanout cycle to complete), which is based on the given max refresh rate of the display, and is static and unchanging, even with VRR.

ad8e wrote: Neither AMD nor Nvidia have been willing to describe these basic technical details after three years, as far as I can tell.

This subject has been covered in my article for some time now.

Some excerpts...

G-SYNC 101: Range

https://www.blurbusters.com/gsync/gsync ... ettings/2/

VRR V-SYNC ON vs. V-SYNC OFF:

G-SYNC + V-SYNC “Off” disables the G-SYNC module’s ability to compensate for sudden frametime variances, meaning, instead of aligning the next frame scan to the next scanout (the process that physically draws each frame, pixel by pixel, left to right, top to bottom on-screen), G-SYNC + V-SYNC “Off” will opt to start the next frame scan in the current scanout instead. This results in simultaneous delivery of more than one frame in a single scanout (tearing).

and...

Unlike G-SYNC + V-SYNC “Off,” G-SYNC + V-SYNC “On” allows the G-SYNC module to compensate for sudden frametime variances by adhering to the scanout, which ensures the affected frame scan will complete in the current scanout before the next frame scan and scanout begin. This eliminates tearing within the G-SYNC range, in spite of the frametime variances encountered.

VRR LFC:

Once the framerate reaches the approximate 36 and below mark, the G-SYNC module begins inserting duplicate refreshes per frame to maintain the panel’s minimum physical refresh rate, keep the display active, and smooth motion perception. If the framerate is at 36, the refresh rate will double to 72 Hz, at 18 frames, it will triple to 54 Hz, and so on. This behavior will continue down to 1 frame per second.

G-SYNC 101: G-SYNC vs. V-SYNC OFF w/FPS Limit

https://www.blurbusters.com/gsync/gsync ... ettings/6/

VRR Scanout Manipulation:

With a fixed refresh rate display, both the refresh rate and scanout remain fixed at their maximum, regardless of framerate. With G-SYNC, the refresh rate is matched to the framerate, and while the scanout speed remains fixed, the refresh rate controls how many times the scanout is repeated per second (60 times at 60 FPS/60Hz, 45 times at 45 FPS/45Hz, etc.), along with the duration of the vertical blanking interval (the span between the previous and next frame scan), where G-SYNC calculates and performs all overdrive and synchronization adjustments from frame to frame.

There's plenty more information on VRR in my article, if you would like to take a look, and I'd be happy to provide any follow-up answers after you've read it, if needed.
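The LFC excerpt can be approximated with a few lines of Python. This is only a guess at the rule implied by the quoted numbers (repeat each frame the smallest whole number of times that lifts the refresh rate above roughly 36Hz), not NVIDIA's or AMD's actual algorithm, which isn't public; `lfc_refresh` and its 36Hz default are hypothetical and specific to the display the excerpt describes.

```python
from typing import Tuple


def lfc_refresh(fps: float, threshold_hz: float = 36.0) -> Tuple[int, float]:
    """Return (refreshes per frame, resulting refresh rate in Hz).

    Simplified Low Framerate Compensation model: show each frame the smallest
    whole number of times that pushes the refresh rate above threshold_hz.
    The 36Hz default mirrors the quoted excerpt and is display-specific.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    repeats = 1
    while fps * repeats <= threshold_hz:
        repeats += 1                      # add one more duplicate refresh
    return repeats, float(fps * repeats)


for fps in (60, 36, 18, 1):
    print(fps, lfc_refresh(fps))          # 36 -> (2, 72.0), 18 -> (3, 54.0)
```

Every duplicate refresh is still a full max-speed scanout; LFC only changes how often the same frame is sent.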

(jorimt: /jor-uhm-tee/)

Author: Blur Busters "G-SYNC 101" Series

Displays: ASUS PG27AQN, LG 48C4 OS: Windows 11 Pro Case: Fractal Design Torrent PSU: Seasonic PRIME TX-1000 MB: ASUS Z790 Hero CPU: Intel i9-13900k w/Noctua NH-U12A GPU: GIGABYTE RTX 4090 GAMING OC RAM: 32GB G.SKILL Trident Z5 DDR5 6400MHz CL32 SSDs: 2TB WD_BLACK SN850 (OS), 4TB WD_BLACK SN850X (Games) Keyboard: Wooting 60HE Mouse: Razer Viper Mini SE Sound: Creative Sound Blaster Katana V2 VR: Beyond 2, Quest 3, Reverb G2, Index Consoles: Dreamcast, PS2, PS3, PS5, Switch 2, Wii, Xbox, Analogue Pocket + Dock Scaler: RetroTINK 4k



ad8e


- Joined: 18 Sep 2018, 00:29

Re: How do FreeSync's technical details work?

Thanks for the answers; that was very clear and exactly what I was looking for.

- Chief Blur Buster

- Site Admin


- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada


Re: How do FreeSync's technical details work?

jorimt wrote: Reinforcing some of what RealNC has already posted, while FreeSync is more software-based, and G-SYNC is more hardware-based, their core functionality is very similar; both manipulate the scanout "rate" (how many times a single scanout cycle is repeated per second) not the scanout "speed" (how long it takes a single scanout cycle to complete)

Yes. One catch that may confuse others, just as a clarification: the display engineer's terminology for "scanout speed" is "horizontal scan rate" or "horizontal refresh rate", which can be confused with the former ("scanout rate").

So "scanout rate", while technically correct, is subtly different from the industry term "scan rate":

scanout rate = vertical refresh rate = the rate of full refresh cycles output per second. For this, I prefer to use "refresh rate" instead of "scanout rate", albeit see below for yet another subtle distinction on that.

scan rate = horizontal refresh rate = the industry standard display engineer terminology for number of pixel rows output per second (same thing as "scanout speed" or "scanout velocity").

Scan rates are often numbers such as "31.5 kilohertz" for 640x480 VGA 60Hz, and "135 kilohertz" for 1080p 120Hz.
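Those figures are simple arithmetic: the horizontal scan rate is the total number of scanlines per refresh cycle (visible lines plus VBI lines) times the vertical refresh rate. The sketch below assumes the standard vertical totals of 525 lines for 640x480 VGA and 1125 lines for CTA-861 1080p; custom or reduced-blanking timings give different numbers.

```python
def horizontal_scan_rate_khz(vertical_total: int, refresh_hz: float) -> float:
    """Scanlines per second = total lines per refresh cycle (visible + VBI) x refresh rate."""
    return vertical_total * refresh_hz / 1000.0


print(round(horizontal_scan_rate_khz(525, 59.94), 2))   # VGA 640x480: 31.47
print(horizontal_scan_rate_khz(1125, 120))               # 1080p 120Hz: 135.0
```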

In Custom Resolution Utilities (e.g. ToastyX), it's often labelled as "Horizontal Refresh Rate".

Also, if we refer to the scanout of only the visible active resolution (excluding the VBI), that can take less time than a full refresh cycle (especially with Quick Frame Transport, Large Vertical Totals, and low framerates on VRR). This is harder to name: "refresh cycle delivery time", "frame delivery time", "frame transport time", "scanout duration" or "scanout time".
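A quick way to see why the visible scanout can be shorter than the refresh cycle: at a fixed scan rate, the visible rows always take the same time, and whatever is left over is VBI. This sketch assumes the 135 kHz 1080p timing mentioned above is the display's max-Hz mode; the function names are illustrative.

```python
def active_scanout_ms(active_lines: int, scan_rate_khz: float) -> float:
    """Time to transmit only the visible rows at a fixed horizontal scan rate."""
    return active_lines / scan_rate_khz          # lines / (lines per ms)


def refresh_interval_ms(refresh_hz: float) -> float:
    """Time between the starts of consecutive refresh cycles."""
    return 1000.0 / refresh_hz


scanout = active_scanout_ms(1080, 135.0)          # 8.0 ms at 135 kHz
for hz in (120, 48):
    vbi = refresh_interval_ms(hz) - scanout       # time spent in the blanking interval
    print(f"{hz} Hz: visible scanout {scanout:.2f} ms, VBI {vbi:.2f} ms")
```

At 48Hz the visible rows still go out in 8 ms; the rest of the roughly 20.8 ms refresh interval is blanking.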

___

For you readers....

Display signals get complicated, don't they? Especially when we throw in VRR, QFT, custom VBIs (Large Vertical Totals), etc. I'm even ignoring other nuances such as pixel formats (e.g. ordinary integer 24-bit RGB versus floating-point HDR pixels) and/or display stream compression techniques (and DisplayPort/HDMI packetization, which can compress whole scanlines at once), and simply focusing on pixel delivery timings only. Heh.

ad8e wrote: Here's two possibilities that I might expect. The first is that the monitor scans at its highest rate no matter what, and treats the remaining time as interruptible downtime.

That's effectively what happens.

It's a variable-length blanking interval.

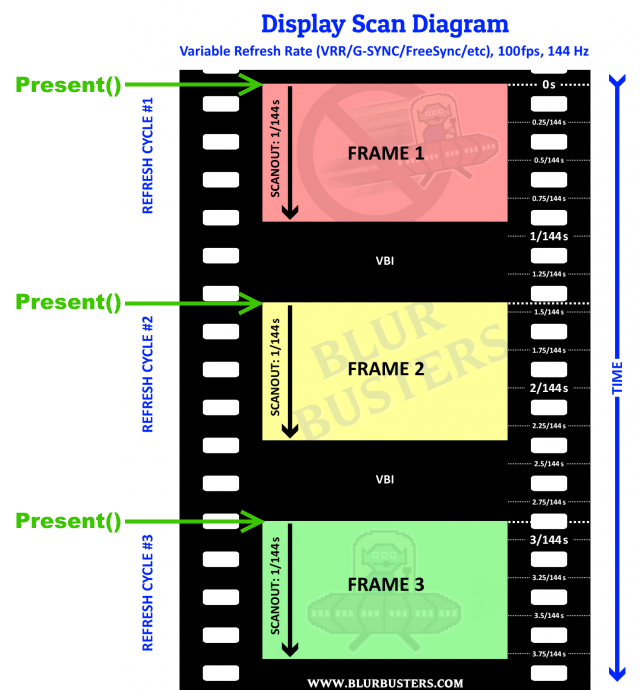

All refresh cycles are output at the same scanout velocity as the max Hz; the horizontal scan rate remains fixed. E.g. all refresh cycles of 48Hz-240Hz VRR are raster scanned out in 1/240sec.

Between refresh cycles, the signal is essentially looping inside the VBI: the GPU continually outputs new dummy VBI scanlines (Back Porch, for you video signal geeks) until Present() or glutSwapBuffers(), at which point the next scanline the GPU outputs is the beginning of the new refresh cycle. There may be a granularity associated with it (potentially 1ms), but there doesn't have to be, at least when operating within the VRR range.

So your API call controls the beginnings of new refresh cycles. It's asynchronous refreshing.
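Applied to the original question (a 20-60Hz monitor, 40 ms frames, then one 20 ms or 50 ms frame), this variable-length blanking model predicts no extra delay in either case. Below is a minimal Python sketch of that model with VSYNC ON, assuming every frame interval stays inside the VRR range; the 50 ms frame sits right at the 20Hz floor, which this model treats as in range. `refresh_starts` is a hypothetical name.

```python
from typing import List


def refresh_starts(ready_ms: List[float], max_hz: float) -> List[float]:
    """Refresh-cycle start times under VRR + VSYNC ON (simplified model).

    Assumes every frame interval stays inside the VRR range, so the
    min-Hz repeat-refresh fail-safe never kicks in.
    """
    scanout_ms = 1000.0 / max_hz      # each scanout runs at max-Hz velocity
    starts: List[float] = []
    for ready in ready_ms:
        if not starts:
            starts.append(ready)
            continue
        earliest = starts[-1] + scanout_ms   # previous scanout must finish first
        starts.append(max(ready, earliest))
    return starts


# The question's 20-60Hz monitor: four 40 ms frames, then a 20 ms or 50 ms frame.
for last in (140, 170):
    ready = [0, 40, 80, 120, last]
    starts = refresh_starts(ready, max_hz=60)
    delays = [s - r for s, r in zip(starts, ready)]
    print(last, delays)   # every delay is 0: each frame scans out as soon as it's ready
```

For contrast, a frame arriving only 10 ms after the previous one would have to wait until the previous 1/60sec scanout finishes, starting at about 16.7 ms.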

ad8e wrote: The second possibility is that the monitor requires its full period to improve color accuracy. That makes frames non-interruptible; the computer must inform the monitor how long the next frame will be displayed, and the monitor uses the entire promised period for optimal picture quality. After the last frame finishes in 20ms, the previous 40ms frame is still halfway through presenting.

That's not how things work.

There's a cable scanout behaviour and a monitor scanout behaviour.

Otherwise, VSYNC OFF would be impossible. Tearlines are rasters (see explanation). RTSS has a new method of hiding tearlines in the blanking interval (Scanline Sync), implemented from my feature suggestion to them on Guru3D, and it requires understanding the behaviour of scanout.

So to simplify understanding, focus only on the GPU output. That's all you control. Forget about trying to visualize monitor processing; that's the horse out of the open barn door.

ad8e wrote: Neither AMD nor Nvidia have been willing to describe these basic technical details after three years, as far as I can tell.

Try me. I can explain FreeSync.

It's really, really easy for me to understand (at least FreeSync). I can explain the technical behaviour of FreeSync: it's simply variable-length VBIs.

Some of us actually got it to work on multisync CRTs too (those without refresh-rate blankout logic) by using an HDMI-to-VGA adapter and ToastyX CRU to force FreeSync. Fundamentally, at the protocol level, FreeSync is simply a backwards-compatible tack-on to an existing 1930s analog signal standard: variable-length VBIs. The graphics output is simply in an "infinite loop"* continually outputting VBI scanlines until software Present()s, and then the new refresh cycle immediately begins on the spot out of the GPU output.
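That "infinite loop" can be pictured scanline by scanline. The toy generator below uses tiny made-up line counts so the output stays readable, plus an arbitrary scanline period in which the app calls Present(). It illustrates the idea (fixed active, front porch and sync lines, then dummy back-porch lines until a frame is ready); it is not how a GPU's display engine is actually programmed, and `gpu_output` is a hypothetical name.

```python
from typing import Iterable, Iterator


def gpu_output(present_ticks: Iterable[int], active_lines: int = 3,
               front_porch: int = 1, sync: int = 1, min_back_porch: int = 1,
               total_ticks: int = 16) -> Iterator[str]:
    """Yield what the GPU transmits in each scanline period (toy model)."""
    ready = set(present_ticks)       # scanline periods in which Present() is called
    pending = False                  # a new frame is waiting to be scanned out
    phase, remaining = "active", active_lines
    for tick in range(total_ticks):
        if tick in ready:
            pending = True
        yield phase
        remaining -= 1
        if remaining > 0:
            continue
        if phase == "active":
            phase, remaining = "front_porch", front_porch
        elif phase == "front_porch":
            phase, remaining = "sync", sync
        elif phase == "sync":
            phase, remaining = "back_porch", min_back_porch
        elif pending:                # back porch done and a frame is ready
            pending = False
            phase, remaining = "active", active_lines   # new refresh cycle begins
        else:                        # no frame yet: emit one more dummy back-porch line
            remaining = 1


print(list(gpu_output(present_ticks=[10])))
```

The back porch stretches from one line to six because Present() only arrives in scanline period 10; the very next line is the start of the new refresh cycle.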

(What happens when the display receives the pixels at its end of the cable varies from display to display. Some displays will realtime-scanout 'off the wire' like a CRT. Many 1080p 144Hz gaming LCDs can now do this, producing VSYNC OFF lag numbers as little as roughly 3ms from API-to-photons -- as shown from RTINGS tests -- mostly consisting of various overheads along the chain.)

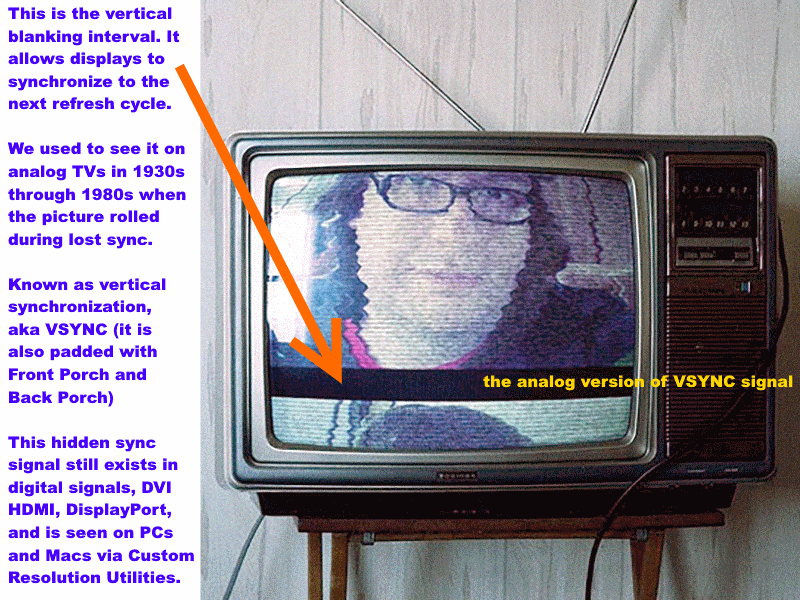

Here's an image of the VSYNC as seen on a very old television set: it's simply black scanlines spacing out the refresh cycles, used as a synchronization signal (a time padding between the last scanline of one refresh cycle and the first scanline of the next).

On FreeSync, that figurative "VHOLD" bar gets thicker/thinner to space out refresh cycles. The VHOLD black bar is also called VSYNC or VBI. Few gamers understand the technical Rube Goldberg details behind "VSYNC ON", so we're really opening the hood here.

The VBI (that black bar) consists of three components that look nearly identical because they're all black on the signal level, stacked on each other:

- Vertical Front Porch scanlines (overscan padding between visible resolution and "Vertical Sync" signal, an equivalent of NTSC 7.5 IRE black level)

- Vertical Sync scanlines (often a "below black" pixel value, an equivalent of NTSC 0 IRE on signal level)

- Vertical Back Porch scanlines (overscan padding between "Vertical Sync" and visible resolution)

This was born in the analog era, but this topology is still used on all digital signals (DVI, HDMI, DisplayPort). The VBI was originally there to give the electron gun time to move to its new position (e.g. back to the upper-left corner of the CRT): the porches were used as overscan area, and the sync was the trigger to begin moving the electron gun. Today, it's simply digital timing padding to allow the monitor electronics to get ready to begin the next refresh cycle, or the next pixel row, etc. But the topology is still exactly the same (Porches, Sync, Active) as seen in Custom Resolution Utilities.

And FreeSync is a super-simple bolt-on that is backwards compatible with signal conversion to analog, which means FreeSync actually ends up working on very good multisync CRTs that aren't too sensitive to blanking out during refresh rate changes. Fortunately, the scan rate stays exactly the same permanently, which makes it much easier: only the Back Porch is variable-sized in FreeSync. Everything in a Custom Resolution Utility stays exactly the same on FreeSync; only the "Vertical Back Porch" number dynamically changes to vary the intervals between refresh cycles, with zero need for display mode changes (like changing between fixed-Hz modes).
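Since the scan rate never changes, stretching a refresh cycle comes down to choosing how many back-porch lines to send. The sketch below assumes a CTA-861 style 1080p timing at 135 kHz (4 front porch lines, 5 sync lines, 36 back porch lines at 120Hz); real FreeSync modes use whatever timing the monitor advertises, and `back_porch_lines` is a hypothetical helper.

```python
def back_porch_lines(target_hz: float, h_freq_hz: float, active: int,
                     front_porch: int, sync: int) -> int:
    """Vertical back porch needed so one refresh cycle lasts about 1/target_hz.

    The horizontal scan rate (h_freq_hz) is fixed, so the only way to stretch
    the refresh interval is to add whole scanlines to the back porch.
    """
    total_lines = round(h_freq_hz / target_hz)      # whole scanlines per refresh cycle
    back_porch = total_lines - active - front_porch - sync
    if back_porch < 1:
        raise ValueError("target_hz is above what this timing can reach")
    return back_porch


# Assumed timing: CTA-861 style 1080p at 135 kHz (4 front porch + 5 sync lines).
for hz in (120, 60, 48):
    print(hz, back_porch_lines(hz, 135_000, 1080, 4, 5))
```

Only the last number changes between rows; active lines, front porch, sync and scan rate all stay put, and the interval can only be adjusted in steps of one scanline.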

This is the same for HDMI 2.1 VRR, VESA Adaptive-Sync, and AMD FreeSync. In addition, I had a private meeting with AMD during CES 2018, where I asked some roundabout questions about the FreeSync protocol. It's not often publicly documented for end users because it's really just AMD enhancements to VESA Adaptive-Sync, both of which are documented for display manufacturers. Some details can be under NDA, but the commonalities are open/free: anybody can legally generate a FreeSync-compatible signal if they wanted to, though everything from advertising it (e.g. EDID) to implementing it (e.g. GPU programming) involves extra details that are beyond mainstream users such as me.

Forcing FreeSync onto an HDMI 2.1 VRR display or a VESA Adaptive-Sync display actually works fine. A "Back Porch" with a varying number of scanlines is all FreeSync fundamentally is.

That said... FreeSync has other engineering challenges, like designing good ghost-free LCD overdrive for asynchronous refresh cycles. But that's display-side engineering to worry about, along with LCD artifacts that look very different at low Hz versus high Hz. That's why NVIDIA spent a lot on GSYNC, so GSYNC looked a lot better than FreeSync for many years until FreeSync finally caught up (FreeSync 2 can look really good, because of its much more rigorous certification).

(Monitor color processing is a /completely/ separate topic altogether; it's sometimes done via monitor buffering -- sometimes only trailing buffers to prevent lag, and sometimes leading buffers which add lag (rarely done now in gaming monitors). Whether or not the monitor does color processing, overdrive processing or HDR processing, everything is still raster-scanned over the cable.) This has no effect on the GPU-output side of things, exactly like for tearlines. So you, as a programmer, should FOCUS only on the GPU output and not worry about monitor processing.

From a programming perspective, we don't care what the display does (whether it buffers or not, processes or not); after all, that's the horse out of the barn door (GPU output). At the GPU output level, you're indeed software-controlling the refresh cycles as long as the refresh interval is within the VRR range (e.g. "30Hz - 144Hz" means you're Present()ing between 1/144sec and 1/30sec apart). If you do that, you're software-triggering refreshes, and the API-to-photons latency for a given pixel on the display becomes a constant. Assuming you're excluding rendering overheads or keeping them super-consistent (e.g. just repeatedly delivering the exact same frame, or simply a different-colored solid rectangle), API-to-photons can be literally 10-microsecond accurate (the timing granularity of 1 scanline).
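A small sketch of the two checks in that paragraph: whether a Present() interval falls inside the VRR range, and how long after the start of a refresh cycle a given row goes out over the cable. It assumes a 135 kHz scan rate, assumes visible rows start right after Present() (as in the variable back porch description), and ignores rendering, driver and display-side overheads, so treat it as an idealized figure rather than a latency measurement; the function names are made up.

```python
def in_vrr_range(interval_s: float, min_hz: float, max_hz: float) -> bool:
    """True if a Present()-to-Present() interval can directly trigger refreshes."""
    return 1.0 / max_hz <= interval_s <= 1.0 / min_hz


def row_delay_us(row: int, h_freq_hz: float) -> float:
    """Microseconds from the start of a refresh cycle until visible row `row` is sent."""
    return row * 1e6 / h_freq_hz


print(in_vrr_range(1 / 60, 30, 144))    # True: 60 fps fits a 30-144Hz range
print(in_vrr_range(1 / 200, 30, 144))   # False: faster than max Hz
print(row_delay_us(1, 135_000))         # one scanline is about 7.4 microseconds
print(row_delay_us(540, 135_000))       # mid-screen row about 4 ms after the refresh starts
```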

It's also why WinUAE's emu-raster-and-real-raster synchronization (lagless VSYNC) works even in GSYNC/FreeSync mode: you can still interrupt frames mid-scanout with a new frame (VSYNC OFF tearlines) in the GSYNC+VSYNC OFF and FreeSync+VSYNC OFF modes. Check out GitHub and the VRR explanation.

It works on GSYNC too, not just FreeSync, which shows that the behaviours are pretty similar. They're both variable spacings between full-velocity scanouts. The additional stuff that GSYNC does is slightly different, e.g. repeat-refresh behaviours, polling behaviours or 2-way behaviours (FreeSync is totally unidirectional; that's why it unexpectedly works on analog multisync CRTs too), overdrive processing on the monitor side, etc. But fundamentally, it's just max-speed scanouts with variable padding between them: variable-size VBIs.

*Asterisked note: It's not really an infinite VBI loop, due to the min Hz. There's a maximum duration after which the monitor will go artifacty/black unless either the GPU outputs a new refresh cycle, or the monitor has auto-refreshing logic. On FreeSync, the GPU is responsible for transmitting a new refresh cycle, and the graphics drivers do it automatically. If this is somehow hacked and you intentionally withhold a new refresh cycle, the screen may go blank, show artifacts, fade to white, or go out of sync (like when changing a fixed-Hz refresh rate) -- behaviour is undefined. So you don't want to go below min Hz, but you can't anyway, because the GPU will automatically repeat the previous refresh cycle if you don't present a new one on time. That repeat refresh blocks the ability to deliver a new refresh cycle on demand. So if you want precise software control over your refresh cycle timings, you don't want Present() intervals longer than the refresh interval at the min Hz of your VRR range, otherwise the next Present() call will block.
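The min-Hz fail-safe and its blocking effect can be sketched as follows. This is a simplified model under stated assumptions: the driver inserts a repeat refresh exactly when the min-Hz deadline expires, and a new frame must wait for any in-progress repeat to finish scanning out. Real drivers (especially with LFC) may schedule repeats earlier, and `next_refresh_start` is a hypothetical helper.

```python
from typing import Tuple


def next_refresh_start(prev_start_ms: float, ready_ms: float,
                       min_hz: float, max_hz: float) -> Tuple[float, int]:
    """When a newly presented frame starts scanning out (simplified model).

    Returns (start time in ms, number of repeat refreshes the driver inserted).
    """
    scanout_ms = 1000.0 / max_hz     # every refresh, including repeats, takes this long
    max_hold_ms = 1000.0 / min_hz    # longest gap allowed between refresh starts
    start, repeats = prev_start_ms, 0
    while ready_ms - start > max_hold_ms:
        start += max_hold_ms         # deadline hit: driver re-sends the previous frame
        repeats += 1
    return max(ready_ms, start + scanout_ms), repeats


print(next_refresh_start(0.0, 20.0, 30, 144))   # (20.0, 0): inside the range, no delay
print(next_refresh_start(0.0, 35.0, 30, 144))   # one repeat; new frame waits for it
```

In the second call, the frame arrives at 35 ms, just after the 1/30sec deadline, so it waits for the repeat refresh that began at about 33.3 ms to finish scanning out, starting at roughly 40.3 ms instead.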

TL;DR for software developers: Direct3D Present() and OpenGL glutSwapBuffers() begin delivering the refresh cycle practically immediately, as long as your API-call interval is within the VRR range, e.g. an interval of 1/144sec thru 1/30sec for a "30Hz-144Hz" VRR display. That means the display refresh cycle is indeed software-triggered by your API timing! Yep, you, my dear software developer, are the master of the refresh cycle on a FreeSync/GSYNC display!

These scan diagrams are easier to understand after seeing high-speed videos.

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on: BlueSky | Twitter | Facebook

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!