My attempt at achieving a low input lag. Am I doing it right?

Posted: 22 Mar 2020, 10:39

Hello,

To reduce the input lag of my game, and to offer settings that let players trade input lag against smoothness according to their machine's performance, I wrote a program to test different settings and techniques.

For now, I am interested in reducing the input lag.

The game needs to be deterministic, so it uses a fixed timestep. Because a fixed timestep can cause smoothness issues, I used this program to check whether interpolation adds too much input lag. I also tried a variable timestep in which the next frame can start earlier to stay in sync with the screen refresh, while the overall update rate stays the same. This is what I call "loose timestep".
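
As a rough sketch of the fixed timestep plus interpolation setup described above: this is not the code from the repository, and `State`, `FixedStepper` and `interpolate` are hypothetical names used only for illustration. The simulation always advances in whole ticks, and the renderer blends the previous and current states using the leftover fraction of a tick.

```cpp
#include <cassert>

// Hypothetical game state: a single position, for illustration only.
struct State { double x; };

// Linear blend between the previous and the current simulation state.
State interpolate(const State& prev, const State& curr, double alpha) {
    return State{prev.x + (curr.x - prev.x) * alpha};
}

// Accumulates real frame time and hands it out in fixed-size ticks.
struct FixedStepper {
    double tickSeconds;        // fixed simulation step, e.g. 1.0 / 60.0
    double accumulator = 0.0;  // real time not yet consumed by a tick

    explicit FixedStepper(double tick) : tickSeconds(tick) {
        assert(tickSeconds > 0.0);
    }

    // Adds the elapsed frame time and returns how many ticks to simulate now.
    int advance(double frameSeconds) {
        assert(frameSeconds >= 0.0);
        accumulator += frameSeconds;
        int ticks = 0;
        while (accumulator >= tickSeconds) {
            accumulator -= tickSeconds;
            ++ticks;
        }
        return ticks;
    }

    // Fraction of a tick elapsed since the last simulated tick, in [0, 1).
    double alpha() const { return accumulator / tickSeconds; }
};
```

Each rendered frame would call `advance()` with the measured frame time, run that many game updates, then draw `interpolate(previous, current, stepper.alpha())`. Because the drawn state lies between the previous and the current tick, it can be up to one tick old, which is the extra lag the measurements are meant to quantify.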

The program has a scene in which it displays 3 white bars when an input is pressed. I use a photodiode and a microcontroller to send keyboard inputs and measure how long it takes for the screen to go from black to white, and then how many frames are displayed before the program displays black again because the button is released. This way I have input lag in ms and in game ticks, at the top, middle and bottom of the monitor. Each test is repeated 1000 times, with a random delay between ON inputs.

I tested several update rates, from less than half the display refresh rate to more than double it, with integer and non-integer ratios. I tested with different render times to see the potential behaviour on faster and slower machines. I tested with V-Sync off and on, with and without GPU hard sync, and with predictive waiting.

I noticed that I got inconsistent results when V-Sync is turned off. The program starts at a random point in the display refresh cycle, so from one run to the next the buffers are not swapped at the same point in the cycle. To get consistent results, the program turns V-Sync on for one frame, syncs up, and then turns it off again.

I also made a scrolling checkerboard scene to test tearing and smoothness.

For now I am interested only in video, but I may do similar work concerning audio later.

I measured the input lag with only one monitor, plugged into my desktop computer, on Windows.

On a 2nd monitor, I don't see tear lines when there should be some.

Same with my laptop on its own monitor, with either GPU.

On GNU/Linux, I get a tear line that moves depending on where the mouse cursor is, even with V-Sync on. Also, it detects triple buffering, and I don't know how to turn it off.

I don't own a VRR monitor, so I haven't researched how to make the best use of one.

The source code is on GitHub: https://github.com/Sentmoraap/doing-sdl-right.

If you read it, you may wonder why I render the frame to an offscreen buffer in a different context, and then render it to the main window. I want to test this for games made for a specific resolution and then scaled to fit.

I also want to be able to destroy and recreate a window, so I want to recreate a context without having to reload everything. I want to recreate the window because I had problems with just switching modes and moving it.

Here is what I concluded from my tests:

- my monitor has approx. 17 ms of input lag; (EDIT: 17 ms, not 25. I forgot to remove 8 ms from the random times compared to the scanout)

- interpolation does not seem to be a big deal in terms of input lag. So loose timestep is not necessary, and not desirable considering the implications of a variable timestep;

- after several fixes, it seems that my predictive waiting works. There is not much more average lag than with V-Sync off when the game update rate is the same as the display refresh rate;

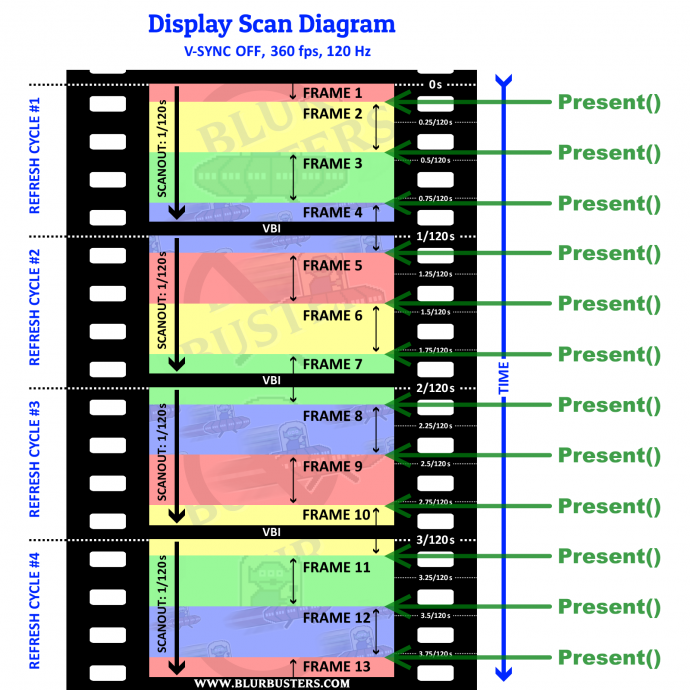

- sometimes it looks like V-Sync OFF has significantly less lag, when in theory it should not. It may be because of how the tear lines are offset from the VBL, so that on average the bars are drawn earlier in the refresh cycle;

- when the program is waiting, it should sleep, to prevent the scheduler from ending its time slice at the wrong time and making it miss the VBL;

- the tear lines are jittery, and they slowly move upwards when they should stay in the same place. The VBL is longer than the jitter time.
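
To make the predictive waiting idea concrete, here is a minimal sketch of how the wake-up time could be computed. It is an assumption about the general technique, not the implementation in the repository: `frameStartTime`, its parameters and the safety margin are hypothetical, and all times are plain seconds on some monotonic clock.

```cpp
#include <cassert>
#include <cmath>

// Returns the time at which to start a frame so that it finishes just before
// a vertical blank. All arguments are in seconds on the same monotonic clock.
//   now            current time
//   lastVblank     timestamp of a previously observed vblank
//   period         display refresh period (1 / refresh rate)
//   renderEstimate expected time to update and render one frame
//   margin         safety margin covering timer and scheduler jitter
double frameStartTime(double now, double lastVblank, double period,
                      double renderEstimate, double margin) {
    assert(period > 0.0 && now >= lastVblank);
    // First vblank strictly after `now`.
    double periodsAhead = std::floor((now - lastVblank) / period) + 1.0;
    double nextVblank = lastVblank + periodsAhead * period;
    double start = nextVblank - renderEstimate - margin;
    // If it is already too late for that vblank, aim for a later one.
    while (start < now) {
        start += period;
    }
    return start;
}
```

The program would then sleep until the returned time (for example with `std::this_thread::sleep_until` after converting it to a clock time point) instead of spinning, sample the input, and render. Since sleep calls can overshoot, the margin has to be tuned against the measured jitter: too small and frames miss the VBL, too large and the lag saved by waiting is lost.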

It looks like I have figured out how to get less input lag with V-Sync ON on non-VRR monitors. I may have made some mistakes. I have attached a Win32 build and a spreadsheet with my measurements.

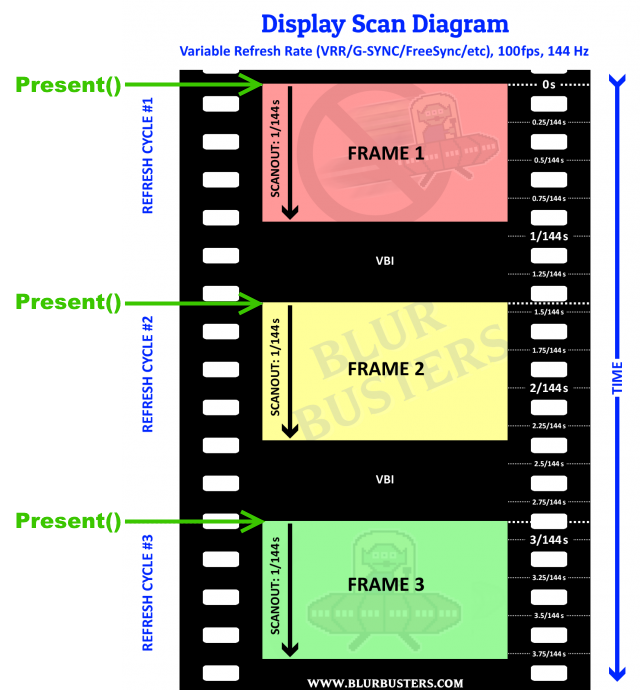
