missmah wrote: ↑Yesterday, 09:24

Since OLED is self emissive, why would strobing be the approach? (besides being easy to insert black frames in software/firmware)?

Strobing is something that can be done at fantastic quality levels in software, once the hardware has sufficient brute (high enough Hz), at least for most end-user motion cases. This gives the end user control over display limitations, as long as the display has enough brute to allow software-based strobing (via black frame insertion).

Please note that on Blur Busters, one needs to disambiguate whether you mean strobing as generic impulsing of any kind (flashing, BFI, backlight) or strobing specific to backlight flashing only. Either way, the pulsewidth math and physics are identical.

However, strobing can achieve sub-1ms MPRTs on LCDs, and the difference can be human visible (0.5ms vs 1.0ms) in extreme cases such as the 3000 pixels/sec TestUFO Panning Map Readability Test. At 3000 pixels/sec, 1ms equals 3 pixels of display motion blur, according to the math of Blur Busters Law, which is a simplification of MPRT(0%->100%).
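To make the arithmetic concrete, here is a minimal Python sketch of the Blur Busters Law as stated in this post (1ms persistence = 1 pixel of motion blur per 1000 pixels/sec). The function name `motion_blur_px` is made up for illustration, and the sketch ignores GtG and anything else beyond the simplified law.

```python
def motion_blur_px(persistence_ms: float, speed_px_per_sec: float) -> float:
    """Simplified Blur Busters Law: 1 ms of persistence gives
    1 pixel of motion blur per 1000 pixels/sec of motion."""
    return persistence_ms * speed_px_per_sec / 1000.0


# Compare the two persistence values from the post at 3000 pixels/sec.
for persistence_ms in (0.5, 1.0):
    blur = motion_blur_px(persistence_ms, 3000)
    print(f"{persistence_ms} ms at 3000 px/s -> {blur} px of motion blur")
```

At 3000 pixels/sec, halving persistence from 1.0ms to 0.5ms halves the blur from 3 pixels to 1.5 pixels, which is why the difference can show up in a readability test.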

Also, on this topic... TestUFO motion speeds were intentionally designed to turn MPRTs into something easily human interpretable. 960 pixels/sec is the closest number to 1000 that is still divisible by common refresh rates (60, 120, 240, 480), which is nice for explaining the Blur Busters Law of "1ms persistence = 1 pixel of motion blur per 1000 pixels/sec" and turning it into something human visible and human explainable in see-for-yourself TestUFO links.
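A quick way to see why 960 works better than 1000 is to compute the per-frame step at each refresh rate. This is only an illustrative sketch; the helper name `pixels_per_frame` is hypothetical, and the refresh rates are the four listed above.

```python
REFRESH_RATES_HZ = (60, 120, 240, 480)


def pixels_per_frame(speed_px_per_sec: int, refresh_hz: int) -> float:
    """Distance an object moves between consecutive refresh cycles."""
    return speed_px_per_sec / refresh_hz


for hz in REFRESH_RATES_HZ:
    step_960 = pixels_per_frame(960, hz)
    step_1000 = pixels_per_frame(1000, hz)
    print(f"{hz} Hz: 960 px/s -> {step_960} px/frame, "
          f"1000 px/s -> {step_1000:.3f} px/frame")
```

At 960 pixels/sec the step is a whole number of pixels at every listed refresh rate (16, 8, 4, 2), while 1000 pixels/sec gives fractional steps at all four.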

OLED black frame insertion (whether hardware-based or software-based) cannot do the sufficiently short pulsewidths necessary to push MPRTs all the way down to the human visibility noise floor.
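As a rough sketch of the software-based case only: assume software BFI can blank whole refresh cycles and nothing shorter, so the shortest possible pulse is one refresh period. That assumption, and the function names below, are mine for illustration; hardware-based BFI and pixel response are not modeled.

```python
def software_bfi_persistence_ms(refresh_hz: float, visible_cycles: int = 1) -> float:
    """Persistence when a frame stays lit for `visible_cycles` whole
    refresh cycles and the remaining cycles are black frames.
    Assumption: software can only blank entire refresh cycles."""
    return visible_cycles * 1000.0 / refresh_hz


def refresh_hz_needed(target_persistence_ms: float) -> float:
    """Refresh rate at which one whole refresh cycle equals the target pulse."""
    return 1000.0 / target_persistence_ms


print(f"240 Hz, 1 visible cycle: {software_bfi_persistence_ms(240):.2f} ms")
print(f"Refresh rate needed for 0.5 ms: {refresh_hz_needed(0.5):.0f} Hz")
```

Under that assumption, a 240Hz panel bottoms out around 4.17ms, and a 0.5ms pulse would need a refresh period of 0.5ms, i.e. 2000Hz.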

missmah wrote: ↑Yesterday, 09:24

It would seem that a scanning display, where each pixel is illuminated only for a short time and then the illumination is turned off would make much more sense.

Real life does not work that way.

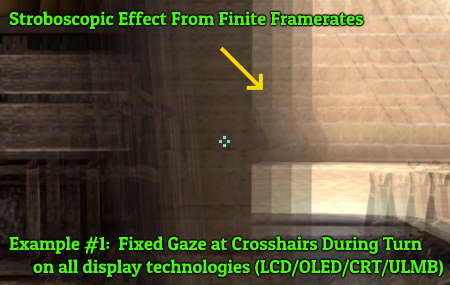

There are permanent artifacts if you don't continuously shine a pixel, see The Stroboscopic Effect of Finite Frame Rates. To buttress my reputation for unfamiliar new forum members, I am also in 35+ peer reviewed papers, see blurbusters.com/area51 -- I have been known to be a good teacher of this topic matter.

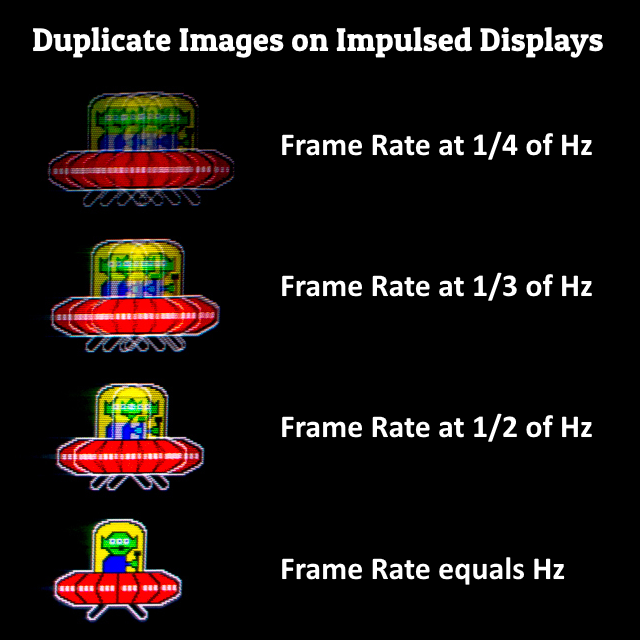

Impulsing of any kind (pixel-level, strobing, black frame insertion, etc) is fantastic for motion blur reduction, as long as it is done at only one pulse per refresh cycle. However, it is not appropriate for all use cases. Some people still get eyestrain from 1000Hz PWM due to PWM artifacts (duplicate image artifacts).

Homework exercise, look at 1st UFO then look at 2nd UFO:

(click for full screen version and then maximize window)

And also vastly educational, see the Variable Speed Animation - Stutter to Blur Continuum (stare only at the 2nd UFO for 30 seconds) to witness that stutter is the same thing as persistence blur, just at different non-erratic stutter frequencies, much like a slow/fast music string which may visibly shake or simply blur.

Brute framerate & brute refresh rate on sample and hold displays is literally the only way to perfectly match real life for all four situations for as close to five-sigma of the human population as possible:

1. stationary eyes, stationary imagery

2. stationary eyes, MOVING imagery

3. MOVING eyes, stationary imagery

4. MOVING eyes, MOVING imagery

Displays behave differently depending on whether your eyes are stationary or moving, and you can't make 1/2/3/4 match real life perfectly without a flickerless display AND bruting the framerate+refreshrate.

While impulsing is a fantastic solution, it is ultimately a bandaid that doesn't solve-all and fit-all use cases.

missmah wrote: ↑Yesterday, 09:24

I'm guessing columns or rows are driven as groups to simplify things, so there's not going to be per-pixel addressability, but it shouldn't take much for a panel manufacturer to build a display with a self-decay to black function on a per-row basis, to set persistence (persist until next update, or persist for some set time)...

It will more than quadruple the cost, because lithographing those extra components/transistors, while voltage-balancing them perfectly, is an engineering challenge.

Do you know how much realtime Ohm's Law compensation we had to do in active matrix LCDs to make sure the upper-right corner pixel had the same LCD GtG as the pixel in the bottom-right corner? The lengths of the microwire grids mean GtG varies a lot and crosstalks badly, especially back in the passive matrix days. And with 4K screens and extreme contrast ratios, it is quite a tall order to do it on what used to be 5-figure-priced LCD glass that is now only priced at 3 figures.

And can you imagine the horrendous increase in complexity of the real time Ohm's Law compensation for self-emissive pixels? Those pixels consume ginormously more power, creating more distortions in Ohm's Law in those same microwires, requiring more advanced computes (in an ASIC) rather than things that could be done with simpler electronics...

We haven't dived into the rabbit hole of lifetime candleburn-style counters on a per-subpixel basis used for image retention compensation algorithms, where an internal LUT keeps track of the aging of the subpixels based on how brightly/dimly the subpixels are lit...

Or the complex LUTs for HDR tonemapping, above the LUTs used for overdrive algorithms (even OLEDs often still use overdrive, although much more lightly than LCDs need) as well as other picture adjustments. The TCON/scaler is literally a supercomputer nowadays, and we consumers expect to pay less than $1000 for it. Some of them are doing over a trillion operations per second -- this is because scaler/TCONs in modern displays are doing over a billion subpixels per second (3840 x 2160 x 3 color channels x 120 refresh cycles per second), and some of the more advanced ones (4K120+ videophile displays with lots of features) get an average of over a thousand math ops per subpixel per refresh cycle. How do you propose to make that cheap? Any modern screen you're reading this post on is currently a gigantic engineering miracle, even in a bottom-barrel $150 Android phone.
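The throughput figures above can be checked with a few lines of Python. The resolution, channel count, and refresh rate come straight from the post; `OPS_PER_SUBPIXEL = 1000` is an assumed floor standing in for "over a thousand math ops", not a measured number.

```python
WIDTH, HEIGHT = 3840, 2160   # 4K panel
COLOR_CHANNELS = 3           # subpixels per pixel
REFRESH_HZ = 120             # refresh cycles per second
OPS_PER_SUBPIXEL = 1000      # assumed floor for "over a thousand math ops"

subpixels_per_sec = WIDTH * HEIGHT * COLOR_CHANNELS * REFRESH_HZ
ops_per_sec = subpixels_per_sec * OPS_PER_SUBPIXEL

print(f"Subpixels per second: {subpixels_per_sec:,}")
print(f"Operations per second: {ops_per_sec:,}")
```

That works out to roughly 3 billion subpixel updates per second and, at a thousand ops each, roughly 3 trillion operations per second.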

So, it's kind of pie in the sky to ask for this cost-quadrupling feature, sadly.

It's much cheaper to invent/fabricate a 2000Hz OLED than to do the feature suggestion...