tygeezy wrote: 20 Jul 2020, 20:43

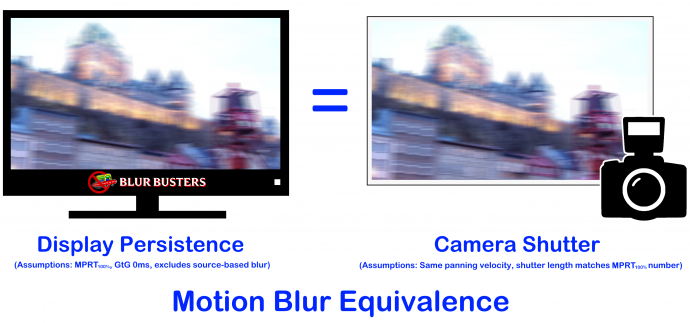

My question is, would it be possible to use AI upscaling to improve motion resolution during camera panning in shooter games?

Short answer: YES

For a theoretical DLSS 3.0, I'd love to see NVIDIA combine the current spatial FRAT algorithm with an Oculus-style ASW temporal FRAT algorithm for non-VR games, to improve the motion resolution of panning, turning, and scrolling on 240Hz+ and 360Hz+ displays.

Frame Rate Amplification Technology (FRAT)

If done properly, high-rate input (e.g. 1000Hz mouse movements) can be fed into the frame rate amplification technology, allowing reprojection to lower input-to-photons lag. That is unlike old-fashioned television interpolation, which increases input lag and generates Soap Opera Effect (SOE) artifacts and parallax edge-movement artifacts. None of that happens when my Oculus Rift amplifies 45fps into 90fps using ASW 2.0, since it reprojects in 3D with the help of the Z-buffer.
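Here's a toy illustration of why the Z-buffer matters, written as a minimal 1D pinhole-camera sketch in Python. It is not Oculus's ASW code: the focal length, image center, and the `reproject()` helper are hypothetical, and it ignores occlusion and disocclusion holes. A pure camera turn moves a pixel to the same new column whatever its depth, but a sideways camera move shifts near pixels further than far ones (parallax), which is the edge motion a depth-blind 2D interpolator has to guess at.

```python
import math

# Hypothetical camera parameters, chosen only for illustration.
FOCAL_PX = 1000.0  # focal length expressed in pixels
CENTER_X = 960.0   # image center column of a 1920-pixel-wide frame


def unproject(x_px: float, depth: float) -> tuple[float, float]:
    """Pixel column + Z-buffer depth -> camera-space point (X, Z)."""
    return (x_px - CENTER_X) * depth / FOCAL_PX, depth


def project(X: float, Z: float) -> float:
    """Camera-space point (X, Z) -> pixel column."""
    return FOCAL_PX * X / Z + CENTER_X


def reproject(x_px: float, depth: float, yaw_rad: float, move_x: float) -> float:
    """Move one pixel of an already-rendered frame to where a new camera pose sees it.

    yaw_rad: how far the camera turned right since the frame was rendered
    move_x:  how far the camera moved right (world units) since then
    """
    X, Z = unproject(x_px, depth)
    X -= move_x  # position relative to the moved camera
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    # Express the point in the turned camera's axes (turning right shifts the scene left).
    x_new = c * X - s * Z
    z_new = s * X + c * Z
    return project(x_new, z_new)


for depth in (2.0, 20.0):
    turned = reproject(CENTER_X, depth, yaw_rad=0.01, move_x=0.0)
    strafed = reproject(CENTER_X, depth, yaw_rad=0.0, move_x=0.1)
    print(f"depth {depth:4.1f}: after turn -> column {turned:.1f}, after strafe -> column {strafed:.1f}")
```

Running it, the turned pixel lands at about column 950 at both depths, while the strafed pixel moves about 50 px at depth 2 but only about 5 px at depth 20. A real reprojector does this per pixel in 2D with full camera matrices.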

Amplifying 100fps into 1000fps (for future retina-refresh-rate displays: blurless sample-and-hold, like ULMB but without strobing) can have less lag than native 100fps, despite the extra processing overhead of reprojection.
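The motion-blur side of this is simple arithmetic. A rough rule of thumb for sample-and-hold displays: each frame stays visible for 1/fps seconds, and your tracking eye smears it across the distance the image moves in that time. The sketch below assumes instant pixel response (0 ms GtG), perfect eye tracking, and a hypothetical pan speed of 1000 pixels/sec.

```python
def persistence_blur_px(pan_speed_px_per_s: float, fps: float) -> float:
    """Approximate eye-tracking motion blur (in pixels) on a sample-and-hold display.

    Each frame stays on screen for 1/fps seconds; an eye tracking the motion
    smears it across the distance the image travels in that time.
    Assumes instant pixel response (0 ms GtG) and no strobing or black frames.
    """
    frame_visible_s = 1.0 / fps
    return pan_speed_px_per_s * frame_visible_s


PAN_SPEED_PX_PER_S = 1000.0  # hypothetical camera pan speed

for fps in (100, 240, 360, 1000):
    blur = persistence_blur_px(PAN_SPEED_PX_PER_S, fps)
    print(f"{fps:>4} fps: {1000 / fps:5.2f} ms per frame, about {blur:.1f} px of motion blur")
```

At that pan speed it works out to about 10 px of blur at 100fps versus about 1 px at 1000fps, which is why the extra frames matter even when they are reprojected rather than fully rendered.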

Old-fashioned interpolation was an ugly black box. The frame rate amplification of the future, which is already happening, uses things like the Z-buffer and input (head trackers, mouse, etc.) sampled at a higher rate than the GPU's full-render frame rate. So, theoretically, adding more "fake frames" can reduce input lag instead of increasing it: no lookahead buffering is needed once high-Hz motion vectors from high-Hz input (and game variables) are fed into a frame rate amplification technology that is less of a black box.
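To make the lag argument concrete, here's a crude timing model in Python, purely an illustration under assumed numbers: 100fps full renders that each take a full 10 ms, 1000Hz mouse polling, a hypothetical 1 ms reprojection cost, and display-side processing ignored. It reports how old the camera pose on screen is for plain 100fps, lookahead interpolation, and input-driven reprojection.

```python
RENDER_INTERVAL_MS = 10  # GPU finishes a full render every 10 ms (100 fps)
RENDER_TIME_MS = 10      # hypothetical: each render takes a full interval (GPU-bound)
INPUT_INTERVAL_MS = 1    # 1000 Hz mouse polling
WARP_COST_MS = 1         # hypothetical cost of one reprojection pass


def pose_age_ms(scheme: str, t_ms: int) -> int:
    """Age (ms) of the camera pose visible on screen at display time t_ms."""
    # Input timestamp of the newest render that has finished by t_ms.
    newest_render_input = ((t_ms - RENDER_TIME_MS) // RENDER_INTERVAL_MS) * RENDER_INTERVAL_MS
    if scheme == "native":
        # Keep showing the newest finished render until the next one lands.
        return t_ms - newest_render_input
    if scheme == "interpolation":
        # A frame between render k and k+1 cannot be built until k+1 is
        # finished, so the smooth output trails by render time + one interval.
        return RENDER_TIME_MS + RENDER_INTERVAL_MS
    if scheme == "reprojection":
        # Warp the newest render to the newest mouse sample taken before the warp starts.
        warp_start = t_ms - WARP_COST_MS
        newest_input = (warp_start // INPUT_INTERVAL_MS) * INPUT_INTERVAL_MS
        return t_ms - newest_input
    raise ValueError(f"unknown scheme: {scheme}")


for scheme in ("native", "interpolation", "reprojection"):
    ages = [pose_age_ms(scheme, t) for t in range(100, 1100)]
    print(f"{scheme:>13}: average pose age {sum(ages) / len(ages):.1f} ms")
```

Under these assumptions it averages about 14.5 ms for native 100fps, 20 ms for interpolation, and 1 ms for reprojection. The caveat is that reprojection only refreshes the camera pose; enemy animation and other in-world motion still update only at the 100fps full-render rate.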

It's easy to predict that NVIDIA is probably already working on this stuff; most NVIDIA researchers like the "Frame Rate Amplification Technology" article.

Adding more "fake frames" isn't bad if they're virtually flawless frames without input lag...

Netflix video already does something similar in 2D with I-frames, B-frames, and P-frames. This is essentially re-architecting the GPU motion pipeline in all three dimensions to reduce the number of original GPU full renders needed for an ultra-high frame rate, using AI + FRAT + reprojection + non-black-box data (high-Hz controller input) to skip the need for a full GPU render of every single frame. New techniques now exist to make these intermediate frames virtually flawless.
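The video codec analogy can be sketched as a frame schedule. In this hypothetical Python helper, "R" marks a full GPU render (playing the I-frame role) and "W" marks a reprojected frame predicted from the last render plus fresh input (roughly the P-frame role). There's intentionally no B-frame equivalent, because B-frames reference a future frame, and waiting for it is exactly the lookahead buffering that adds lag.

```python
def frame_plan(refresh_hz: int, render_fps: int, seconds: float = 1.0) -> list[str]:
    """Label each display refresh as a full GPU render ("R") or a reprojected frame ("W").

    Loosely analogous to a video group of pictures: "R" plays the I-frame role,
    "W" plays the P-frame role (predicted from the previous full render plus
    fresh input). No B-frame equivalent: that would require future frames.
    """
    if refresh_hz % render_fps:
        raise ValueError("sketch assumes the refresh rate is a multiple of the render rate")
    ratio = refresh_hz // render_fps
    total = int(refresh_hz * seconds)
    return ["R" if i % ratio == 0 else "W" for i in range(total)]


plan = frame_plan(refresh_hz=1000, render_fps=100)
print("".join(plan[:20]))  # first 20 refreshes
print(plan.count("R"), "full renders,", plan.count("W"), "reprojected frames")
```

For 1000Hz output from 100fps rendering, that's 100 full renders and 900 reprojected frames per second.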

I would not be shocked if a future version of DLSS, within five years, eventually adds optional temporal frame rate amplification algorithms (similar to Oculus ASW).

Imagine Unreal Engine 5 graphics quality at 1000fps...