Upgraded PC; Motion Blur makes same old games unplayable.

Posted: 19 Feb 2021, 14:41

Hi all,

TL;DR: I upgraded from an Intel Core 2 Quad system to a Ryzen 5 3000-series system, and now there's so much motion blur that my games make me throw up.

I've been building my own gaming PCs (at a slow upgrade pace) for 17 years now.

About a year and three months ago (where does time go? I keep getting distracted from truly solving this problem), I went from this [feel free to laugh, but please keep it to yourself]:

Intel Core 2 Quad Q6600

4 GB OCZ Fatal1ty

ASUS P5QPro MoBo

GeForce GTX 1050 with G-Sync (which I've heard some people describe as a "boat anchor," but I've had good luck with it)

Dell E2215HV (some budget office monitor with a single VGA connection)

To this:

Ryzen 5 3600

16 GB G.Skill RAM

MSI B450M Gaming Plus MoBo

I kept my GTX 1050, planning to upgrade it sooner rather than later to an ATI card (I just seem to have better luck with those, and it should be more compatible with my AMD FreeSync monitor?) the next time there are some sales, but with the latest cryptocurrency mining craze, I think I'm going to be stuck with it for a while.

Sceptre M25 (E255B-1658A-25) LED TN monitor. Advertised: 1 ms GtG, 165 Hz, anti-flicker, pixel overdrive mode, and FreeSync.

The first thing I did upon upgrade was load up some of my old favorites to see just how they'd look with graphics cranked: Spintires/Mudrunner, Farming Simulator 17, Tomb Raider, Railway Empire, Tropico 5, Pillars of Eternity, WW1 Verdun Western Front, Valiant Hearts: The Great War (to see what 2D looked like).

These should be pretty big clues as to what sort of gamer I am and also that my graphical standards are pretty low.

Immediately I noticed that, due to LCD blur, all of these old games were unplayable. I tried playing them at far lower settings than I had used on my antique Intel setup, and still no joy.

One of my main questions is: how can a major computer upgrade on all fronts create so much motion blur? If the blur exists now, shouldn't it have existed before too? By all rights, running all my games at a higher FPS on a higher-refresh-rate monitor should be an improvement, right? Why would a game look just fine on my old setup but be a blurry mess on my new one?

Based on the posts below, one theory I had is that Ryzen 5/Zen 2 just doesn't play well with my Nvidia graphics card?

I tried to do my research and not necro any old threads. My question is, I believe, related to this post (viewtopic.php?f=10&t=7033&p=52619&hilit ... 2619).

A follow-up post (viewtopic.php?f=10&t=7033&p=52619&hilit ...) to that one includes this quote, with my emphasis: "We can keep our heads stuck in the sand and pretend that latency doesn't exist, or we can acknowledge the problem and finally begin to address it. As latency becomes more of a problem nowadays than ever (Windows 10, Ryzen, game developers buffering frames to boost FPS instead of properly optimizing games, bloated electron garbage "software," etc.), more and more people are waking up to the latency question and are questioning why their old and less powerful systems were noticeably more responsive than their brand new systems (quad core Intel owners upgrading to Ryzen systems being a notable example)."

Okay, now here's where I'm at. I don't 100 percent understand what's happening in this older thread and I don't know if it directly translates to motion blur.

I had an aging Intel Core 2 Quad CPU and an antique, junky Dell office LED-backlit LCD panel that ran over a VGA connection. To be honest, I gamed on that for quite a few years and was content. Not blown away, but I was never like "this sux." I never once had a noticeable issue with motion blur. I'm not flush with cash, so I upgrade on sales and in a piecemeal manner. I had an ATI card, but it crapped out on me, so I decided to give Nvidia a try, got a decent upgrade, and was content. Then I caught some more sales and decided it was time to bite the bullet on an entirely new system, so I upgraded to a Ryzen 5 3000-series CPU with a new motherboard and RAM to match, obviously, but kept my Nvidia graphics card and the same old monitor. Immediately I noticed that any motion whatsoever made me sick to my stomach. Games were unplayable. Even scrolling down a simple webpage in a browser would make my eyes hurt and give me a headache, as everything became a blurry mess with even the slightest bump of the scroll wheel.

So I did a lot of research (on a laptop, so I could actually read without throwing up) and decided that, to match my new, admittedly humble system's "power," I had better get something that at least claims to be a fancy-schmancy gaming monitor (Sceptre E255B-1658A-25). It wasn't on the Blur Busters list of best gaming monitors, but it had good reviews and I could afford it (that part is key). So it's LED, has a 1 ms response time, runs at 165 Hz, and has a pixel overdrive mode and some anti-flicker backlight thingy. Finally, it has FreeSync, so I can use G-Sync with my Nvidia card. I also went from VGA to DisplayPort.

To be honest, it did make a little difference. G-Sync is unusable; the motion blur is worse with it on than with it off. Using a web browser is doable, but only if overdrive is on and the refresh rate is set to 165 Hz. It's still not as smooth as my antique Dell monitor on my old system. Games are "playable" now, but too much motion forces me to either let my eyes go out of focus until the movement is over or look away from the screen. Obviously, this makes any game with a lot of action, or anything competitive, absolutely unplayable. A character who stands still every few seconds so he can actually see what he's shooting at (and even then only shoots at things that aren't moving too quickly) is a pretty easy target.

What finally got me up in arms about all this was trying to replay a 20-plus-year-old game: Baldur's Gate II. Scrolling around the map is a blurry, pixelated mess that looks like, honestly, a pile of fresh sick and makes me want to produce one.

I took my new computer innards out and replaced them with my old Intel setup. Zero noticeable motion blur in any game, on either my old monitor or my brand-new one. Now, the old setup isn't powerful enough to play my newest games, but the ones that ran on both looked infinitely better on the older system. I borrowed a friend's Asus gaming monitor, and my new system was still terrible to play on.

I also tested all three monitors on an ancient AMD A8/Radeon 8550G laptop with the ancient games it could play and had acceptable results. I took both of my monitors to my friend's house, and they worked just fine on his gaming system. We also swapped graphics cards and the result was exactly the same: my card works fine in his system, and his looks like trash in mine. Both cards work just fine on my old Intel setup. I also tried a variety of cables, including HDMI and DVI, and even adapters for VGA. The only caveat is that my buddy only has an Nvidia card for testing, and I don't know anyone with an ATI card to test whether it's some sort of architecture compatibility problem.

So, I know for sure these components are good:

Monitors.

Graphics cards.

DP/HDMI/DVI/VGA Cables.

Ancient Intel setup.

I know for sure where the problem originates:

Ryzen 5 3000-series CPU?

New motherboard?

New RAM?

Now, the reason I'm creating this TL;DR post is that I've already tried all the easy stuff:

I use the latest build of Windows 10 with all updates.

Graphics drivers are up to date.

I don't have any weird or unnecessary software installed or running in the background.

I've turned off Windows Game Mode and the Xbox bloatware junk that's on by default.

I've turned off fullscreen optimizations.

I've turned the graphics all the way down in my test games so they run at a super high frame rate, even if they look hideous.

(And before you ask, yes I turn off motion blur in games and some of my test games don't even have it.)

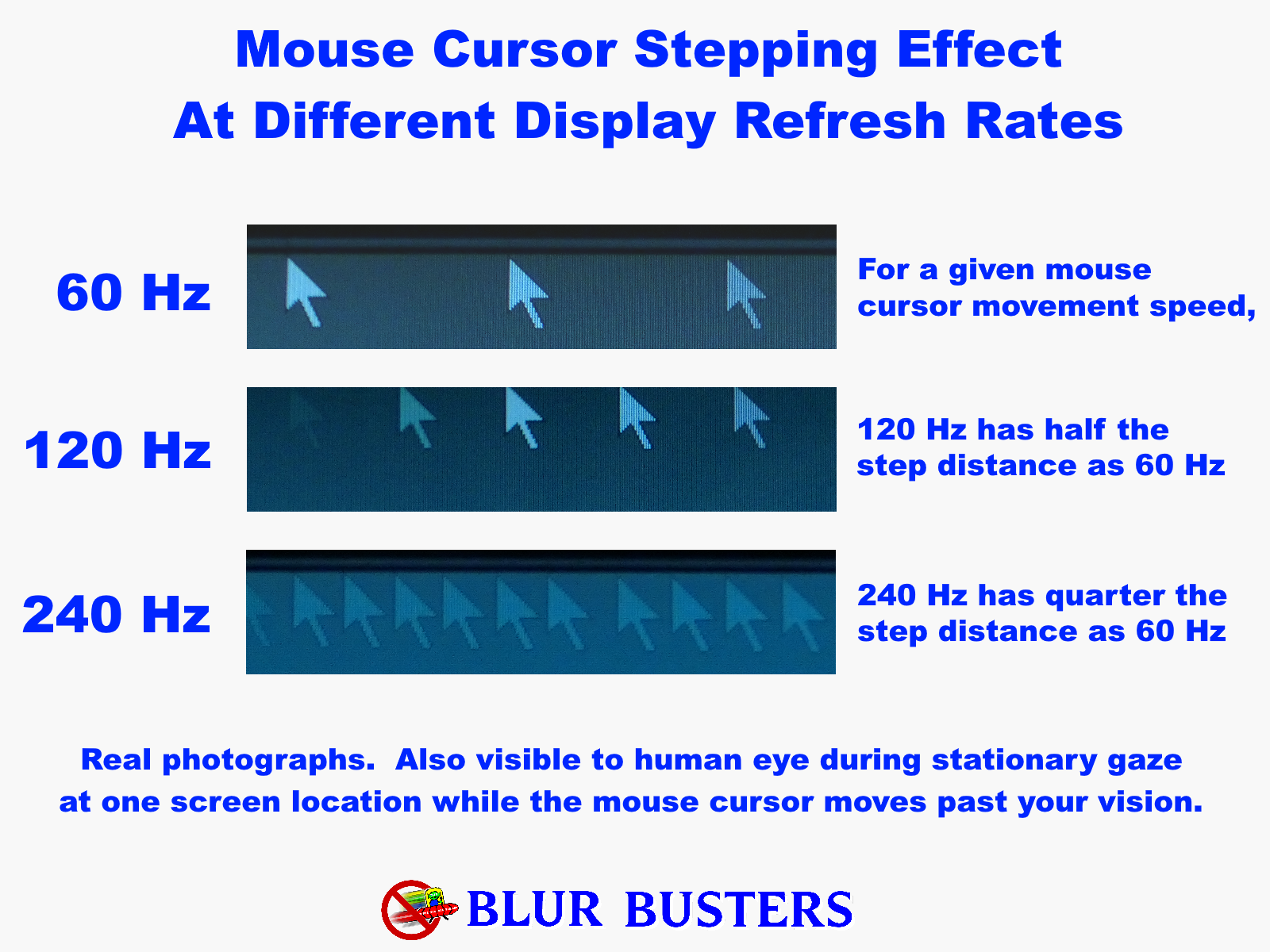

I don't want to upgrade to a 240 Hz monitor, because I'm not certain that would fix this problem. I don't want to replace my perfectly good graphics card, and I don't want to ditch this new Ryzen system and buy a new Intel one, because I can't afford to. And to be honest, it feels like a waste to have all this hardware and not really have played any games on it. I can post my DxDiag output if you guys think it will help.

I basically want to do whatever it takes to play games again. If I have to get into the registry to turn a bunch of stuff off, disable cores, run some software, or use an older driver, I'll do it. I miss games. A lot.

I don't need this PC for anything but gaming, so it doesn't have to be capable of doing anything else.

*le sigh*

Thanks for reading.
