Vleeswolf wrote:it would surprise me if it really was much different than connecting an LED to a mouse button though.

There certainly can be.

In my original forum tests, I validated with my 1000 FPS camera that when tapping into the Razer chroma function using a custom program to light up my mouse scroll wheel LED on click, I got anywhere from 9-10ms of delay from click to light up, as opposed to the external LED that the Chief hardwired to my mouse, which only had a <1ms variance.

Seeing as you're relying on the keyboard's existing software, which is usually not tuned for responsiveness or consistency in this respect (the manufacturer doesn't expect or intend it to be used for this purpose), that's a big unknown, and it is definitely influencing the final results. By contrast, I optimized the light-up responsiveness as much as possible through a custom program for my forum tests, and even those weren't as accurate as my article tests.

Vleeswolf wrote:

My formula is actually 1000/240 = 4.2 ms * frames count from input to output, not sure why you say 10 ms per frame.

Yes, sorry, typo:

*(240 FPS = 4.2ms frametime x frame delay = input lag in ms).

That's at least how I was calculating in my original 240 FPS tests (which I found weren't worth too much where accuracy was concerned, which is why I moved on to a 1000 FPS camera).

So you're saying you multiply 4.2ms by the total number of delayed frames captured?

Because I originally absentmindedly stated "(100Hz = 10ms frametime x frame delay = input lag in ms)" since that's the only way I could get your numbers to line up when comparing your "LAG (game frames)" column numbers with your "LAG (msec)" column numbers. So I now assume the "LAG (game frames)" column numbers must represent the 10ms frametime of 100Hz, e.g. 2.8 frames = ~28ms, right?
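To make the two conversions above concrete, here's a minimal Python sketch. The function names are mine, purely for illustration, and it assumes your "LAG (game frames)" column is simply the millisecond figure divided by the 100Hz frametime, which is my guess at how your numbers line up, not something I've confirmed.

```python
def frametime_ms(rate: float) -> float:
    """Duration of one frame in milliseconds, for a camera FPS or a display Hz."""
    return 1000.0 / rate


def lag_ms_from_camera_frames(camera_fps: float, frames_counted: int) -> float:
    """Input lag in ms: camera frametime multiplied by the frames counted from input to output."""
    return frametime_ms(camera_fps) * frames_counted


def lag_in_display_frames(lag_ms: float, refresh_hz: float) -> float:
    """Express a lag in ms as a number of display refresh cycles (assumed meaning of the column)."""
    return lag_ms / frametime_ms(refresh_hz)


print(round(frametime_ms(240), 1))                    # 4.2
print(round(lag_ms_from_camera_frames(240, 6), 1))    # 25.0
print(round(lag_in_display_frames(28.0, 100), 1))     # 2.8
```

The 6 camera frames in the second call are a made-up count, just to show the multiplication.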

If so, even though it's technically correct, I'd suggest you discard that column, as it confuses things (the start/stop and ms numbers are enough).

Vleeswolf wrote:With 4.2 ms it is possible to measure within 1 frame differences, at least at 100Hz.

And I'm suggesting to you that it may not be. The frame differences you're trying to capture are 10ms per frame (100Hz), and there's only a 5.8ms frametime difference between your monitor (10ms) and your capture device (4.2ms).

So while it can show a difference, it can't show an exact or complete one.

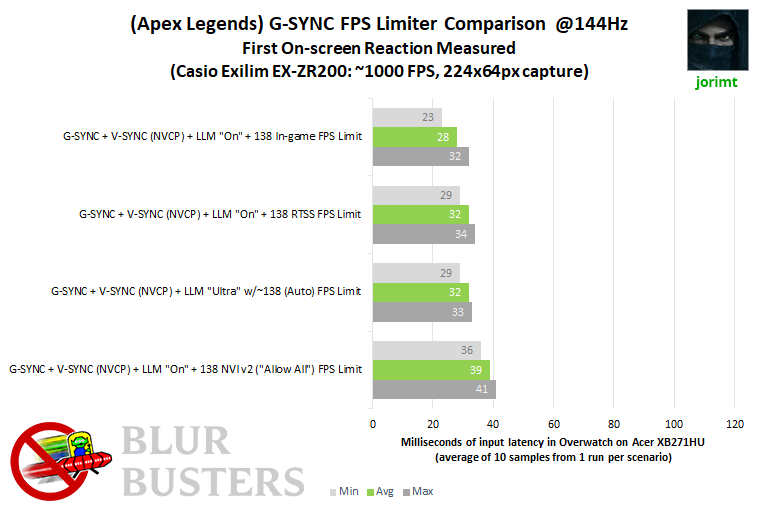

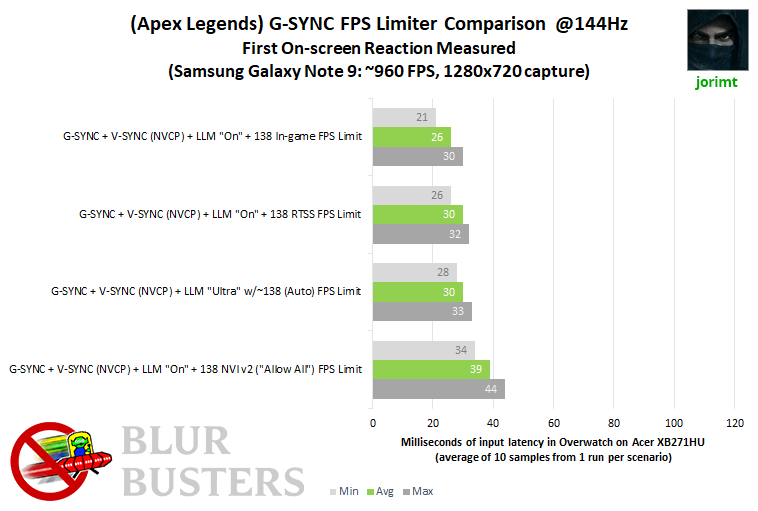

For instance, let's take two of your Apex scenarios (also, was this with G-SYNC? And why do you have V-SYNC listed as "Game" for some and "None" for others when comparing like-for-like scenarios? The scenarios should be identical but for the framerate limiter type for proper comparison, at least if you're trying to isolate the differences between the limiters):

IN-GAME @97 FPS = min: 13ms, max: 21ms, avg: 18.43ms

NULL + NVI @97 FPS = min: 17ms, max: 29ms, avg: 25.6ms

Difference = 7.17ms.

That's not a full frame at 100Hz (10ms), which means, again, that if the difference turns out to be a frame or more with a more accurate setup (1000 FPS), it will show that your camera may only be able to partially capture the lag difference between the two scenarios.
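For reference, the arithmetic behind that comparison can be sketched as below. The helper name is hypothetical, and the only inputs are the two averages from your Apex scenarios plus the 100Hz and 240 FPS figures already discussed.

```python
def difference_in_frames(avg_a_ms: float, avg_b_ms: float, rate: float) -> float:
    """Difference between two average lag figures, expressed in frames at the given rate."""
    return abs(avg_b_ms - avg_a_ms) / (1000.0 / rate)


in_game_avg_ms = 18.43   # IN-GAME @97 FPS
null_nvi_avg_ms = 25.6   # NULL + NVI @97 FPS

diff_ms = abs(null_nvi_avg_ms - in_game_avg_ms)
print(f"{diff_ms:.2f} ms")                                                                     # 7.17 ms
print(f"{difference_in_frames(in_game_avg_ms, null_nvi_avg_ms, 100):.2f} display frames")      # 0.72 display frames
print(f"{difference_in_frames(in_game_avg_ms, null_nvi_avg_ms, 240):.2f} camera frames")       # 1.72 camera frames
```

So the measured gap is under one refresh cycle at 100Hz, and it's being resolved by fewer than two samples of the 240 FPS camera.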

I also forgot to ask: are you capturing with your phone in portrait or landscape? If the former, you're introducing even more potential inconsistencies, as the camera's scanout is now going left/right, as opposed to the display's top/bottom. Either way, the camera and display scanouts aren't synced, which causes further inaccuracy. That's another reason it's important that the capture device's scanout orientation matches the captured display's, and that the capture device's FPS be that much higher than the display's refresh rate, to compensate for the desynchronization of scanouts between the two.

In other words, the lower the refresh rate you're capturing with your 240 FPS camera, the more accurate it will be (e.g. <60Hz), thus the need for a higher FPS capture device when we're talking about <1 frame differences at higher refresh rates.
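A rough way to picture this is to count how many camera frames land inside a single display refresh cycle. This is only a sketch with a hypothetical helper name, and the refresh rates are example values I picked; it ignores scanout desync and orientation entirely, so treat it as a best case.

```python
def camera_samples_per_refresh(camera_fps: float, refresh_hz: float) -> float:
    """How many camera frames are captured during one display refresh cycle."""
    return camera_fps / refresh_hz


for camera_fps in (240, 1000):
    for refresh_hz in (60, 100, 144):
        samples = camera_samples_per_refresh(camera_fps, refresh_hz)
        print(f"{camera_fps} FPS camera @ {refresh_hz}Hz: {samples:.2f} samples per refresh")
```

At 240 FPS you get 4 samples per refresh at 60Hz but only 2.4 at 100Hz, whereas a 1000 FPS camera gets 10 at 100Hz.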

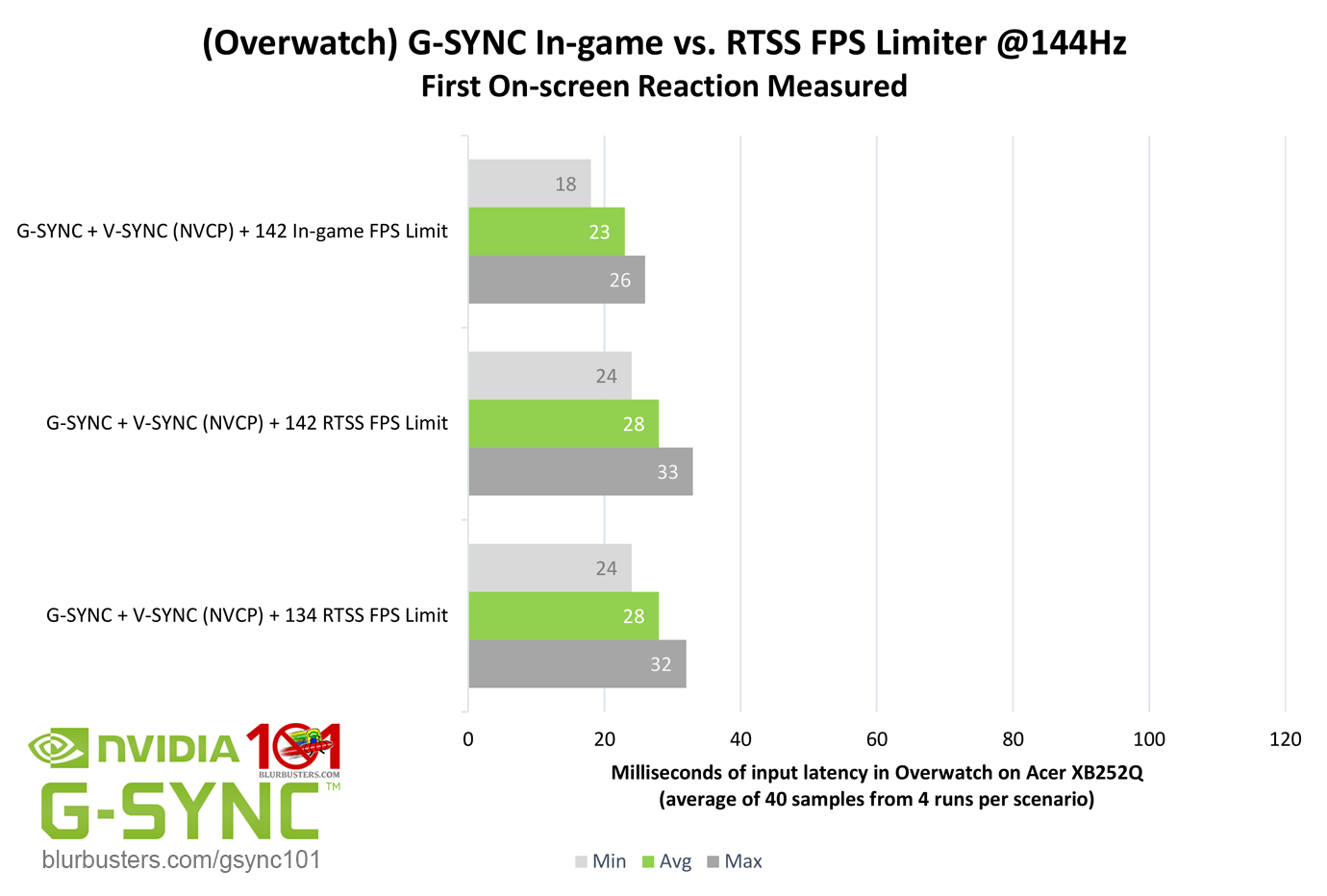

It's the reason I said you should test Overwatch or CS:GO, if possible; Battle(non)sense and I have repeatedly captured data in Overwatch (at 1000 FPS with well practiced and repeatedly validated methods) that you can directly compare, and depending on how well your results match ours, we'll know how capable (or incapable) your setup is.

With the limitations of your equipment, you need a control to get results approaching anything close to accurate, and even then, you may ultimately have to take them with a grain of salt.

Don't get me wrong here, I'm not trying to dissuade you from further testing, I'm trying to help you get it right; there's a reason not many people perform high speed tests: it's absurdly time consuming (it took me over 2 months to manually generate the data in my article, and 2 weeks of that was getting my methodology down) and full of "gotchas" that can invalidate your results in no time if you're not careful (something I know all too well from my own experiences).