[Overcoming LCD limitations] Big rant about LCD's & 120Hz BS

Posted: 29 Jul 2017, 00:00

First, hello everyone. I've registered just to post this.

Where to start... I may be a little biased here, because I come from the CRT era. I saw LCDs come up, thought that no one would want this crap whose only advantage was being less bulky, and yet they did take over our nice CRT's...

Don't get me wrong, I love LCDs for what they allowed, namely laptops, smartphones & other small devices. That's not something CRT's would have done. And, while I hope OLEDs will fully replace LCD's in the not-so-far future, these owe their existence to LCD's anyway.

So I'm not worshipping CRT's. The OLED screen on my smartphone is pretty much perfection. The only thing a CRT still does better is multi-resolution, but with very high pixel densities even that becomes less significant, and even CRT's have a preferred resolution anyway.

So, for years 2 monitors have been sitting on my desktop, a Neovo F-419 LCD, which I like for reading text, & a ViewSonic g90f+, which gives eye orgasms in games.

It's not just the refresh, it's also the slight blur/bleeding, which isn't so nice for text, but certainly better for games. That's also why old arcade games look pretty bad today without a good CRT emulation, blur & visible scanlines make poor & low-res artwork look better. Not saying this is a "good feature" though, and I'm glad my CRT doesn't have visible scanlines. In fact, the LCD I hoped to replace it with, does have more visible scanlines.

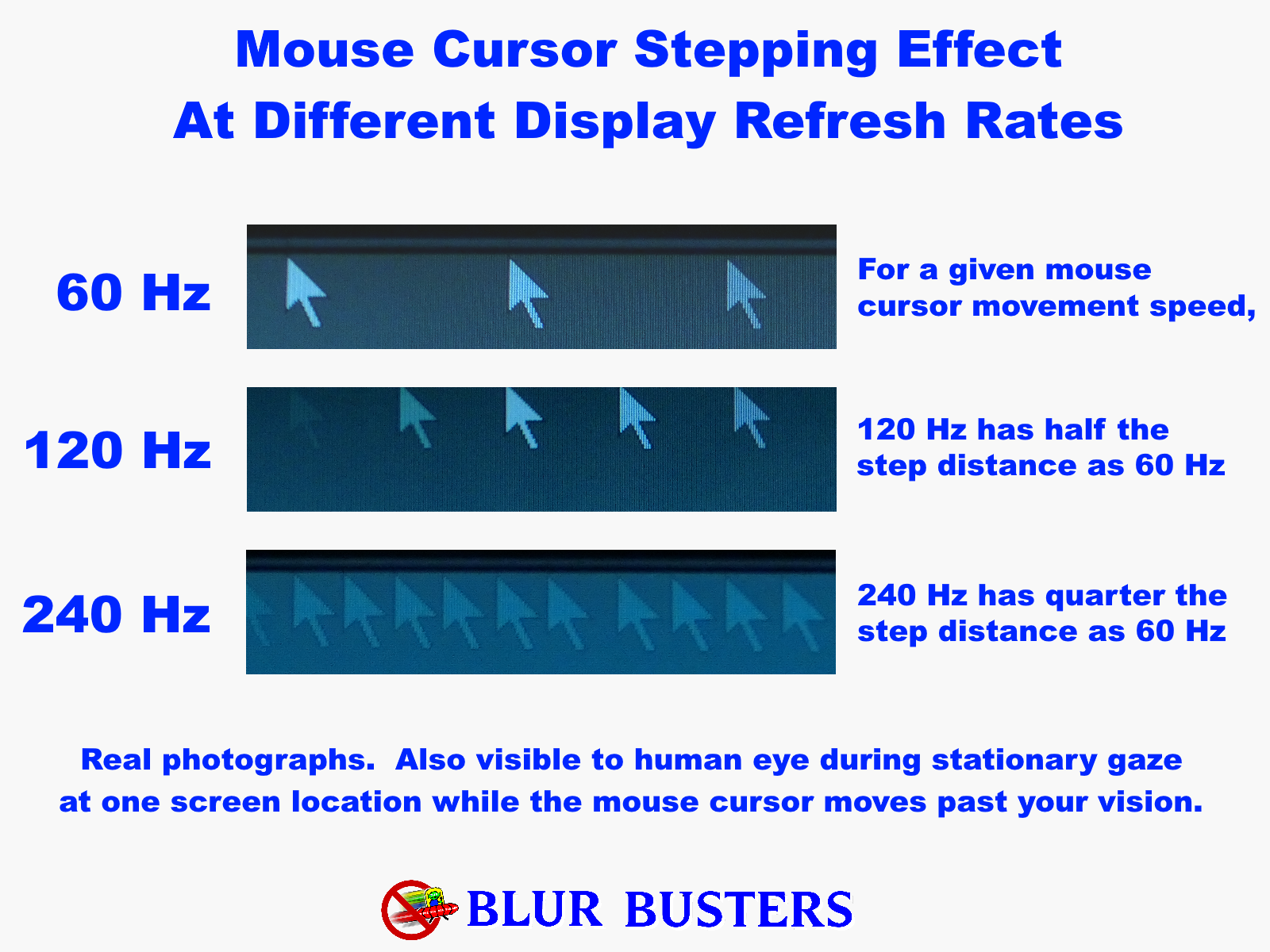

I kept hearing about 120Hz in games, that people could see the difference, all that crap. And I thought that -maybe- I was really missing something here. Monitors evolved, but surely our eyes didn't? While I can clearly see 60Hz blinking in peripheral vision, starting from 70Hz it's pretty much perfection to me. So what would I see above that? I had already tried the 85Hz that my monitor supports, and smooth scrolling didn't feel any smoother.

So I bought a new "gaming" monitor, first assuming that yes, these days we could really game on an LCD as people were saying, and I went for a 120Hz+ one, to see that difference that everyone was claiming to see.

I picked a Samsung CFG73. I wanted a pixel density around 90PPI - I'm not conservative at all about pixel density, but Windows apps are. So for me 90PPI is still better today, 180PPI a good alternative as it allows doubling pixels without interpolation, but anything in-between is a bad idea - which ruled out the DELL S2417DG for me.
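For anyone who wants to check the density figures for a given panel, here is a minimal sketch of the usual PPI calculation (diagonal in pixels divided by diagonal in inches). The panel sizes and resolutions plugged in below are assumptions for illustration; the post itself doesn't state them.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from the native resolution and the diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in

# Panel sizes below are assumptions for illustration, not taken from the post.
cfg73 = pixels_per_inch(1920, 1080, 23.5)    # assumed 1080p panel, 23.5" diagonal
s2417dg = pixels_per_inch(2560, 1440, 23.8)  # assumed 1440p panel, 23.8" diagonal

print(f"CFG73:   {cfg73:.1f} PPI")
print(f"S2417DG: {s2417dg:.1f} PPI")
```

With those assumed sizes the first panel lands close to the 90PPI target, and the second sits in the awkward zone between 90 and 180.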

I then installed it. I like 4:3, but I can understand how every monitor is now 16:9, since pretty much all content is made for that.

Did I see that difference that every gamer claims to see, at 120Hz? Hell yes.............. BUT ON THAT SAME MONITOR!

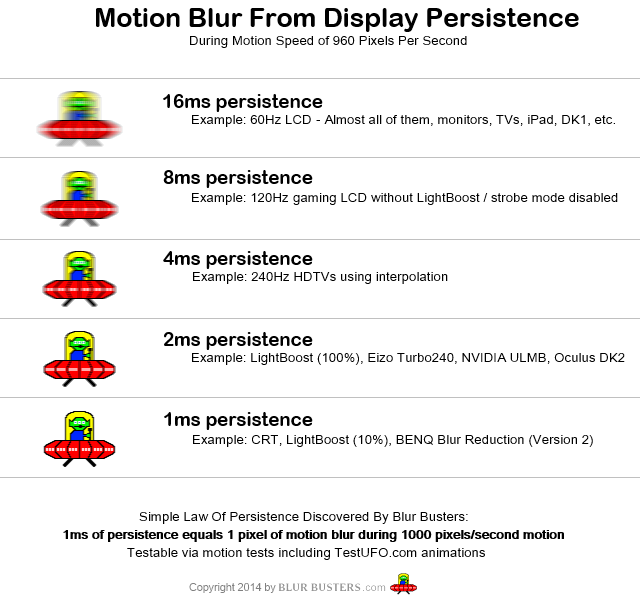

Was it smooth? Yes. Definitely. Not as perfect as on a CRT, there was still slight ghosting, but it was ok, acceptable. But at 120Hz! It was doing nearly the same as a CRT at 60Hz, but starting at 120Hz! I was at least hoping that the monitor would have given the same result as a CRT at 60Hz, and that I would have discovered the magic of 120Hz that everyone was raving about. BUT NO!

So what was in it for me? Nearly the same as I already had on my CRT, except that to get this, my graphics card had to work twice as hard to produce twice as many images. GREAT.

I just don't get it. How is this a technical evolution? There is no need to hype 120Hz. Our eyes don't need it.

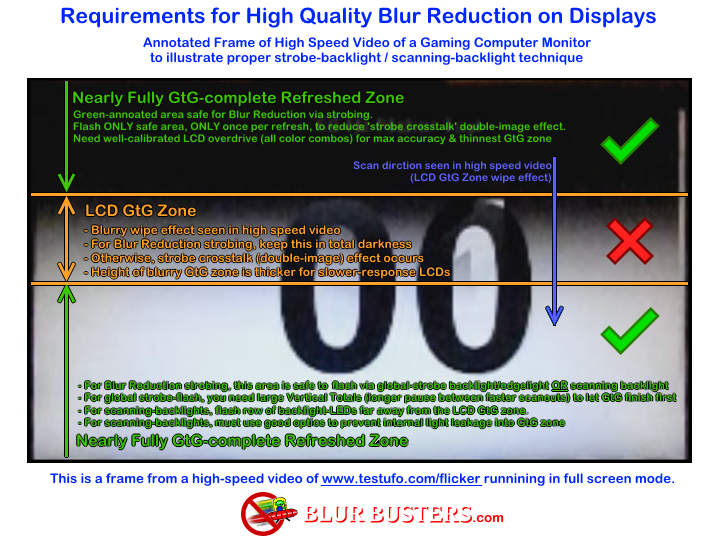

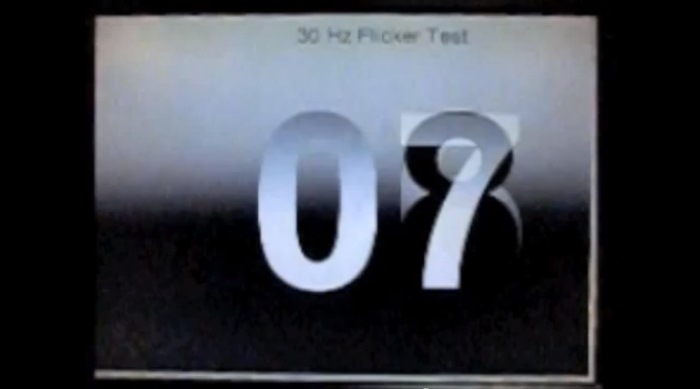

From what I understood, the only reason an LCD still can't do as well as a CRT is that it can't be as bright? That is, it would have to show a very bright image for a very short time, and let it fade out until the next frame. But since it can't be that bright, black frames are inserted at twice the rate instead, so the source has to spit out twice as many images, and these can't just be the same image shown twice, am I right?
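To put rough numbers on the "very bright image for a very short time" idea, here is a small sketch using the common persistence rule of thumb: when the eye tracks a moving object, the perceived smear is about the distance the object travels while the frame stays lit. The scrolling speed and the 2 ms flash are assumed values, and the model ignores pixel response time, so take it as an approximation only.

```python
def blur_px(speed_px_per_s, persistence_ms):
    """Approximate eye-tracking blur: distance the image moves while it stays lit."""
    return speed_px_per_s * persistence_ms / 1000.0

def hold_time_ms(refresh_hz, lit_fraction=1.0):
    """How long each frame stays visible; lit_fraction < 1 models black frames or strobing."""
    return 1000.0 / refresh_hz * lit_fraction

speed = 960  # px/s, hypothetical scrolling speed

cases = {
    "60 Hz, full hold": hold_time_ms(60),
    "120 Hz, full hold": hold_time_ms(120),
    "120 Hz, black half the time": hold_time_ms(120, 0.5),
    "60 Hz, 2 ms flash (assumed CRT-like)": 2.0,
}
for name, ms in cases.items():
    print(f"{name}: {ms:.1f} ms lit, ~{blur_px(speed, ms):.0f} px of blur")
```

Under these assumptions, doubling the refresh rate only halves the smear, while a short flash at 60Hz cuts it much further, which matches the impression that the LCD needed 120Hz to get near what the CRT did at 60Hz.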

I only got this recently. I thought that black frame insertion really allowed an LCD to behave like a CRT, at 60Hz.

Well that just sucks. But if this is right, it means that this is also a problem for OLEDs. Well...

In conclusion, to me that 120Hz crap is pure hype, it's only a little step forward after the large step backwards that the LCD was, and for a gamer, a CRT is still much better.

It would be interesting to see 120Hz on a CRT, though. Perhaps that would be different, because after all, more images are produced, thus the result should be closer to real motion blur. I haven't noticed this on the CFG at 120Hz, though. To me the result was simply the same as on my CRT at 60Hz.

And something I also haven't understood is why graphics cards aren't able to emulate refresh rate scaling. My NVidia is already capable of fake resolution, rendering higher and downscaling afterwards. I don't see the problem with the graphics card reporting 4x the refresh rate, blending the 4 images together, and outputting that. Or maybe it has been tried and it looks bad? Maybe it's a naïve thought, but it makes sense that, while we shouldn't need monitor refresh rates above 70Hz, the rendering framerate would benefit from being higher, or well, infinite. I dislike motion blur in games, but those are post effects working from too low a framerate, so they blur too visibly. But imagine a 700Hz framerate, blending (simple or advanced, but you can't really do miracles out of stills), and output at 70Hz: why wouldn't this give good results?
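The "simple" blending idea can be sketched in a few lines: render several sub-frames per displayed frame and average them per pixel. This is only a toy illustration on a one-line "screen"; `render_dot` and `blend_subframes` are made-up names, and a real implementation would run on the GPU rather than on Python lists.

```python
def blend_subframes(subframes, factor):
    """Average every `factor` consecutive sub-frames into one output frame.

    Each frame is a flat list of pixel intensities; leftover sub-frames
    that do not fill a whole group are dropped.
    """
    blended = []
    for start in range(0, len(subframes) - factor + 1, factor):
        group = subframes[start:start + factor]
        blended.append([sum(pixel) / factor for pixel in zip(*group)])
    return blended

def render_dot(position, width=8):
    """Hypothetical renderer: a single lit pixel on a one-line screen."""
    return [1.0 if x == position else 0.0 for x in range(width)]

# A dot moving one pixel per sub-frame, rendered 4x faster than the output rate.
subframes = [render_dot(p) for p in range(8)]
for frame in blend_subframes(subframes, 4):
    print(frame)
```

Each output frame shows the dot smeared evenly over the four positions it crossed, which is the kind of motion trail the 700Hz to 70Hz idea would produce with plain averaging. Whether that looks good on a given display is a separate question.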

Side note: I've already returned the CFG73, because it's buggy (the monitor hangs [for real] after power saving). I went for the CFG73 instead of the CFG70 to be on the safe side; apparently it was a bad idea.

Also, the bad "text clarity" that this monitor was reported to have, is real. And it's pretty bad, really not acceptable to me. Draw a perfectly antialiased disk, the lower half is all blurry. And this monitor was praised for its image quality?

/end of rant
