BurzumStride wrote:You guys made a valid point about producers meeting the demands of the average consumer. Before reading this I have not even dreamed of seeing non-pixelated 1000hz 1000FPS anytime soon, but with framerate amplification technologies' improved GPU frame output, the high hertz approach could appeal to a broader crowd than BlurBusters and Input lag purists such as myself (I officially coin that term haha).

Four years ago, I did not even dare think 1000fps at 1000Hz was going to be realistic within our lifetimes.

Now I've realized it's become very realistic in less than 10 years, at least for high-end gaming monitor territory. Experimental 1000Hz displays are currently running in laboratories around the world, and some are actually now being sold for laboratory use (ViewPixx 1440Hz DLP) -- and since successful homebrew 480 Hz happened (Making of story) -- we now see 1000fps@1000Hz (lagless & strobeless ULMB!) becoming a reality within a decade.

Blur Busters coverage will be increasingly louder in the coming few years, to help compel GPU and monitor manufacturers to work towards this goal. We'll probably reach the point where we'll begin gently shaming the websites that say 240 Hz and 480 Hz are not important -- there are many of those.

It's necessary for the holy grail of strobeless ULMB and blurless sample-and-hold, reaching closer and closer to a real-life display that has no motion blur above-and-beyond human eye limitations.

It'll factor into our upcoming monitor tests, when we publish "Minimum persistence without strobing" benchmarks in a prominent part of our upcoming new monitor-reviews format. The only way manufacturers can reduce persistence (MPRTs) without strobing is via higher Hz, closer and closer to the "blur-free sample-and-hold" holy grail.

Mathematically:

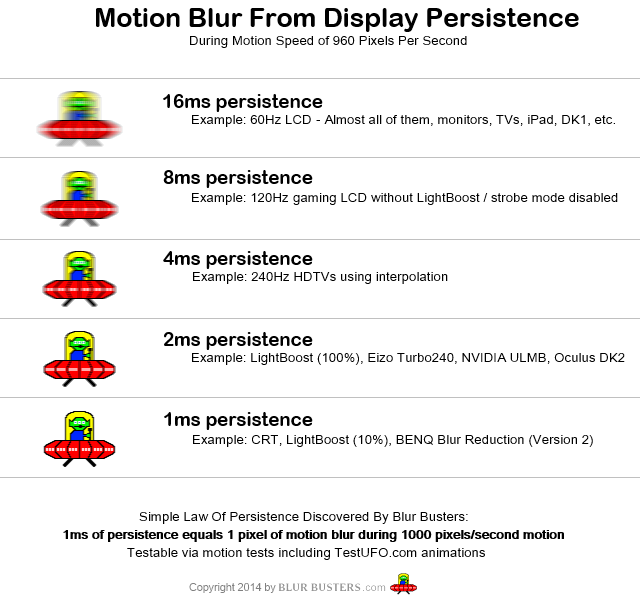

120fps at 120Hz non-strobed LCD = minimum possible MPRT/persistence is 8.33ms

240fps at 240Hz non-strobed LCD = minimum possible MPRT/persistence is 4.17ms

480fps at 480Hz non-strobed LCD = minimum possible MPRT/persistence is 2.1ms

1000fps at 1000Hz non-strobed LCD = minimum possible MPRT/persistence is 1ms

This assumes pixel response is not the limiting factor. The more squarewave you can get LCD GtG pixel response to become (closer to traditional blur-reduction strobing), the more closely the MPRT measurement scales linearly with refresh cycle time (i.e. it halves each time the refresh rate doubles).
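The figures above are simply one second divided by the refresh rate. Here is a minimal sketch of that arithmetic, assuming frame rate equals refresh rate and pixel response is instantaneous; the function name `min_persistence_ms` is only an illustrative choice, not anything from a real tool.

```python
def min_persistence_ms(refresh_hz: float) -> float:
    """Minimum non-strobed MPRT/persistence in milliseconds.

    Assumption: fps == Hz and GtG pixel response is not the limiting
    factor, so each frame stays visible for exactly one refresh cycle.
    """
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz


for hz in (120, 240, 480, 1000):
    # Printed to two decimals; the list above rounds more loosely in places.
    print(f"{hz}fps at {hz}Hz -> {min_persistence_ms(hz):.2f} ms")
```

Doubling the refresh rate halves the result, which is why each step up in Hz is the only non-strobed route to lower persistence.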

Today, the only way manufacturers achieve 1ms MPRT (not GtG) is via strobing. When you see a manufacturer mention "MPRT" along with "1ms", that's the strobed measurement.

BurzumStride wrote:Granted, I do not know much about the frame-time limitations, and how difficult it may be to get over the final 4.17ms to 1ms gap due to things like the time it takes for the CPU and GPU to communicate etc, so please correct me if I am wrong.

My feeling is that it is probably okay to put a small amount of input lag (e.g. 2ms) into the whole pipeline if there's a huge benefit such as converting 100fps->1000fps.

Ideally, the fully rendered frames should be delivered laglessly, with the additional frames inserted laglessly in between.

To help reduce artifacts, the engine & GPU can communicate partial data about intermediate frames (not for rendering, but for better reprojection). You might have only 100 position updates per second, but the game engine could deliver 1000 low-resolution geometry positions per second (e.g. collisionbox granularity), with the GPU doing lagless geometry-aware interpolation via various tricks (multilayer Z-Buffers and other depth buffers) to allow successful artifact-free occlusion-reveals (objects behind objects) during lagless interpolation techniques such as time warping, reprojection, etc. Lots of researchers are working on this as we speak, and probably lots more in secret laboratories at places like NVIDIA or AMD.

Eventually, we'll have detailed enough buffers to allow things like artifact-free object rotations and parallax effects (artifact-free obscure/reveal effects) during some future form of frame rate amplification technology. There are many ways to do this with less GPU horsepower than a full, complete, polygonal scene re-rendering.

That's what researchers are doing, thanks to virtual reality making it critical. Any company that does not do this risks falling behind and losing shareholder money (as the VR and eSports industries rapidly grow beyond them in 10 years, etc).

Single-frame-drop stutters are only mildly bothersome on a computer monitor, but can cause sensitive people to actually puke (real barf) during virtual reality, so completely stutter-free operation is essential in making virtual reality mainstream, especially as more queasy people begin wearing VR headsets.

And some people cannot wear today's VR because of 90Hz flicker (so the only way to get low persistence without flicker is insane frame rates). So over time, VR needs to become more and more 'perfectly stutterfree and real', solving a lot of problems (motion blur, stutters, input lag, etc). Both AMD and NVIDIA have only recently begun to realize this. Give them 10 years, and we'll have plenty of dedicated silicon directly on the GPU devoted to solving the problem of higher frame rates laglessly & artifactlessly without needing full GPU re-renders for every single frame.

It will soon become a priority project at GPU/monitor companies once all the technological jigsaw puzzle pieces fall into place, and it's beginning to happen. Besides -- going towards insanely-high Hertz is currently the only way to pass fast-motion Holodeck Turing Tests anyway, in the "Wow, I didn't know I was wearing a VR headset instead of transparent ski goggles" fashion, where people are not able to tell apart a VR headset and real life. This has to be achieved without motion blur and without strobing.

As explained earlier, going ultra-high-Hz (or using analog motion; going framerateless) is the only way to get closer to a true, real-life display -- because real life doesn't strobe, real life doesn't flicker, real life does not force extra motion blur above your vision limits, real life does not have a frame rate. So ultra-high-Hz is the only easy technological route out of the VR uncanny valley (and it's still difficult).

So, as a result, GPU manufacturers are forced into researching frame rate amplification technologies -- with obvious spinoff applications to cheap 1000fps@1000Hz in a decade or so -- including on desktop gaming monitors (not just VR).

BurzumStride wrote:I would love to be able to predict when we might expect to get 1000fps stable in most games

Two workarounds:

(A) Use a lower frame rate for stability, and framerate-amplify that instead.

We don't necessarily need 1000fps stable for frame rate amplification -- we can just hold a lower number stable, such as 50fps, 100fps or 200fps. Once stability is achieved, frame rate amplification is stable the rest of the way. 100fps stable = can be "frame rate amplified" to 1000fps stable (whether by interpolation, timewarping, reprojection, or other artifact-free geometry-aware lagless technology).

(B) Use variable refresh rate even at 1000Hz

If randomness is still a problem at these levels (even a 1ms object mis-position can still be a visible microstutter in VRR) --

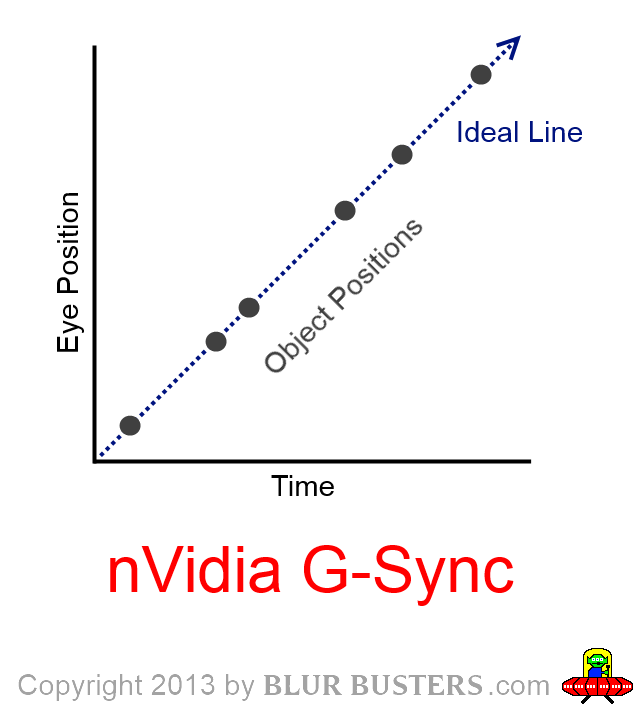

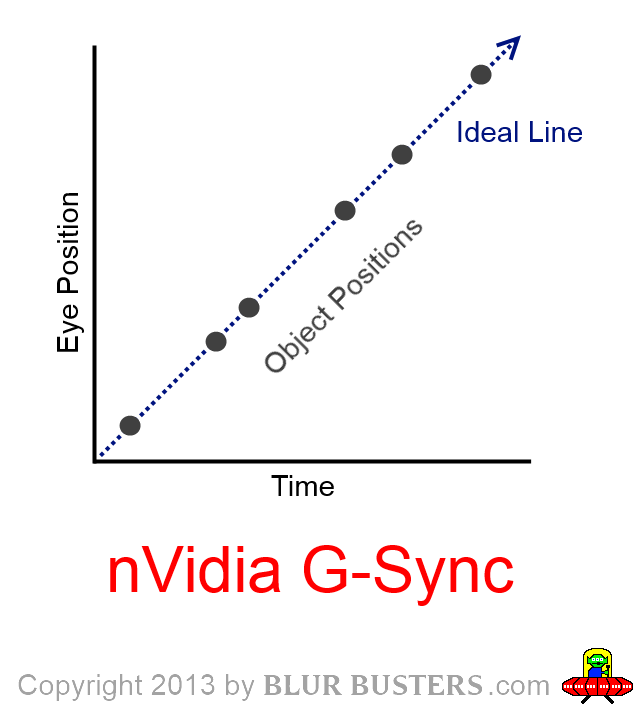

variable refresh rate rate can still be used in the 1000 Hz stratosphere. VRR (FreeSync, GSYNC) can eliminate visibility of random stutter as long as the randomness is perfectly synchronous.

Random frame visibility times are completely stutterless as long as human visibility time is perfectly in sync with gametimes. This is because random edge-vibrations simply blend into motion blur. 90fps->112fps->93fps->108fps->91fps->118fps->104fps->95fps random frametimes look just like perfect VSYNC ON 100fps@100Hz, by virtue of the variable refresh rate technology.
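A toy simulation can illustrate why synchronized randomness is invisible. This is only a sketch under simplifying assumptions: one object moving at constant speed, an eye tracking it perfectly, ideal VRR showing each frame exactly at its gametime, and a fixed-Hz display making each frame wait for the next refresh tick (ignoring frames that collide on the same tick). The names `position_errors_px` and `jitter_px` are hypothetical helpers.

```python
import math


def position_errors_px(frame_rates_fps, speed_px_per_s, fixed_refresh_hz=None):
    """Per-frame offset between where the eye expects the object and where it is drawn.

    fixed_refresh_hz=None models ideal VRR (frame visible at its own gametime).
    Otherwise each frame is delayed until the next fixed refresh tick.
    """
    errors = []
    game_time_ms = 0.0
    for fps in frame_rates_fps:
        game_time_ms += 1000.0 / fps  # gametime of this frame
        if fixed_refresh_hz is None:
            visible_ms = game_time_ms
        else:
            period_ms = 1000.0 / fixed_refresh_hz
            visible_ms = math.ceil(game_time_ms / period_ms) * period_ms
        # The object was drawn for game_time_ms but is seen at visible_ms.
        errors.append(speed_px_per_s * (visible_ms - game_time_ms) / 1000.0)
    return errors


def jitter_px(errors):
    """Stutter shows up as variation in the error, not as a constant offset."""
    return max(errors) - min(errors)


frame_rates = [90, 112, 93, 108, 91, 118, 104, 95]  # sequence from the text above
vrr = position_errors_px(frame_rates, speed_px_per_s=1000)
fixed = position_errors_px(frame_rates, speed_px_per_s=1000, fixed_refresh_hz=100)
print("VRR jitter (px):", jitter_px(vrr))
print("Fixed 100Hz jitter (px):", jitter_px(fixed))
```

In this model the VRR jitter is zero because visibility time and gametime are the same number, while the fixed-refresh case shows several pixels of varying mis-position at the same frame times.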

As long as the game is very "VRR perfect", it's theoretically possible to have a VRR-compatible frame rate amplification technology. It's much harder probably, but it's not mathematically impossible to combine frame rate amplification technology and VRR simultaneously.

Theoretically, VRR may eventually become unnecessary when well above 1000fps or when we gain better foveal "ultra-high-Hz-at-eye-gaze" rendering tricks. But VRR is still useful at 480Hz and 1000Hz, as even a 1ms microstutter is still human-eye-visible, especially in VR. During 8000 pixels/second eye tracking in a 1-screen-width-per-second 8K VR headset, a single 1ms framedrop (1/1000sec) turns into an 8-pixel microstutter. Small, but still visible to the human eye.
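The 8-pixel figure is just tracking speed multiplied by the timing error. A small sketch of that arithmetic, with `microstutter_px` as a hypothetical helper name:

```python
def microstutter_px(speed_px_per_s: float, timing_error_ms: float) -> float:
    """Size of the visible position jump caused by a timing error.

    Assumption: the eye tracks the motion smoothly, so any timing error
    appears as a displacement of speed * time.
    """
    return speed_px_per_s * timing_error_ms / 1000.0


# 8K-wide screen crossed in one second (8000 px/s), one 1ms framedrop.
print(microstutter_px(8000, 1.0))
```

The same function shows why the problem shrinks but does not vanish: halving the timing error only halves the jump.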

We already know VRR still remains useful in the 1000Hz league. It's low-persistence VRR without strobing.

BurzumStride wrote:If I understand the logic correctly, the jump from generating 240FPS (~4.17ms) to 1000FPS (1ms) will only require a ~3.17ms frame-time decrease. Seeing how the jump from 60 to 120 frames already required an 8.3ms decrease in frame-time, shouldn't the final ~3.17ms step towards 1000 frames be relatively easy?

It doesn't get easier. The mathematical difficulty is something completely different.

From a "keep things artifact-free to the human eye" standpoint, it's theoretically easy if you have geometry-awareness and full artifact-free reveal (parallax / rotate / obscure / reveal effects). Human eyes can still notice things being wrong, even if briefly.

A very good animation demo of this effect is http://www.testufo.com/persistence -- it's very pixellated at 60Hz, doubles in resolution at 120Hz, quadruples in resolution at 240Hz, and octuples in resolution at 480Hz during our actual 480Hz tests.

Turn off all strobing (turn off ULMB), use an ordinary LCD, and then look at the stationary UFO, and then look at the moving UFO.

That's always a full-resolution photograph being scrolled behind slits. The pixellation is caused by the sheer lowness of the refresh rate producing limited obscure-and-reveal opportunities. This horizontal pixellation artifact is caused by low Hz, and is still a problem even at 240Hz.

GPUs will need to be able to avoid these types of artifacts: obscure/reveal artifacts. Frame rate amplification technologies will need to be depth-aware/geometry-aware, with sufficient graphical knowledge of what is behind objects.

It doesn't just apply to vertical lines! It also applies to single side-scrolling objects in front of background -- parallax side effects -- and artifacts around edges of objects -- that currently occur with today's VR reprojection technologies during 45fps->90fps operation.

Or even random obscure/reveals such as seeing through a bush, like running through dense jungles. The limited Hz produces limited obscure/reveal opportunities. Running through a dense jungle and trying to identify objects behind dense bush is much easier in real life. This is because real life has an analog-league of infinite numbers of continual obscure-reveal opportunities that flicker in and out. It all blends better than you could do with a limited-Hz display. The only way to get closer and closer to real life in this respect is ultra-high Hz. Frame rate amplification technologies will need to handle this sort of stuff in at least an acceptable manner (far better than today's reprojectors).

Ordinary interpolators won't successfully guess the proper obscure/reveal effects in between real frames.

But tomorrow, depth-aware / geometry-aware interpolators/reprojectors/timewarpers can potentially fill in the proper obscure-reveal effects (at least "most of the time"), so that you avoid strange effects such as reduced resolution.

Researchers are working on ways to solve this type of problem, so that reprojection/interpolation can be done laglessly with even fewer artifacts -- by skipping full GPU renders for even 80% or 90% of frames -- and using frame rate amplification technologies instead.
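The 80% and 90% figures correspond directly to 5:1 and 10:1 amplification ratios. A short sketch of that relationship, using exact fractions; `skipped_render_fraction` is a hypothetical name introduced only for illustration:

```python
from fractions import Fraction


def skipped_render_fraction(rendered_fps: int, output_fps: int) -> Fraction:
    """Fraction of displayed frames that are NOT full GPU renders.

    Assumption: every fully rendered frame is displayed, and all remaining
    output frames come from frame rate amplification.
    """
    if rendered_fps <= 0 or output_fps < rendered_fps:
        raise ValueError("need 0 < rendered_fps <= output_fps")
    return 1 - Fraction(rendered_fps, output_fps)


for rendered in (200, 100):
    skipped = skipped_render_fraction(rendered, 1000)
    print(f"{rendered}fps -> 1000fps: {float(skipped):.0%} of frames skip a full render")
```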

BurzumStride wrote:Granted, I do not know much about the frame-time limitations, and how difficult it may be to get over the final 4.17ms to 1ms gap due to things like the time it takes for the CPU and GPU to communicate etc, so please correct me if I am wrong.

This isn't the main wall of difficulty at the moment.

There may still be enforced lag from the communications, but the key is that the real frames (e.g. 100fps) would be delivered with no lag relative to today. The difficulty is inserting extra frames laglessly, which is possible with some kinds of reprojection technologies. The even bigger difficulty is to insert extra frames without interpolation artifacts -- by improving the depth-awareness / geometry-awareness of the interpolation technology. Then, assuming you had proper motion vectors (which can continue at 1000Hz from the PC), you can still continuously reproject the last rendered frame, with proper "obscure-and-reveal" compensation.
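To make the "continuously reproject the last rendered frame" idea concrete, here is a deliberately tiny one-dimensional sketch. It assumes a single object with a known, constant motion vector and integer microsecond timestamps, and it leaves out obscure-and-reveal compensation entirely, which is the genuinely hard part. `reprojected_positions` is a hypothetical function, not any vendor's API.

```python
def reprojected_positions(pos_px, velocity_px_per_s, frame_gametime_us, visible_times_us):
    """Extrapolate one object's position from the last fully rendered frame.

    Assumption: the motion vector stays valid between rendered frames.
    No occlusion handling is attempted here.
    """
    return [
        pos_px + velocity_px_per_s * (t_us - frame_gametime_us) / 1_000_000
        for t_us in visible_times_us
    ]


# One 100fps rendered frame at gametime 0, displayed as ten 1000Hz refreshes.
refresh_times_us = [i * 1000 for i in range(10)]
positions = reprojected_positions(0.0, 8000, 0, refresh_times_us)
print(positions)
```

Each refresh shows the object where it should be at that refresh's visibility time, so motion advances in ten small steps per rendered frame rather than one large step.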

That's the specific important technological breakthrough that researchers are currently working on -- and it will successfully allow large-ratio frame rate amplification -- such as 10:1 ratios (e.g. 100fps -> 1000fps frame rate amplification). Successful, reliable geometry-awareness / depth-awareness -- with obscure-and-reveal compensation during reprojection -- will be key to this, and probably germane to the invention of "simultaneously blurless and strobeless" screen modes (blurless sample-and-hold) without needing unobtainium GPUs.

BurzumStride wrote:At the moment, in games like Battlefield 1 it is still very difficult to hit stable 240FPS even with an overclocked 7700k

This is very true. But you can:

1. Cap to a lower frame rate instead for stability and then framerate-amplify from there.

2. Use variable refresh rate during 1000Hz, and use a variable-framerate-aware reprojection algorithm.

So you can do either (1) or (2) or both.

Problem solved, assuming minor modifications to the game engine to give motion-vector hinting to the frame rate amplification technology (GPU silicon).

Developers probably still want to send roughly 1000 telemetry updates per second to the GPU (basically 6dof telemetry, probably at less than 10 or 100 kilobytes per interpolated frame) -- to help the geometry-aware reprojector avoid mis-guessing motion vectors, and to avoid the back-and-forth jumping effects sometimes seen in online gameplay during erratic latencies. The information that helps frame rate amplifier technologies might simply be at depth-buffer-data level or low-resolution hitbox geometry level, and the frame rate amplification technology (reprojector) does the rest, based on data from the last fully-rendered frame. And the last fully rendered frame might need, say, 10% more GPU rendering (not a biggie) to allow extra texture caching to occur to accommodate obscure-and-reveal compensation in succeeding reprojected frames. A small GPU cost, to allow good frame rate amplification ratios (e.g. 5:1 or 10:1).

To accommodate errors in stability, you can timecode everything with microsecond-accurate gametimes, and then simply make sure refresh visibility times stay in sync with gametimes (by using 1000Hz VRR-compatible frame rate amplification technology) and make sure all the reprojected frames are in perfect gametime-sync with predicted eye-tracking positions. Then errors/fluctuations in communications, rendertimes, CPU processing, etc, will be rendered invisible (or mostly invisible), as long as object positions stay within microseconds of refresh-cycle visibility times, even if everything is lag-shifted by a few hundred microseconds to de-jitter "engine-to-monitor" pipeline-flow erraticness (many GPU drivers already do that today to help with frame pacing issues, and this is often increased considerably when running in SLI mode). There are many technological workarounds to compensate for erraticness, none insurmountable -- what's important is that gametime is in really good sync with refresh cycle visibility times. If gametime is erratic, then allow refresh cycles to become synchronously erratic to that, to avoid stutter (in the common traditional "G-SYNC reduces stutters" fashion) -- it still works at the kilohertz refresh leagues -- and still keeps the blurless sample-and-hold (strobeless ULMB/LightBoost) holy grail -- they aren't mutually exclusive.

It can also be a completely different algorithm than what I'm dreaming of.

There are actually huge numbers of ways to potentially pull this off (and we don't know who plans to do what approach) -- but I know this for sure:

Researchers are currently working on this problem, indirectly thanks to billions of dollars being spent on virtual reality research & development -- and technology has finally caught up to making this feasible in the not-too-distant future.

_____

Oculus' timewarping (45fps->90fps) is a very good step towards true lagless geometry-aware frame rate amplification technology. Today, it's a big breakthrough.

But ultimately, it'll only be a Wright Brothers airplane. Tomorrow's algorithms will be far more advanced, allowing much bigger ratios (10:1 frame rate amplification) with virtually artifact-free obscure-and-reveal capability.

All of this will be very, very good towards making 1000fps @ 1000Hz practical by the mid 2020s at Ultra-league details on a three-figure-priced ordinary GPU.