<HOMEBREW MONITOR-HACKING TALK>

Skip if you're not interested in technical chat

In theory, *depending* on how much the monitor's hardware can help dejitter your strobing signals (e.g. whether it can begin a timed pulse for you, can schedule a pulse at the next VBI, or needs an ON-signal + OFF-signal), triggering the pulse from software is likely acceptable. But it's a heavy "depends" -- and there's often no easy way to determine whether the firmware of a specific monitor is capable of helping you.

Strobe Phase precision is less important

The strobe phase can actually jitter a lot (even a +/- 100 microsecond jitter in *starting* the flash did not produce noticeable flicker, at least at high frequencies like 120Hz+) -- as long as the length of the flash remains darn near perfectly constant (same number of photons per second hitting the human eyeballs). But even lengthening/shortening the flash by 10 microseconds will produce noticeable brightness flicker. Note that strobe crosstalk can vibrate upwards/downwards slightly: +/-100us is a 200us amplitude, and 0.2ms/8.3ms is about 1/41 of a screen height of vibration in strobe crosstalk for random +/-100us variations in strobe phase. This is probably not as noticeable nor bothersome as flicker, but it is a subtle side effect that can be noticed at http://www.testufo.com/crosstalk ... it's at least important to mathematically understand vertical shifts in strobe crosstalk relating to strobe phase inaccuracy.

Strobe Length precision is super-important

A 10 microsecond error is very flickery. Consider that many strobe backlights are capable of flashing for 1 millisecond per refresh cycle. That's 1000 microseconds. Now, vary that by 1% -- that's 10 microseconds. A strobe flash of 1000 microseconds versus 1010 microseconds is a 1% difference in the number of photons hitting the human eyeballs, and is almost three shades apart in 8-bit greyscale (0 to 255 -- basically the brightness difference between colors almost three shades apart in the palette). So 10 microseconds is WAY too inaccurate to time a strobe backlight flash -- you gotta aim for sub-microsecond precision for a homebrew strobe backlight flash.
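To make the arithmetic above concrete, here is a minimal sketch that converts a strobe-length timing error into a brightness change. It assumes brightness scales linearly with flash length and maps linearly onto 8-bit levels (no gamma), which is a simplification; the function names are made up for illustration.

```python
def brightness_error_percent(strobe_us: float, error_us: float) -> float:
    """Relative brightness change when a flash of strobe_us is mistimed by error_us."""
    return 100.0 * error_us / strobe_us


def greyscale_shades(percent: float, levels: int = 255) -> float:
    """Rough number of 8-bit shades spanned by a brightness change (linear, ignores gamma)."""
    return levels * percent / 100.0


# Sweep the length error for a 1000 us (1 ms) flash.
for error_us in (1, 10, 100, 1000):
    pct = brightness_error_percent(1000, error_us)
    shades = greyscale_shades(pct)
    print(f"{error_us:>5} us error: {pct:6.1f}% brightness change, ~{shades:.2f} shades")
```

The 10 us row is the case discussed above: a 1% change, or roughly two and a half to three shades.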

So if your monitor's firmware is capable of turning off a flash accurately, you can software-trigger the flashes. But most monitors need the firmware to send both the turn-on signal and the turn-off signal to the backlight. And not all monitor firmwares have direct access to the backlight -- it's very panel dependent.

But a homebrew modder can open up a monitor, and piggyback an Arduino onto the backlight. That's been done before by multiple modders, and quite successfully so. But it's very advanced stuff; it's easier to just buy the Cirthix/Zisworks backlight controller board and retrofit the monitor yourself to have a strobe backlight.

Regardless, USB random jitter is extremely bad -- you will not get the necessary precision from software running in Windows except possibly as a real-time VxD running in the top-priority ring, connected to a direct logic GPIO line (like a parallel port pin) completely bypassing USB/serial ports. This is probably doable from a Raspberry Pi running an RTOS, but it's easy to do with an Arduino -- a $20 Arduino clone is capable of driving a strobe backlight accurately even with a USB connection to the PC. The Arduino can de-jitter as needed.

If you are running software at a very thin OS level (e.g. a dedicated DOS app, or an RTOS like QNX), your existing computer can probably handle it. But not under Microsoft Windows (at least for the strobe flash length part -- that would need to be handled separately from Windows).

Software black frame insertion does not have this problem at refresh-cycle granularity, simply because the refresh cycles are running on an exact schedule (fixed Hz). It's simply framebuffered and the hardware times the refresh cycle exactly. That's why, as long as VSYNC ON is working, the hardware VSYNC is de-jittering perfectly for you, preventing issues.

But when trying to software-control a sub-refresh-cycle black frame insertion directly (via direct backlight control), you do not have VSYNC ON to de-jitter for you. You're completely on your own: no hardware is helping you time the backlight-based blackness anymore.

Bam, you now have almost 4 orders of magnitude more accuracy demanded of your software for software-controlled backlights, versus simple framebuffer-based software black frame insertion. So when trying to get one-quarter persistence (one quarter of a refresh cycle -- 2ms versus the 8.3ms of 1/120sec) by using the backlight instead of software BFI, you now need more than 5000x more timing precision from your software.

Software Precision Requirements

During 120Hz blackness-insertion between visible frames, to reduce motion blur:

Software framebuffer BFI: 8.333 milliseconds (8333 microseconds) since hardware VSYNC ON is helping you

Software backlight driving: 0.001 millisecond (1 microsecond) now becomes necessary

Ouch... about 4 orders of magnitude!

That's 8.333ms (1/120 = 8.333ms) for timing 120Hz software BFI, versus 1 microsecond for timing backlight flashes. Ouch! Almost 10,000x more software precision necessary just to get 4x better persistence (8.3ms full persistence versus 2ms strobed persistence) because you're now suddenly saying goodbye to hardware help ("VSYNC ON" during software BFI). That's the big brick wall you need to jump over, and it's mostly impassable unless you're on an RTOS instead of Microsoft Windows.
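The ratio quoted above can be checked with a couple of lines. This is only a sketch of the arithmetic, using the 1 microsecond backlight target from this post; `precision_ratio` is a name invented for the example.

```python
import math


def precision_ratio(refresh_hz: float, backlight_precision_us: float = 1.0) -> float:
    """How many times tighter backlight timing must be than refresh-granular BFI timing."""
    bfi_window_us = 1_000_000 / refresh_hz  # one refresh cycle, handled by hardware VSYNC
    return bfi_window_us / backlight_precision_us


ratio = precision_ratio(120)
print(f"120Hz: {ratio:.0f}x tighter, about {math.log10(ratio):.1f} orders of magnitude")
```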

It's all fun stuff, but anything done via Windows needs at least *some* help from the hardware, to keep it sufficiently accurate to work very well.

The best the software can do is time the start of strobe flashes, or configure backlight flash timer hardware; basically, you will need a little bit of help from hardware. Things like http://www.testufo.com/refreshrate and http://www.vsynctester.com manage to (over time) successfully extrapolate microsecond precision in refresh rates, but you need that exact precision in realtime, every single refresh cycle. The most Windows may be able to do is use USB (or a DDC/CI connection) to approximately signal the VSYNC timing/phase to an Arduino attached to the monitor backlight. The Arduino would then de-jitter (and filter outliers / missed VSYNC signals from USB jitter, etc) and merrily keep timing the strobe backlight precisely and continuously, especially the strobe lengths. If you strobe a monitor's backlight, make sure you use one of those 32-bit ARM based Arduinos; those easily achieve microsecond precision with good programming.

Ideally, the Arduino should directly monitor the VSYNC in the monitor signal (and that kind of makes the strobe backlight VRR compatible -- variable rate strobing!) but in theory the software can configure the Arduino precisely enough via Windows. So minimally, you need just an attachment to the backlight only, bypassing the monitor motherboard, with no nonstandard taps into video cables or monitor motherboards (thus making it more generic-monitor-mod compatible, in theory). Heck, you can even packetize it via timecoded VSYNC history (microsecond accurate) transmitted as random packets occasionally to an Arduino. The Arduino would then extrapolate a darn-near perfect strobe flash out of it, so in theory you do not need any hardware VSYNC attachments at all. But you still need the Arduino to accurately time the strobe flashes.

BlurBusters originally started because of an Arduino scanning backlight, so we have learned enough to be able to inform you that if you do some of it in software, you still need help from the hardware -- whether as barebones like a GPIO connection to the backlight from an RTOS directly on your PC -- or via Windows configuring an Arduino backlight driver doing the flash timing for you. There are no APIs available to control monitor's backlight flashing at the sub-refresh-cycle level, so this is essentially 100% hack-on stuff if you do DIY strobe backlights.

It's cheaper to mail the monitor ($100 shipment), get someone to add the Zisworks board (a few hundred dollars for materials, labour, and monitor-specific tweaks), and mail the monitor back ($100 shipment), than to pay someone to attempt to do this in 100% software -- it's almost an Apollo mission without an RTOS / without GPIO logic lines / without an external microcontroller (whether existing in the monitor, or a strap-on hack to the monitor backlight). It would take even me several weeks to pull it off under QNX, assuming the motherboard had a microsecond-accurate GPIO line that I can attach to the monitor's backlight. And you wouldn't be able to run any Windows games, since the computer would be running QNX as the microsecond-accurate operating system -- or another RTOS instead. At typical computer programmer rates in North America, all this adds up to many thousands of dollars. Cheaper to buy a high-end GSYNC monitor with ULMB.

So the easy route is to strap an Arduino to the back of the monitor, wire it to switch the backlight/edgelight of the monitor's panel ON/OFF, write a couple hundred lines of Arduino code (for a basic strobe backlight), and only use the Windows side to configure the Arduino parameters (if necessary, even intermittently communicating the current refresh rate, phase and lengths for the Arduino to extrapolate from, and/or communicating timestamps of past VSYNC events for the Arduino to extrapolate a synchronization to, if you're avoiding a VSYNC hardwire to a GPIO pin on the Arduino).
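As a rough illustration of the "extrapolate from timestamped VSYNC history" idea, the sketch below fits a refresh period and phase to sparse, jittery timestamps with an ordinary least-squares line, then predicts a future VSYNC. It is written in Python only to show the arithmetic; a real build would port the same math to the microcontroller. The sample data, jitter range, and function names are assumptions made up for the example, and no outlier filtering is shown.

```python
import random


def fit_vsync(samples):
    """Least-squares fit of timestamp_us = phase_us + period_us * frame_index.

    samples: list of (frame_index, timestamp_us) pairs; may be sparse and jittery.
    Returns (period_us, phase_us).
    """
    n = len(samples)
    mean_i = sum(i for i, _ in samples) / n
    mean_t = sum(t for _, t in samples) / n
    num = sum((i - mean_i) * (t - mean_t) for i, t in samples)
    den = sum((i - mean_i) ** 2 for i, _ in samples)
    period_us = num / den
    phase_us = mean_t - period_us * mean_i
    return period_us, phase_us


def predict_vsync_us(period_us, phase_us, frame_index):
    """Extrapolated timestamp of a given refresh cycle."""
    return phase_us + period_us * frame_index


# Hypothetical packets: every 25th VSYNC at 120Hz, each timestamp off by up to +/-500 us.
rng = random.Random(1)
true_period_us = 1_000_000 / 120
samples = [(i, 5000 + i * true_period_us + rng.uniform(-500, 500))
           for i in range(0, 600, 25)]

period_us, phase_us = fit_vsync(samples)
print(f"fitted period: {period_us:.3f} us (true {true_period_us:.3f} us)")
print(f"predicted VSYNC #600 at {predict_vsync_us(period_us, phase_us, 600):.1f} us")
```

The point of the fit is that per-packet jitter averages out over many refresh cycles, so the period estimate ends up far tighter than any single timestamp.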

</HOMEBREW MONITOR-HACKING TALK>

Blur reduction possible on any monitor via software ?

- Chief Blur Buster

- Site Admin

- Posts: 11653

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: Blur reduction possible on any monitor via software ?

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on Twitter

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

Re: Blur reduction possible on any monitor via software ?

I tried making a user-mode program which turns off the monitor backlight during VBLANK on Linux, but it resulted in extreme flickering (running at 60Hz, since the monitor won't overclock on Linux for some reason).

Would it be possible to run the real time code while Windows / Linux is running ?

At least my monitor lets me have complete control of the backlight from Linux commands.

Re: Blur reduction possible on any monitor via software ?

Windows is not an RTOS, so no. Most variants of Linux aren't RTOSes either.

An RTOS will trade throughput for predictable latency.

Basically you need to guarantee that your code will finish executing before your deadline. You don't always need an RTOS to achieve this, but with tightly constrained deadlines, and all the competing processes on a modern desktop PC, it gets ugly. If you're running your code on a dedicated microcontroller, it's a lot easier, because you control everything that's running. If you're dead set against modifying the monitor itself, then maybe you can man-in-the-middle the command channel of the display interface, and insert your commands there.

- Chief Blur Buster


Re: Blur reduction possible on any monitor via software ?

Also, it should be done using direct register access to the monitor. Not via USB, not via serial cable, not by DDC/CI or VCP/MCCS commands, not by video cable. Those won't be precise enough, alas; they aren't microsecond accurate. You need a direct GPIO wire, perhaps a parallel port pin of a direct PCI-Express parallel port. And you need to run that wire directly to the backlight controller of the monitor. And run your software in kernel space -- essentially a "Ring 0" driver.

Also, get familiar with how strobe phase affects strobe crosstalk. For a 135KHz horizontal scanrate (1080p120 typical), a delay of 1/135000sec shifts strobe crosstalk downwards by 1 pixel. So you adjust the phase of the strobe flash to correctly time the GtG band off-screen.

Strobe flash length accuracy -- I suggest 1 microsecond precision

Strobe phase accuracy -- I suggest 100 microsecond precision, this will be minor vertical vibration in strobe crosstalk, but should be acceptable.

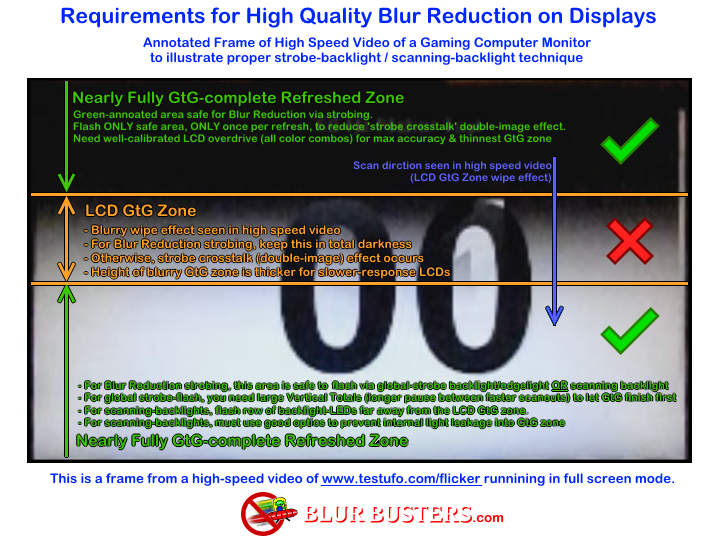

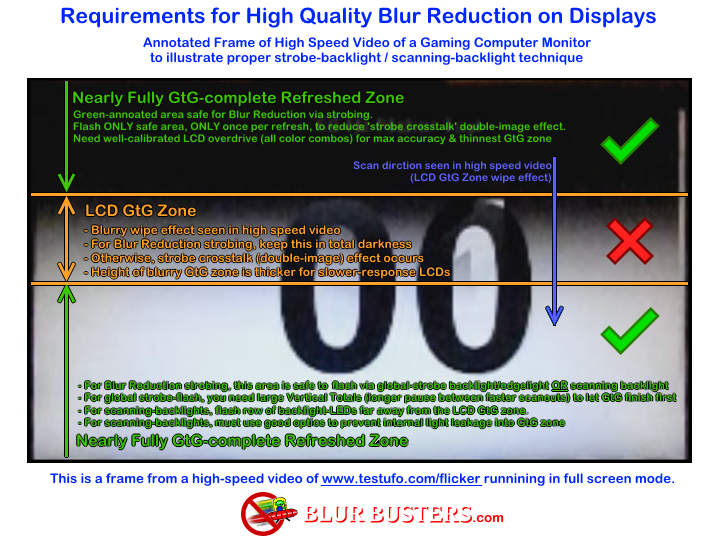

You want to use large vertical totals, and time the strobe phase to "cram GtG into VBI":

(From this page)

Re: Blur reduction possible on any monitor via software ?

I'll try modifying the Radeon driver for Linux to do this. If the backlight control has an X millisecond delay, can't you just do it X milliseconds earlier? Jitter will be a problem though, but a tickless kernel might help.

- Chief Blur Buster


Re: Blur reduction possible on any monitor via software ?

Curi0 wrote: I'll try modifying the Radeon driver for Linux to do this. If the backlight control has an X millisecond delay, can't you just do it X milliseconds earlier? Jitter will be a problem though, but a tickless kernel might help.

You can do an offsetting compensation, but you have no control over DisplayPort micropackets (jitter) or UART FIFO buffer-flush unpredictabilities (e.g. serial buffer jitter). There's no way to transmit DDC/CI with VESA/MCCS/VCP commands (or USB) with jitter low enough to prevent flicker.

Also, when you send multiple commands consecutively, they tend to want to "buffer together" for efficiency. So if you transmit two commands a millisecond apart on serial mechanisms (serial/USB/etc), they might actually be transmitted together because of a serial-transmit buffer.

Basically, a traditional UART's transmit buffer delays for a tiny fraction of a second to check if there are consecutive commands, and then transmits them together. This behaviour has existed for decades, all the way back to 16550AFN UARTs in the 1980s that revolutionized a slow computer's ability to use a high-speed modem by merging multiple bytes (inefficiently transmitted by themselves) into one larger burst (block transmission at lower frequencies). This behaviour exists in almost all serial buses today, including USB and the serial bus that is used for DDC too, and is a basic behaviour of all more-advanced packetization systems too, since buffering increases bandwidth by several orders of magnitude for a specific amount of processing. That hardware-based buffering-up (beyond your control) of multiple commands will wreak havoc on proper microsecond-accurate strobe timings. Your CPU may successfully send a microsecond-accurate signal, but that is all for naught if the serial bus adds jitter from buffering.

Ideally, you need a direct GPIO wire, like a parallel port wire direct to the monitor motherboard. If carefully done, a direct I2C bus may be deterministic enough. You literally have to bypass all serial buses for microsecond-accurate signalling, and that's hard to do without a GPIO wire (such as a 100% hardware-based parallel port pin of a PCI-Express parallel port card -- a true "LPT1" parallel port -- not a USB parallel port adaptor). Tests were done that showed that parallel port pins can be timed microsecond-accurately in an assembly language DOS loop, so there's hope for a kernel-level driver in an RTOS/Linux. A PCI-Express parallel port card is the easiest way to add GPIO capability to a modern computer -- with direct register writes to I/O port 0x0378 it behaves just like Arduino or Atmel GPIO pins. But that often requires modifying the monitor itself to interpret the GPIO signal (feeding it into the gate of a MOSFET transistor wired into the monitor's existing edgelight). A GPIO directly to a MOSFET attached to the monitor's edgelight will allow you to do it much more deterministically (to the microsecond), completely bypassing serial jitter and I2C jitter.

However, with serial and carefully-done buffer-flushing, you might be able to pull off a somewhat-usable proof-of-concept using a low-level serial port (like a serial FIFO directly connected to a DDC wire of a DVI port, bypassing DisplayPort packetization and bypassing the USB port). But there is no guarantee how zero-jitter the DDC interpreter is in the monitor's firmware. It might only update DDC state once every refresh cycle, rather than letting you time DDC commands at sub-refresh-cycle intervals.

It's an advanced programming task that also requires at least basic electronics knowledge (at least an understanding of serial buses). If you're going to try to "get DDC commands as jitter-free as possible", your best bet is to use DVI (instead of DisplayPort to avoid the micropacket jitter) and find a way to access the UART-buffer-flush capability via hacking the Linux driver.

Even things like the CPU processing cycles on your monitor's motherboard for processing DDC/CI VCP/MCCS commands might add lots of jitter, e.g. the execution of commands may not be microsecond-accurate relative to the transmission of the command. The monitor's firmware might poll the serial bus for bytes of VCP/MCCS commands at a low frequency (e.g. 1KHz or 10KHz), which means you may have 1000 or 100 microseconds of jitter, respectively, in the timing of backlight state changes, even if you're microsecond-accurate from the computer side.
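The polling figures above follow directly from the poll interval. A minimal sketch, assuming the firmware samples its command input at a fixed rate and acts only at the next poll (a simplified model of what an unknown firmware might do; the function name is invented):

```python
def worst_case_polling_jitter_us(poll_hz: float) -> float:
    """A command arriving just after a poll waits up to one full poll interval."""
    return 1_000_000 / poll_hz


for poll_hz in (1_000, 10_000, 1_000_000):
    jitter_us = worst_case_polling_jitter_us(poll_hz)
    print(f"{poll_hz:>9} Hz polling: up to {jitter_us:g} us of added jitter")
```

Under this model the firmware would need to poll at around 1 MHz (or use an interrupt) before it stopped being the limiting factor for a 1 microsecond strobe-length target.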

No two monitors program their DDC/CI command processor loop in exactly the same way (usually an Atmel AVR32 or Arduino IDE or some other programming language). Ideally, you want to modify the monitor's firmware, and that is something that is possible on certain monitor models if you're a bit daring of a tweaker.

Theoretical Most Deterministic Real-Time Way to Transmit DDC/CI commands

Without modifying your monitor, this is the most accurate you can possibly get -- but you are still at the mercy of the monitor's firmware limitations. Your OS (RTOS or Linux) driver/software handling DDC/CI VCP/MCCS (for transmitting monitor commands) might piggyback on another module such as a serial driver (which contains UART transmit-buffer handling). DDC/CI uses I2C on the DVI port, so if you can bypass buffering/UART logic, that is ideal. But you might also be at the mercy of the low clockrate of an I2C bus (e.g. 100KHz); that clockrate adds a minimum of 10 microseconds of jitter to DDC/CI commands. But, still, do it at kernel level at the highest CPU privilege. If you're stuck with automatic buffering of serial buses, hunt down the low-level "transmit buffer flush" call that forces a partially-filled transmit buffer to transmit immediately. Call that immediately after transmitting your backlight ON/OFF command. That way, you can finally transmit DDC/CI commands with as much deterministic timing as you possibly can -- and hope that the specific monitor's firmware doesn't add any additional jitter. You may still get a candlelight-flicker effect with solid white colors (this is what happens when strobe lengths randomly vary by 10 microseconds -- that's a 1% brightness change to a 1000us strobe length). It may even still be usable on some monitors for certain games -- the candlelight-flickering may be at a sufficiently tolerable level once you've made everything as deterministic as possible. Could be a worthwhile experiment!
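For a sense of scale on the I2C clockrate point, the sketch below works out the bit time and the wire time of one command. It relies on the standard I2C framing of 9 clock periods per byte (8 data bits plus an acknowledge bit) and ignores start/stop conditions and clock stretching. The 8-byte message length is an assumption for illustration only, not a figure from this thread; check the actual command length for your monitor.

```python
def i2c_bit_time_us(clock_hz: float) -> float:
    """Duration of one I2C clock period; the smallest timing step on the bus."""
    return 1_000_000 / clock_hz


def i2c_transfer_time_us(num_bytes: int, clock_hz: float = 100_000) -> float:
    """Approximate wire time: each byte costs 8 data bits + 1 ACK bit."""
    return num_bytes * 9 * i2c_bit_time_us(clock_hz)


ASSUMED_COMMAND_BYTES = 8  # hypothetical command length, including the address byte

print(f"bit time at 100KHz: {i2c_bit_time_us(100_000):g} us")
print(f"one {ASSUMED_COMMAND_BYTES}-byte command: "
      f"~{i2c_transfer_time_us(ASSUMED_COMMAND_BYTES):g} us on the wire")
```

If that assumed length is in the right ballpark, a single ON or OFF command occupies the bus for a large fraction of a millisecond, which is comparable to the strobe flash itself. That is another reason a hardware timer for the OFF edge is so valuable.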

Re: Blur reduction possible on any monitor via software ?

I'll try writing a kernel driver to do this. Do I need to flicker it during the monitor refresh, or can I just flicker it like a DIY strobe backlight (since it might be hard to hook into the Radeon driver for the VBLANK signal, and that will require kernel recompilation)? Will a tickless kernel be enough or do I need real time? What does LVDS use to transfer DDC commands?

Also my monitor shows ghosting or motion blur but when I take a 1/200 photo it doesn't show any (UFO is sharp and no ghost UFO)

Also, will 60Hz cause noticeable flicker? My monitor won't overclock in Linux.

- Chief Blur Buster


Re: Blur reduction possible on any monitor via software ?

Curi0 wrote: I'll try writing a kernel driver to do this. Do I need to flicker it during the monitor refresh.

You need to time it. Strobe crosstalk is shifted 1 pixel down for every 1 scanline of delay in beginning the strobe flash.

Some manufacturers turn ON the backlight very late in VSYNC, and turn OFF backlight approximately 0.5ms into the beginning of new refresh cycle. This offsetting is because of the GtG lag, in order to center the GtG band in the time interval between refresh cycles.

If you strobe during the middle of a refresh cycle, you get worse double image (or more) effects.

Timing the strobe flash is important. And you want to use larger vertical totals. Most VSYNC is only 0.5ms or less, not big enough to hide most of 1ms GtG between refresh cycles, so you need to have large VSYNC intervals, preferably 2ms (VT1350 at 120Hz) or almost 3ms (VT1500 at 120Hz). Many 60Hz monitors can do 75Hz, so you may be able to do approx VT1250-VT1300 for 1080p/60. Basically using 75Hz dotclock at 60Hz, and using up the excess dotclock to create a larger blanking interval.
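The relationship between vertical total and blanking time can be sketched as below. This assumes 1080 active lines and treats every non-active line as blanking (front porch, sync and back porch lumped together), so the numbers are approximate and may differ somewhat from the rounded figures quoted above; the function names are invented for the example.

```python
def vblank_ms(vertical_total: int, active_lines: int, refresh_hz: float) -> float:
    """Time per refresh cycle spent outside the active lines."""
    blank_fraction = (vertical_total - active_lines) / vertical_total
    return blank_fraction * 1000.0 / refresh_hz


def hscan_khz(vertical_total: int, refresh_hz: float) -> float:
    """Horizontal scanrate implied by a vertical total and refresh rate."""
    return vertical_total * refresh_hz / 1000.0


for vt, hz in ((1125, 120), (1350, 120), (1500, 120), (1125, 60), (1300, 60)):
    print(f"VT{vt} @ {hz}Hz: blanking ~{vblank_ms(vt, 1080, hz):.2f} ms, "
          f"scanrate {hscan_khz(vt, hz):.1f} KHz")
```

Comparing the 60Hz large-vertical-total scanrate against the scanrate of the monitor's standard 75Hz mode is a quick way to see whether a given vertical total stays within what the monitor already accepts.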

The faster the LCD response, and the bigger the blanking interval (longer VSYNC), the more flexibility you have to hide LCD GtG between refresh cycles via a precisely timed strobe backlight flash. Strobe phase can be less precise than strobe length.

Ideally, you want both a strobe phase and a strobe length adjustment. Strobe phase lets you move the crosstalk-band (GtG zone) upwards/downwards. Larger blanking intervals give you more room to push the crosstalk-band (GtG zone) below the bottom edge of the screen before it wraps around to the top edge of the screen. The position of the strobe crosstalk band is based on the horizontal scanrate. 1080p120Hz is a 135KHz horizontal scanrate, or 135000 pixel rows per second. So if you are just 10/135000ths of a second late in flashing the strobe, your crosstalk band will have shifted downwards by ten pixels. It is hard to explain in words but becomes familiar when you have played with Blur Busters Strobe Utility - www.blurbusters.com/strobe-utility or adjusted the "Area" setting (strobe phase) on a BenQ/Zowie monitor. This is why you need to time the strobe correctly for minimum strobe crosstalk in the screen centre.
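The phase-to-position relationship is just delay multiplied by horizontal scanrate. A small sketch using the 135KHz figure from this post (the function name is made up):

```python
def crosstalk_shift_rows(delay_us: float, hscan_hz: float = 135_000) -> float:
    """Pixel rows the crosstalk band moves for a given strobe phase delay."""
    return delay_us * hscan_hz / 1_000_000


# Being 10/135000 sec late moves the band down ten rows.
print(crosstalk_shift_rows(1_000_000 * 10 / 135_000))

# Peak-to-peak band movement for various amounts of +/- phase jitter.
for jitter_us in (10, 100, 1000):
    rows = crosstalk_shift_rows(2 * jitter_us)
    print(f"+/-{jitter_us} us phase jitter: band moves across ~{rows:.1f} rows")
```

At +/-100 us the band wanders over about 27 rows, which is roughly 1/41 of the 1125-line vertical total of a standard 1080p120 mode.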

Image artifacts for timing the PHASE of strobe flash:

1000us jitter in timing beginning of flash: Your crosstalk zone will vibrate up/down quite noticeably. Basically, a slightly flickery strobe crosstalk

100us jitter in timing beginning of flash: Your crosstalk zone will vibrate up/down subtly, only noticeable in some scenery

10us jitter in timing beginning of flash: No noticeable issue.

Image artifacts for timing the LENGTH of strobe flash:

1000us jitter in timing strobe length: Your solid colors will erratically flicker in brightness by 100 percent

100us jitter in timing strobe length: Your solid colors will erratically flicker in brightness by 10 percent

10us jitter in timing strobe length: Your solid colors will erratically flicker in brightness by 1 percent

1us jitter in timing strobe length: Flicker is too faint to be seen. Looks solid.

For photography:

If you want to accurately photograph motion blur, you need a pursuit camera (moving at the same speed as eye tracking) at http://www.testufo.com/ghosting .... links to instructions are there. But you can also try hand-panning the camera to follow the UFO while taking a slow 1/30 photo. Make sure the ladder "sync track" looks like a ladder track in the resulting photograph.

The motion blur is eye tracking based. See www.testufo.com/eyetracking .... the only way to photographically capture that accurately is to track the motion like an eyeball, either by rotating camera or panning the camera. We invented an inexpensive pursuit camera technique that many other web testers now use, www.blurbusters.com/motion-tests/pursuit-camera

But you don't need to do this if you only want to focus on backlight tinkering. This is just so that you understand that photographing display motion blur accurately requires camera tracking that mimics eye tracking.

Re: Blur reduction possible on any monitor via software ?

I'll first write a driver to strobe it at 60Hz and then add VSYNC support to it, since even with crosstalk, if the timing and everything is right, it shouldn't flicker.

- Chief Blur Buster


Re: Blur reduction possible on any monitor via software ?

No guarantees.

Monitor firmware not designed for strobing may still mess timing up enough to cause it to flicker.

Or it might do things like poll the register only once a refresh cycle, making it impossible to get sub-refresh-cycle persistence.

But give it a try, it would be fun to see how deterministic we can get DDC/CI signalled strobing! If you are determined (pun) to do it.
