<Blur Busters Pandora Box>

Oh wow, big Pandora Box of a topic that is almost as difficult to explain as quantum mechanics...

ELK wrote: How does this stuff work?

It becomes easier to understand if you understand rasters, raster interrupts, racing the beam, or those techniques from the classic programming days. You can treat VSYNC OFF like a poor man's version of "Racing The Beam" -- from the CRT days.

With VSYNC OFF, the GPU doesn't wait. The new pixel rows are spliced immediately into the current raster position.

Whatever frameslice is at the raster is delivered immediately from GPU to photons, in as little as sub-millisecond time, right at the pixel position of the current raster.

Atari 2600 had no frame buffer. It didn't have memory for a frame! Each scanline was rendered in realtime.

If you want to read a book, see this one:

This book is on Amazon.

To understand that VSYNC OFF tearlines are just rasters, read the Tearline Jedi Thread.

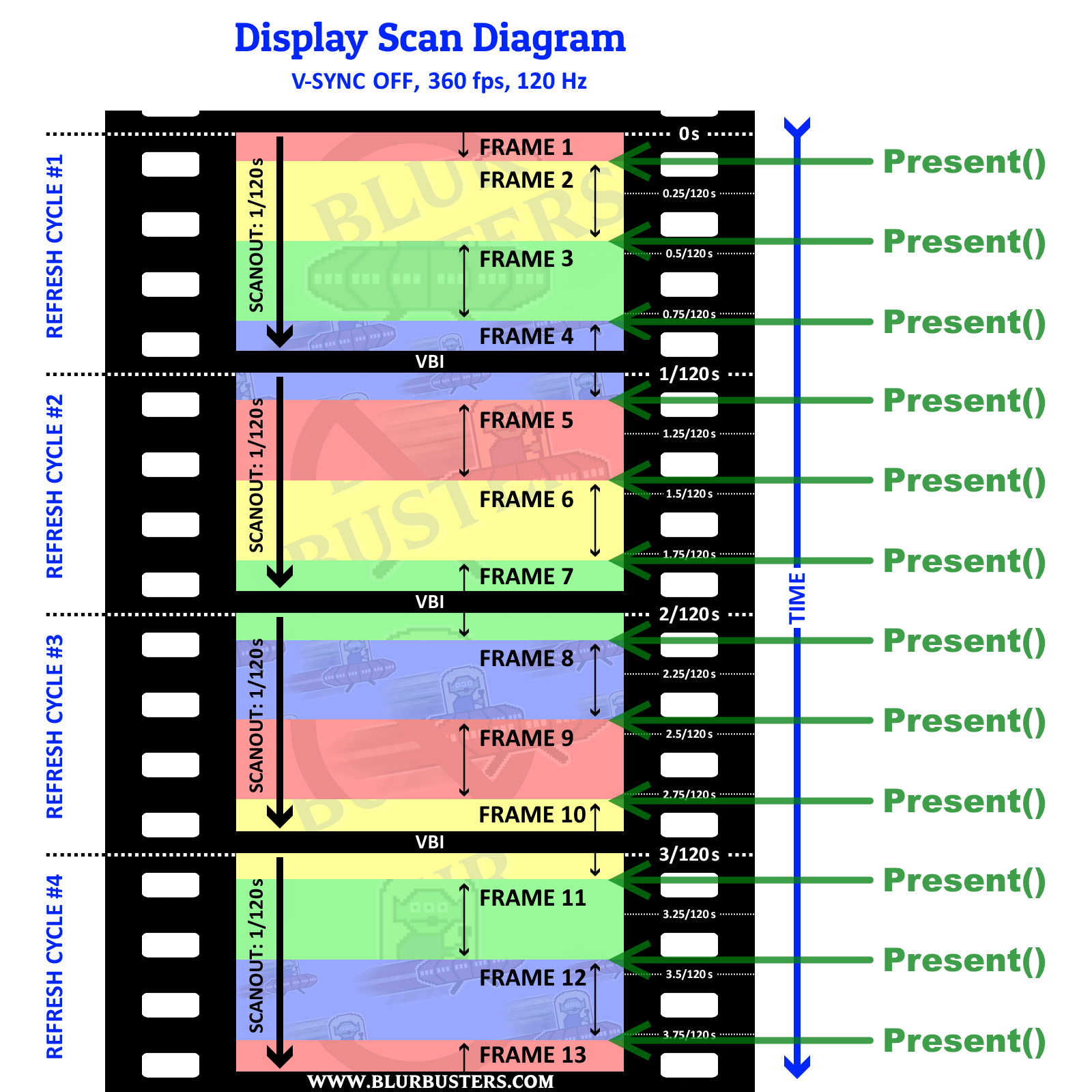

When a computer presents the frame buffer during VSYNC OFF, the next pixel row that the display scans-out will be the new frame's. Since a new frame can be rendered in sub-refresh (e.g. 500fps VSYNC OFF), that frame is fresher than the beginning of the current refresh cycle. Meaning the frame didn't exist when the screen was scanning out at the top edge, but the frame now exists when the screen is scanning-out mid-scanout.

I'll have to develop a VSYNC OFF version of http://www.testufo.com/scanout and show you a high speed video of VSYNC OFF in order for you to understand better, but needless to say -- the book and the Tearline Jedi YouTubes will help.

The display merrily keeps scanning out, uninterrupted, whatever the GPU output is delivering.

Also, remember, we're talking about a specific lag measurement: the latency from a pixel rendered in the GPU frame buffer to the corresponding pixel displayed on the screen. This is not the same thing as other parts of the latency chain, or other latency terminologies (e.g. "scanout latency", the time a screen takes to scan from the top edge to the bottom edge).

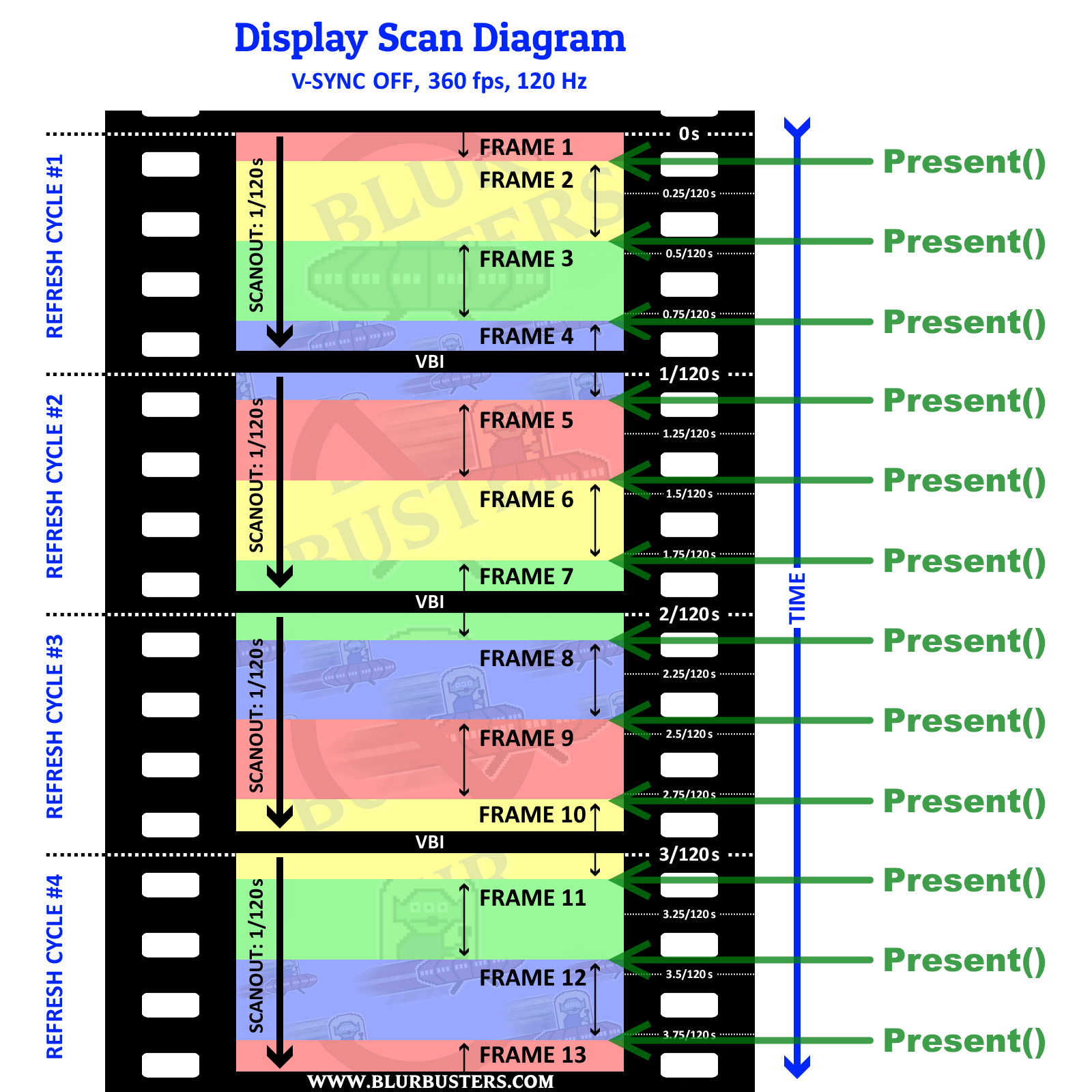

Now I don't know if you are a computer programmer or not, but the command that software developers use is called "Present()" in Direct3D. That's the command to begin delivering a frame to the monitor. In VSYNC ON, there's a delay until a new refresh cycle begins. In VSYNC OFF, there's no delay -- the new frame begins transmitting immediately (even sub-refresh), interrupting the current cable scanout.

I have a demonstration of precise raster-controlled VSYNC OFF tearlines, as follows:

In this particular case, there's only 2ms to 3ms between Direct3D Present() and the corresponding pixels emitting photons!

That's sub-refresh latency, since the specific rendering area was precisely timed to land right below the raster, in a region of the screen that the cable has not yet transmitted. (Many, but not all, displays show pixels in realtime almost straight off the cable.)

Each row of text is emitting photons less than a refresh cycle after it was rendered.

As an example, look at the first three rows of text -- red, blue, then cyan.

The red frameslice was rendered while transmitting the blue frameslice from GPU to display.

The cyan frameslice was rendered while transmitting the red frameslice from GPU to display.

And so on.

Which means the framebuffer of the red frameslice DID NOT EXIST when the refresh cycle began! The frameslice was only rendered when the screen scanout reached its location within the frame (aka the "frameslice", i.e. the vertical location of a frameslice within a framebuffer), and the cable transmission of the refresh cycle is "interrupted" with a new frame.

Sub-refresh API-to-photons latencies are definitely possible, full stop, assuming:

(A) VSYNC OFF

(B) You render in the untransmitted region (aka "below the raster").

(C) You Present() your render before it's scanned out.

(D) Monitor is displaying scanout in sync with cable scanout (CRTs, some but not all LCDs, most eSports LCDs).
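Conditions (B) and (C) boil down to simple arithmetic, roughly sketched below. This is only an illustration: the function name and parameters are made up for this post, and it assumes you already know the current raster row and the horizontal scan rate. Conditions (A) and (D) are driver and monitor properties, so they are not modelled here.

```python
def slice_makes_it(raster_row, target_row, render_time_s, scan_rate_hz):
    """Return True if a frameslice starting at target_row can be rendered
    and presented before the raster reaches it.

    All names here are hypothetical, for illustration only.
    raster_row    -- pixel row currently being transmitted over the cable
    target_row    -- first pixel row of the frameslice we want to show
    render_time_s -- time needed to render and Present(), in seconds
    scan_rate_hz  -- horizontal scan rate (pixel rows per second)
    """
    rows_ahead = target_row - raster_row
    if rows_ahead <= 0:
        # Condition (B) fails: that region was already transmitted.
        return False
    time_until_raster_arrives = rows_ahead / scan_rate_hz
    # Condition (C): the Present() must land before the raster gets there.
    return render_time_s < time_until_raster_arrives


# 400 rows ahead at 160KHz is 2.5ms of headroom.
print(slice_makes_it(100, 500, 0.001, 160_000))  # 1ms render fits
print(slice_makes_it(100, 500, 0.003, 160_000))  # 3ms render is too late
```

If the check fails, the usual fallback is to aim at a frameslice further down the screen, or to wait for the next refresh cycle.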

Anything outside the visible frameslice area (aka the portion of a framebuffer that's successfully scanned out) is never visible if it's a subrefresh frameslice, since the framebuffer can be gone from memory once you're done with it (when the new frame splices in).

It's possible for frameslices in the middle of a refresh cycle -- to never exist at the beginning or end of refresh cycle -- not rendered yet when refresh cycle begins -- and discarded from memory before end of refresh cycle.

VSYNC OFF is completely nonblocking.

It doesn't wait for anything else.

It begins delivering mid-scanout right into the GPU output immediately.

Whatever scanout position it is currently at, right at that instant, is now coming from the new framebuffer.

All VSYNC OFF tearlines have always been rasters.

Every VSYNC OFF tearline in humankind, over the last century, exists because of that action.

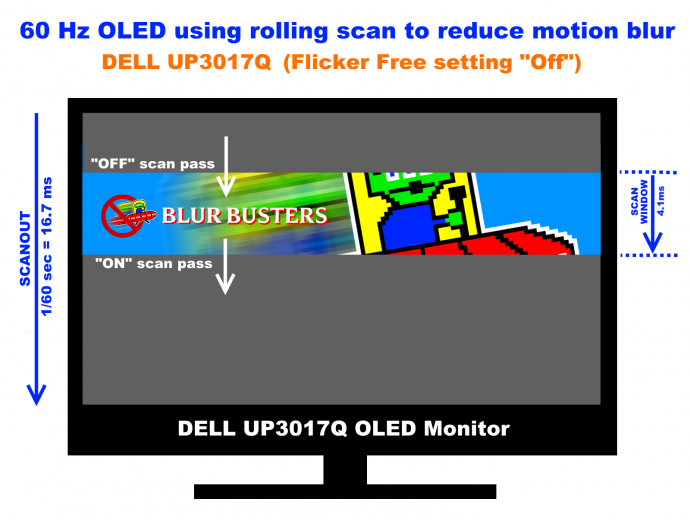

Some monitors synchronize cable scanout to panel scanout, but not all of them do. CRTs were always synchronous in cable scanout to panel scanout. Many old LCDs prebuffered a whole refresh before panel-scanout. But most eSports game monitors do realtime scanout onto the screen from the cable (with only a pixel response GtG lag effect).

Whatever the monitor does, whether it buffers or scans in realtime, is beyond the GPU's control. The GPU just merrily outputs the pixel rows sequentially one after the other, at the horizontal scan rate (e.g. 160KHz Horizontal Scan Rate = 160,000 pixel rows per second = 1/160,000sec to transmit one pixel row, which means a 1/160,000sec intentional delay before the Present() call moves a tearline down by 1 pixel row). Whatever monitor is connected can do whatever it wants with the pixels, but if the monitor is realtime displaying pixels straight off the cable, it's already capable of sub-refresh latency right there, on the spot. For an application to take advantage of that requires ultra-precise timing of rendering and presenting the frame.

Imagine you were an 8-bit programmer trying to multiply sprites (e.g. 8 sprites to 16 sprites) on a Commodore 64 computer using a Raster Interrupt. Doing a precise sub-refresh VSYNC OFF frameslice is really exactly the same thing. You can simply poll the D3DKMTGetScanLine() API to emulate your own PC-based raster interrupt. If you have access to enough timing precision, you can Present() a new framebuffer with the tearline exactly where you want.
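To give a feel for what "emulating a raster interrupt by polling" looks like, here is a minimal sketch. It is not the Tearline Jedi code. The `get_scanline` callable is a stand-in I made up for whatever scanline query your platform offers, and `FakeRaster` is a pretend display so the sketch can run anywhere without a GPU.

```python
import time


def wait_for_scanline(get_scanline, target_row, timeout_s=0.1):
    """Busy-poll until the raster crosses target_row, then return the row
    that was observed. Returns None if the timeout expires first.

    get_scanline is a hypothetical callable returning the current row.
    """
    deadline = time.perf_counter() + timeout_s
    previous = get_scanline()
    while time.perf_counter() < deadline:
        current = get_scanline()
        # Fire only on a crossing, so a raster that is already past the
        # target waits for the next refresh cycle instead of firing late.
        if previous < target_row <= current:
            return current
        previous = current
    return None


class FakeRaster:
    """Pretend raster that advances a fixed number of rows per poll."""

    def __init__(self, total_rows, start_row, rows_per_poll):
        self.total_rows = total_rows
        self.row = start_row
        self.rows_per_poll = rows_per_poll

    def __call__(self):
        row = self.row
        self.row = (self.row + self.rows_per_poll) % self.total_rows
        return row


raster = FakeRaster(total_rows=1125, start_row=900, rows_per_poll=7)
fired_at = wait_for_scanline(raster, target_row=300)
print("raster interrupt fired at row", fired_at)
# This is the point where a real program would render and Present().
```

A busy-poll burns a CPU core, which is the price of precision here. A crossing that lands in the same poll as the wraparound back to the top would be missed until the next refresh cycle, so a real implementation needs a little more care than this.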

Assuming the signal has a 160KHz scan rate (160,000 pixel rows per second), the input lag of the first pixel row underneath a tearline is 1/160,000sec of cable latency. The second pixel row underneath a tearline has 2/160,000sec of lag, the third pixel row has 3/160,000sec, and so on. That assumes zero monitor processing time and 0ms GtG, neither of which is possible (except that a CRT comes darn close, to within microseconds). The thing is we now have 1ms GtG TN panels that are capable of eSports realtime cable=panel synchronization, and thus we're still getting subrefresh latency, even if not 0ms latency for the first pixel row underneath the tearline.
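The scan rate arithmetic is easy to play with yourself. A small sketch, with function names of my own choosing, that covers cable latency only (zero monitor processing and 0ms GtG, as assumed above):

```python
def row_latency_s(rows_below_tearline, scan_rate_hz):
    """Cable latency of the Nth pixel row underneath a tearline."""
    return rows_below_tearline / scan_rate_hz


def tearline_shift_rows(delay_s, scan_rate_hz):
    """How far down an intentional delay before Present() moves a tearline."""
    return delay_s * scan_rate_hz


SCAN_RATE_HZ = 160_000  # 160KHz horizontal scan rate

for n in (1, 2, 3, 160):
    microseconds = row_latency_s(n, SCAN_RATE_HZ) * 1e6
    print(f"row {n} below the tearline: {microseconds:.2f} microseconds")

# Delaying Present() by 1ms pushes the tearline down by about 160 rows.
print(tearline_shift_rows(0.001, SCAN_RATE_HZ))
```

Real displays add their own processing time and GtG on top of these numbers.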

You can look up "Horizontal Scan Rate" in a Custom Resolution Utility (it's sometimes called "Horizontal Refresh Rate"). It is usually given in "KHz" or "kilohertz", which is simply the number of scanlines per second, in thousands.

The monitor doesn't know it's a separate frame, it's just a single scanout sweep over the cable, but it can be "interrupted" with a new frame in realtime. So if you Present() 4 times a refresh cycle (e.g. 480fps at 120Hz) you can have as little as 1/4 refresh cycle latency.

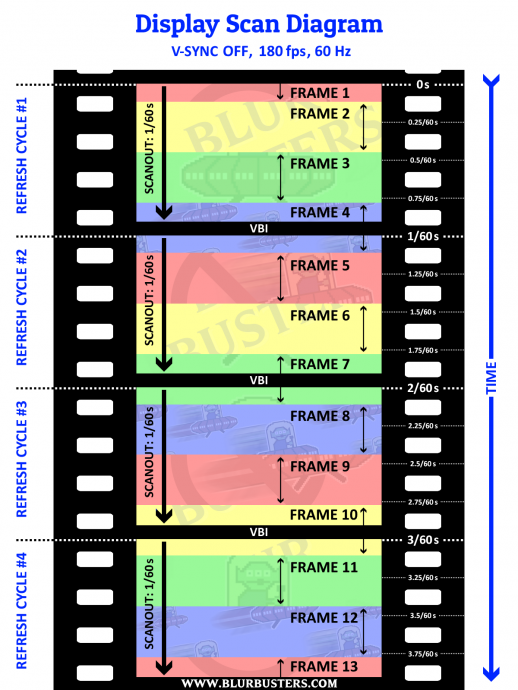

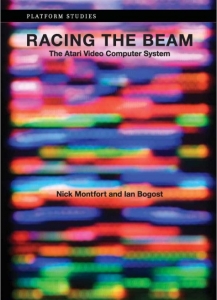

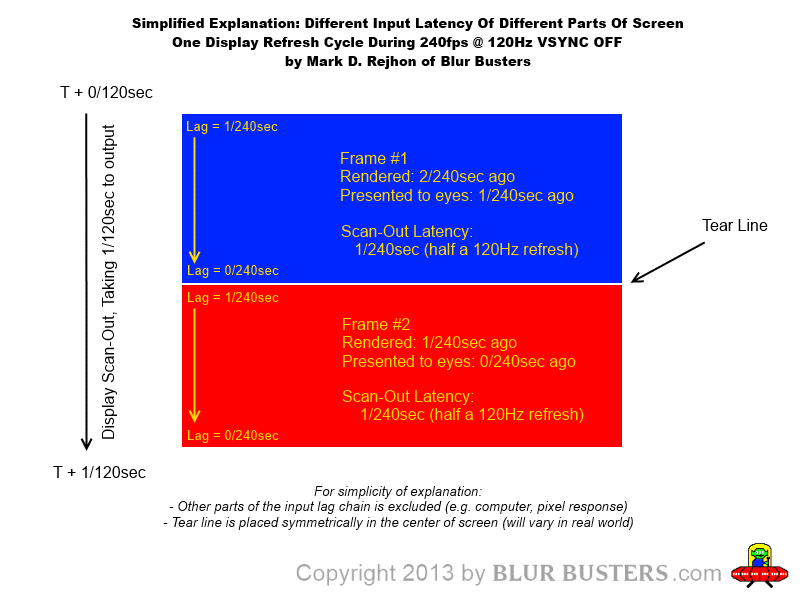

Here's a very old, simpler diagram:

I shared this in 2013 before this was successfully experimentally verified in Tearline Jedi and high-speed-video tests. It was well known back in the CRT era, since the Atari 2600 required you to literally render scanlines on the fly since it only essentially had a scanline buffer (not enough memory for a full frame buffer).

Few people realize that tearlines are simply realtime splices in a video cable transmission.

Tearlines are just rasters. By understanding that properly, you then understand sub-refresh latencies.

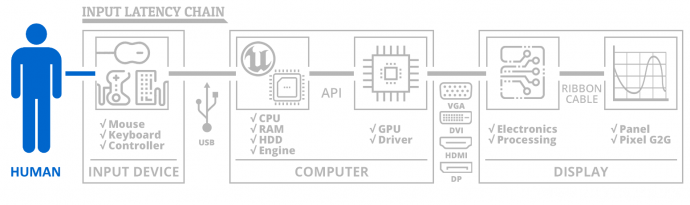

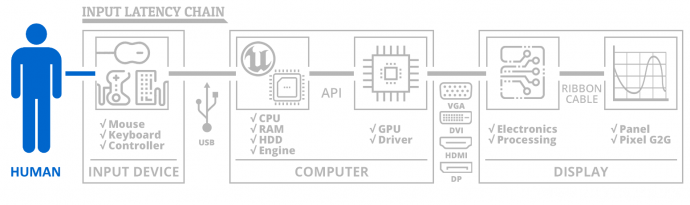

You don't see it in games because the input lag chain is a complex chain:

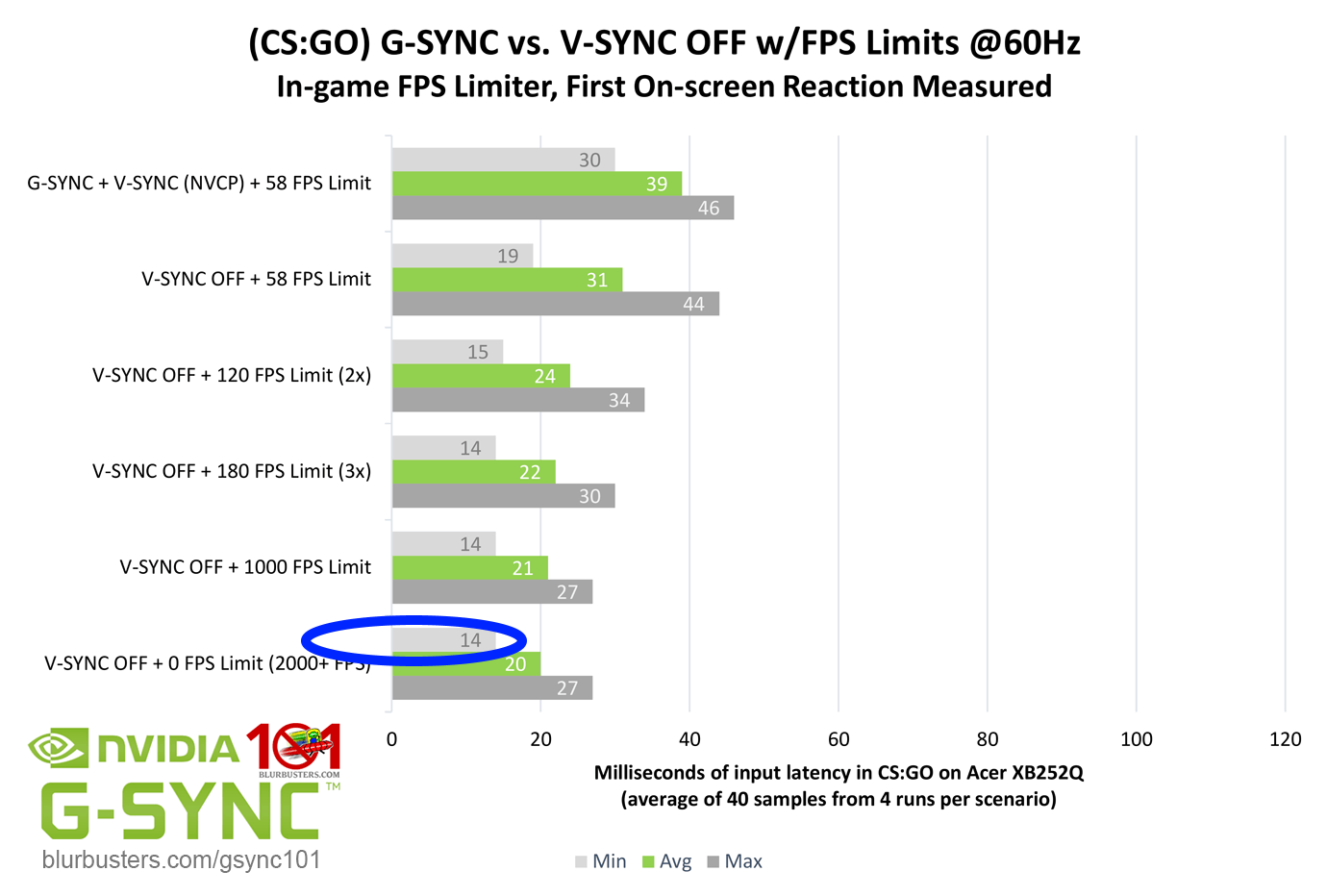

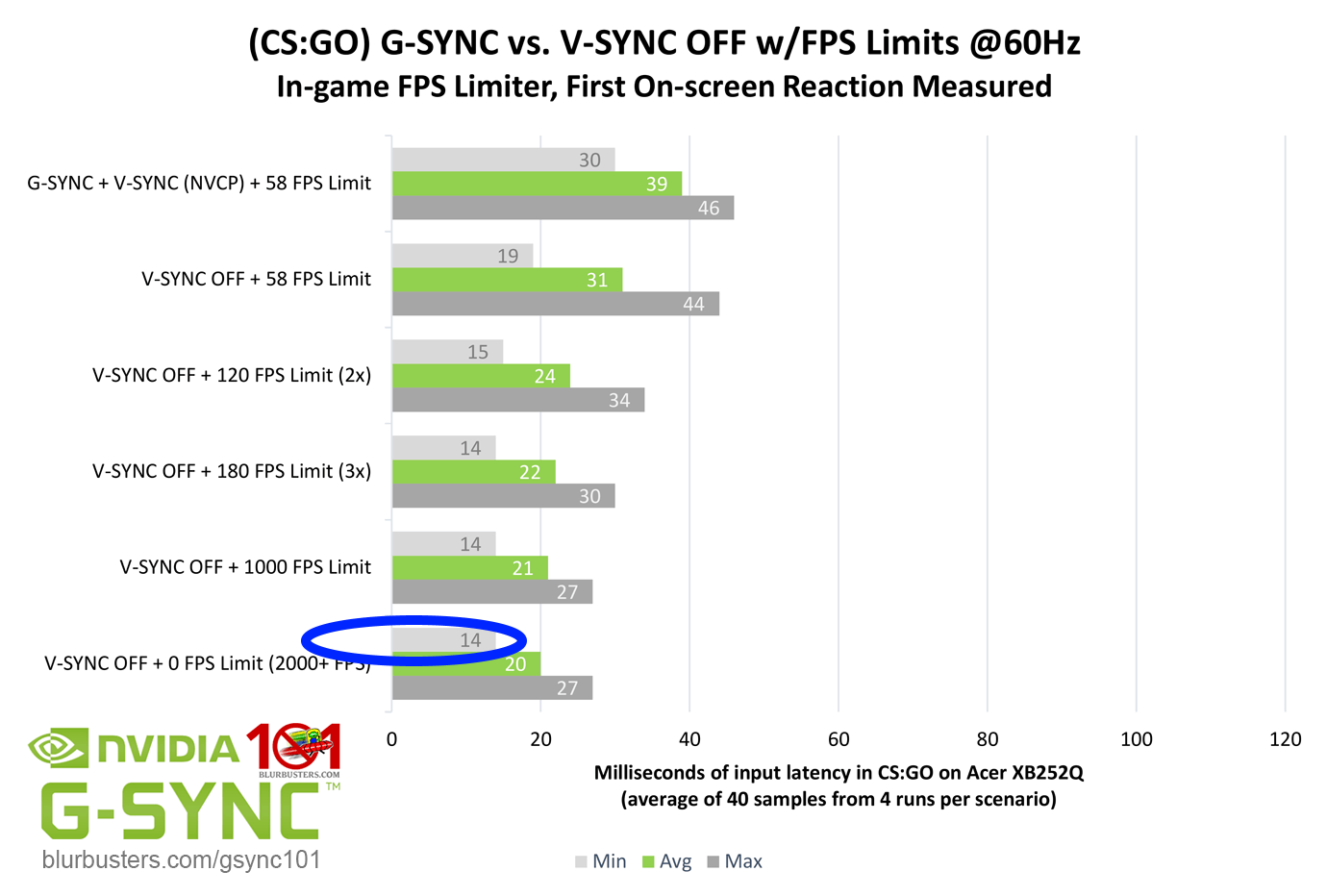

However, sub-refresh latencies do occur in some older games like Quake Live and CS:GO when you combine (A) insane framerates with (B) long refresh cycles. Usually, one needs an ultra-extreme ratio of at least 10:1 or 20:1 (600fps or 1200fps at 60Hz) or greater to punch the sub-refresh numbers way above the noise of the rest of the latency chain, which piles on top of the API-to-photons latency. API-to-photons is only a subset of the whole button-to-photons latency.

Since the API-to-photons latency was so small (e.g. 3ms), the whole chain, including the other latencies (e.g. 1ms input latency, frametime / game engine latency, etc), only added up to 14ms in the better-case scenarios, which is less than the 16.7ms of 1/60sec. This isn't even error margin; this was a high speed 1000fps video from actual buttondown (LED light) to the monitor emitting photons. The high speed video showed only 14 milliseconds for some of the better cases.

Now, if you ignore the game engine and mouse device, and focus only on API-to-photons, you can indeed get nearly 0ms latency for that, if you render a quick frame (e.g. blank frame buffer) and Present() right before the raster hits that scanout, and if that monitor is a zero-lag cable-to-panel scanout (e.g. CRT). An unsynchronized page flip randomly anywhere in a framebuffer will add a fuzz of roughly one refresh cycle. That's why you see roughly 16 milliseconds between min/max (1/60sec) in this 60Hz test.
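That "fuzz of roughly one refresh cycle" can be reproduced with a toy simulation. This is my own simplified model, not the measurement rig: the raster is at a random position when each Present() lands, the light sensor sits at a fixed height, and the blanking interval, monitor processing and GtG are all ignored.

```python
import random


def simulate_flip_latency_ms(refresh_hz, sensor_fraction, trials, seed=0):
    """Return (min, max) latency in ms for unsynchronized VSYNC OFF flips.

    sensor_fraction is the sensor height as a fraction of the scanout
    (0.0 = top edge, 1.0 = bottom edge). All names are hypothetical.
    """
    rng = random.Random(seed)
    refresh_ms = 1000.0 / refresh_hz
    latencies = []
    for _ in range(trials):
        # Where the raster happens to be when Present() lands.
        raster_fraction = rng.random()
        # New pixels show at the sensor the next time the raster gets there.
        wait_fraction = (sensor_fraction - raster_fraction) % 1.0
        latencies.append(wait_fraction * refresh_ms)
    return min(latencies), max(latencies)


lo, hi = simulate_flip_latency_ms(refresh_hz=60, sensor_fraction=0.5,
                                  trials=10_000)
print(f"min {lo:.2f}ms, max {hi:.2f}ms, spread {hi - lo:.2f}ms")
```

With enough trials the spread approaches one full refresh period (about 16.7ms at 60Hz), while a raster-timed Present() always sits at the low end of that range.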

But if you precisely raster-time it, you can easily get sub-millisecond reliably, like I can do with Tearline Jedi Demo.

Raster-controlled situations (precise tearline synchronization) make it much easier to get sub-refresh latencies, as long as you follow the mandatory guidelines (see the list above).

With VSYNC OFF, Present() is non-blocking (no waits occur). It switches the cable transmission to begin transmitting from the new framebuffer instead (effectively the tearline -- essentially a figurative and metaphorical splice -- at the raster!).

Did you know some emulators like WinUAE now achieve sub-millisecond latency via frameslice beam racing? (See my github submission, and the new first release with lagless vsync mode.) This is easy with an emulator because emulators are often emulating beamraced/raster-dependent platforms (e.g. Atari TIA, Commodore VIC, Amiga Copper) that have a mandatory requirement of sub-refresh synchronization. Since those were output to CRTs that used top-to-bottom scanout, these are easiest to remap to the top-to-bottom scanout metaphor of VSYNC OFF frameslice streaming.

Did you know RTSS Scanline Sync was invented because of my Tearline Jedi research, and because of my suggestion to Guru 3D (see my original suggestion, their announcement, and my thanking them)? While RTSS Scanline Sync is NOT subrefresh latency (on average anyway), it builds upon precise raster synchronization of tearlines. Modern games render full framebuffers (not portions of the screen at a time, precisely timed to screen scanout). It is one of the more lagless variants of "VSYNC ON" lookalikes, for people who hate VSYNC OFF tearing. Basically RTSS Scanline Sync allows you to move the tearline wherever you want it, in older games (e.g. Quake, CS:GO, etc), including just above the top edge of the screen or below the bottom edge of the screen. See the Scanline Sync HOWTO.

Remember, Leo Bodnar Lag Tester is strictly a VSYNC ON lag tester and does not use VSYNC OFF stopwatching criteria, nor does it account for the definitely possible ability to synchronize a last-minute render while the screen is scanning out mid-refresh. Remember, input lag testing is a nebulous mix of different stopwatching criteria.

Please read that important post about "so many different lag stopwatching criteria" before you reply if you are still confused -- make sure to clearly define the lag stopwatch criteria. After all, how did an Atari 2600 work? Everything it did had subrefresh latency, out of sheer necessity, due to lack of frame buffer. (BTW, read the book if you don't understand rasters. Or at least read this 2009 Wired magazine article that explains how the Atari 2600's lack of a frame buffer made sub-refresh code-to-photons essential for the Atari 2600 to even barely work at all.)

It's not commonly done on the PC today because we just don't need to work sub-refresh in the modern era: we have frame buffers, so sub-refresh latencies are not necessary. But we can if we want to (if we use the same old fashioned tricks). It's simply more complicated than doing full-framebuffer-based workflows. Resurrecting sub-refresh latencies is indeed possible, as it's very old science -- ATARI 2600 -- techniques reborn.

It's really easy to conceptualize in your mind if you're familiar with beam racing or raster interrupts (like many 8-bit programmers). It's a lost art. But you can achieve sub refresh latency today if you use that lost art. VSYNC OFF tearlines are just simply rasters. Every tearline ever created in humankind is simply a raster-positioned metaphorical splice between an old frame buffer and a new frame buffer.

Tearlines only exist because they are simply an interruption in a graphics delivery sequence (aka the sequential raster-based scanout sequence). Tearlines don't exist if there is no sequential delivery mechanism to interrupt (aka a sequential raster-based scanout mechanism). By virtue of knowing or guessing where the raster is (what pixel row the display output is currently outputting, whether via RasterStatus.Scanline, D3DKMTGetScanLine() or via a time-based estimate between two blanking intervals), we can time a last-minute render and a last-minute presentation to display new pixels in the middle of a refresh cycle that didn't exist in any frame buffer when the refresh cycle began. Atari 2600 did that out of necessity (no frame buffer at all!); it's just hugely optional and mostly useless for a PC. The bottom line: fundamentally, sub-refresh latency is possible. It's old science.
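The "time-based estimate between two blanking intervals" is just linear interpolation. A minimal sketch, assuming you have a timestamp of the most recent blanking interval and a measured refresh period. The names are hypothetical, and `total_rows` is meant to be the vertical total of the signal (visible rows plus blanking rows), with row 0 taken to start at the timestamp.

```python
def estimate_scanline(now_s, last_vblank_s, refresh_period_s, total_rows):
    """Guess the current raster row from timing alone.

    now_s            -- current time, seconds
    last_vblank_s    -- timestamp of a recent blanking interval, seconds
    refresh_period_s -- measured time between two blanking intervals
    total_rows       -- rows per refresh cycle, including blanking rows
    """
    # Fraction of the current refresh cycle that has elapsed (0.0 to 1.0).
    phase = ((now_s - last_vblank_s) % refresh_period_s) / refresh_period_s
    return int(phase * total_rows)


PERIOD = 1 / 60  # 60Hz
print(estimate_scanline(0.0, 0.0, PERIOD, 1125))      # start of the cycle
print(estimate_scanline(1 / 120, 0.0, PERIOD, 1125))  # about halfway down
```

The guess drifts as clocks drift, so the blanking timestamp needs refreshing regularly. A real scanline query is more accurate where the platform offers one.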

Super Mario 3 on a Nintendo has an intentional tearline: It's the splitscreen dividing line, between the moving image and the static scoring area below it! It was done in real time using a raster-based trick. Even though the Nintendo has video memory describing a full screen (unlike the Atari 2600), it still used real time rasters to generate a split screen (scrolling playfield and a stationary score area). Many 8-bit platforms used this technique to generate splitscreens. Those splitscreen zones are your Grandfather's equivalent of a "VSYNC OFF Tearline": a precisely synchronized mid-scanout graphics mode change or framebuffer change. Because it was all in assembly language (machine language), with no operating system, even an 8-bit chip could do a microsecond-accurate timing operation (that's how the Nintendo Zapper works by the way... math on microsecond timings). Old platforms were slow, but boy, man, they were SOOOOO precise-accurate in timing. No overheads.

Today, everything is high level operating system, high level APIs, and high level programming like C# or C++. It's only recently computers finally became fast and precise enough (again...) to allow even a slow script programming language to generate real-time Kefrens Bars, something that formerly required microsecond-accurate math.

One pixel row per frameslice, 8000 tearlines per second, with sub-millisecond video cable delivery per frameslice, generating something formerly possible only on a classic 8-bit platform. This demo is exactingly precise, and you'll notice near the end of the demo, window dragging causes glitches in the demo.

Raster beam racing knowledge had just become a lost art, until being resurrected by virtual reality beam racing, emulator beam racing, RTSS Scanline Sync, etc.

- Virtual reality beam racing programming (Android)
- Lagless VSYNC ON Mode in emulators -- WinUAE/GroovyMAME
- New RTSS frame capping via Scan Line
- Tearline Jedi Demo experiments.

By re-learning and mastering this lost art (formerly done in the 1980s), you gain the skill of subrefresh latencies on modern PCs. The problem is it's 100x more complicated for a modern "framebuffer programmer" who has absolutely no grasp of framebufferless gaming console programming. It almost requires a new 1-year training course just to understand how to do subrefresh latencies.... almost.

As a result, "Leo Bodnar Lag Tester 9ms" (accurate for VSYNC ON at the middle of a very low-lag display) can become "2ms For Screen Bottom Using Beam Racing" (accurate too, because of different VSYNC OFF stopwatching criteria), since beam racing can equalize the TOP/CENTER/BOTTOM lag to the lowest number (TOP). Already, you can get sub-refresh lag with Leo Bodnar for TOP; beam racing then gets you the same TOP number for the MIDDLE and for the BOTTOM edge of the screen.

For VSYNC OFF and for raster-timed "frameslices", we are intentionally stopwatching position-for-position (GPU card's pixel row to corresponding pixel row), not stopwatching scanout time from VBI-to-screen position (e.g. scanout time from top to bottom) which is what Leo Bodnar stopwatches for CENTER (average) and for BOTTOM.

Usually, we don't do subrefresh latencies unless necessary, since achieving that requires understanding the raster, which most people don't.

...You don't need to understand quantum mechanics to build a treehouse or cupboard.

...You don't need to understand calculus to be able to speak French.

...Likewise, you don't need to understand rasters and raster interrupts in order to program a modern 3D video or iPhone app today.... unless you need subrefresh latency.

Obviously, an easier way to reduce latency for modern programmers is simply using a higher refresh rate, to reduce the scanout latency and reduce the intervals between refresh cycles. 240Hz monitors only have ~4ms between refresh cycles. To many modern 3D programmers, trying to learn raster interrupts almost feels as complicated as learning quantum mechanics (raster knowledge was mandatory on the 70s/80s consoles but is no longer needed today). For the question of sub-refresh latencies, "is it possible?" is a completely different question from "is it necessary?" because it can horrendously complicate programming for some.

We're the experts in subrefresh latency tricks.

We're the experts at understanding what happens to pixels after Present() and before the photons reach human eyes (additional knowledge essential to subrefresh latencies).

I am happy to answer display engineering questions.

</Blur Busters Pandora Box>