Is motion blur directly related to strobe visibility or not?

-

valeriy l14

- Posts: 30

- Joined: 21 Mar 2021, 11:36

Is motion blur directly related to strobe visibility or not?

Look, if Mark says in his article that in a pinch we need an update rate of 10,000 Hz, does this mean that if there is no strobing, motion blur will completely disappear, no matter what the speed of movement will be?

- Chief Blur Buster

- Site Admin

- Posts: 12098

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: Is motion blur directly related to strobe visibility or not?

It's a bit of an overgeneralization because the exact Hz that becomes "retina" varies widely (and varies from human to human too).

For example, retina refresh rates are lower for smaller screens with a narrower FOV. It is easier to see refresh rate limitations when the screen covers more of your field of vision at higher resolutions and higher pixel densities. Some of us can eye-track better than others -- for example, some of us can track TestUFO at 3000 pixels/sec on a 24" monitor, while others cannot.

Also, the correct way to say this is that motion blur is tied to frame visibility time, not just strobe visibility time.

Demo Animations of how motion blur scales:

1. TestUFO Animation Demo (nonstrobed) -- doubling the frame rate halves display motion blur.

2. TestUFO Animation Demo (strobed) -- briefer frame visibility time reduces display motion blur.

These TestUFO tests are most educational when viewed at 120 Hz or higher.

Once you have seen the TestUFO tests above, it becomes easier to understand:

1. Non-strobed: Motion blur is tied to frametime (refreshtime)

That's because that's how long the frame is visible for

2. Strobed: Motion blur is tied to strobe flash length time.

That's also because that's how long the frame is visible for

Known side effects of frame rates lower than Hz:

1. Non-strobed: Low frame rates on a non-strobed screen just generate more blur. 60fps@120Hz has the same blur as 60fps@60Hz. So Blur Busters Law is better applied to frame rate than to refresh rate.

2. Strobed: Low frame rates on a strobed screen will create duplicate images (like CRT 30fps@60Hz, LightBoost 60fps@120Hz), similar to this animation and this screen photograph.
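The relationship described above can be sketched in a few lines. This is only a rough illustration, assuming blur width is simply tracking speed multiplied by frame visibility time, with instant pixel response (0ms GtG). The function names and the 960 pixels/sec example speed are made up for the sketch and are not from TestUFO or any library.

```python
def motion_blur_px(speed_px_per_s: float, visible_time_s: float) -> float:
    """Approximate eye-tracked blur width in pixels: speed times frame visibility time."""
    return speed_px_per_s * visible_time_s


def sample_and_hold_blur_px(speed_px_per_s: float, fps: float) -> float:
    """Non-strobed: each unique frame stays visible for 1/fps seconds, whatever the Hz."""
    return motion_blur_px(speed_px_per_s, 1.0 / fps)


def strobed_blur_px(speed_px_per_s: float, pulse_width_s: float) -> float:
    """Strobed: the frame is only visible for the length of the backlight flash."""
    return motion_blur_px(speed_px_per_s, pulse_width_s)


speed = 960.0  # pixels/sec, an arbitrary example speed (assumption)
print(sample_and_hold_blur_px(speed, fps=60))        # 60fps looks the same at 60Hz or 120Hz
print(sample_and_hold_blur_px(speed, fps=120))       # doubling the frame rate halves the blur
print(strobed_blur_px(speed, pulse_width_s=0.001))   # 1ms flash, independent of frame time
```

Note that the refresh rate never appears in the non-strobed function, only the frame rate, which is why 60fps@120Hz and 60fps@60Hz come out the same.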

Don't forget the Vicious Cycle Effect: higher Hz is more important for higher resolutions & wider FOV

Remember, it depends on the display size and FOV -- how long you can clearly track pixels for.

-- Retina refresh rates for a smartphone might be only 500-1000Hz

-- Retina refresh rates for VR (180-degree 16K resolution) are already known to be well over 10,000Hz.

-- For a desktop 24", it is likely in between the two.

Terminologically, "retina refresh rate" is the point where the human derives no further benefit from the diminishing curve of returns for motion quality provided by frame rates & refresh rates.

This is because wider FOV means higher pixel densities that can be eye-tracked for longer, making it easier to identify motion flaws. I call this the Vicious Cycle Effect -- the higher the resolution and the wider the FOV, the easier it is to see refresh rate limitations. A 10,000 Hz 1024x768 screen would be useless because the low resolution would hide the superior motion resolution. Now, a 10,000 Hz 10,000fps 16K screen (15360x8640) panning full 180-degree FOV VR would be much more useful. A smaller low resolution screen means (A) you don't have enough time to track high-detail objects before they disappear off the edge of the screen; and (B) the low spatial resolution will mask the high temporal resolution.

Due to the diminishing curve of returns, Blur Busters also recommends geometric upgrades to your refresh rate and frame rate, such as a 60 -> 120 -> 240 -> 480Hz upgrade path or a 60 -> 144 -> 360Hz upgrade path. (Professionals will be happy doing more incremental upgrades though, such as 144 -> 165 -> 240 -> 280 -> 360 ...) However, the geometric frame rate upgrades to follow will require Frame Rate Amplification Technologies.

More educational reading can be found at www.blurbusters.com/category/area51-display-research

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on: BlueSky | Twitter | Facebook

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

-

valeriy l14

- Posts: 30

- Joined: 21 Mar 2021, 11:36

Re: Is motion blur directly related to strobe visibility or not?

Chief Blur Buster wrote: ↑24 Mar 2021, 16:23
It's a bit of an overgeneralization because the exact Hz that becomes "retina" varies widely (and varies from human to human too).

Well, I understood this, but I still have one question for you: how long does it take for the eye to capture a moving target (I call it saccadic input lag)?

500 ms, 250 ms, or maybe there are superhumans who can do this in 100 ms?

- Chief Blur Buster

- Site Admin

- Posts: 12098

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: Is motion blur directly related to strobe visibility or not?

valeriy l14 wrote: ↑26 Mar 2021, 10:16
Well I understood this, but still I have one question for you: how long does it take for the eye to capture a moving target (I call it saccadic input lag)?
500ms, 250, or maybe there are superhumans that can do this in 100ms?

This is an area that varies widely, with a lot of factors:

- Your abilities

- Your training

- Initial identification via direct vision area versus peripheral vision

- Brightness and contrast of the object

- Expectations (predictable vs random objects)

- How far your eye gaze is away from the object you need to lock gaze on

- The initial motion vectors (of your gaze motion & of the object motion)

- How badly it is obscured (including obscured by motion blur, obscured by stutter, etc)

- Etc.

The majority of the time, for predictable objects, it is well under a second. Human "target locking" (fixing your gaze on an object) is a separate problem from the display flaws themselves, and Blur Busters currently studies it less than it studies displays. However, the science/physics overlaps because of the Vicious Cycle Effect (wider FOV, higher resolution, and higher refresh rate all amplify the need for each other). So out of necessity, I have to be adequately familiar with human vision limitations to properly define the limitations of a display relative to human ability.

Remember, identifying whether the display has motion blur requires multiple steps -- looking for the object (e.g. finding a moving UFO), locking eyes on the object (putting your eyes directly on the UFO), and finally inspecting the object for motion artifacts such as blur/stroboscopics/ghosting/etc (as you track a UFO in TestUFO). These stages typically take on the order of a hundred or more milliseconds each, and add up to perhaps half a second or so in total.

So we're covering multiple separate sciences here:

- The human brain

- The human vision system

- The display itself

- The interactions between them that generate human-visible artifacts (such as display-enforced eye tracking motion blur)

The above animation is predictable:

- Moving UFO keeps reappearing at left edge

- Speed smoothly varies, rather than randomly

This makes it easy to observe the weird display behaviors (lines blending to motion blur) caused by eye-tracking-enforced display motion blur. This animation is cleverly designed to make display behaviors easy to show off -- such as teaching about the stutter-to-blur continuum (slow-vibrating music strings vibrate visibly, while fast-vibrating music strings vibrate in a blur), which is also demonstrated by framerate-ramping animations on variable refresh rate displays (e.g. www.testufo.com/vrr ...)

Now *within* my area of expertise, cherrypicking the variables to help the user easily identify display flaws: with training/coaching on predictable objects (similar to teaching people to notice artifacts such as the 3:2 pulldown effect that they can't unsee later), my experience is that most people only need 0.5 to 1 second to identify the existence (or lack) of display motion blur.

Variables:

- Framerate=Hz

- Smooth horizontal motion (longest dimension of screen)

- Predictive appearance of object at edge of screen (like TestUFO)

- Locking gaze on object (tracking the UFO)

- Inspecting the object (checking if UFO has motion blur)

I am able to do this in approximately half a second or so on the TestUFO Panning Map Test at 3000 pixels/sec. A street name label is on the screen for only ~0.64 seconds on a 1920x1080 display. If I have ULMB enabled with ULMB Pulse Width 30-50, I can lock my eyes on one street name label long enough to read it (e.g. Front Street or Yonge Street), since I'm familiar with the map of Toronto, Canada and know where to quickly lock my eyes on a familiar street and start trying to read its street name label. So eye "find-gaze-identify" is complete in less than 1 second if the motion is clear.

- ULMB default ULMB Pulse Width 100 = 1ms MPRT

- ULMB with ULMB Pulse Width 50 = about 0.5ms MPRT

At 3000 pixels/sec, 1ms = 3 pixels of motion blur, which can obscure 6-point street name label text with display motion blur.

Under the best ULMB circumstances (ULMB ON + ULMB Pulse Width 50, or a similar "0.5ms MPRT strobe backlight")

- Some people can do the "find-gaze-identify" on this TestUFO panning map test at 2000 pixels/sec (1 second)

- Some people can do the "find-gaze-identify" on this TestUFO panning map test at 3000 pixels/sec (0.64 second)

- Some people can do the "find-gaze-identify" on this TestUFO panning map test at 4000 pixels/sec (0.5 second)
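The time budgets in parentheses above follow from dividing screen width by panning speed. A small sketch, assuming a 1920-pixel-wide display and an object that crosses the full width (the function name is illustrative only):

```python
def time_on_screen_s(screen_width_px: float, speed_px_per_s: float) -> float:
    """Seconds an object stays visible while crossing the full screen width."""
    return screen_width_px / speed_px_per_s


# The three panning speeds listed above, on an assumed 1920-pixel-wide screen
for speed in (2000, 3000, 4000):
    budget = time_on_screen_s(1920, speed)
    print(f"{speed} pixels/sec -> about {budget:.2f} s to find, gaze and identify")
```

The whole "find-gaze-identify" sequence has to fit inside that budget, so a faster pan leaves less time for every stage.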

I can do the "find-gaze-see blur" at 4000 pixels/sec but I can't read the text fast enough, so slowing it down to 3000 pixels/sec or 2880 pixels/sec, I am able to read one street label on the fast-panning street map -- about 0.6 seconds.

But remember, this is the whole process -- finding the object (one street), locking my eyes on the object (street label), and inspecting (reading the street name). The steps would average 200 milliseconds each within the 0.6-second deadline (from appearing at the left edge to disappearing at the right edge). But it could be a different ratio such as 200-100-300 or 150-150-400 or 300-50-250 -- that I don't know. Someone would have to point a high speed camera at my eyeballs to corroborate the first two parts of the "find-gaze-inspect" behavior. This is probably an underresearched area.

Occasionally, Blur Busters does commission research (like www.blurbusters.com/human-reflex ...) and this would be exactly the type of research up my alley if someone wanted to reach out. I've been thinking of writing some more research papers and getting them peer reviewed by others (say, NVIDIA researchers or others) -- but it's a time management thing too, because I don't get paid to write research papers, so I prefer to write "Popular Science" style articles on the main Blur Busters site to help de-mystify things for readers.

Informally, the Ballpark is 0.5-Second to 1-Second For 3-Step Eye Tracking "Find-Gaze-Identify"

Long-time TestUFO experience with millions of visitors indicates that the whole 3-step process of eye-tracking "find-gaze-identify" tends to take 0.5 to 1 second for predictable moving objects (a la TestUFO). That includes all 3 intermediate steps: finding the object, gazing at the object, then identifying the details of the object. Each step may be surprisingly brief, and gazing is usually faster than initially finding the object, especially if the object is unexpected.

It's hard to benchmark the separate stages of "find-gaze-identify", but easy to measure the whole thing together. For example, show someone TestUFO's Panning Map Test and ask them to read one street name (any street name), after optimizing the display to the best possible strobing (short strobe pulse widths). This provides a rough baseline of informal anecdotes, all of which are consistently within the 1-second ballpark. This provides the easiest basis for determining a retina refresh rate.

When I say "retina" refresh rate (a refresh rate equivalent of retina resolutions) -- this is the refresh rate (and frame rate) where further improvements can no longer be derived. This also, of course, assumes 0ms GtG (or, minimally, at least real world GtG 100% pixel transitions occurring well under a refresh cycle).

General Rule of Thumb: The Resolution Of the Longest Dimension Of Display is Conveniently the Approximate Retina Refresh Rate*

For the Average Joe User, I use the 1-second eye-tracking benchmark as an easy guideline for calculating practical Retina Refresh Rates

- 1920x1080 displays = requires 1920 pixels/sec to zoom edge-to-edge in 1 second = likely ~1920fps at ~1920 Hz is retina refresh rate

- 3840x2160 displays = requires 3840 pixels/sec to zoom edge-to-edge in 1 second = likely ~3840fps at ~3840 Hz is retina refresh rate

These aren't hard numbers; there's a lot of fuzz depending on human ability.

For well trained users, i.e. the top 10% of users, I would approximately double the "retina" refresh rate (~4000Hz for 1080p). And for the Grandma "I can barely tell apart DVD and HDTV" crowd, I would approximately halve the "retina" refresh rate (~1000Hz for 1080p). (All of them, in a TestUFO comparison, are able to notice a difference between 240fps vs 120fps vs 60fps when I show family members, so they're easily trainable to see the differences between doublings at those lower refresh rates -- so most vision-abled people are still seeing 240Hz benefits *if* they pay attention).
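The rule of thumb above can be written as a tiny calculation. This is a hedged sketch of one reading of it: the fastest comfortably trackable speed is taken as "longest dimension crossed in about 1 second", and the retina refresh rate is set numerically equal to that speed in pixels/sec. The `ability_factor` parameter is a made-up name for the doubling/halving mentioned for trained and untrained viewers, and the caveats listed below the rule still apply.

```python
def retina_refresh_hz(longest_dimension_px: float,
                      crossing_time_s: float = 1.0,
                      ability_factor: float = 1.0) -> float:
    """Ballpark 'retina' refresh rate from the edge-to-edge tracking rule of thumb.

    ability_factor is hypothetical: roughly 2.0 for well trained viewers,
    roughly 0.5 for viewers who barely notice differences.
    """
    fastest_tracked_speed = longest_dimension_px / crossing_time_s  # pixels/sec
    return fastest_tracked_speed * ability_factor


print(retina_refresh_hz(1920))                      # average viewer, 1080p
print(retina_refresh_hz(3840))                      # average viewer, 4K
print(retina_refresh_hz(1920, ability_factor=2.0))  # well trained viewer, 1080p
print(retina_refresh_hz(1920, ability_factor=0.5))  # least sensitive viewer, 1080p
```

These are fuzzy ballparks rather than hard numbers, as the post itself stresses.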

*Caveat; some assumptions made:

- Pixels are big enough to distinguish; and

- Object movement is not beyond human eye-tracking speed abilities (smooth eye pursuit); and

Retina resolution screens (like modern smartphone screens at 300-500+ dpi) where pixels are too tiny to individually identify, start to cap the retina refresh rate at some point. The point where the screen is just "almost retina resolution", probably also dictates the retina refresh rate thereafter too (for any higher resolution in the same-FOV screen). So a retina-resolution 4K smartphone screen will be bottlenecked by the tiny size of the screen & the tininess of pixels. Which would automatically lower the "retina" refresh rate -- it would be useless to have 3840 Hz on a 4K smartphone screen, for example, because you can't identify individual pixels (i.e. jaggies on a diagonal line) -- TestUFO rendered at 100% DPI scaling would yield extremely tiny UFOs that are hard to see details in, for example.

Easy Comparing For Average Users Usually Requires At Least Hz Doublings (or more)

While esports players can see small Hz improvements, the majority of average users can see framerate doublings when coached (e.g. showing A versus B). For example, 30fps vs 60fps, or 60fps vs 120fps, or 120fps vs 240fps (assuming GtG largely faster than Hz, since GtG overlapping multiple refresh cycles diminishes differences between refresh rates / frame rates). That's why the Blur Busters recommendation is the geometric curve upgrade -- 60Hz -> 120Hz -> 240Hz -> 480Hz -> 960Hz. The diminishing curve of returns requires more dramatic jumps in refresh rate & frame rate. So people also need to double frame rates while also doubling refresh rates if they're focussing on display motion blur (and/or stroboscopics). One can still benefit without upgraded refresh rates (e.g. 120fps at 240Hz is lower lag than 120fps at 120Hz) but human visible benefit (motion blur) requires frame rates to keep up with refresh rate.
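The diminishing curve of returns can be seen by looking at how much frame visibility time each doubling removes. A minimal sketch, assuming frame rate equals refresh rate, a non-strobed display, and GtG fast enough to ignore:

```python
def persistence_ms(hz: float) -> float:
    """Frame visibility time in milliseconds on a sample-and-hold display at fps = Hz."""
    return 1000.0 / hz


upgrade_path = [60, 120, 240, 480, 960]  # the geometric path recommended above

for previous, upgraded in zip(upgrade_path, upgrade_path[1:]):
    saved = persistence_ms(previous) - persistence_ms(upgraded)
    print(f"{previous}Hz -> {upgraded}Hz: {persistence_ms(upgraded):.2f} ms per frame, "
          f"{saved:.2f} ms less than before")
```

Each doubling halves the blur, but the absolute saving shrinks every step, which is why small increments become hard to see at high Hz.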

Now, if you're starting to hit near the edge of the diminishing curve, you may even need to triple or quadruple refresh rate. For example, on a smartphone, 240Hz-vs-480Hz may be hard to see for most Average Joe Users, but 240Hz-vs-1000Hz may be easy to see for Average Joe Users (flick scrolling). So more dramatic geometric upgrades to frame rates and refresh rates may be required -- and that's a very hard engineering problem, especially for a battery-powered-constrained device.

In some cases, not everyone sees it until coached to notice (e.g. eye-tracking text while flick-scrolling the screen). When coached this way, most can identify the difference between a 60Hz iPad and a 120Hz iPad, even if they didn't notice before. (As long as they've got at least average brain function & average vision)

As a result, more realistically, we'll hit retina refresh rates on lower resolution desktop displays (1080p) long before this happens on VR displays (where retina refresh rates are really high due to really high resolutions) and long before they come to mobile screens (due to battery requirements, etc).

This is why high Hz is not just for esports anymore; it's got mainstream ergonomic benefits (e.g. more comfortable web browser scrolling). As long as higher Hz becomes cheap, it'll eventually get gradually mainstreamed (like 120Hz slowly is for interactive content -- phones, tablets, consoles, etc)

Obviously, this all needs to be rolled into more formalized research -- and perhaps I'll collaborate with a researcher (feel free to contact me mark [at] blurbusters.com if any reader is a researcher). I've been cited in dozens of peer reviewed papers relating to display-temporal-topics...

-

valeriy l14

- Posts: 30

- Joined: 21 Mar 2021, 11:36

Re: Is motion blur directly related to strobe visibility or not?

Chief Blur Buster wrote: ↑27 Mar 2021, 01:41
Retina resolution screens (like modern smartphone screens at 300-500+ dpi) where pixels are too tiny to individually identify, start to cap the retina refresh rate at some point.

Wait, isn't 20/20, or 1 arcminute of visual acuity, just a normal level of vision? There seem to be people with 20/15 and 20/10 vision, and, although very rarely, some with 20/5 (apparently among Aboriginal people). For some reason I think that the limit of the eye is somewhere between 20/7 and 20/5.

It remains to be seen whether it is worth referring to vernier visual acuity here.

P.S. I myself have somewhere between 20/15 and 20/10, with a minimum accommodation distance of 10-15 cm.

Re: Is motion blur directly related to strobe visibility or not?

If we assume that motion blur comes from display persistence when tracking a moving object, then the necessary refresh rate for a sample-and-hold display can be calculated as:

RefreshRate = AngularSpeed / VisualAcuity

Under this condition, the motion blur trail from persistence will have the same length as the visual resolution. If the display does not have "retina" resolution, the visual acuity value used has to be the larger of the eye's visual acuity and the angular size of one pixel.

Typical visual acuity is said to be 1 arcminute, so this would give us a refresh rate of 50*60 = 3000 Hz for 50°/s angular speed. However, this is misleading since static visual acuity was used. In reality, visual acuity will get worse with increasing angular speed of the tracked object. I tried to illustrate this in the following figure, where 1 arcminute static visual acuity was assumed:

You can see that the refresh rate requirements increase at first, but they do not increase to infinity if we take dynamic visual acuity into account. Under the very simple model which I used, the maximum required refresh rate is around 1800 Hz at roughly 60°/s and decreases at higher angular speeds.

Please do not read too much into the actual values, since dynamic visual acuity depends on a lot of things (e.g. type of motion, time to lock on the target, test subject, etc.), but I wanted to demonstrate the idea behind it. In addition, the requirements to fix stroboscopic effects might be higher than this.
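For reference, the static-acuity form of the formula above can be sketched as follows. This only covers RefreshRate = AngularSpeed / VisualAcuity with a fixed acuity; the dynamic visual acuity model behind the ~1800 Hz figure is not given in the post, so it is not reproduced here. The function and parameter names are illustrative assumptions.

```python
ARCMIN_PER_DEGREE = 60.0


def required_refresh_hz(angular_speed_deg_per_s: float,
                        eye_acuity_arcmin: float = 1.0,
                        pixel_size_arcmin: float = 0.0) -> float:
    """Refresh rate at which the persistence blur trail equals the visual resolution.

    Uses the larger of the eye's acuity and the angular size of one pixel,
    as described for displays without 'retina' resolution.
    """
    effective_acuity = max(eye_acuity_arcmin, pixel_size_arcmin)
    angular_speed_arcmin_per_s = angular_speed_deg_per_s * ARCMIN_PER_DEGREE
    return angular_speed_arcmin_per_s / effective_acuity


print(required_refresh_hz(50.0))                         # 1 arcminute acuity at 50 deg/s
print(required_refresh_hz(50.0, pixel_size_arcmin=2.0))  # coarse pixels lower the requirement
```

Because acuity is held constant here, the result grows linearly with speed, which is exactly the overestimate the post warns about at high angular speeds.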

Re: Is motion blur directly related to strobe visibility or not?

This is only the FOV change requirement though; 133 max in Hyperscape is not very much, even though some people insist on setting their mouse pad to be a complete 360 (and I think that's too little FOV: either they should get a bigger pad keeping the same eDPI, or they should up their eDPI if the pad is already too big, and of course if they aren't stubborn as hell). That is to say, moving left and right gets them 180, then they lift it up; in any case, from a standstill they can react to any static target anywhere without lifting up.

- Chief Blur Buster

- Site Admin

- Posts: 12098

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: Is motion blur directly related to strobe visibility or not?

valeriy l14 wrote: ↑27 Mar 2021, 04:58
Wait, isn't a 20/20 or 1 arc minute visual acuity just a normal level of vision? It seems like there are people with vision of 20/15, 20/10, and even though very rarely there were those who have 20/5 (it seems they were aborigens), for some reason I think that the power of the eye is somewhere between 20/7 and 20 /5.

You certainly can measure via the arcminute instead -- that's more scientifically accurate than resolution, viewing distance, and FOV.

However, from a "Popular Science" perspective, it is easier to explain to laypeople that resolution amplifies refresh rate limitations and refresh rate amplifies resolution limitations, since a closer high resolution screen would have a similar retina refresh rate to a more distant but bigger high resolution screen (same FOV), assuming your focussing ability is about the same at both distances.

But you are right, it's more accurately based on the arcminute density of pixels, versus the arcminutes/sec eye tracking speed. That would be more universal, from a scientific/researcher POV.

The important thing is longer pursuit of visible objects. The fastest motion of an object that can fit in a screen pursuit -- while still giving the human time to identify motion artifacts within the said object -- and the number of pixels over that distance appear to dictate the retina refresh rate from the perspective of motion blur.

Now that being said, 300dpi, 500dpi and 1000dpi smartphone screens are usually indistinguishable to an average human when displaying everyday imagery like a panning retina-resolution photo scaled to the screen (i.e. not a synthetic test pattern designed to amplify resolution limitations, e.g. www.testufo.com/aliasing-visibility where you can see 4K limitations on a 24" display from 10 feet away!)

So the moment the screen pretty much caps out a person's visual acuity, it also simultaneously caps out the highest retina refresh rate (more or less).

There will be a curve-off effect in the diminishing curve of returns, for sure -- but for simplicity it will pretty much be plateaued, right there depending on your (superior/average/inferior) vision acuity in (whole or potentially fractions of) arcminutes, as well as eyetracking speed in (whole or potentially fractions of) arcminutes/sec.

- Chief Blur Buster

- Site Admin

- Posts: 12098

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: Is motion blur directly related to strobe visibility or not?

MCLV wrote: ↑27 Mar 2021, 06:36 You can see that refresh rate requirements increase at first, but they do not increase to infinity if we take dynamic visual acuity into account. Under the very simple model which I used, the maximum required refresh rate is around 1800 Hz at roughly 60°/s and decreases at higher angular speeds.

This be true.

That said, from a "display engineering requirements" point of view (what Hz do I need to engineer it at?) -- it will always be a max(AllResults) because real-world games will often be full of moving objects at all charted velocities.
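To make the max(AllResults) idea concrete, here is a toy sketch. The acuity curve below is purely a hypothetical stand-in (it is not MCLV's actual model), with its two parameters chosen only so the curve peaks near the figures quoted above. The required Hz is taken as eye-tracking speed divided by the finest detail still resolvable at that speed:

```python
def toy_dynamic_acuity_arcmin(speed_deg_per_sec,
                              static_acuity_arcmin=1.0,
                              knee_deg_per_sec=60.0):
    """Hypothetical acuity falloff: resolvable detail gets coarser as speed rises."""
    return static_acuity_arcmin * (1.0 + (speed_deg_per_sec / knee_deg_per_sec) ** 2)

def required_hz(speed_deg_per_sec):
    """Refresh rate at which motion per refresh equals the resolvable detail."""
    speed_arcmin_per_sec = speed_deg_per_sec * 60.0
    return speed_arcmin_per_sec / toy_dynamic_acuity_arcmin(speed_deg_per_sec)

# Content usually contains objects at many speeds, so engineer for the worst case.
charted_speeds_deg_per_sec = [10, 30, 60, 120, 240]
results = {v: required_hz(v) for v in charted_speeds_deg_per_sec}
engineering_target_hz = max(results.values())

for v, hz in results.items():
    print(f"{v:>3} deg/sec -> {hz:7.1f} Hz")
print(f"engineering target: {engineering_target_hz:.0f} Hz")
```

With these assumed parameters the requirement rises, peaks at 60 deg/sec, then falls again, but the display still has to be built for the peak.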

So you have to go straight to the weak link -- the maximum retina refresh rate discovered for the most Hz-revealing moving object in the worst-case content that the said "retina refresh rate" display would be displaying.

But very good observation: there will possibly be somewhat of a fall-off effect, since humans will start to eye-track fast-moving objects and almost fail to keep up gaze velocity (and still identify some artifacts).

Beyond a certain point, objects start moving too fast for a human to even bother attempting to eye-track them (like another human waving a hand fast in front of your eyes -- it's just real-world motion blur, generated by your human brain).

Now that said:

MCLV wrote: ↑27 Mar 2021, 06:36 Please do not read too much into the actual values, since the dynamic visual acuity depends on a lot of things (i.e. type of motion, time to lock on the target, test subject, etc.), but I wanted to demonstrate the idea behind it. In addition, requirements to fix stroboscopic effects might be higher than this.

Good point.

Yes, a bullet with a built-in flashing LED flying past your face -- (a tiny pinpoint flashing LED) speeding at 1 kilometer per second across your face with a light flashing at 1 MHz -- the phantom array effect could still be visible, with the phantom array 1mm apart (1 km/sec = 1mm in 1 microsecond). Obviously, way too fast to eye-track, but the phantom array effect is still there in these extreme circumstances.
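The bullet arithmetic is just distance travelled between flashes, i.e. speed divided by flash frequency. A minimal sketch (the function name is made up for illustration):

```python
def phantom_array_spacing_m(speed_m_per_sec, flash_hz):
    """Gap between successive phantom-array images for a fixed gaze."""
    return speed_m_per_sec / flash_hz

# 1 km/sec object with a light flashing at 1 MHz
spacing_m = phantom_array_spacing_m(1000.0, 1_000_000.0)
print(f"{spacing_m * 1000:.1f} mm between flashes")
```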

However, you can simply add artificial GPU motion blur to fix stroboscopics. Now, you simply need to roughly double the retina refresh rate (Nyquist sampling factor...) THEN add a tiny amount of GPU motion blur. It would be so little motion blur that it's beyond retina refresh rate. For example, if a desktop screen of a specific size and resolution becomes "retina refresh rate" at 4000Hz, then you'd double it to 8000Hz and then add 1/8000sec of GPU motion blur. That's only 1/8000th of a second of GPU motion blur. Like an SLR camera shutter at 1/8000sec.
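The doubling example above can be sketched as follows. The function name and the default oversampling factor of 2 are assumptions for illustration, following the rough "double it" rule of thumb rather than any exact threshold:

```python
from fractions import Fraction

def stroboscopic_fix(retina_hz, oversample=2):
    """Return (target refresh rate, GPU blur per frame in seconds)."""
    target_hz = retina_hz * oversample
    gpu_blur_sec = Fraction(1, target_hz)  # one full frametime of blur
    return target_hz, gpu_blur_sec

target_hz, blur_sec = stroboscopic_fix(4000)
print(f"run at {target_hz} Hz with {blur_sec} sec of GPU motion blur")
```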

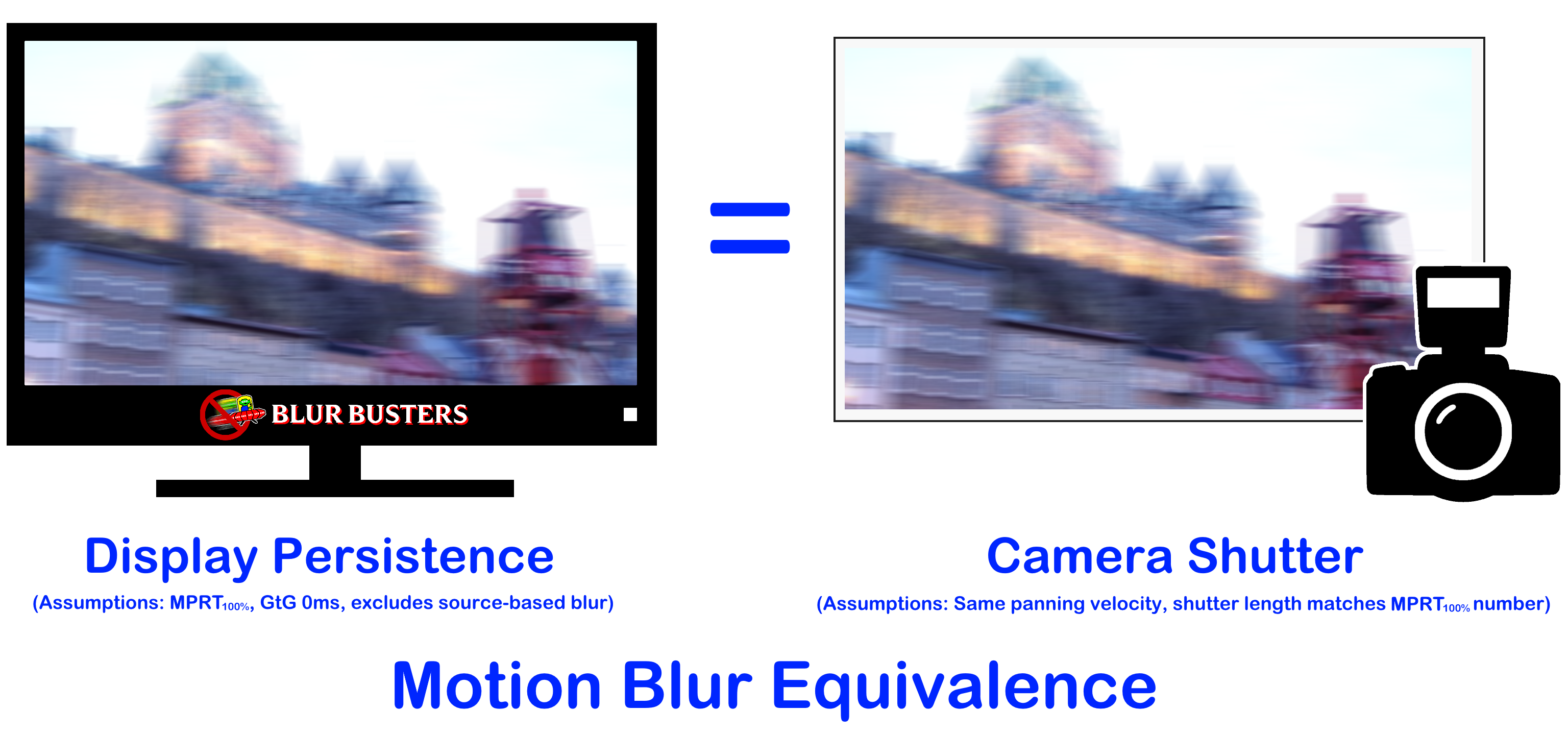

As explained at The Stroboscopic Effect of Finite Frame Rates as well as Pixel Response FAQ: GtG Versus MPRT, the two most important diagrams relevant to this thread would be the following.

Yes, a lot of us hate GPU motion blur. Sometimes it's nice for the Hollywood effect, but it does not work in virtual reality (motion blur creates nausea in VR). However, hear me out:

The above is an example of low-framerate GPU motion blur. At 30fps, 1/30sec of motion blur would be required to eliminate the stroboscopic effect. UGH -- many of us hate motion blur.

But... if we're already beyond retina refresh rate, then adding a tiny bit of GPU motion blur isn't even visible to human eyes from a motion blur perspective in these circumstances! It only serves to fix the stroboscopic effect for fixed-gaze-moving-ultrafast-objects situations. This way, objects can still move at 1000mph and still never show stroboscopic effects. Since these objects are impossible to eye-track at close distances, the only purpose of adding an ultra-tiny GPU motion blur is to fix stroboscopic effects at retina refresh rate.

Imagine an SLR camera at 1/8000sec for an 8000Hz display. Or 1/2500sec for a 2500Hz display. We already know how sharp camera photographs are during fast shutters, so this isn't objectionable GPU motion blur, since it's only fixing stroboscopic effects.

Now, that being said, we have to adjust both source persistence (camera shutter or GPU blur) and destination persistence (display) to create a retina refresh rate effect from the perspective of both motion blur as well as stroboscopic effects (and its cousins, "phantom array effect" and "wagon wheel effect").

Many high-Hz skeptics (mostly in the non-researcher community, who now increasingly recognize the benefits of high Hz for matching real life) claim that motion blur is natural. This is correct for many contexts like watching simple 2D films. I'm a big fan of the Hollywood Movie Maker 24fps effect -- I even write about this in UltraHFR FAQ: Real Time 1000fps on Real 1000Hz Displays.

BTW -- on a related note -- there's really just two favourite standards to me: Regular low frame rate 24fps, and retina frame rate (1000fps+). Intermediate HFR (48fps or 120fps) on sample-and-hold generates more motion-blur nausea than 24fps (traditional) or 1000fps (UltraHFR). This headache uncanny valley effect is most amplified in virtual reality -- that's why all VR headsets avoid sample-and-hold at current contemporary refresh rates in the 60Hz-240Hz range. This is because pseudo-HFR (48fps to 120fps) on sample-and-hold displays is a high enough frame rate to be beyond the flicker fusion threshold, but motion is still blurrier than real life. This feels vertigo-odd to many humans, and has a nauseating effect (smooth motion that still has motion blur). Many film connoisseurs (including me) would rather watch low frame rates (24fps), or go to 1000fps UltraHFR for sample-and-hold displays. If I watch 120fps HFR, I prefer it strobed instead of sample-and-hold. This is why VR headsets at these HFR refresh rate ranges tend to be strobed -- it creates fewer headaches than 120fps sample-and-hold HFR (ugh!). The same is true for large desktop gaming displays, especially with interactive content -- although less pronounced than VR, it affects a large percentage of gamers who get motion sickness during gaming.

From the UltraHFR FAQ

Chief Blur Buster wrote: Four Simple Fixes for Temporally Accurate Reality Simulation

To eliminate all weak links, so that the remaining limitations are human-vision based. No camera-based or display-based limitations above and beyond natural human vision/brain limitations. Therefore:

1. Fix source stroboscopic effect (camera): Must use a 360-degree camera shutter;

2. Fix destination stroboscopic effect (display): Must use a sample-and-hold display;

3. Fix source motion blur (camera): Must use a short camera exposure per frame;

4. Fix destination motion blur (display): Must use a short persistence per refresh cycle.

Therefore, to solve (1), (2), (3), (4) simultaneously requires ultra high frame rates at ultra high refresh rates.

- Ultra high frame rate with 360-degree camera shutter is also short camera exposure per frame;

- Ultra high refresh rate with sample-and-hold display is also short persistence per refresh cycle.

The GPU version of this would be to add GPU motion blur (as an equivalent of camera-based motion blur).

So for 1000fps 1000Hz, elimination of the stroboscopic effect would require 1/1000sec of GPU motion blur. The higher the frame rate, the smaller the GPU motion blur. So you just need to push the entire combination (display refresh rate, frame rate, and GPU motion blur) a little beyond retina refresh rates -- oversample a bit beyond vision acuity for motion pursuit situations -- and you're golden in completely eliminating stroboscopic effects without needing to raise retina refresh rates any further beyond this point.
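A small sketch of how source and destination persistence shrink together as the rate goes up, assuming a 360-degree shutter (or the equivalent full-frametime GPU blur) and a sample-and-hold display. The function names and the 1000 pixels/sec test speed are illustrative assumptions:

```python
def source_exposure_sec(fps, shutter_angle_deg=360.0):
    """Camera exposure (or equivalent GPU blur) per frame."""
    return (shutter_angle_deg / 360.0) / fps

def display_persistence_sec(refresh_hz):
    """Sample-and-hold: each frame stays visible for a full refresh cycle."""
    return 1.0 / refresh_hz

def blur_px(speed_px_per_sec, visible_time_sec):
    """Blur trail length for eye-tracked motion at the given speed."""
    return speed_px_per_sec * visible_time_sec

speed = 1000.0  # pixels/sec, hypothetical test speed
for rate in (30, 120, 1000):
    exposure = source_exposure_sec(rate)
    persistence = display_persistence_sec(rate)
    print(f"{rate:>4} fps/Hz: source blur {blur_px(speed, exposure):6.2f} px, "
          f"display blur {blur_px(speed, persistence):6.2f} px")
```

At 1000fps on 1000Hz, each side contributes only about 1 pixel of blur at 1000 pixels/sec, while the 360-degree shutter still leaves no gaps between frames.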

Also -- on a related note -- many researchers don't even realize the HFR uncanny valley effect (sample-and-hold 48fps-to-120fps HFR generating nausea), which also affects a lot of traditional interpolation. Even if the interpolation is perfect (no parallax artifacts), the blurry-HFR look of sample-and-hold HFR is pretty odd to many people. Strobed HFR is better, or going blurless sample-and-hold (aka 1000fps 1000Hz).

Special consideration: Now, there's one minor advantage of stroboscopic effects -- some people have gaming tactics that use momentary-object-identification of the stroboscopic effect to get a competitive advantage -- e.g. imagine the mouse arrows at www.testufo.com/mousearrow are instead a horizontally moving enemy -- the stroboscopic copies may also aid in enemy identification. But real life (in non-flickering situations, whether from flashing-light effects, or from picket-fence effects) doesn't generate stroboscopic effects. Still, that's one of the reasons a very small % of gamers prefer 144Hz over 240-360Hz -- a very small minority. But they do exist, gamers who intentionally use the stroboscopic effect as a preferred competitive advantage, instead of other advantages like reduced latency (higher Hz has lower lag) & reduced motion blur (higher Hz has less blur). But stroboscopics is an artificial gaming advantage (it is divergent from real-life motion) much like shadow-boost or false-color features. Besides, the latency savings and motion clarity of ultra-high-Hz exceed the small competitive advantage of a phantom array effect at lower Hz.

Example: The familiar mouse arrow stroboscopic effect, when spinning around a mouse cursor....

(but also a refresh-rate-limitation-reveal in games too, VR too, Holodecks too)

When we're mimicking real life (VR or Holodecks), we don't want any of this from a display! So, the brute force method to making display motion look like analog motion -- is ultra high frame rates at ultra high refresh rates. Newer VR scientists have schooled traditional greybeard/retired display scientists (who grew up in the CRT days) who don't quite understand the physics of sample-and-hold display motion blur. Refresh rate (to fix motion blur) is far more important for flickerless sample-and-hold displays like most modern flat panel displays.

Interesting discussion though. There's many rabbit holes and pandora's boxes to unpack here, of course.


Re: Is motion blur directly related to strobe visibility or not?

Chief Blur Buster wrote: ↑28 Mar 2021, 15:05 That said, from a "display engineering requirements" point of view (what Hz do I need to engineer it at?) -- it will always be a max(AllResults) because real-world games will often be full of moving objects at all charted velocities.

Yes, you have to take the highest value achieved in a range of speeds relevant for the particular application.

Chief Blur Buster wrote: ↑28 Mar 2021, 15:05 BTW -- on a related note -- there's really just two favourite standards to me: Regular low frame rate 24fps, and retina frame rate (1000fps+).

I think it really depends on the application. I'm not sure that it's so clear-cut and that the uncanny valley is always that wide. 24 fps definitely has its own look, but I have to say that I've never considered it good-looking in certain situations, e.g. panning shots. I made my own comparison of 24, 30, 60 and 120 fps video a few weeks ago. 24 fps looks great when the camera is static but falls apart during panning. Higher framerates look progressively better during panning. However, it was not a completely apples-to-apples comparison, since I recorded 24 and 30 fps in 4K and 60 and 120 fps only in 1080p. Unfortunately, I don't own any device capable of recording 4K at 120 fps.

My suspicion is that a major contribution to why HFR movies look bad and fake is that what you see on screen was in fact fake when the shot was taken. Hollywood does not build spaceships or kill people on set, and actors... well, they act. I think that HFR simply allows you to see all this fakeness more easily, while 24 fps leaves enough room for the brain to fill in the gaps by itself. So I don't think that we will see Hollywood switch to HFR anytime soon. However, documentaries, sports broadcasts, etc. would benefit from this, since the stuff in front of the camera is actually real. And in my opinion, currently available HFR options like 60 and 120 fps already provide such a benefit, so we don't have to wait until 1000 fps arrives in these applications. But I guess it's also a matter of taste, so your preferences might differ.