So, we have a display. I'll try to answer the question: “What minimum refresh rate would someone find acceptable?”

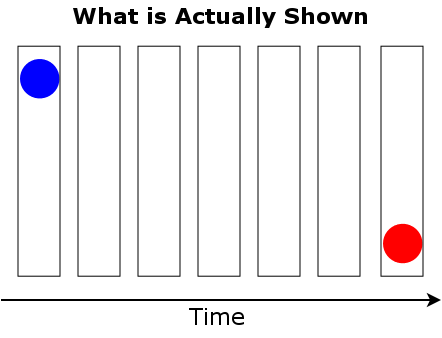

Motion perception: Motion perception is primarily a result of the phi phenomenon and beta movement. In phi, different images are shown in a single place, whereas in beta the images are shown in different locations. To perceive both illusions we need a minimum frame rate of around 20 Hz. This is one reason film settled on 24 Hz.

Illustrations:

Source: http://books.google.at/books?id=jzbUUL0 ... &q&f=false

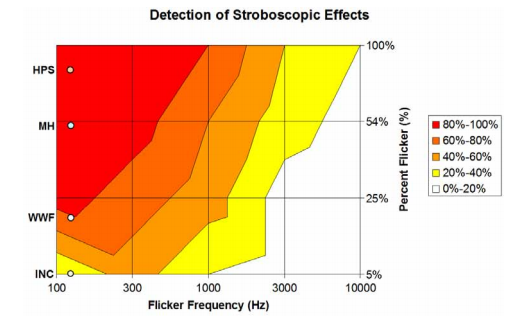

Flicker: To avoid eye-tracking motion blur, we lower the persistence duration of our display and rely on persistence of vision to 'hide' the blanking of the display. But if the refresh rate is too low, the change in brightness becomes visible as flicker. The threshold frequency is called the critical flicker fusion frequency, and it depends primarily on the brightness, area and location (in the visual field) of the illumination.

So 60 Hz is enough to avoid visible flicker in any situation, and the rate can be lower in controlled environments (e.g. a movie theater or VR).
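The persistence trade-off can be put into rough numbers. This is only a minimal sketch, assuming a simple strobed display that is lit for a fixed fraction of each refresh (the `duty_cycle` parameter is hypothetical, not a setting of any particular monitor) and the common rule of thumb that tracked motion smears by roughly persistence times on-screen speed:

```python
def frame_period_ms(refresh_hz: float) -> float:
    """Duration of one refresh cycle in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz


def persistence_ms(refresh_hz: float, duty_cycle: float) -> float:
    """Time the image is lit per refresh (duty_cycle is an assumed fraction in (0, 1])."""
    if not 0.0 < duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be in (0, 1]")
    return frame_period_ms(refresh_hz) * duty_cycle


def tracking_blur_px(lit_ms: float, speed_px_per_s: float) -> float:
    """Approximate smear width while the eye tracks an object (rule of thumb)."""
    return lit_ms / 1000.0 * speed_px_per_s


if __name__ == "__main__":
    hz = 60
    for duty in (1.0, 0.5, 0.1):  # illustrative duty cycles
        lit = persistence_ms(hz, duty)
        dark = frame_period_ms(hz) - lit
        blur = tracking_blur_px(lit, 1000.0)  # object moving at 1000 px/s
        print(f"{hz} Hz, {duty:.0%} lit: {lit:.2f} ms on, {dark:.2f} ms dark, ~{blur:.1f} px blur")
```

Shorter lit time means less blur but a longer dark interval per frame, and that dark interval is what shows up as flicker once the refresh rate drops below the fusion threshold.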

Source: http://webvision.med.utah.edu/book/part ... esolution/

Eye strain and headaches: While flicker above 60 Hz can't be detected consciously, it still has an effect on our visual cortex, especially over longer periods. Rhythmic potentials in the human ERG (electroretinogram) can be elicited by fluorescent lighting at frequencies as high as 147 Hz. Lower brightness, shorter exposure duration and ambient light are mitigating factors. Refresh rates above 75 Hz avoid most adverse effects, but higher rates still show improvements. 100 Hz is generally considered “flicker free” for displays.

Sources: http://web.mit.edu/parmstr/Public/NRCan/nrcc38944.pdf

http://lrt.sagepub.com/content/21/1/11.abstract

http://onlinelibrary.wiley.com/doi/10.1 ... x/abstract

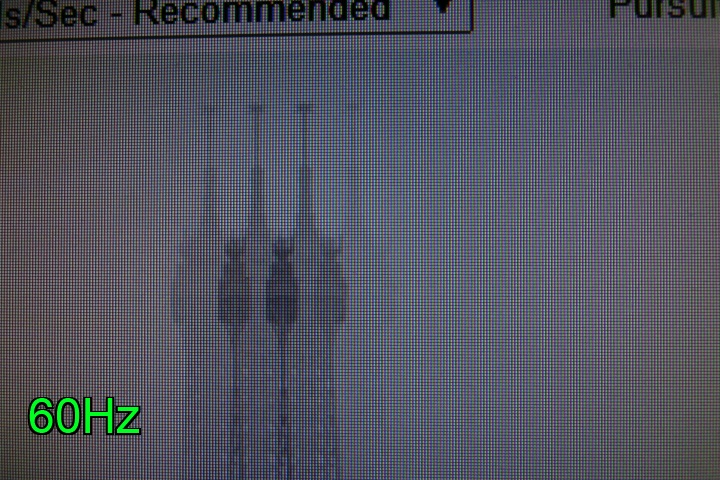

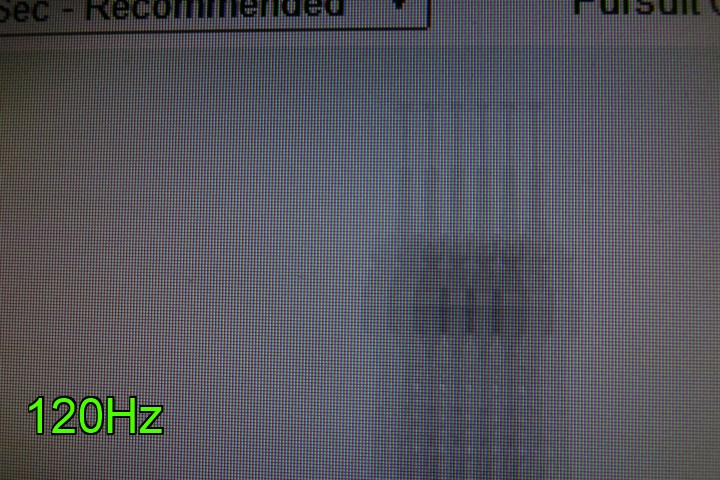

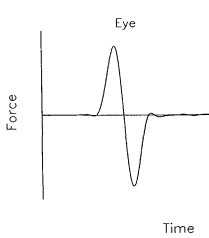

Phantom array effect: (An example of a higher-order issue.) Eyes constantly look around, scanning the scene for interesting parts. These quick, jerky, simultaneous eye movements are called saccades. The speed of a saccade depends on the angle traveled, with a maximum of 900°/s for angles above 60°.

Persistence of vision causes the image on the retina to blur during saccades, but saccadic masking suppresses the transmission of low-frequency information via the optic nerve during, and slightly before the beginning of, the saccade.

Ultra-low persistence (Oculus Crystal Cove, Lightboost 10%) can defeat saccadic masking, causing a judder-like effect.
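To get a feel for the scale involved, here is a rough sketch of how far the eye travels between two flashes of a strobed display during a saccade. It assumes a constant eye speed and exactly one flash per refresh, both simplifications (real saccades accelerate and decelerate); the function name is made up for illustration:

```python
def degrees_per_refresh(eye_speed_deg_per_s: float, refresh_hz: float) -> float:
    """Angle the eye sweeps between two consecutive display flashes."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return eye_speed_deg_per_s / refresh_hz


if __name__ == "__main__":
    peak_speed = 900.0  # deg/s, the maximum saccade speed quoted above
    for hz in (60, 75, 120, 1000):
        gap = degrees_per_refresh(peak_speed, hz)
        print(f"{hz:>4} Hz: flashes land about {gap:.2f} deg apart on the retina")
```

If masking fails, each flash is a sharp image at a different retinal position, so a larger gap between flashes means more widely separated phantom copies of the same object.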

Sources: http://opensiuc.lib.siu.edu/cgi/viewcon ... ontext=tpr

Here's what Valve experienced:

The first factor is the interaction of low persistence with saccadic masking. It’s a widespread belief that the eye is blind while saccading, and while the eye actually does gather a variety of information during saccades, it is true that normally no sharp images can be collected because the image of the real world smears across the retina, and that saccadic masking raises detection thresholds, keeping those smeared images from reaching our conscious awareness. However, low-persistence images can defeat saccadic masking, perhaps because saccadic masking fails when mid-saccadic images are as clear as pre- and post-saccadic images in the absence of retinal smear. At saccadic eye velocities (several hundred degrees/second), strobing is exactly what would be expected if saccadic masking fails to suppress perception of the lines flashed during the saccade.

One other factor to consider is that the eye and brain need to have a frame of reference at all times in order to interpret incoming retinal data and fit it into a model of the world. It appears that when the eye prepares to saccade, it snapshots the frame of reference it’s saccading from, and prepares a new frame of reference for the location it’s saccading to. Then, while it’s moving, it normally suppresses the perception of retinal input, so no intermediate frames of reference are needed. However, as noted above, saccadic masking can fail when a low-persistence image is perceived during a saccade. In that case, neither of the frames of reference is correct, since the eye is between the two positions. There’s evidence that the brain uses a combination of an approximated eye position signal and either the pre- or post-saccadic frame of reference, but the result is less accurate than usual, so the image is mislocalized; that is, it’s perceived to be in the wrong location.

Not long ago, I wrote a simple prototype two-player VR game that was set in a virtual box room. For the walls, ceiling, and floor of the room, I used factory wall textures, which were okay, but didn’t add much to the experience. Then Aaron Nicholls suggested that it would be better if the room was more Tron-like, so I changed the texture to a grid of bright, thin green lines on black, as if the players were in a cage made of a glowing green coarse mesh.

For the most part, it looked fantastic. Both the other player and the grid on the walls were stable and clear under all conditions. Then Atman Binstock tried standing near a wall, looking down the wall into the corner it made with the adjacent wall and the floor, and shifting his gaze rapidly to look at the middle of the wall. What happened was that the whole room seemed to shift or turn by a very noticeable amount. When we mentally marked a location in the HMD and repeated the triggering action, it was clear that the room hadn’t actually moved, but everyone who tried it agreed that there was an unmistakable sense of movement, which caused a feeling that the world was unstable for a brief moment. Initially, we thought we had optics issues, but Aaron suspected persistence was the culprit, and when we went to full persistence, the instability vanished completely. In further testing, we were able to induce a similar effect in the real world via a strobe light.

http://blogs.valvesoftware.com/abrash/d ... ng-judder/

Input lag: Input lag below 20 ms is generally considered imperceptible. The required refresh rate depends heavily on other sources of latency. Counter-Strike: GO at 144 Hz comes close with ~25 ms, and the requirement could be reduced further with alternative rendering methods, down to a theoretical minimum of 50 Hz.
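One plausible reading of the 50 Hz figure (my assumption about the arithmetic, not something the linked sources spell out) is that a full frame period has to fit inside the 20 ms budget when nothing else adds latency. A small sketch, with `other_latency_ms` as a hypothetical stand-in for everything besides the display refresh:

```python
def frame_period_ms(refresh_hz: float) -> float:
    """Duration of one refresh cycle in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz


def min_refresh_hz(budget_ms: float, other_latency_ms: float = 0.0) -> float:
    """Lowest refresh rate whose frame period fits in the remaining latency budget."""
    remaining = budget_ms - other_latency_ms
    if remaining <= 0:
        raise ValueError("other latency already uses up the whole budget")
    return 1000.0 / remaining


if __name__ == "__main__":
    print(f"144 Hz frame period: {frame_period_ms(144):.2f} ms")
    print(f"20 ms budget, no other latency: {min_refresh_hz(20.0):.0f} Hz")
    # 10 ms of other latency is an illustrative value, not a measurement
    print(f"20 ms budget, 10 ms elsewhere:  {min_refresh_hz(20.0, 10.0):.0f} Hz")
```

The second case shows why the required rate climbs quickly in practice: every millisecond spent in input sampling, rendering or scan-out shrinks the share of the budget left for the refresh interval.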

http://www.blurbusters.com/gsync/preview2/

http://www.altdevblogaday.com/2013/02/2 ... trategies/

Conclusion/TLDR: A minimum of ~20 Hz is required to perceive motion. For a low-persistence display, a minimum of 50-60 Hz is necessary to avoid flicker perception, but a refresh rate higher than 75 Hz might be necessary to avoid discomfort and/or reduce input lag. Ultra-low persistence causes additional issues that may necessitate even higher frame rates or alternative mitigation strategies (e.g. low-latency eye tracking to compensate for saccadic masking).