Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

I did some end-to-end testing with Apex Legends yesterday; the outcomes are:

- NULL, the NVIDIA limiter, and RTSS perform on par with each other: they reduce latency by very similar amounts. By "NVIDIA limiter" I mean NULL enabled plus a specific limit set in Profile Inspector, i.e. 97 FPS in these tests. "Just NULL" means no limit set in Profile Inspector; in my setup, NULL then automatically limits to 97.3 FPS.

- Using the in-game frame rate limiter with in-game V-SYNC disabled reduced latency by a further 10ms, making it the preferred option. Surprisingly, I do not experience tearing in Apex with these settings, whereas most other games would tear.

Details in my spreadsheet linked above.


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Vleeswolf wrote: I did some end-to-end testing with Apex Legends

I inspected your results.

[ https://docs.google.com/spreadsheets/d/ ... edit#gid=0 ]

While your math appears to be about right (100Hz = 10ms frametime x frame delay = input lag in ms), your setup isn't capable of capturing <1 frame differences, and your keyboard/LED method could very well be equalizing samples more often than not; you actually need to do a high-speed test of the LED's reaction first to see how much lag/variance it has from press to light-up.

Currently, too many of your samples within each scenario are either identical or very close from press to press. That tells me most of your results are being lost/masked by your equipment's and methodology's error margin, because with a capable high-speed test setup, results often vary greatly from sample to sample (thus the need for a min, max, and average, computed over many samples).

The reason it's detecting the reduction in the in-game limiter scenario is that there is more than 1 frame of input lag difference vs. the other scenarios. Your current equipment and testing methodology will only be able to capture scenarios that differ by 2 or more frames (and even less the higher the refresh rate you go, since each frame is worth less and less time), and it will only partially capture those differences.

I attempted something similar with a phone camera (240 FPS, 720p slo-mo capture) when I was first starting out (viewtopic.php?f=5&t=3055&start=50#p22913), and all it did was confirm that uncapped V-SYNC had more input lag than capped G-SYNC, and it didn't even report the right number, it was just enough to tell me there was a difference.

Have you happened to read this page of the article where I describe my test methodology? It may help you improve yours if you're serious about continuing your tests:

https://www.blurbusters.com/gsync/gsync ... ettings/3/

My original test spreadsheet is also available for inspection here:

https://onedrive.live.com/view.aspx?cid ... 50hiq4wuf8&

Finally, you want to ensure you are capturing the same number of samples from scenario to scenario, that you have at least 20 samples per scenario (the less accurate your equipment, the more samples you need), and that the test game isn't further equalizing and/or randomizing your results through the chosen test actions/animations.

(jorimt: /jor-uhm-tee/)

Author: Blur Busters "G-SYNC 101" Series

Displays: ASUS PG27AQN, LG 48C4 Scaler: RetroTINK 4k Consoles: Dreamcast, PS2, PS3, PS5, Switch 2, Wii, Xbox, Analogue Pocket + Dock VR: Beyond, Quest 3, Reverb G2, Index OS: Windows 11 Pro Case: Fractal Design Torrent PSU: Seasonic PRIME TX-1000 MB: ASUS Z790 Hero CPU: Intel i9-13900k w/Noctua NH-U12A GPU: GIGABYTE RTX 4090 GAMING OC RAM: 32GB G.SKILL Trident Z5 DDR5 6400MHz CL32 SSDs: 2TB WD_BLACK SN850 (OS), 4TB WD_BLACK SN850X (Games) Keyboards: Wooting 60HE, Logitech G915 TKL Mice: Razer Viper Mini SE, Razer Viper 8kHz Sound: Creative Sound Blaster Katana V2 (speakers/amp/DAC), AFUL Performer 8 (IEMs)


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Thanks for the review, Jorimt.

I agree that the Caps Lock light method was not evaluated; it would surprise me, though, if it were much different from connecting an LED to a mouse button.

Obviously the 240 FPS phone camera gives a less precise measurement than a 1000 FPS camera. My formula is actually 1000/240 = 4.2 ms × the number of camera frames from input to output; I'm not sure why you say 10 ms per frame. With 4.2 ms steps it is possible to measure differences smaller than 1 frame, at least at 100Hz.
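The conversion described above can be sketched as a one-line helper: each camera frame spans 1000/240 ≈ 4.17 ms, so a count of camera frames maps to milliseconds by multiplication, and the same helper at the display's 100Hz gives the 10 ms-per-frame reading. The name `frames_to_ms` and the 7-frame count are hypothetical, and the sketch covers only the unit conversion, not LED or scanout error.

```python
def frames_to_ms(frame_count: float, frames_per_second: float) -> float:
    """Convert a count of frames at a given rate into milliseconds."""
    return frame_count * 1000.0 / frames_per_second

# One 240 FPS camera frame: the smallest step this capture setup can resolve.
camera_step_ms = frames_to_ms(1, 240)   # ~4.17 ms
# One display refresh at 100Hz, for comparison.
refresh_step_ms = frames_to_ms(1, 100)  # 10 ms

# Hypothetical example: 7 camera frames counted between input and on-screen change.
lag_ms = frames_to_ms(7, 240)           # ~29.2 ms
```

Any single capture is still quantized to whole camera frames, so a sub-frame difference between scenarios can only emerge from averaging many samples.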

Another valid point is that more samples are needed. Indeed, if only there were more hours in the day. I do calculate the sample mean and, additionally, the confidence bounds (t-test) at the 80% and 95% confidence levels.
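For anyone wanting to reproduce such bounds, here is a minimal standard-library sketch of a two-sided Student's t interval around the sample mean. It assumes independent, roughly normal samples, and finds the critical value by numerically integrating the t density and bisecting rather than calling a stats package. The function names and the sample values are illustrative, not taken from the spreadsheet.

```python
import math
import statistics

def _t_mass_from_zero(x: float, df: int, steps: int = 2000) -> float:
    """P(0 <= T <= x) for Student's t with df degrees of freedom (x >= 0), via Simpson's rule."""
    coef = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)) / math.sqrt(df * math.pi)

    def density(u: float) -> float:
        return coef * (1 + u * u / df) ** (-(df + 1) / 2)

    h = x / steps  # steps is even, as composite Simpson's rule requires
    total = density(0.0) + density(x)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * density(i * h)
    return total * h / 3

def t_critical(confidence: float, df: int) -> float:
    """Two-sided critical value t* with P(-t* <= T <= t*) = confidence, found by bisection."""
    target = confidence / 2  # by symmetry, the mass between 0 and t* is half the confidence
    lo, hi = 0.0, 100.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if _t_mass_from_zero(mid, df) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def mean_confidence_interval(samples, confidence):
    """Return (mean, lower, upper) for the mean of samples using a t interval."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean = statistics.mean(samples)
    standard_error = statistics.stdev(samples) / math.sqrt(n)
    half_width = t_critical(confidence, n - 1) * standard_error
    return mean, mean - half_width, mean + half_width

# Illustrative numbers only, not measurements from this thread.
mean, low, high = mean_confidence_interval([10.0, 12.0, 14.0], 0.95)
```

With few samples the t critical value is large (about 4.30 for 3 samples at 95%), which is one concrete way to see why more samples per scenario tighten the bounds.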


I appreciate that there are far better setups available for this, though they also require all kinds of specific hardware. In the future, however, I'm probably going to look at more programmatic approaches. PresentMon is a nice step in that direction, but it only measures what happens to Present calls. Lag reduction techniques that act in-engine before Present is even called are not measured by PresentMon (the Source engine limiter is one such example).

I made the following plot for several traces of Apex Legends with various settings. The MsUntilDisplayed measure (blue line) is essentially the component of frame latency caused by the present pipeline. It's clearly much higher when V-SYNC is ON and the game is hitting the refresh rate (100Hz), i.e. the top-right graph. In all the other graphs this latency component sits around 8-9 ms despite the various settings and limiters used. Based on the end-to-end measurements, though, we know in-game capping results in lower overall latency; unfortunately, PresentMon cannot see that difference. It would be great if we could somehow timestamp game inputs and connect them to PresentMon's traces... Maybe Windows ETW has a way to do it; I'm not knowledgeable enough to say, but I'll look into it.
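As a rough illustration of pulling the MsUntilDisplayed component out of a trace, here is a minimal sketch that averages it from a PresentMon CSV. It assumes the column names of PresentMon 1.x output (`MsUntilDisplayed`, `Dropped`) and that dropped frames are flagged with `Dropped` = 1; later PresentMon releases renamed several columns, so check the header of your own capture. Like PresentMon itself, it sees nothing that happens before Present is called.

```python
import csv
import io
import statistics

def mean_ms_until_displayed(csv_text: str) -> float:
    """Average MsUntilDisplayed over displayed frames in a PresentMon 1.x style CSV."""
    reader = csv.DictReader(io.StringIO(csv_text))
    values = []
    for row in reader:
        # Assumption: dropped frames are flagged with Dropped == "1" and have no display time.
        if row.get("Dropped", "0").strip() == "1":
            continue
        value = (row.get("MsUntilDisplayed") or "").strip()
        if value:
            values.append(float(value))
    if not values:
        raise ValueError("no displayed frames with MsUntilDisplayed found")
    return statistics.mean(values)

# Tiny hand-written example with made-up values, not a real capture.
example = """Application,Dropped,MsBetweenPresents,MsUntilDisplayed
game.exe,0,10.3,8.5
game.exe,1,10.1,0
game.exe,0,10.2,9.5
"""
print(mean_ms_until_displayed(example))  # prints 9.0
```

For a real capture, pass the file contents, e.g. `open(path, newline="").read()`.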

Cheers,

Vleeswolf


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Vleeswolf wrote: it would surprise me if it really was much different than connecting an LED to a mouse button though.

There certainly can be.

In my original forum tests, I validated with my 1000 FPS camera that when tapping into the Razer chroma function using a custom program to light up my mouse scroll wheel LED on click, I got anywhere from 9-10ms of delay from click to light up, as opposed to the external LED that the Chief hardwired to my mouse, which only had a <1ms variance.

Seeing as you're relying on your keyboard's existing software, which usually isn't tuned for responsiveness or consistency in this respect (it isn't expected or intended to be used for this purpose), that's a big unknown, and it is definitely influencing the final results. By contrast, for my forum test I optimized the light-up responsiveness as much as possible through a custom program, and even those tests weren't as accurate as my article tests.

Vleeswolf wrote: My formula is actually 1000/240 = 4.2 ms * frames count from input to output, not sure why you say 10 ms per frame.

Yes, sorry, typo: *(240 FPS = 4.2ms frametime x frame delay = input lag in ms). That's at least how I was calculating in my original 240 FPS tests (which I found weren't worth much where accuracy was concerned, which is why I moved on to a 1000 FPS camera).

So you're saying you multiply 4.2ms by the total number of delayed frames captured?

I ask because I originally, absentmindedly, stated "(100Hz = 10ms frametime x frame delay = input lag in ms)", since that's the only way I could get your numbers to line up when comparing your "LAG (game frames)" column with your "LAG (msec)" column. So I now assume the "LAG (game frames)" numbers must be expressed in 10ms frames at 100Hz, e.g. 2.8 frames = ~28ms, right?

If so, even though it's technically correct, I'd suggest you discard that column, as it confuses things (the start/stop and ms numbers are enough).

Vleeswolf wrote: With 4.2 ms it is possible to measure within 1 frame differences, at least at 100Hz.

And I'm suggesting to you that it may not be. The frame differences you're trying to capture are 10ms each (100Hz), and there's only a 5.8ms frametime difference between your monitor and your capture device.

So while it can show a difference, it can't show an exacting or complete one.

For instance, let's take two of your Apex scenarios (also, was this with G-SYNC? And why do you have V-SYNC listed as "Game" for some and "None" for others when comparing like-for-like scenarios? The scenarios should be identical but for the framerate limiter type for proper comparison, at least if you're trying to isolate the differences between the limiters):

IN-GAME @97 FPS = min: 13ms, max: 21ms, avg: 18.43ms

NULL + NVI @97 FPS = min: 17ms, max: 29ms, avg: 25.6ms

Difference = 7.17ms.

That's not a full frame at 100Hz (10ms). So, again, if the difference turns out to be a frame or more with a more accurate setup (1000 FPS), that will show your camera may only be able to partially capture the lag difference between the two scenarios.
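The arithmetic in this comparison can be checked with a short sketch that expresses the gap between the two averages as a fraction of one 100Hz refresh (10 ms) and as a count of 240 FPS camera frames (about 4.17 ms each). The two averages are the ones quoted above; the helper name `describe_gap` is illustrative.

```python
IN_GAME_AVG_MS = 18.43   # IN-GAME @97 FPS average, from the results above
NULL_NVI_AVG_MS = 25.6   # NULL + NVI @97 FPS average, from the results above
REFRESH_HZ = 100         # display refresh rate in the test
CAMERA_FPS = 240         # phone slow-motion capture rate

def describe_gap(a_ms: float, b_ms: float, refresh_hz: float, camera_fps: float):
    """Return the gap in ms, in display refreshes, and in camera frames."""
    gap_ms = abs(b_ms - a_ms)
    return gap_ms, gap_ms / (1000 / refresh_hz), gap_ms / (1000 / camera_fps)

gap_ms, refreshes, camera_frames = describe_gap(
    IN_GAME_AVG_MS, NULL_NVI_AVG_MS, REFRESH_HZ, CAMERA_FPS
)
# gap_ms ~7.17, refreshes ~0.72 (under one 10 ms frame), camera_frames ~1.72
```

A gap of under two camera frames is the kind of difference a single 240 FPS capture can only partially resolve.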

I also forgot to ask: are you capturing with your phone in portrait or landscape? If the former, you're introducing even more potential inconsistencies, as the camera's scanout is then going left/right, as opposed to the display's top/bottom. Either way, the camera and display scanouts aren't synced, which causes further inaccuracy. That's another reason it's important for the capture device's scanout orientation to match the captured display, and for the capture device's FPS to be that much higher than the display's refresh rate, to compensate for the desynchronization of the two scanouts.

In other words, the lower the refresh rate you're capturing with your 240 FPS camera, the more accurate it will be (e.g. <60Hz), thus the need for a higher FPS capture device when we're talking about <1 frame differences at higher refresh rates.
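To illustrate why capture rate matters, here is a simplified Monte Carlo sketch of timestamp quantization only: each event is recorded on the first camera frame at or after it, with the camera's frame phase random relative to the input. It deliberately ignores scanout direction, rolling shutter, and LED response, so it understates real-world error. The function names and the 23.4 ms "true" latency are hypothetical.

```python
import math
import random

def observed_time(true_ms: float, period_ms: float, phase_ms: float) -> float:
    """Time of the first camera frame at or after true_ms (frames at phase + k * period)."""
    k = math.ceil((true_ms - phase_ms) / period_ms)
    return phase_ms + k * period_ms

def quantization_errors(true_latency_ms: float, camera_fps: float, samples: int, seed: int = 0):
    """Measured-minus-true latency for repeated captures with random camera phase."""
    rng = random.Random(seed)
    period = 1000.0 / camera_fps
    errors = []
    for _ in range(samples):
        phase = rng.uniform(0.0, period)
        t_in = rng.uniform(0.0, 1000.0)  # input happens at an arbitrary moment
        t_out = t_in + true_latency_ms
        measured = observed_time(t_out, period, phase) - observed_time(t_in, period, phase)
        errors.append(measured - true_latency_ms)
    return errors

for fps in (240, 1000):
    errs = quantization_errors(23.4, fps, samples=20)
    print(fps, "FPS worst-case error (ms):", round(max(abs(e) for e in errs), 2))
```

Each measurement lands on a whole number of camera frames, so the per-sample error is bounded by one camera period: about 4.17 ms at 240 FPS versus 1 ms at 1000 FPS.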

It's the reason I said you should test Overwatch or CS:GO, if possible; Battle(non)sense and I have repeatedly captured data in Overwatch (at 1000 FPS with well practiced and repeatedly validated methods) that you can directly compare, and depending on how well your results match ours, we'll know how capable (or incapable) your setup is.

With the limitations of your equipment, you need a control to get results approaching anything close to accurate, and even then, you may ultimately have to take them with a grain of salt.

Don't get me wrong here, I'm not trying to dissuade you from further testing; I'm trying to help you get it right. There's a reason not many people perform high-speed tests: they're absurdly time-consuming (it took me over 2 months to manually generate the data in my article, 2 weeks of which went into getting my methodology down) and full of "gotchas" that can invalidate your results in no time if you're not careful (something I know all too well from my own experience).

(jorimt: /jor-uhm-tee/)


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Finished reading this thread.

Thanks jorimt for going through and explaining this stuff for us.


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Happy to @nodicaL.

Also, on a more general note here, I'd ultimately like to compare these scenarios myself in a variety of games if I am ever able to acquire more capable equipment, as while what I used in my G-SYNC 101 article was effective (and ultimately sufficient) for the subject matter (V-SYNC-induced input lag), it was really only achieved through brute force.

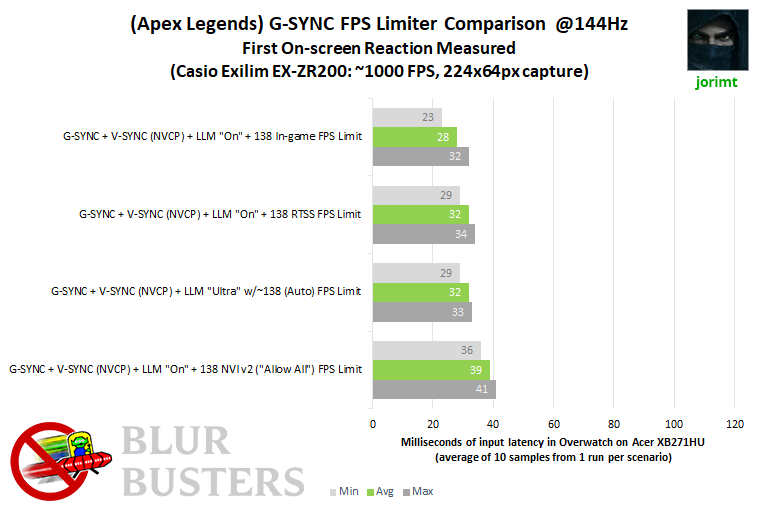

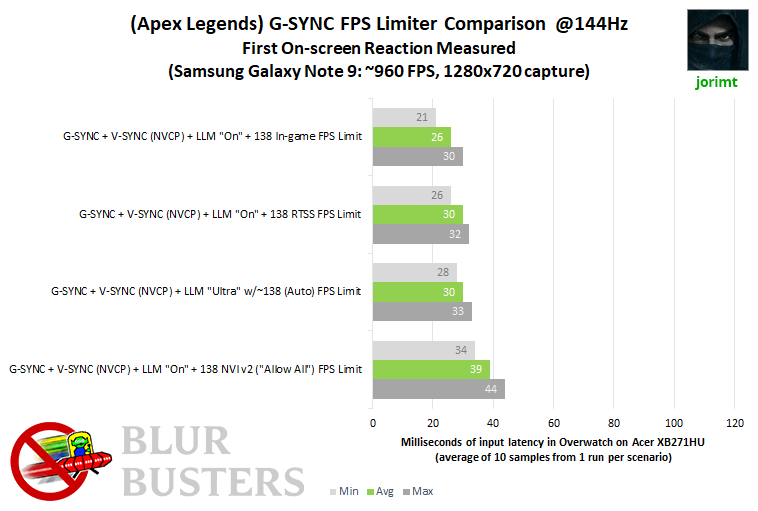

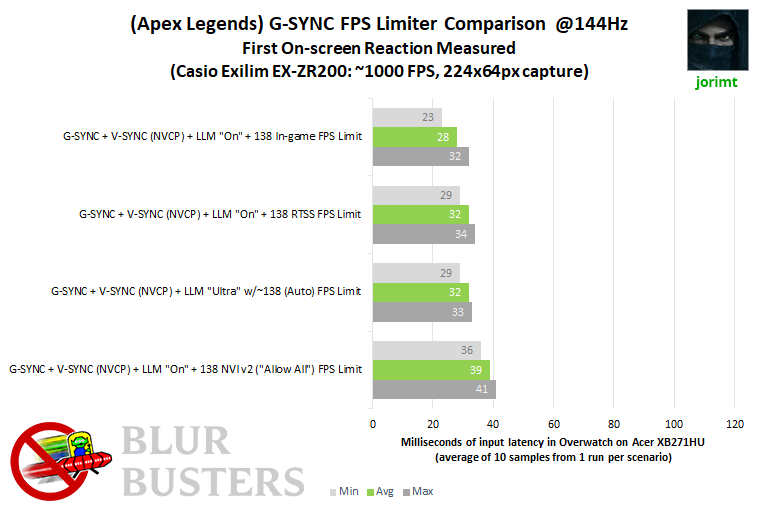

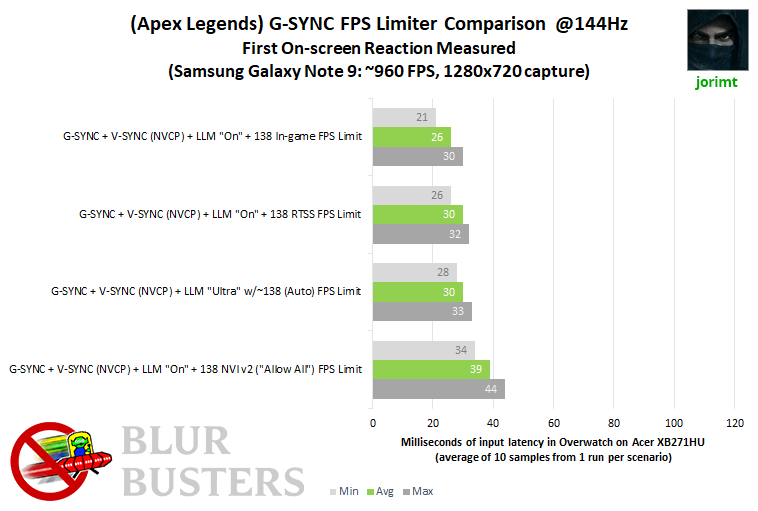

The number of test scenario combinations required, and my current camera's low resolution (1000 FPS, 224x64px), would make testing in a variety of different games very impractical, since they don't always offer such controlled conditions (cluttered scenery that makes screen changes difficult to spot, poor remapping options that don't allow strafe-on-click, character animations with slow response times and/or reactive variance from action to action).

As @Vleeswolf previously suggested, there may indeed be conditions where NULL and RTSS (with G-SYNC) perform with similar, maybe even virtually identical, input latency, but we only have one data point (for G-SYNC + NULL) to go by: Battle(non)sense's recent test chart that I've embedded a couple of times here, which shows it may have more input lag than RTSS (at least in that one game with "Reduced Buffering" disabled).

I've been doing some (very, very) casual testing on this (only 10 samples per scenario across a handful of scenarios) with both my original 1000 FPS test camera and my Note 9 ("960 FPS" mode at 720p; I have yet to validate its accuracy, but it's been fun to play around with due to the increase in fidelity), so if I find anything worth sharing, I will eventually post it here.


(jorimt: /jor-uhm-tee/)


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Test specs:

OS: Windows 10 Pro 64-bit (1909)

Nvidia Driver: 441.34

Display: Acer Predator XB271HU (27" 144Hz G-Sync @1440p)

Motherboard: ASUS ROG Maximus X Hero

Power Supply: EVGA SuperNOVA 750W G2

CPU: i7-8700k @4.3GHz (Hyper-Threaded: 6 cores/12 threads)

Heatsink: H100i v2 w/2x Noctua NF-F12 Fans

GPU: EVGA GTX 1080 Ti FTW3 GAMING iCX 11GB (1936MHz Boost Core Clock)

Sound: Creative Sound Blaster Z

RAM: 32GB G.SKILL TridentZ DDR4 @3200MHz (Dual Channel: 14-14-14-34, 2T)

SSD (OS): 500GB Samsung 960 EVO NVMe M.2

HDD (Games): 5TB Western Digital Black 7200 RPM w/128MB Cache

Test game:

Apex Legends, all settings "low/disabled" but for textures at "Insane" (GPU usage: ~80%)

These results are by no means comprehensive or conclusive (let alone article-worthy), but interesting anecdotally nonetheless:

It appears that NULL and RTSS have the same input lag levels with G-SYNC in this game with these test settings.

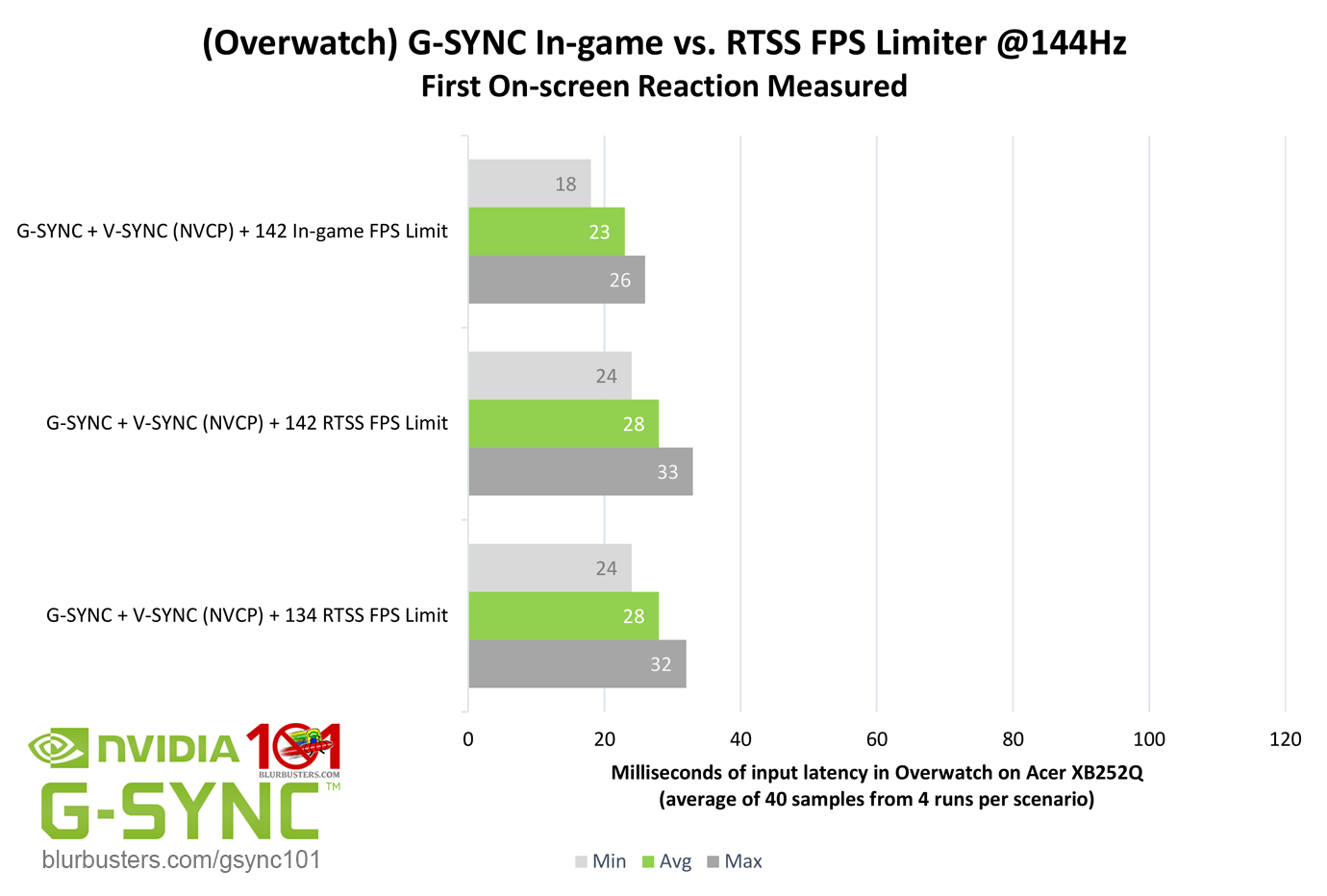

As for Battle(non)sense's results, it may be that with Overwatch's "Reduced Buffering" setting "Off," it increases input latency for external FPS limiters across the board without increasing the in-game limiter's input latency proportionately, which might be what we're seeing there (I originally tested Overwatch with that setting enabled).

I'll also point out that even in cases where NULL may be as low latency as RTSS, you still can't manually control how high/low it limits the FPS due it's auto-limiting function (which is a steep reduction at 240Hz currently, for instance). That, and NULL currently doesn't support DX12 or Vulkan, so my existing (recently updated) Optimal G-SYNC Settings still stand for the moment:

https://www.blurbusters.com/gsync/gsync ... ttings/14/

OS: Windows 10 Pro 64-bit (1909)

Nvidia Driver: 441.34

Display: Acer Predator XB271HU (27" 144Hz G-Sync @1440p)

Motherboard: ASUS ROG Maximus X Hero

Power Supply: EVGA SuperNOVA 750W G2

CPU: i7-8700k @4.3GHz (Hyper-Threaded: 6 cores/12 threads)

Heatsink: H100i v2 w/2x Noctua NF-F12 Fans

GPU: EVGA GTX 1080 Ti FTW3 GAMING iCX 11GB (1936MHz Boost Core Clock)

Sound: Creative Sound Blaster Z

RAM: 32GB G.SKILL TridentZ DDR4 @3200MHz (Dual Channel: 14-14-14-34, 2T)

SSD (OS): 500GB Samsung 960 EVO NVMe M.2

HDD (Games): 5TB Western Digital Black 7200 RPM w/128MB Cache

Test game:

Apex Legends, all settings "low/disabled" but for textures at "Insane" (GPU usage: ~80%)

These results are by no means comprehensive or conclusive (let alone article-worthy), but interesting anecdotally nonetheless:

It appears that NULL and RTSS have the same input lag levels with G-SYNC in this game with these test settings.

- RTSS/NULL have 0.5 - 1 frame more input lag than the Apex Legends (Source engine's) in-game limiter.

- NVI v2 has up to 2 frames more input lag than the Apex Legends (Source engine's) in-game limiter.

As for Battle(non)sense's results, it may be that with Overwatch's "Reduced Buffering" setting "Off," it increases input latency for external FPS limiters across the board without increasing the in-game limiter's input latency proportionately, which might be what we're seeing there (I originally tested Overwatch with that setting enabled).

I'll also point out that even in cases where NULL may be as low latency as RTSS, you still can't manually control how high/low it limits the FPS due it's auto-limiting function (which is a steep reduction at 240Hz currently, for instance). That, and NULL currently doesn't support DX12 or Vulkan, so my existing (recently updated) Optimal G-SYNC Settings still stand for the moment:

https://www.blurbusters.com/gsync/gsync ... ttings/14/

(jorimt: /jor-uhm-tee/)


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

@jorimt I appreciate the post and would like to ask some questions, if you don't mind answering. I've been lurking for months and finally spent the past couple of weeks fully optimizing my computer. I appreciate people like you who put in the time to help everyone else out. It has seemed for a while, including in Battle(non)sense's videos, that people generally use RTSS for its stability, and that it adding only 1 frame of input lag, especially at higher frame rates, is not a big deal for a lot of people. Well, I'm trying to achieve the best for me personally, so here are my questions.

First: based on your findings, NULL "On" + in-game limiter was the best, and RTSS and NULL "Ultra" + V-SYNC + G-SYNC (auto-cap) were the same. I have a theory that maybe you can answer. Based on my knowledge, NULL "Ultra" vs. "On", if you are not hardware-capped, should in theory give one less frame of input lag. Have you found that in your testing, or do you only test with G-SYNC + V-SYNC on? I could be wrong, and I wish I had a high FPS camera to test, but if that's the case, then: you found you got on average 4ms more input lag by adding 1 frame of buffering (RTSS). If what I know is correct, then by enabling NULL "Ultra" you should in theory have gotten the same amount in the opposite direction (24ms avg with NULL Ultra + V-SYNC + G-SYNC), whereas you got 28ms with NULL "On" and 32ms with RTSS. If that's right, and you found that NVI v2 caused 2 frames of input lag, you might see where I'm going with this: with NULL "Ultra" + V-SYNC + G-SYNC, the auto-cap Nvidia applies could very possibly use that same limiter to limit the FPS. You would take the 24ms you SHOULD get with an in-game cap, add 2 frames × 4ms, and get a 32ms average, which is EXACTLY what you got with the auto-cap. If you were to test G-SYNC + NULL "Ultra" without V-SYNC, so that it doesn't auto-cap, I would guess you'd get the 24ms, which would be the best of all (performance-wise). So, hoping I'm correct: have you found that changing which limiter option is used in Nvidia Profile Inspector reduces input lag at all, or do they all add 2 frames of input lag? I know you guys like G-SYNC with V-SYNC on; I'm just looking for the best.

Secondly, have you found in your tests that most modern esports games, like Overwatch, R6 Siege, and so on, have similar input lag using the in-game cap at the same FPS, or have you found that some games somehow work better with RTSS?

This is my first post, so sorry if I got things wrong, but hopefully I helped and we can figure this stuff out together!


Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

If your system is GPU-bound (>99% GPU usage), then, not counting V-SYNC input lag, NULL will in the best cases reduce input lag by 1 additional frame, G-SYNC or no G-SYNC.

That said, in my scenarios posted here, my system was not GPU-bound, and I was preventing V-SYNC input lag by limiting the framerate, so these scenarios are only testing the difference in input lag between the capping function of the FPS limiters (including NULL).

Zaxeq wrote (27 Nov 2019, 17:35): ...with NULL ultra, vsync and gsync the auto cap that nvidia does could very possibly use that same limiter to limit the fps... have you found nvidia profile inspector changing which limiter option it is reduces input lag at all or are they all 2 frames of input lag?

Yes, I'm pretty sure Nvidia is using their driver-level FPS limiter for the NULL auto-cap. That said, in off-the-record tests that I did not post alongside the most recent ones here, I did test the NVI v1 and NVI "Default" limiter options, and, so far, they had the same input lag as NVI v2.

For whatever reason, NULL's auto-cap is the only Nvidia limiting function that has matched RTSS input lag levels (in Apex, at least) in my testing thus far. I'd have to test more to narrow anything else down, and I don't plan to anytime soon.

As for G-SYNC + LLM "Ultra" + V-SYNC "Off," without the auto FPS limit, that would effectively be identical to G-SYNC + LLM "On" + V-SYNC "Off," and at any point your FPS exceeded your refresh rate, you would leave the G-SYNC range and have full tearing.

In other words, if I remove the framerate limiting aspect from NULL with G-SYNC, I can't compare it to the other FPS limiters any more.
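A simplified Python model of the G-SYNC behavior described above. The auto-cap formula (refresh - refresh^2/3600) is an assumption: it's a community-derived fit to observed NULL caps, not something NVIDIA documents (for 100Hz it gives about 97.2, close to the ~97.3 cap reported earlier in the thread). The model ignores the lower end of the G-SYNC range entirely, and the function names are hypothetical.

```python
def null_auto_cap(refresh_hz: float) -> float:
    """Approximate FPS cap applied by NULL "Ultra" with G-SYNC + V-SYNC.

    ASSUMPTION: community-derived approximation, not an NVIDIA-documented formula.
    """
    return refresh_hz - refresh_hz ** 2 / 3600.0


def gsync_outcome(uncapped_fps: float, refresh_hz: float,
                  vsync_on: bool, llm_ultra: bool) -> tuple[float, bool]:
    """Return (effective FPS, tearing?) under a simplified G-SYNC model."""
    fps = uncapped_fps
    if llm_ultra and vsync_on:
        # The auto-cap is only applied with G-SYNC + V-SYNC + LLM "Ultra".
        fps = min(fps, null_auto_cap(refresh_hz))
    if fps <= refresh_hz:
        return fps, False            # inside the G-SYNC range: no tearing
    if vsync_on:
        return refresh_hz, False     # V-SYNC holds FPS at refresh (adds V-SYNC input lag)
    return fps, True                 # above refresh with V-SYNC off: out of range, tearing


print(gsync_outcome(300, 240, vsync_on=True, llm_ultra=True))   # (224.0, False)
print(gsync_outcome(300, 240, vsync_on=False, llm_ultra=True))  # (300, True)
```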

Anyway, the point here is that with G-SYNC you can't get any lower input lag than G-SYNC + V-SYNC + LLM "On" + an in-game limiter; no configuration gets rid of the extra 0.5 to 1 frame of input lag that NULL/RTSS have over a good in-game limiter.
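To see what that "0.5 to 1 frame" gap means in milliseconds, a quick Python conversion. It assumes the game is sitting at its FPS cap, so one frame equals 1000/cap ms; the cap values below are arbitrary illustrations, not test results.

```python
def limiter_gap_ms(fps_cap: float, min_frames: float = 0.5,
                   max_frames: float = 1.0) -> tuple[float, float]:
    """Convert a gap of min_frames..max_frames into milliseconds at a given FPS cap."""
    frame_ms = 1000.0 / fps_cap
    return min_frames * frame_ms, max_frames * frame_ms


for cap in (100, 200):
    low, high = limiter_gap_ms(cap)
    print(f"{cap} FPS cap: {low:.1f} to {high:.1f} ms")
# 100 FPS cap: 5.0 to 10.0 ms
# 200 FPS cap: 2.5 to 5.0 ms
```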

I haven't tested Siege's in-game limiter, so I'm not sure about it, but I know for a fact that Overwatch's in-game limiter has lower input lag than RTSS, NULL, and NVI, and is one of the better, more stable in-game limiters around. There's really no reason not to use it with G-SYNC.

As for whether some games work better with RTSS, that's highly dependent on the game in question and on whether you're using G-SYNC. RTSS is always going to have more stable frametime performance than in-game limiters because it limits by frametime. Good in-game limiters instead limit by an average framerate target and let individual frametimes run free; that's one of the very reasons they have lower input lag than external limiters, but it also means frametimes aren't as consistent. It's a trade-off.
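A toy Python model of that trade-off. Both functions are hypothetical simplifications, not how RTSS or any particular game actually implements limiting: the frametime limiter pads every frame to the target interval, while the average-target limiter only keeps the running total on schedule, so a short frame right after a long one isn't padded. It models frametime consistency only, not input lag.

```python
def frametime_limiter(render_ms: list[float], target_ms: float) -> list[float]:
    """RTSS-style (simplified): every frame interval is at least target_ms."""
    return [max(float(r), float(target_ms)) for r in render_ms]


def average_limiter(render_ms: list[float], target_ms: float) -> list[float]:
    """In-game-style (simplified): frame i may not finish before i * target_ms.

    Frames that fall behind schedule are not padded, so later frames can
    run shorter than target_ms to bring the average back on target.
    """
    intervals = []
    clock = 0.0
    for i, r in enumerate(render_ms, start=1):
        finish = max(clock + float(r), i * float(target_ms))
        intervals.append(finish - clock)
        clock = finish
    return intervals


render = [5, 15, 5, 5]   # hypothetical frame costs in ms, with one slow frame
target = 10              # 100 FPS target

print(frametime_limiter(render, target))  # [10.0, 15.0, 10.0, 10.0]
print(average_limiter(render, target))    # [10.0, 15.0, 5.0, 10.0]
```

Here the frametime limiter never drops below 10ms but averages 11.25ms, while the average-target limiter lands exactly on 10ms on average at the cost of a 5ms frame right after the 15ms one.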

Either way, G-SYNC stabilizes frametimes well enough on its own for you to use an in-game limiter over RTSS when one is available, if you want the absolute lowest input lag.

That said, there are some in-game FPS limiters I've heard aren't great (like CoD MW's), where you're better off using RTSS, but that's pretty rare.

(jorimt: /jor-uhm-tee/)

Author: Blur Busters "G-SYNC 101" Series

Displays: ASUS PG27AQN, LG 48C4 Scaler: RetroTINK 4k Consoles: Dreamcast, PS2, PS3, PS5, Switch 2, Wii, Xbox, Analogue Pocket + Dock VR: Beyond, Quest 3, Reverb G2, Index OS: Windows 11 Pro Case: Fractal Design Torrent PSU: Seasonic PRIME TX-1000 MB: ASUS Z790 Hero CPU: Intel i9-13900k w/Noctua NH-U12A GPU: GIGABYTE RTX 4090 GAMING OC RAM: 32GB G.SKILL Trident Z5 DDR5 6400MHz CL32 SSDs: 2TB WD_BLACK SN850 (OS), 4TB WD_BLACK SN850X (Games) Keyboards: Wooting 60HE, Logitech G915 TKL Mice: Razer Viper Mini SE, Razer Viper 8kHz Sound: Creative Sound Blaster Katana V2 (speakers/amp/DAC), AFUL Performer 8 (IEMs)

Re: Driver 441.08: Ultra-Low Latency Now with G-SYNC Support

Thanks for these. Looks like the way Nvidia limits the framerate in NULL mode is different from their normal limiter.

The views and opinions expressed in my posts are my own and do not necessarily reflect the official policy or position of Blur Busters.
