Hello,

to reduce the input lag of my game and provide settings that let players tune input lag and smoothness to their machine's performance, I wrote a program to test different settings and techniques.

For now, I am interested in reducing the input lag.

The game needs to be deterministic, so it uses a fixed timestep. Because a fixed timestep can cause smoothness issues, I used this program to test whether interpolation adds too much input lag. I also tried a variable timestep in which the next frame can start earlier to stay in sync with the screen refresh, while the overall update rate stays the same. This is what I call "loose timestep".
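A minimal sketch of a fixed timestep with render interpolation, assuming a one-dimensional state and a 60 Hz tick. The names `TICK`, `State`, `update` and `run_frames` are illustrative and not taken from the test program. The renderer blends the previous and current simulation states, which is why interpolation can show the simulation up to one tick late:

```python
from dataclasses import dataclass

TICK = 1.0 / 60.0  # fixed simulation step in seconds (assumed value)


@dataclass
class State:
    x: float = 0.0


def update(state: State, velocity: float) -> State:
    """Advance the simulation by exactly one fixed tick."""
    return State(state.x + velocity * TICK)


def run_frames(frame_times, velocity=60.0):
    """Consume frame durations (seconds) and return the x drawn on each frame."""
    prev, curr = State(), State()
    accumulator = 0.0
    drawn = []
    for dt in frame_times:
        accumulator += dt
        # Run as many fixed ticks as the elapsed time allows.
        while accumulator >= TICK:
            prev, curr = curr, update(curr, velocity)
            accumulator -= TICK
        # Blend between the last two simulated states for display only.
        alpha = accumulator / TICK
        drawn.append(prev.x + (curr.x - prev.x) * alpha)
    return drawn
```

The game state itself only ever advances in whole ticks, so determinism is preserved; only the drawn position is interpolated.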

The program has a scene in which it displays 3 white bars when an input is pressed. I use a photodiode and a microcontroller to send keyboard inputs and measure how long it takes for the screen to go from black to white, and then how many frames are displayed before the program displays black again because the button is released. This way I have input lag in ms and in game ticks, at the top, middle and bottom of the monitor. Each test is repeated 1000 times, with a random delay between ON inputs.

I tested several update rates, from less than half the display refresh rate to more than double, with integer and non-integer ratios. I tested with different render times to see the potential behaviours on faster and slower machines. I tested with V-Sync off and on, with and without GPU hardsync, and with predictive waiting.

I noticed that I got inconsistent results when the V-Sync is turned off. The program starts at a random time in the display refresh cycle, so in different executions the buffers are not swapped at the same time. To get consistent results, the program turns V-Sync ON for one frame, syncs up and turns it off.

I also made a scrolling checkerboard scene to test tearing and smoothness.

For now I am interested only in video, but I may do similar work concerning audio later.

I measured the input lag only with one monitor plugged into my desktop computer, on Windows.

On a 2nd monitor, I don't see tear lines when there should be some.

Same with my laptop on its own monitor, with either GPU.

On GNU/Linux, I get a tear line that moves depending on where the mouse cursor is, even with V-Sync on. It also detects triple buffering, and I don't know how to turn it off.

I don't own a VRR monitor, so I haven't researched how to make the best use of one.

The source code is on Github: https://github.com/Sentmoraap/doing-sdl-right.

If you read it, you may wonder why I render the frame to an offscreen buffer in a different context, and then render it to the main window. I want to test it for games made for a specific resolution and then scaled to fit.

I also want to be able to destroy and recreate a window, so I want to recreate a context without having to reload everything. I want to recreate a window because I got problems with just switching modes and moving it.

Here is what I concluded in my tests:

- my monitor has approx. 17 ms of input lag; (EDIT: 17 ms not 25, I forgot to remove 8 ms from the random times compared to the scanout)

- interpolation does not seem to be a big deal in terms of input lag. So loose timestep is not necessary, and not desirable considering the implications of variable timestep.

- after several fixes, it seems that my predictive waiting works. There is not much more average lag than VSync off when the game update rate is the same as the display refresh rate;

- sometimes it looks like V-Sync OFF has significantly less lag, when in theory it should not. It may be because of how the tear lines are offset from the VBL, so that on average the bars appear earlier in the refresh cycle;

- when the program is waiting, it should sleep to prevent the scheduler from ending its time slice at the wrong time and making it miss the VBL;

- the tear lines are jittery, and are slowly moving upwards when they should stay at the same place. The VBL is longer than the jitter time.
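The sleep-while-waiting point in the list above could be sketched as follows. This is a hedged illustration, not the code from the repository: `wait_until` and the 2 ms `spin_margin` are assumptions, and a suitable margin depends on the operating system's timer granularity.

```python
import time


def wait_until(deadline: float, spin_margin: float = 0.002) -> float:
    """Wait until `deadline` (a time.perf_counter() timestamp).

    Sleeps for most of the wait so the scheduler gets the CPU back voluntarily,
    then busy-waits the final `spin_margin` seconds for precision.
    Returns the overshoot in seconds.
    """
    remaining = deadline - time.perf_counter()
    if remaining > spin_margin:
        time.sleep(remaining - spin_margin)
    while time.perf_counter() < deadline:
        pass  # short spin for the last stretch
    return time.perf_counter() - deadline
```

A frame loop would call `wait_until(next_swap_time)` just before swapping buffers, where `next_swap_time` is a hypothetical timestamp predicted from earlier swaps.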

It looks like I have figured out how to get less input lag with V-Sync ON on non-VRR monitors. I may have made some mistakes. I have attached a Win32 build and a spreadsheet with my measurements.

My attempt at achieving a low input lag. Am I doing it right?

-

Sentmoraap

- Posts: 4

- Joined: 22 Mar 2020, 07:18

- Attachments

-

- sdl-test.7z

- (766.97 KiB) Downloaded 284 times

-

- Results.ods

- (34.17 KiB) Downloaded 287 times

- Chief Blur Buster

- Site Admin

- Posts: 11653

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: My attempt at achieving a low input lag. Am I doing it right?

Before I answer your question and run your software, I just wanted to make sure you are aware of the complex considerations of input lag. Some of the terminology suggests you are, so let's review.

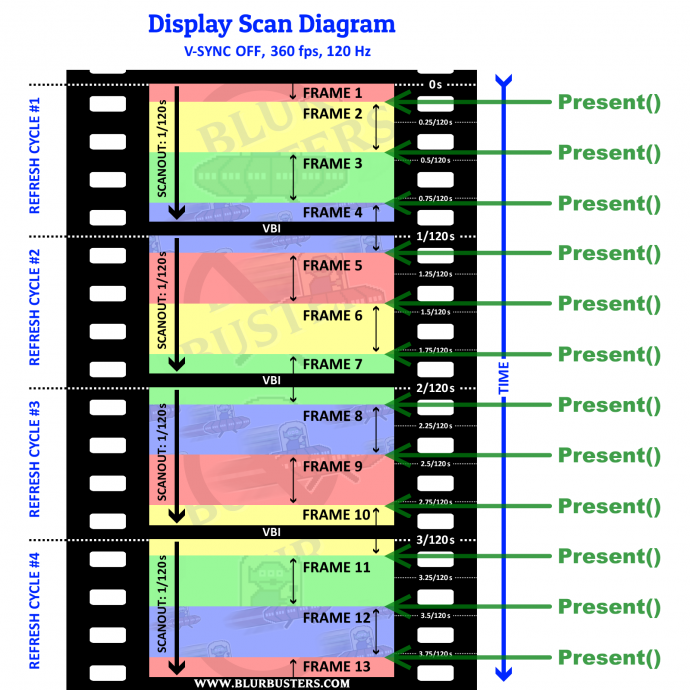

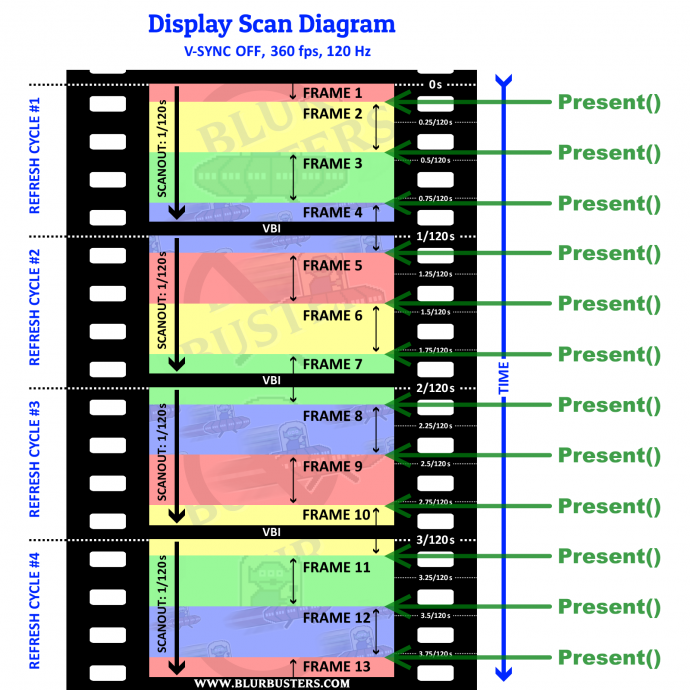

VSYNC OFF frame-slices are "latency gradients" themselves. The lowest lag is always right below a tearline, and the highest lag is always right above a tearline. That's because you're essentially "splicing" a new frame into an existing scanout, as seen in the high speed videos at www.blurbusters.com/scanout. Not all pixels refresh at the same time; the scanout sweeps from top to bottom.

As the tearlines shift, the latency gradients shift. That's why you get jittery lag with single-photodiode measurements.
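The gradient can be illustrated with a toy model. It assumes a constant scanout speed over the whole vertical total and a single frame presented while the raster is at `tear_line`; the function name and the default timings (VT1125 at 60 Hz) are assumptions for illustration only.

```python
def scanout_delay_ms(line, tear_line, total_lines=1125, refresh_hz=60.0):
    """Time between a present at `tear_line` and that frame reaching `line`.

    Lines at or below the tearline are scanned out in the current refresh;
    lines above it have to wait for the raster to wrap around.
    """
    period_ms = 1000.0 / refresh_hz
    return ((line - tear_line) % total_lines) / total_lines * period_ms
```

With a tearline at line 500, line 501 gets the new frame almost immediately, while line 499 waits nearly a full refresh period.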

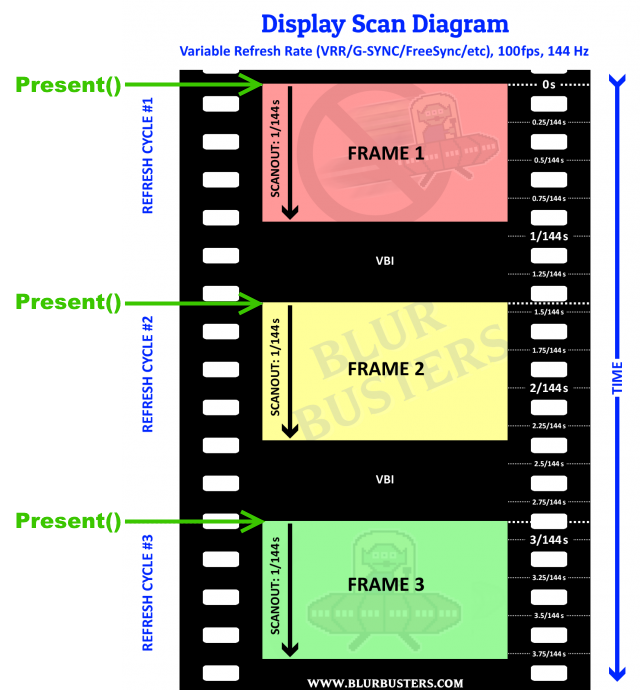

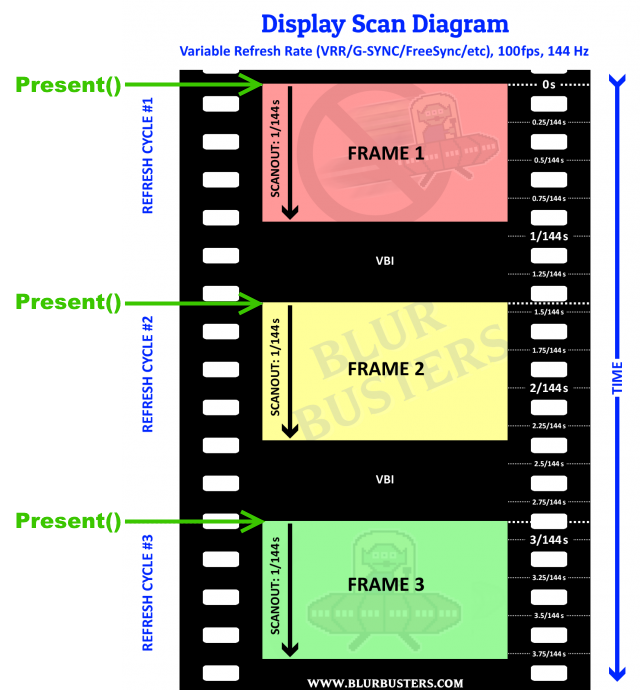

Now, for variable refresh rate displays, the display only refreshes when you Present().

In variable refresh rate mode, the display is slaved to your software when the intervals between presents are within the VRR range.

It's the timing of Present() within the refresh cycle that sets the location of the tearline (tearlines are rasters, and you can even beam race them with D3DKMTGetScanLine() when using the Performance power plan). An 8-bit programmer who programmed Ataris can pretty much learn how to beam race a tearline. (That's also how RTSS Scanline Sync, the tearingless VSYNC OFF feature, works.) But that's very hard to do in a game, since beam-raced tearlines can become unreliable with power management and/or high GPU utilization, as seen with RTSS Scanline Sync. If you want to learn more about beam-raced tearlines, see the thread Raster "Interrupts" / Beam Racing on GeForces/Radeons!

However, this is probably beyond the scope of your needs. I link to it only to help you understand the relationship between latency and a VSYNC OFF tearline, in case you ever want to control a VSYNC OFF tearline.

Also, do not forget latency gradients: the input lag of the top edge of the screen versus the bottom edge.

Assuming well-calibrated (zero strobe crosstalk) strobing, these latency gradients occur:

VSYNC ON + nonstrobed creates TOP < CENTER < BOTTOM (consistent fixed offsets)

VSYNC OFF + nonstrobed creates TOP = CENTER = BOTTOM (jittery but TOP equals BOTTOM when averaging 100+ lag passes)

VSYNC ON + strobed creates TOP = CENTER = BOTTOM (ultrasmooth fixed non-volatile lag, but with a large offset)

VSYNC OFF + strobed creates TOP > CENTER > BOTTOM (aiming sometimes feels wrong)

That's why I often recommend fps=Hz sync during strobed modes, because aiming is better with slightly higher but non-jittery lag. There are a lot of complexities involved. Lag is not a single-number, single-photodiode operation; there are lag gradient problems and lag volatility problems to consider too.

Input lag is complex:

- Absolute lag

- Latency volatility

- Latency gradient

- Other factors affecting lag (e.g. strobe crosstalk, temperature, picture settings that move the gamut to a faster-GtG portion of the LCD gamut, etc.)

It also depends on how you stopwatch, i.e. where you decide to cut off GtG. The high speed videos at www.blurbusters.com/scanout show that GtG is simply a pixel fading to another color over a period of milliseconds. You can see GtG even at just 10% or even at 5%. GtG 10% for a transition from black to white is like RGB(25,25,25) on a fade-journey from RGB(0,0,0) to RGB(255,255,255). Your lag number will change hugely depending on the cutoff. Even temperature affects GtG (ever forgotten a phone in a freezing car in the middle of winter? The LCD GtG can sometimes last several seconds instead of milliseconds), which is why I recommend warming up monitors before playing a critical competitive game, especially VA panels, which are much more temperature sensitive than TN and IPS.
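To see how much the cutoff matters, here is a small sketch that finds when a sampled black-to-white photodiode trace first reaches a given fraction of its full swing. The function name and the sample trace are made up for illustration and are not measurements.

```python
def crossing_time_ms(samples, fraction, sample_period_ms):
    """Return the time at which the trace first reaches `fraction` of its swing.

    `samples` is a rising trace; the first and last samples are taken as the
    black and white levels. Linear interpolation is used between samples.
    Returns None if the threshold is never crossed.
    """
    low, high = samples[0], samples[-1]
    threshold = low + fraction * (high - low)
    for i in range(1, len(samples)):
        a, b = samples[i - 1], samples[i]
        if a < threshold <= b:
            return (i - 1 + (threshold - a) / (b - a)) * sample_period_ms
    return None


# Hypothetical trace sampled every 1 ms (arbitrary brightness units).
trace = [0, 0, 10, 40, 80, 95, 99, 100]
```

On this made-up trace the 10% cutoff stops the stopwatch well over a millisecond before the 50% cutoff, and the 90% cutoff later still, so the same transition gives different lag numbers depending on the chosen threshold.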

Depending on WHERE on the screen you measure, WHAT framerate you're using, and what settings you are using (sync tech settings, strobe setting, VRR setting), you may get a best-case VSYNC ON measurement that's lower than the worst-case VSYNC OFF measurement.

P.S. If you use strobed modes, this explains why the latency gradient inverts with VSYNC OFF + strobed versus VSYNC ON + nonstrobed: viewtopic.php?f=13&t=6430

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on Twitter

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

- Chief Blur Buster

Re: My attempt at achieving a low input lag. Am I doing it right?

Now I will answer one:

Sentmoraap wrote: ↑22 Mar 2020, 10:39
> - when the program is waiting, it should sleep to prevent the scheduler from ending its time slice at the wrong time and making it miss the VBL;
>
> - the tear lines are jittery, and are slowly moving upwards when they should stay at the same place. The VBL is longer than the jitter time.

Unplug your 2nd monitor completely for more deterministic lag.

Two monitors often do not run at exactly the same refresh rate, especially if they're different models or plugged into different GPUs. 1920x1080p might be 143.998Hz VT1125 on one, and 1920x1080p might be 144.00235Hz VT1148 on the other (in EDID). So the tearline on one will "slew" relative to the other.
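As a rough worked example of that slew, using the two refresh rates quoted above (the function names are illustrative):

```python
def slew_period_s(hz_a: float, hz_b: float) -> float:
    """Seconds for one clock to drift a full refresh cycle against the other."""
    diff = abs(hz_a - hz_b)
    return float("inf") if diff == 0.0 else 1.0 / diff


def slew_lines_per_s(hz_a: float, hz_b: float, vertical_total: int) -> float:
    """Approximate tearline drift speed in scanlines per second."""
    return abs(hz_a - hz_b) * vertical_total


period = slew_period_s(143.998, 144.00235)           # about 230 seconds
speed = slew_lines_per_s(143.998, 144.00235, 1125)   # about 4.9 lines per second
```

So a tearline timed against the wrong monitor would wander across the whole picture roughly every four minutes in this case.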

Without looking at your source code, I can tell you up front (it's a widespread problem among fellow Tearline Jedis):

Remember to sync your tearlines to the correct monitor source. Even RTSS sometimes has a bug in this. Sometimes the primary monitor is not monitor #0 in the monitor array you enumerate from the system. Use the IsPrimary flag (of whatever enumeration API you use) and create a Full Screen Exclusive buffer on the primary monitor.

It's a common multimonitor problem: VSYNC is synced to a different monitor than the one you're trying to sync on. The moral of the story is use FSE + use primary, to make sure your VSYNC target is the same as the Direct3D buffer. There are ways to do FSE on secondaries and sync on secondaries, but that can involve more complex programming, including corroborating multiple sets of enumerated data to hunt down the necessary metrics, so you're not getting the slewing-tearline problem during beam-raced VSYNC OFF operation (even RTSS Scanline Sync has had that problem).

Also, test disabling power management. It sometimes creates weird latency volatility during low GPU utilization, as the GPU goes to sleep between frames and has a wakeup latency at low frame rates (under 5% GPU utilization, typically when it spends a few milliseconds idling). Doing things like Flush() can help keep it more awake, to an extent, though at a high GPU cost.

As for Linux sync, that's a big problem that is sometimes hard to resolve, much like the TestUFO browser stutter problems that have been endemic with Linux distros: viewtopic.php?f=19&t=3842

-

Sentmoraap

Re: My attempt at achieving a low input lag. Am I doing it right?

Thank you for your long and detailed answer.

I am aware of how the image is sent to the monitor and how it is supposed to display it. That's why each test is done 3 times, with the photodiode at the top, middle or bottom of the monitor. I also put random delays between presses because we do not push buttons in sync with the monitor, and depending on when a press happens relative to when the game reads the inputs, it can add a frame of lag.

Are GtG times that significant? They look small compared to a whole frame, so they look small compared to a software mistake that adds one or several frames of lag.

But I understand that it can affect when the luminosity reaches a threshold. The pixels are (0,0,0) or (255,255,255), and the threshold is roughly half the white luminosity. So it should not wait until a pixel has completely finished its transition to detect that it has changed colour.

I have not thought of how strobing affects input lag. It makes sense. It waits to receive everything before displaying everything simultaneously.

From your diagram it looks like it's easy to support VRR, and it has the extra benefit of displaying the bottom line as fast as with the max refresh rate.

My measurements are made with only one monitor plugged in. I suspect its refresh rate is not exactly 60 Hz, but something like 60.001 Hz, which makes it not perfectly synced with the game. My goal is not to sync it with V-Sync OFF; there are V-Sync ON + predictive waiting settings for that. Unless, for a reason I don't know, it's better to achieve the same result with V-Sync OFF and hiding the tear line in the VBI.

My monitor is not strobed. With V-Sync ON, the latency is higher on the bottom than on the top, as expected.

With V-Sync OFF and many FPS, it's on average constant. There may be gradients when the FPS is a multiple or a divisor of 60. The tear lines move slowly, and 1000 measurements take approximately 2 min 30 s, so they may move significantly during a test, but I don't think the test is long enough to give an accurate average.

After reading your post I checked power management. It's in performance mode.

I think the V-Sync OFF tearline jitter was partly because in V-Sync OFF the game was constantly re-drawing frames, so when there was actually a new game state it had to finish drawing the previous frame first. With a sleep + a busy loop for the last milliseconds, it's more stable but still jittery.

The function D3DKMTGetScanLine looks interesting, but I am trying to get an approximation of that by measuring the time since the last SDL_GL_SwapWindow. It cannot be accurate down to the scanline, but it's cross-platform, and my goal is not to have Tearline Jedi rasters, so I can have some margin.

SDL is one of the most complete abstraction layers out there, but it looks like I can't provide good support for running the game on a display other than the primary display without calling platform-specific functions.

I re-tried running it on Linux. I disabled compositing in MATE. I got the expected results when only one monitor is plugged in. It looks like compositing is unavoidable when using multiple monitors, at least with X+RandR+MATE.

I will use this for shoot'em ups, and maybe other 2D action games. So like in competitive FPS the input lag is very important, but the top priorities may be subtly different because of the accuracy of mouse aiming vs. accuracy of joystick/keyboard moves.

- Chief Blur Buster

Re: My attempt at achieving a low input lag. Am I doing it right?

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> Are GtG times that significant? They look small compared to a whole frame, so they look small compared to a software mistake that adds one or several frames of lag.
>
> But I understand that it can affect when the luminosity reaches a threshold. The pixels are (0,0,0) or (255,255,255), and the threshold is roughly half the white luminosity. So it should not wait until a pixel has completely finished its transition to detect that it has changed colour.

Half is a good compromise.

The problem is that some 3ms GtG panels often take 5ms to GtG97%, 10ms to GtG98% and over 20ms to GtG99%, for specific colors. Now, on some VA panels, some color transition combos are 10x slower than others (dark greys). For some panels, it is like trying to reach the speed of light: you can get closer and closer, but the last bit gets harder and harder. That's why if you wait until GtG100%, you deviate very far from human reaction time. That's why I like lag testers that stop the stopwatch sooner, such as at GtG10% or GtG50%. I say GtG 50% is a great compromise, as that's usually within a millisecond of the human reaction time trigger (enough photons emitted from the pixels hitting human eyes to begin reacting).

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> My monitor is not strobed. With V-Sync ON, the latency is higher on the bottom than on the top, as expected.
>
> With V-Sync OFF and many FPS, it's on average constant. There may be gradients when the FPS is a multiple or a divisor of 60. The tear lines move slowly, and 1000 measurements take approximately 2 min 30 s, so they may move significantly during a test, but I don't think the test is long enough to give an accurate average.

If you measure continuously, you will witness a latency sawtooth. A slowly-slewing tearline on a single monitor is often because one of your compositors (i.e. X) is using an internal 60Hz clock unsynchronized to the monitor VSYNC. You could switch to kwin-lowlatency to fix this; they've been doing a good job forcing X to sync to VSYNC.

I have a $2000 bounty on fixing Linux VSYNC completely Linux-ecosystem-wide, but it's kind of a tall & herculean ask. The point is, I'm frustrated at unsynchronized Linux systems where I can't trust VSYNC to be the real VSYNC.

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> I think the V-Sync OFF tearline jitter was partly because in V-Sync OFF the game was constantly re-drawing frames, so when there was actually a new game state it had to finish drawing the previous frame first. With a sleep + a busy loop for the last milliseconds, it's more stable but still jittery.

That's what Tearline Jedi does too.

I agree with your approach, good job.

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> The function D3DKMTGetScanLine looks interesting, but I am trying to get an approximation of that by measuring the time since the last SDL_GL_SwapWindow. It cannot be accurate down to the scanline, but it's cross-platform, and my goal is not to have Tearline Jedi rasters, so I can have some margin.

Tearline Jedi does exactly what you're doing -- it is not using D3DKMTGetScanLine. A time offset between two VSYNCs is pretty accurate, and becomes much more accurate if you get the vertical total too (from the timings calls available in Windows 7, 8 and 10, as well as timing strings in Linux), since you can calculate the raster based on the vertical total instead of the active resolution. For unknown vertical totals I automatically assume a 45-line VBI because that's backwards compatible with the common VT525 for 480p, as well as the common VT1125 for 1080p. About 90% of the time, this gets my Tearline Jedi rasters pretty close.

I agree with your approach, good job.
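The time-offset estimate described above can be sketched like this. It is only an illustration under stated assumptions: the function name is made up, the reference timestamp is assumed to be a VSYNC time (for example taken right after a synced swap returns), and the result is counted in scanlines elapsed since that timestamp rather than as an absolute screen row.

```python
def estimate_raster_line(seconds_since_vsync, refresh_hz, active_lines,
                         vertical_total=None):
    """Estimate how many scanlines the raster has advanced since the last VSYNC.

    If the vertical total is unknown, fall back to active_lines + 45,
    following the 45-line VBI assumption mentioned in the post.
    """
    if vertical_total is None:
        vertical_total = active_lines + 45
    period = 1.0 / refresh_hz
    phase = (seconds_since_vsync % period) / period  # 0.0 .. 1.0 within a refresh
    return int(phase * vertical_total)
```

Halfway through a 60 Hz refresh at 1080p, the fallback gives line 562 out of an assumed vertical total of 1125.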

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> I re-tried running it on Linux. I disabled compositing in MATE. I got the expected results when only one monitor is plugged in. It looks like compositing is unavoidable when using multiple monitors, at least with X+RandR+MATE.

Expected results with one monitor is good. The sync source and the graphics source need to be the same, and sometimes that's hard when you're trying to sync up multiple APIs that aren't necessarily aware of each other... It gets horrendously difficult for cross-platform multimonitor.

This is the hard part.

Sentmoraap wrote: ↑25 Mar 2020, 12:15
> I will use this for shoot'em ups, and maybe other 2D action games. So like in competitive FPS the input lag is very important, but the top priorities may be subtly different because of the accuracy of mouse aiming vs. accuracy of joystick/keyboard moves.

Yes, completely eliminating stutter can be more important than the lowest absolute latency for things like shoot-em-ups and emulators, where you really need perfect sync between frame rate and refresh rate, without the framebuffer latency of traditional VSYNC ON.

Also, things like stutter. ("Stutter" is synonymous with "latency volatility" when viewed through a mathematical lens). VSYNC OFF is much lower lag but you still have the microstutter amplitudes caused by unsynchronized mode (fps mismatched from Hz).

So you get lower absolute lag latency but higher latency volatility. The unsynchronized issue affects both mouse and keyboard moves. Different people are sensitive/insensitive to it.
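A toy model of that volatility, assuming a fixed spot on the screen that the raster passes once per refresh, and frames presented at a steady rate. The function names and the 5 ms starting phase are arbitrary choices for illustration.

```python
import math


def display_latencies_ms(fps, refresh_hz, frames=60, phase_ms=5.0):
    """Delay from each present until the raster next passes a fixed screen spot."""
    period = 1000.0 / refresh_hz
    latencies = []
    for i in range(frames):
        present_time = phase_ms + i * 1000.0 / fps
        next_pass = math.ceil(present_time / period) * period
        latencies.append(next_pass - present_time)
    return latencies


def volatility_ms(latencies):
    """Spread between the worst and best latency."""
    return max(latencies) - min(latencies)


synced = volatility_ms(display_latencies_ms(60, 60))      # fps matches Hz
mismatched = volatility_ms(display_latencies_ms(50, 60))  # fps mismatched from Hz
```

In this model the synced case has a constant delay, while 50 fps on 60 Hz makes the delay swing by most of a refresh period from frame to frame, which shows up as microstutter.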

If your goal is to eliminate latency volatility and get the supersmooth "Super Mario scrolling" effect (scrolling without stutters) without adding input lag -- great for shoot-em-ups and other arcade style games -- then you begin to understand why some people love RTSS Scanline Sync as one of the world's lowest-lag "VSYNC ON clones" under Windows. It appears you have successfully achieved a Linux clone of RTSS Scanline Sync that can be built into games. Congratulations!

______________________________

<Interesting Reading>

On another subject but a related subject (just a knowledge matter). It might not be important to you but it's useful to know, from an education perspective. Including why sync technologies exist because displays are finite refresh rates. If we had 5000fps at 5000Hz, sync technologies could become mostly obsolete (VSYNC ON, VSYNC OFF, GSYNC, FreeSync, Fast Sync, etc) as they all become looking idententical at the same frame rates. The higher the fps & Hz, the more all sync technologies converg in look-and-feel (and lag). At such ultra-refresh rates, VSYNC ON and VSYNC OFF and GSYNC all having (to human eyes) practically equivalent laglessness, equivalent look, equivalent smoothness, equivalent stutterfreenes, etc. The point is, sync technologies exist today because of the finiteness of refresh rates -- I also cover this at www.blurbusters.com/stroboscopics and www.blurbusters.com/1000hz-journey -- good technology background reads.

Also, strobing amplifies the visibility of microstutter to the point where even a 1ms or 2ms microstutter becomes human-visible. Microstutter amplitudes much smaller than the motion blur size are typically hidden, which is why everything suddenly looks so jittery when you turn on blur reduction. That is why RTSS Scanline Sync is essentially God Mode for ULMB, especially when combined with accurate 3200dpi mouse tracking (low in-game sensitivity) with a new mouse, clean mouse feet, and an ultra-high-resolution mousepad. Everything becomes CRT Arcade Smooth, or Nintendo Smooth -- silky microstutter-free motion with zero motion blur. Very hard to achieve, but possible if you know what you're doing (like milking hertzroom to eliminate strobe crosstalk -- using the cram-GtG-in-long-VBI tricks that we've done -- e.g. 120Hz PureXP+ on a ViewSonic XG270). Good motion quality strobing demands "a VSYNC ON-like" low-lag technology (similar to RTSS Scanline Sync), and VSYNC OFF gives very bad strobing experiences for some people. Now, some monitors, like BenQ DyAc, simply use sheer refresh rate (240Hz) to compensate. This helps a lot in lowering lag, and in reducing microstutter when using unsynchronized motion (VSYNC OFF), since the aliasing between fps and Hz is much smaller (but not zeroed out).

In extreme cases, 0.5ms microstutter can become human-visible at very fast motion if persistence is low. For example, the Valve Index VR headset has 0.3ms persistence. During a 4000 pixels/second head turn, a 0.5ms microstutter creates a 2-pixel jump, which may be just barely visible assuming motion blur is smaller than that (i.e. 0.3ms of display motion blur == ~1 pixel of motion blur at ~3000 pixels per second panning).

For a great exercise in human-visible 0.5ms MPRT versus 1.0ms MPRT -- turn on NVIDIA ULMB and then look at the TestUFO Panning Map Test at 3000 pixels/second. 1ms MPRT creates 3 pixels of motion blur at 3000 pixels/second scrolling, making the street name labels unreadable. But 0.5ms MPRT reduces that to 1.5 pixels of motion blur at the same speed, making those street names readable. Load this up on a ULMB monitor (G-SYNC). At 100% pulse width, you can't read the street labels. Go into the monitor menus and lower "ULMB Pulse Width" until you can read the street name labels. Now you're at MPRT levels well below 1ms; that's the only way to read the street name labels in this test. If you don't have an NVIDIA ULMB monitor, a BenQ strobed monitor also works, since those have adjustable persistence via Blur Busters Strobe Utility.
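The pixel figures in the last two paragraphs all come from the same multiplication: on-screen speed times a duration. A small sketch to reproduce them, where the function names are hypothetical and the "jump larger than blur trail" comparison is only a rough reading of the text above, not a perceptual model:

```python
def pixels_travelled(speed_px_per_s, duration_ms):
    """Distance in pixels covered in duration_ms at a constant on-screen speed."""
    return speed_px_per_s * duration_ms / 1000.0

def stutter_may_show(speed_px_per_s, stutter_ms, persistence_ms):
    """Rough heuristic from the text above: a jump larger than the blur trail may be visible."""
    jump = pixels_travelled(speed_px_per_s, stutter_ms)
    blur = pixels_travelled(speed_px_per_s, persistence_ms)
    return jump > blur

print(pixels_travelled(4000, 0.5))       # microstutter jump during a 4000 px/s head turn
print(pixels_travelled(3000, 0.3))       # blur trail of a 0.3 ms persistence display
print(pixels_travelled(3000, 1.0))       # blur trail at 1.0 ms MPRT
print(pixels_travelled(3000, 0.5))       # blur trail at 0.5 ms MPRT
print(stutter_may_show(4000, 0.5, 0.3))  # jump vs. blur at the same head-turn speed
```

Because both the jump and the blur scale with speed, the comparison reduces to stutter duration versus persistence, which is why shorter persistence exposes smaller stutters.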

The Vicious Cycle Effect is a textbook study as we start approaching retina resolutions simultaneously with retina refresh rates.

I go into great detail about this, because we are Blur Busters, the art of busting display motion blur, the website born because of eliminating display motion blur (we helped popularize LightBoost almost a decade ago). So we're pretty in-depth about strobing mathematics & stutter mathematics -- and the millisecond has surprises lurking in the refresh rate race. Strobing is a band-aid to eliminate motion blur because of low refresh rates, but in reality we are now huge fans of using brute force to eliminate motion blur (strobeless ULMB -- 1000fps at 1000Hz), simply fill the whole millisecond with short-persistence frames with no black periods in between. Some monitor companies like ASUS now have a road map to 1000 Hz displays thanks to recent research. Basically CRT without the flicker, impulsing, strobing, flashing, etc -- but the same zero motion blur. The only way to eliminate motion blur strobelessly is those unobtainium refresh rates, but the world's getting technologically close now in the next ten to twenty years or less, with some laboratory prototypes already.

For software developers reading this thread who aren't currently familiar with the Refresh Rate Race, these are interesting technology background reads:

* www.blurbusters.com/scanout -- High Speed Videos of Display Refreshing

* www.blurbusters.com/stroboscopics -- Stroboscopic Effects of a Finite Refresh Rate

* www.blurbusters.com/1000hz-journey -- Amazing Journey To Future 1000 Hz Displays

* www.blurbusters.com/gtg-vs-mprt -- Pixel Response FAQ: GtG versus MPRT

This certainly isn't important for 60fps shoot-em-ups, but it's there for those wondering about the scientific basis of why we are huge proponents of the current refresh rate race!

</Interesting Reading>

Head of Blur Busters - BlurBusters.com | TestUFO.com | Follow @BlurBusters on Twitter

Forum Rules wrote: 1. Rule #1: Be Nice. This is published forum rule #1. Even To Newbies & People You Disagree With!

2. Please report rule violations If you see a post that violates forum rules, then report the post.

3. ALWAYS respect indie testers here. See how indies are bootstrapping Blur Busters research!

-

Sentmoraap

- Posts: 4

- Joined: 22 Mar 2020, 07:18

Re: My attempt at achieving a low input lag. Am I doing it right?

Wow that's a lot of reading! Again, thanks for the long answer.

"I agree with your approach, good job."

"It appears you have successfully achieved a Linux clone of RTSS Scanline Sync, that can be built-in into games. Congratulations!"

That's nice to read. Then the next step is to implement it in a real game.

- Chief Blur Buster

- Site Admin

- Posts: 11653

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

Re: My attempt at achieving a low input lag. Am I doing it right?

Sentmoraap wrote: ↑26 Mar 2020, 07:24
Wow that's a lot of reading! Again, thanks for the long answer. [...] That's nice to read. Then the next step is to implement it in a real game.

Or an emulator!

Several emulators have more lag on Linux due to subsystem latency, and implementing this technique in an emulator would dramatically lower their input lag. Emulators are a frequent source of shoot-em-up games too, and many emulators are open source, so this would be a great starting place to add this sync technique.

Given the programming skills you needed to do this, it would probably be doable for you to modify one (that is already using the same framework) -- thoughts?

-

Sentmoraap

- Posts: 4

- Joined: 22 Mar 2020, 07:18

Re: My attempt at achieving a low input lag. Am I doing it right?

"it would probably be a doable matter for you to modify one (that is already using the same framework) -- thoughts?"

I use MAME regularly; I will look at what it is actually doing and whether it can be improved. I know there is a recent lowlatency option, and it handles sub-frame timing (it sort of takes screenshots mid-scanning when the real monitor is not in sync with the virtual CRT).

For emulators, there is the beam racing technique, which is irrelevant in non-emulated games (well, there may be some cases in which it's useful, like split screen, but you get my point). Frame delay is related to it, but I have not looked into implementing that technique.

There is also run-ahead, which can be considered cheating, but it is a good option to have in emulators with good save state support.
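For readers unfamiliar with run-ahead, here is a minimal sketch of the idea, assuming a hypothetical deterministic core (`ToyCore`, not any real emulator's API) whose picture reflects the input from a couple of frames earlier. Each displayed frame runs the real frame, saves the state, runs a few speculative frames assuming the input stays held, shows the last one, then rolls back.

```python
import copy

class ToyCore:
    """Hypothetical emulated game: the picture reflects the input from `lag` frames ago."""
    def __init__(self, lag=2):
        self.pipeline = [0] * lag          # inputs still on their way to the screen

    def run_frame(self, button):
        self.pipeline.append(button)
        return self.pipeline.pop(0)        # value visible on screen this frame

    def save_state(self):
        return copy.deepcopy(self.pipeline)

    def load_state(self, state):
        self.pipeline = copy.deepcopy(state)

def step_with_runahead(core, button, runahead):
    """Advance one real frame, but display the picture from `runahead` frames later."""
    shown = core.run_frame(button)         # the real frame
    state = core.save_state()
    for _ in range(runahead):
        shown = core.run_frame(button)     # speculative frames, input assumed held
    core.load_state(state)                 # roll back so the real timeline is untouched
    return shown

inputs = [0, 1, 1, 1, 0, 0, 0]
plain, ahead = ToyCore(lag=2), ToyCore(lag=2)
print([plain.run_frame(b) for b in inputs])
print([step_with_runahead(ahead, b, 2) for b in inputs])
```

The cost is running the core several times per displayed frame, plus a save and a load, which is why fast and reliable save states matter.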

Also, RetroArch is interesting because it is a multi-emulator front-end, but it's already quite advanced regarding anti-lag features.

- Chief Blur Buster

- Site Admin

- Posts: 11653

- Joined: 05 Dec 2013, 15:44

- Location: Toronto / Hamilton, Ontario, Canada

- Contact:

Re: My attempt at achieving a low input lag. Am I doing it right?

It would be nice to implement beamraced sync (real raster sync with emu raster, at least within a ~1ms chase jitter margin) like the WinUAE implementation. We came up with a lagless VSYNC algorithm to allow a PC to match an FPGA in duplicating original latency behaviours with emulators (even for mid-screen input reads).
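A rough sketch of the timing arithmetic behind keeping the emulated raster just ahead of the real one. Splitting the frame into horizontal slices, the constant-speed raster model (blanking interval ignored) and the function names are assumptions made here for illustration; this is not the WinUAE code. The 1 ms default mirrors the chase jitter margin mentioned above.

```python
def slice_present_offsets_ms(hz, slices, margin_ms=1.0):
    """Latest present time for each frame slice, in ms relative to the start of scanout.

    Assumes the raster sweeps the frame at constant speed over one refresh
    period. Negative values fall in the previous blanking interval.
    """
    refresh_ms = 1000.0 / hz
    slice_ms = refresh_ms / slices
    return [i * slice_ms - margin_ms for i in range(slices)]

def slice_is_safe(raster_ms, slice_index, hz, slices):
    """True if the real raster has not yet reached the top of the given slice."""
    slice_ms = (1000.0 / hz) / slices
    return raster_ms < slice_index * slice_ms

for i, t in enumerate(slice_present_offsets_ms(60, 10)):
    print(f"slice {i}: present no later than {t:+.2f} ms")
```

More slices mean each slice is presented closer to the moment it is scanned out, at the price of more present calls per refresh and a tighter timing budget.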

Related 2018 article:

www.blurbusters.com/blur-busters-lagles ... evelopers/

It's part of some emulators now, such as WinUAE. Recently Thomas Harte successfully got this working with some of his emulators.
