Kaldaien wrote: ↑21 Aug 2021, 02:31

Is there a reason I might need to expose user configurable scanline targets the way Scanline Sync does? My understanding is there should be an ideal calculable time to flip buffers for scan-out, and the end-user should not need to be burdened with this stuff since I already know the active display's timings.

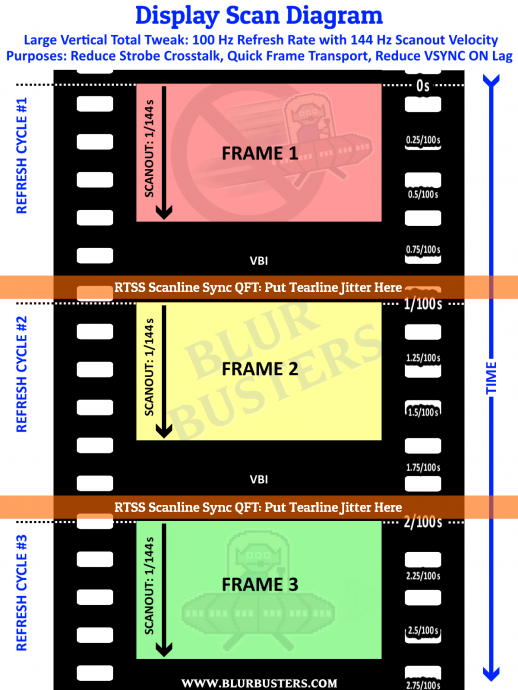

Yes, what you do should be made easier than RTSS Scanline Sync by default if possible.

Well, advanced users may prefer control. But it should be more automatic than RTSS Scanline Sync. I know there are algorithms to fully automate this, and you should have a checkbox [X] to choose the automatic treatment for missed VSYNCs, e.g. tear the next refresh cycle, or WAIT for the next VBI (flip at the beginning of the VBI, or flip at the end of the VBI).

So you should have “Auto” by default with an optional “Manual” mode.
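As a rough illustration of the "Auto" idea, here is a minimal sketch of turning a flip target into a wait time using only the display timings. Everything in it is an assumption for illustration: the `DisplayTimings` type, both function names, and the convention that scanlines are numbered 0 to VT-1 with the active lines first and the blanking lines after. Real drivers may number scanlines differently, and the current scanline would come from something like D3DKMTGetScanLine().

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisplayTimings:
    """Hypothetical container for the active display's timings."""
    refresh_hz: float      # e.g. 60.0
    vertical_total: int    # VT: active lines + blanking lines
    vertical_active: int   # visible lines

    @property
    def scanline_seconds(self) -> float:
        # Time the raster spends on one scanline (blanking lines included).
        return 1.0 / (self.refresh_hz * self.vertical_total)


def auto_flip_target(t: DisplayTimings, lines_into_vbi: int = 0) -> int:
    """Pick a target scanline at (or a few lines into) the start of the VBI.

    Assumes scanline vertical_active is the first blanking line.
    """
    return (t.vertical_active + lines_into_vbi) % t.vertical_total


def seconds_until_scanline(t: DisplayTimings, current: int, target: int) -> float:
    """Time until the raster reaches `target`, wrapping into the next refresh."""
    lines_ahead = (target - current) % t.vertical_total
    return lines_ahead * t.scanline_seconds
```

For example, with assumed 1080p60 timings (VT 1125), a raster at scanline 1000 reaches the start of the VBI in 80 scanline-times, roughly 1.2ms. A "Manual" mode would simply let the user override the value returned by `auto_flip_target`.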

Also, consistent latency can be important, as inconsistency screws royally with aim training effects: even a 1ms unexpected latency change can throw off existing esports training. Please read my article-post, The Amazing Human Visible Benefits Of The Millisecond. Not all milliseconds are human visible, but I assure you, SOME of them definitely are.

Caveat emptor with assumptions on Blur Busters: they are unceremoniously shot down around here, thanks to the Vicious Cycle Effect. Higher resolution, bigger displays, wider FOV, and higher refresh rates all simultaneously combine to make the effects of single milliseconds more and more visible.

Oh, and precise frame pacing of unblocks can help reduce stutter. If you can, have an option where you don’t vary the time interval between unblocks when the user is stutter-priority. I can teach amazing things here: even jitter (70Hz ultrafast microstutter occurring during 410fps on a 480Hz display) blends to 1-pixel motion blur, which is also why things look clearer on a 360Hz display with the Razer 8KHz mouse rather than an everyday 1000Hz mouse, because of the elimination of high-frequency jitter blending to motion blur. Stutter and blur are a continuum, they are the same thing. See www.testufo.com/vrr and www.testufo.com/eyetracking#speed=-1 and it’s quite obvious that stutter and persistence blur are the same thing, like slow (vibrating) vs fast (blurry) guitar or harp strings. So if gametime:photontime jitters by 1ms, I can see the stutter caused by that 1ms, as long as display persistence is less than the stutter-error. So a 1ms MPRT strobed display massively amplifies the visibility of tiny 2ms framepacing errors: even if the frames are perfectly VSYNC ON framepaced, the gametime inside the frames can jitter by 2ms, so rendered object positions inside those frames are off by 2ms.

If you ever wondered "why is strobing so jittery compared to non-strobing?", THIS IS IT. Manage your Present() unblock pacing. If you want things to be EVEN more accurate, clock the beginning of the renders too, so gametime:presenttime is constant despite varying rendertimes. You probably already know this.
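One way to picture constant unblock pacing is to schedule unblocks on an absolute time grid instead of "last unblock + interval", so a late frame does not shift every later unblock. The sketch below is only the scheduling arithmetic, with hypothetical function names and an assumed fixed `render_budget`; it does not call any graphics API, and a real implementation would need a high-precision wait to actually hit these times.

```python
import math


def next_unblock_time(epoch: float, interval: float, now: float) -> float:
    """Return the first grid point epoch + k * interval strictly after `now`.

    Using an absolute grid keeps the interval between unblocks constant
    even when one frame arrives late (no accumulated drift).
    """
    k = math.floor((now - epoch) / interval) + 1
    return epoch + k * interval


def render_start_time(unblock_time: float, render_budget: float) -> float:
    """Start rendering a fixed budget before the unblock.

    With a fixed budget, the gap between render start (where gametime is
    sampled) and the unblock stays constant even if rendertimes vary,
    as long as the render finishes within the budget.
    """
    return unblock_time - render_budget
```

Units are whatever the caller uses consistently. For instance, with a 4ms interval starting at time 0, a frame finishing at 10.1ms is released at 12ms, and with a 1.5ms budget its render would have started at 10.5ms.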

Ideally game rendering algorithms should be smarter than that, but game developers do so much crazy shit, so you need to include precision modes for keeping the Present() unblocks microsecond accurate in advanced mode, with easy mode being more realtime floating (in a selective way).

So if MPRT is equal to or less than the gametime:photontime relativity error, I can see the human visible stutters. Sometimes the next gametime timestamp is captured during present-time unblocks in some engines, which means things start to stutter if you vary that time-relativity. Coincidentally, that’s why a 4ms stutter is hidden in a 33ms 30fps nonstrobed display (MPRT barely 10% of frametime), but is a huge stutterjump during 240fps 240Hz VSYNC ON strobed (MPRT is frametime). Which is why strobing amplifies jittering/stuttering. Which is why I recommend framerate=Hz. Which is also why strobe lovers also love RTSS Scanline Sync and QFT for low-lag strobing. The smaller the MPRT (due to sheer Hz or from strobing), the more visible frametime errors are in the rendered frames.
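The rule of thumb above can be written down as simple arithmetic. This is a sketch of the comparison as stated in this post, not a perceptual model; the function names are hypothetical, and the position-error helper just multiplies motion speed by the timing error.

```python
def stutter_visible(mprt_ms: float, timing_error_ms: float) -> bool:
    """Rule of thumb from the post: the gametime:photontime error tends to
    show up as stutter when MPRT is equal to or less than that error."""
    return timing_error_ms > 0 and mprt_ms <= timing_error_ms


def position_error_px(speed_px_per_s: float, timing_error_ms: float) -> float:
    """How far off an object is drawn if its gametime is wrong by this much."""
    return speed_px_per_s * timing_error_ms / 1000.0
```

Using numbers from this post: `stutter_visible(33.3, 4.0)` is false (30fps non-strobed hides a 4ms error), while `stutter_visible(1.0, 2.0)` is true (a 1ms MPRT strobed display exposes a 2ms error). At an assumed motion speed of 1000 pixels per second, a 2ms error misplaces an object by 2 pixels.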

Ideally, you want perfect relative-time sync between gametime, rendertime, frametime, presenttime, photontime.

So, bottom line, have both an automatic and a manual setting, please.

Automatic: Easy for users, maybe two settings “Smoothness priority automatic” and “Latency priority automatic”. You would do a real-time automatic calculation as you do.

Manual: Easy to optimize for a sweet spot compromise for a specific game or specific emulator, perhaps loaded as a profile on a per-game basis or per-refreshrate/VT basis. Having a manual phase offset (e.g. fixed inputdelay) can keep things consistent.

And in the manual mode, please have a setting that enables/disables force flush e.g. Flush() after Present(), including both soft-flush and hard-flush. Hard flush will slow framerates by 50% but make present timing microsecond-accurate, which can be useful for lowering latency of low-GPU games such as emulators where low lag precise framepacing is more critical.
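To make the settings above concrete, here is one possible shape for them as data. All names are hypothetical (`SyncSettings`, `FlushMode`, `settings_for`, the profile key layout); this is just a sketch of "Auto by default, Manual via per-game or per-refreshrate/VT profiles", not how any existing tool stores its configuration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Tuple


class Mode(Enum):
    AUTO = "auto"
    MANUAL = "manual"


class AutoPriority(Enum):
    SMOOTHNESS = "smoothness"
    LATENCY = "latency"


class FlushMode(Enum):
    NONE = "none"
    SOFT = "soft"
    HARD = "hard"   # most precise present timing, at a large framerate cost


@dataclass(frozen=True)
class SyncSettings:
    mode: Mode = Mode.AUTO
    auto_priority: AutoPriority = AutoPriority.SMOOTHNESS
    phase_offset_us: int = 0            # manual phase offset (fixed input delay)
    flush: FlushMode = FlushMode.NONE   # only meaningful in manual mode


# Assumed profile key: (executable name, refresh rate in Hz, vertical total)
ProfileKey = Tuple[str, int, int]


def settings_for(profiles: Dict[ProfileKey, SyncSettings],
                 exe: str, refresh_hz: int, vertical_total: int) -> SyncSettings:
    """Return the matching manual profile, or the automatic defaults."""
    key = (exe.lower(), refresh_hz, vertical_total)
    return profiles.get(key, SyncSettings())
```

A user who never opens the settings gets `SyncSettings()`, i.e. automatic mode, while an emulator profile could select manual mode with a hard flush and a fixed phase offset.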

You can use waitable swapchains + full screen exclusive mode, to get full control over guaranteeing that your current Present() hits the next refresh cycle, regardless of GPU.

Remember to get the handle of the correct monitor for both the scanline check and the waitable swapchains, so your software works correctly on a different-Hz multimonitor system. RTSS Scanline Sync still has a small bug with that. (Also show an error message if mirroring is occurring. Mirroring makes a beam-raced VSYNC algorithm unreliable, but at least it’s possible to query the system for whether a multimonitor system is currently in mirrored mode.)

Theoretically you can detect VRR in a generic roundabout way on Windows by measuring Present()-vs-D3DKMTGetScanLine() if you wanted to go overkill on plug-n-play automaticness on any-sync multimonitor systems, for the ultimate GPU-agnostic plug-and-play scanline sync.

Display warnings about scanline sync unreliability every time:

- You detect monitor surround mode; or

- You detect monitor mirroring mode; or

- You detect battery saver power management mode; or

- You detect fullscreen exclusive mode is unavailable (unless you do the Present-before-VBI method); or

- (optional) You detect you’re running in a VM; or

- (optional) You detect VRR is enabled (via direct API call, or via heuristics on Present()unblock-vs-D3DKMTGetScanLine())

Sufficient APIs exist to do all the above, if one wished for something vastly easier than RTSS Scanline Sync.
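The checklist above can be sketched as a single function that turns detected conditions into user-facing warnings. The `SystemState` fields and the message wording are hypothetical, and how each flag gets detected (the actual API calls) is deliberately left out; this only shows the decision logic, including the Present-before-VBI exception.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SystemState:
    """Hypothetical snapshot of detected conditions (detection not shown)."""
    surround_mode: bool = False
    mirroring: bool = False
    battery_saver: bool = False
    fullscreen_exclusive_available: bool = True
    present_before_vbi: bool = False   # method that tolerates missing exclusive mode
    running_in_vm: bool = False
    vrr_enabled: bool = False


def reliability_warnings(s: SystemState, include_optional: bool = True) -> List[str]:
    """List the reasons scanline sync may be unreliable on this system."""
    warnings: List[str] = []
    if s.surround_mode:
        warnings.append("Monitor surround mode detected")
    if s.mirroring:
        warnings.append("Monitor mirroring detected")
    if s.battery_saver:
        warnings.append("Battery saver power management detected")
    if not s.fullscreen_exclusive_available and not s.present_before_vbi:
        warnings.append("Fullscreen exclusive mode unavailable")
    if include_optional:
        if s.running_in_vm:
            warnings.append("Running inside a virtual machine")
        if s.vrr_enabled:
            warnings.append("VRR is enabled")
    return warnings
```

An empty list means none of the listed conditions were detected, so no warning needs to be shown.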

Also, profiles will be useful to automatically disable the algorithm for games and VR apps that use their own built-in beam-raced VSYNC algorithms. I have contributed my lagless VSYNC algorithm (emulator raster synchronized with real raster) to a few emulators, and it is already implemented in WinUAE:

https://blurbusters.com/blur-busters-la ... evelopers/

As the resident expert of Present()-to-Photons (P2P) black box, I’m happy to answer questions about the black box. Don’t be afraid to ask more questions needed to become a Tearline Jedi, since what you’re doing requires an adequate understanding of P2P.