First off, it's a privilege to actually be posting here, given how long I've been following the site. Pandering out of the way: I've found myself on something of a quest recently to smooth out 30fps content (namely console-exclusive video games) on high-refresh displays. I know that most, if not all, consoles output 30fps in a 60Hz container as standard, showing each of the 30 frames twice to fill 60 refreshes and creating various doubling artifacts. My particular display is actually a CRT, specifically the Sony FW900 Trinitron (I'm very lucky), which is very flexible when it comes to resolutions and refresh rates, but obviously predates many of the modern luxuries designed to replicate CRT goodness. I have no issues whatsoever playing native PC content on this monitor, but as stated, 30fps console games create a number of afterimage artifacts, as you might imagine.

So I had an idea of implementing what modern displays use to replicate CRT motion, namely BFI (black frame insertion). I've tried BFI on a 60Hz display via RetroArch and seen how flicker-filled and terrible that can be, so my next idea is to use some type of frame rate conversion to put the source 60Hz signal into a 120Hz container and add BFI to that. The FW900 easily supports 720p and below at 120Hz or more, so it's not so much an issue of the monitor as of finding hardware or software that can do this specific thing. Using a custom resolution of 1280x800@120Hz (this monitor is 16:10), I was able to see that the BFI UFO test actually looks pretty great, even at 30fps.

I have some thoughts on this, some of which I've seen suggested on this very forum, though I'm unsure whether anything came of them. One idea is to use a low-latency capture card with my PC and apply some type of Windows- or GPU-driver-based BFI to the display as a whole. That way I can essentially "stream" the console's 60Hz output to myself on the monitor in 120Hz mode, with the PC inserting a black frame after each frame. This is guaranteed to add some latency, but depending on the game it might be worthwhile, if such a thing is possible.

Another idea is to use something akin to what retro gamers call "scanline generators," where a clock generator chip forces an analog video signal's RGB values to 0 on every other line as the frame is sent through, blanking out the even or odd lines of the image to artificially recreate the vintage CRT look. However, a friend gave me the brilliant idea of blanking out entire alternating frames rather than just lines of pixels within a frame, so a similar circuit could be timed to zero the RGB values of the analog video for 8.33ms and then pass them through normally for the next 8.33ms... something like that. Unfortunately, this only really applies to analog video displays like CRTs, but if this is the most viable method, then I will be selfish and pursue it wholeheartedly, haha.
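To put numbers on the frame-blanking idea: one 120Hz refresh lasts 1000/120 = 8.333... ms, so a gate driven by a free-running "8.33ms" timer would slowly slide out of step with the real refreshes. The sketch below is a hypothetical, idealized model (made-up function names, perfectly stable clocks, both starting aligned) comparing that timer against simply toggling the gate on every vsync pulse.

```python
def gate_drift_ms(timer_period_ms: float, refresh_hz: float, seconds: float) -> float:
    """Accumulated misalignment between a free-running gate timer and the video refreshes.

    Idealized, hypothetical model: both clocks are perfectly stable and start aligned at t = 0.
    """
    true_period_ms = 1000.0 / refresh_hz
    refreshes = refresh_hz * seconds
    return refreshes * abs(timer_period_ms - true_period_ms)


def gate_passes_video(vsync_count: int) -> bool:
    """Vsync-locked alternative: toggle on every vsync pulse, so the gate cannot drift.

    Even-numbered refreshes pass through untouched; odd-numbered ones are forced to black.
    """
    return vsync_count % 2 == 0


if __name__ == "__main__":
    for secs in (1, 10):
        print(f"after {secs:>2} s: {gate_drift_ms(8.33, 120.0, secs):.2f} ms of drift")
    print([gate_passes_video(n) for n in range(4)])
```

In this model the rounded timer loses about 0.4 ms per second, so after roughly 10 seconds the blanking has slipped by about half a refresh. Deriving the gate from the signal's own vsync avoids that entirely.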

Lastly, I've seen the idea floated of leaving the 60Hz signal itself untouched, but having hardware or software act as an intermediary that "converts" the 60Hz signal to 120Hz by inserting black frames in between the otherwise vanilla 60Hz frames. This seems to be the most elegant, "holy grail" solution, but I can't find any tools on the internet that do this. I don't have a projector or any sort of 3D display that can be told to black out alternating frames, and since I plan to use this largely for console games, options that traditionally only work for PC gaming don't seem to apply here...
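The conversion described above can be sketched as a simple re-timing of the frame sequence. This is a minimal illustration, not a real capture or scaler pipeline: frames are stand-in labels, and the function name and the `black` placeholder are hypothetical.

```python
from typing import Iterable, Iterator, TypeVar

Frame = TypeVar("Frame")


def insert_black_frames(frames_60hz: Iterable[Frame], black: Frame) -> Iterator[Frame]:
    """Re-time a 60Hz frame sequence into a 120Hz one with a black frame after every input frame."""
    for frame in frames_60hz:
        yield frame  # first 120Hz refresh: the untouched 60Hz frame
        yield black  # second 120Hz refresh: black


if __name__ == "__main__":
    # 30fps console content in a 60Hz container repeats every frame: A, A, B, B
    print(list(insert_black_frames(["A", "A", "B", "B"], "-")))
```

Note what happens with 30fps content: each unique image (A, then B) ends up flashed twice, which is the duplicate-image case the reply below addresses.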

Anyway, just thought I'd share my thoughts and see if anyone had any suggestions or wanted to chime in with their own. I'll continue down this rabbit hole myself, but since you guys are THE guys when it comes to killing motion blur artifacts, I figured this was the best place to ask. Thank you for your time, and your attention!

Chief Blur Buster wrote (05 Sep 2022, 17:11):

EDIT: To insert Chief Blur Buster's reply:

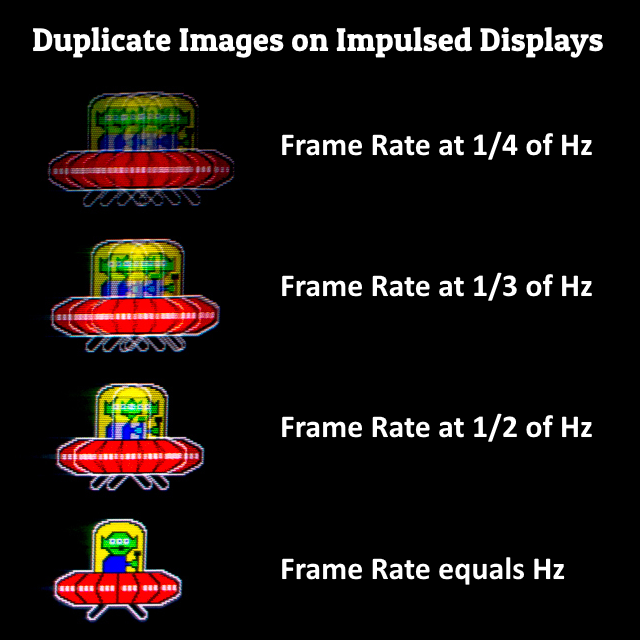

It reduces flicker, yes, but it introduces a duplicate-image effect, so it isn't a Holy Grail.

For More Info And Animation Demos, See blurbusters.com/area51

Multi-strobe definitely creates duplicate images. This is confirmed:

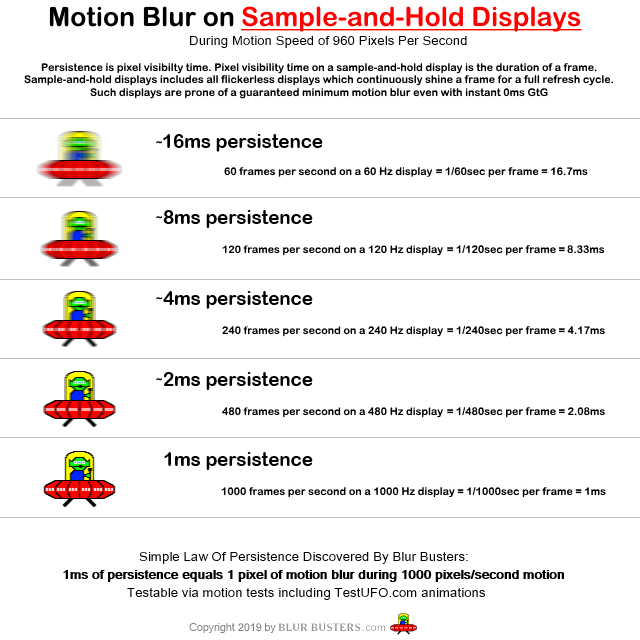

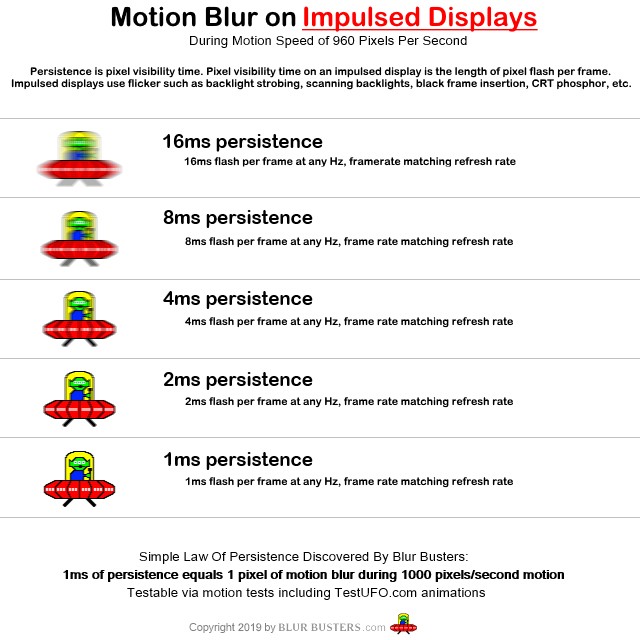

1 - Motion blur is proportional to pixel visibility time. For squarewave strobing where framerate = Hz, motion blur = pulse width. For non-strobed (sample-and-hold) displays, motion blur = frametime.
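The rule above can be turned into a quick calculator. This sketch assumes eye-tracked motion at a constant speed, and the function names and the 960 px/s example speed are made up for illustration.

```python
from typing import Optional


def persistence_ms(frame_rate_hz: float, pulse_width_ms: Optional[float] = None) -> float:
    """Pixel visibility time per unique frame, in milliseconds.

    Non-strobed (sample-and-hold): the frame stays lit for the whole frametime.
    Squarewave strobed with framerate = Hz: the frame is lit only for the pulse width.
    """
    if pulse_width_ms is None:
        return 1000.0 / frame_rate_hz
    return pulse_width_ms


def motion_blur_px(speed_px_per_s: float, visibility_ms: float) -> float:
    """Approximate blur width for eye-tracked motion: speed multiplied by visibility time."""
    return speed_px_per_s * visibility_ms / 1000.0


if __name__ == "__main__":
    speed = 960.0  # px/s, an arbitrary example panning speed
    print(motion_blur_px(speed, persistence_ms(60)))       # sample-and-hold 60fps
    print(motion_blur_px(speed, persistence_ms(60, 1.0)))  # 60Hz strobe, 1ms pulse
```

At 960 px/s this gives 16 px of blur for 60fps sample-and-hold versus 0.96 px for a 1ms strobe pulse at the same frame rate.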

Educational Animation Demo Link: TestUFO Variable Persistence BFI

(*IMPORTANT: Not for strobed displays or CRTs; run this on a sample-and-hold display of 120Hz or higher. If you only have 60 Hz, it will be super-flickery.)

2 - To avoid duplicate-image artifacts, each unique frame must be shown as one contiguous flash (a single light-output peak per unique frame).

Educational Animation Demo Link: TestUFO Black Frame Insertion With Duplicate Images
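Rule 2 can be checked mechanically by counting how many separate light-output peaks each unique frame receives. This is a hypothetical helper (the name and the `lit_pattern` encoding are assumptions), and it assumes the refresh rate is an integer multiple of the content frame rate, which covers the 30fps-in-120Hz console case from the original question.

```python
from typing import List, Sequence


def flashes_per_frame(content_fps: int, refresh_hz: int, lit_pattern: Sequence[bool]) -> List[int]:
    """Count the separate light-output peaks each unique frame gets during one second.

    lit_pattern repeats across refresh cycles: True = the refresh shows the image,
    False = a black frame. Consecutive lit refreshes of the same frame merge into one
    contiguous peak; a lit refresh after a black one starts a new peak.
    """
    if refresh_hz % content_fps:
        raise ValueError("sketch assumes refresh_hz is an integer multiple of content_fps")
    per_frame = refresh_hz // content_fps
    counts = []
    for frame in range(content_fps):
        peaks, prev_lit = 0, False
        for r in range(per_frame):
            lit = lit_pattern[(frame * per_frame + r) % len(lit_pattern)]
            if lit and not prev_lit:
                peaks += 1
            prev_lit = lit
        counts.append(peaks)
    return counts


if __name__ == "__main__":
    print(set(flashes_per_frame(60, 120, [True, False])))               # 60fps, BFI at 120Hz
    print(set(flashes_per_frame(30, 120, [True, False])))               # 30fps, same BFI
    print(set(flashes_per_frame(30, 120, [True, True, False, False])))  # 30fps, one long flash
```

With 30fps content, the alternating on/off pattern gives two peaks per unique frame (duplicate images), while lighting two consecutive refreshes and blacking out the next two keeps one contiguous peak per frame, at the cost of 30 Hz flicker.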


From the "Research" tab on the Blur Busters website, I have provided these two images as a reference for how to get identical display motion blur on a strobed display versus a non-strobed (sample-and-hold) display. (Important: CRTs impulse so briefly that no commercially available unstrobed sample-and-hold display can yet match the low motion blur of a CRT.)

This is all confirmed display motion blur science that has been known for a decade.

This is even demonstrable with software-based BFI; just click the links above. The higher the refresh rate, the better, because BFI persistence can only be simulated in refresh-cycle increments. So if your display is 144Hz, the persistence control of software-based BFI can only step in 1/144sec increments. That's why the TestUFO animations become more educational the higher the refresh rate you go.
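A small sketch of the refresh-cycle granularity point, listing the persistence settings software BFI can offer while keeping one contiguous flash per frame. The helper name is made up, and it assumes the refresh rate is an integer multiple of the frame rate (so the 144Hz case is shown with 72fps content).

```python
from typing import List


def bfi_persistence_options_ms(content_fps: int, refresh_hz: int) -> List[float]:
    """Persistence values software BFI can offer while keeping one contiguous flash per frame.

    Each frame is lit for k consecutive refreshes and black for the rest, so persistence
    can only move in steps of one refresh (1000 / refresh_hz ms). The largest value is
    plain sample-and-hold with no BFI.
    """
    if refresh_hz % content_fps:
        raise ValueError("sketch assumes refresh_hz is an integer multiple of content_fps")
    step_ms = 1000.0 / refresh_hz
    refreshes_per_frame = refresh_hz // content_fps
    return [round(k * step_ms, 2) for k in range(1, refreshes_per_frame + 1)]


if __name__ == "__main__":
    for fps, hz in ((60, 120), (60, 240), (72, 144)):
        print(f"{fps}fps @ {hz}Hz:", bfi_persistence_options_ms(fps, hz), "ms")
```

For 60fps, a 120Hz display only offers 8.33 ms or no BFI at all, while 240Hz offers 4.17, 8.33, 12.5 or 16.67 ms.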

Blur-free 60fps unavoidably requires modulating light output (one contiguous light-output peak per unique image, aka frame).

So because you're stuck with 60 light-output peaks (flickers) per second, you have to mitigate the flicker in various ways, as follows:

How Do You Fix 60 Hz Flicker As Much As Possible? (aka How To Simulate a CRT Better)

The main 60 Hz flicker workarounds are:

(A) Soften the leading edge and/or trailing edge of each flash. CRTs have phosphor decay, so the trailing edge of the motion blur is softened slightly (less harsh flicker).

(B) Make sure photons are continually hitting human eyes by using a rolling strobe (like a CRT) instead of a global strobe, which softens the leading and trailing edges of the flicker at the cost of a bit more blur/ghosting/phosphor-decay effects. That's why I'm a fan of future CRT simulators.
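A rough numerical sketch of (A) and (B) together: a rolling strobe whose trailing edge fades like phosphor. Everything here is an assumption for illustration, including the function name, the exponential decay model, the `decay_ms` value, and averaging each refresh from a handful of samples. It computes a brightness weight for each row of an emulated 60 Hz image on each refresh of a faster native display; a shader could then multiply each row by its weight (this sketch ignores the resulting brightness loss).

```python
import math
from typing import List


def rolling_scan_weights(native_hz: int, emulated_hz: int, rows: int,
                         decay_ms: float, samples: int = 16) -> List[List[float]]:
    """Brightness weight per native refresh (outer list) and per screen row (inner list).

    Hypothetical model: the simulated beam sweeps top to bottom once per emulated
    refresh, each row jumps to full brightness when the beam passes it, then fades
    exponentially with time constant decay_ms (a stand-in for phosphor decay).
    """
    if native_hz % emulated_hz:
        raise ValueError("sketch assumes native_hz is an integer multiple of emulated_hz")
    frame_ms = 1000.0 / emulated_hz  # one emulated CRT refresh
    sub_ms = 1000.0 / native_hz      # one refresh of the real display
    weights = []
    for k in range(native_hz // emulated_hz):
        row_weights = []
        for r in range(rows):
            beam_ms = frame_ms * r / rows  # moment the beam reaches row r
            total = 0.0
            for s in range(samples):
                t = k * sub_ms + (s + 0.5) * sub_ms / samples
                # Time since the beam last passed this row; % wraps back to the previous sweep.
                since_beam = (t - beam_ms) % frame_ms
                total += math.exp(-since_beam / decay_ms)
            row_weights.append(total / samples)  # average brightness over this native refresh
        weights.append(row_weights)
    return weights


if __name__ == "__main__":
    # 60 Hz CRT emulated on a 240 Hz display: 4 native refreshes per CRT refresh.
    for k, row_weights in enumerate(rolling_scan_weights(240, 60, rows=4, decay_ms=1.0)):
        print(k, [round(w, 3) for w in row_weights])
```

In the 240 Hz example each row is brightest on the native refresh during which the simulated beam passes it, so the lit band rolls down the screen over four refreshes instead of the whole screen flashing at once, and each row fades rather than cutting off abruptly.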

Note that there are other factors (brightness, image size, viewing distance, ambient lighting, flicker sensitivity differences between humans, etc.), but the above is what CRTs naturally did: a rolling flicker with a decay effect. This is what makes 60 Hz CRT flicker a lot more tolerable than 60 Hz squarewave flicker. Sensitivities vary a lot: there are people who can't stand a 60 Hz CRT, and on the opposite end of the spectrum, there are people who tolerate 60 Hz global strobing.

Some displays do (B), like the LG OLED rolling BFI, but that doesn't fix (A). So the current (2017-2022) LG OLED rolling strobe is less flickery but has far more motion blur (over 10x more) than the best strobed LCDs. Many love the LG OLED (as do I) as a compromise, however. But it is not (yet) a Holy Grail.

That's why I am a big fan of future CRT electron beam simulators (rolling strobe with fade-behind): once you have enough brute refresh rate, you can simulate at finer granularity. The higher the refresh rate, the shorter the CRT persistence that can be simulated (since persistence comes in refresh-time increments). If only the LG OLEDs could do 1000Hz+...

Long-term, I'm interested in seeing an open-source Windows Indirect Display Driver (based on that Microsoft sample) that can run various kinds of shader algorithms, such as:

- software-based BFI (for 120Hz displays)

- rolling-scan simulators (for ultrahigh Hz displays, 240Hz+ OLED or 360Hz+ LCD)

- software-simulated VRR (like testufo.com/vrr; the algorithm only works on sample-and-hold displays, not on CRTs)

- software-based LCD overdrive superior to manufacturer overdrive (e.g. allowing in-between overdrive settings and/or a choice of overdrive curves)

- etc.

An ADC isn't critical. To skip a video processor box or ADC, a simple GPU shader running in a modified Microsoft-sample Windows Indirect Display Driver, committed to GitHub, would do the job.
