Are Hand-Wave Pursuit Cameras Silly/Stupid?

Posted: 10 May 2020, 17:25

Valid criticism, but do you understand why they all look the same? (We do.)

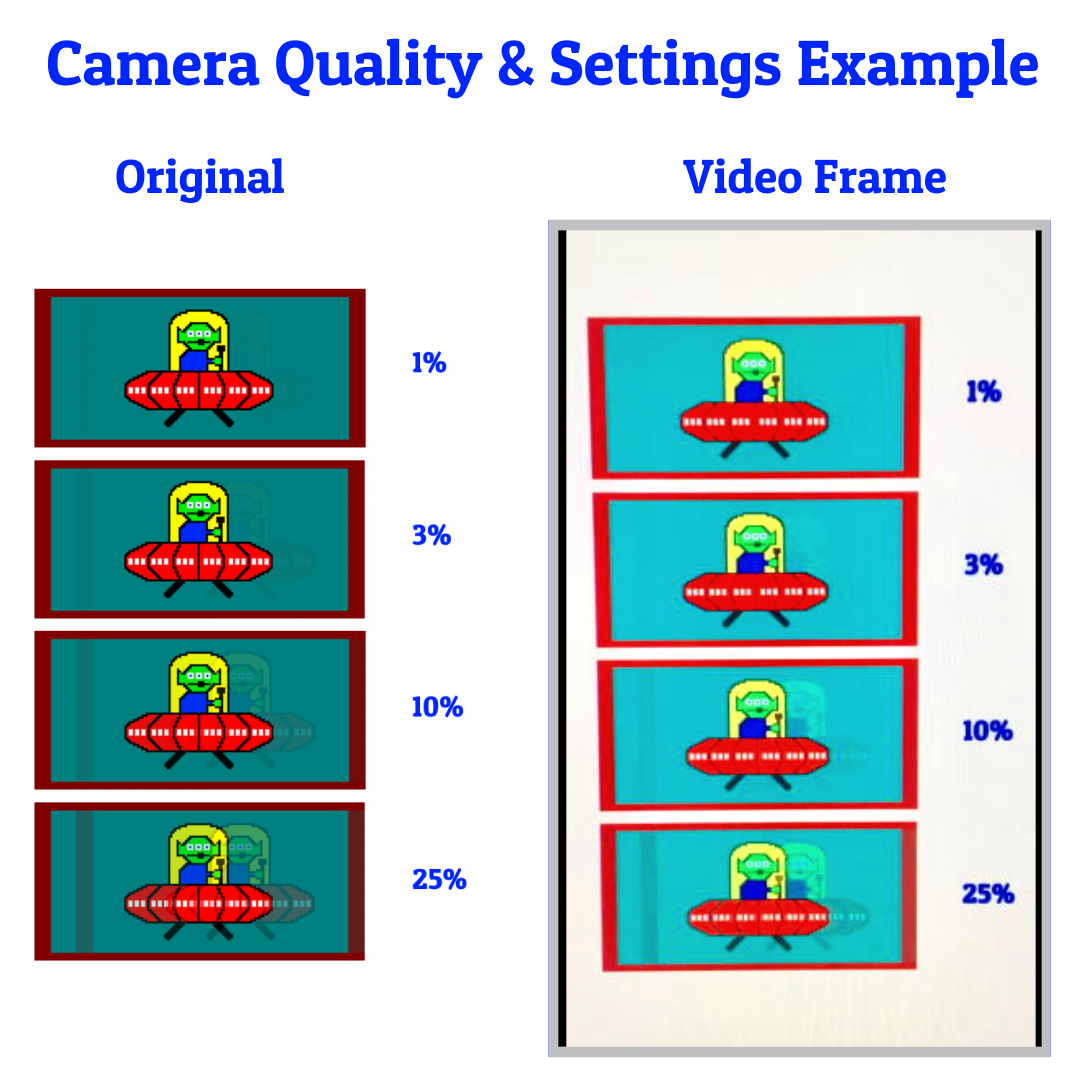

It's mostly a camera quality issue. Hobbyist pursuits can be crap, but some of the better hobbyist hand-wave pursuits are more accurate than the worst reviewer pursuits.

Actually, most motorized rails are worse. We tried! (See below for why.)

Also, for hand-waves, the bigger problem is that camera quality makes them all look the same. Processing can digitally equalize a TN photo to look the same as an IPS photo (blown-out highlights, saturated colors, denoising, etc.), so you have to ignore the colors and focus only on the amount of blur / ghosting / coronas.
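One way to act on "ignore the colors, only focus on the amount of blur" is to normalize a luminance profile before measuring it. The sketch below is a hypothetical metric chosen for illustration, not a Blur Busters method: it takes a row of pixel values sampled across one moving edge (dark to bright, left to right), normalizes it to 0..1, and reports the 10%-90% transition width in pixels. Because of the normalization, brightness and contrast differences between photos (for example a TN photo versus an IPS photo) drop out, and only the blur width remains. It assumes a single rising edge and linear interpolation between samples.

```python
import numpy as np


def _crossing(p, level):
    """Sub-pixel position where the normalized profile p first reaches level."""
    i = int(np.argmax(p >= level))  # index of the first sample at or above level
    if i == 0:
        return 0.0
    # Linear interpolation between the last sample below level and the first above it.
    return (i - 1) + (level - p[i - 1]) / (p[i] - p[i - 1])


def edge_rise_width(profile, low=0.1, high=0.9):
    """10%-90% transition width (px) of a dark-to-bright edge profile.

    Hypothetical blur metric: the profile is normalized to 0..1 first,
    so overall brightness and contrast do not affect the result.
    """
    p = np.asarray(profile, dtype=float)
    p = (p - p.min()) / (p.max() - p.min())
    return _crossing(p, high) - _crossing(p, low)


ramp = [0, 0, 0, 0, 10, 20, 30, 40, 50, 60, 70, 80, 90, 100, 100, 100]
brighter = [2 * v + 50 for v in ramp]  # same edge, different brightness/contrast
print(edge_rise_width(ramp), edge_rise_width(brighter))
```

Both profiles report the same width, which is the point: after normalization, two differently processed photos of the same blur compare equal.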

Also, people who only fix their gaze on crosshairs, and don't play anything with scrolling/panning/turning (eye-tracking), won't care as much about pursuits, because pursuits mainly show the amount of motion blur (and trailing artifacts) in eye-tracking situations.

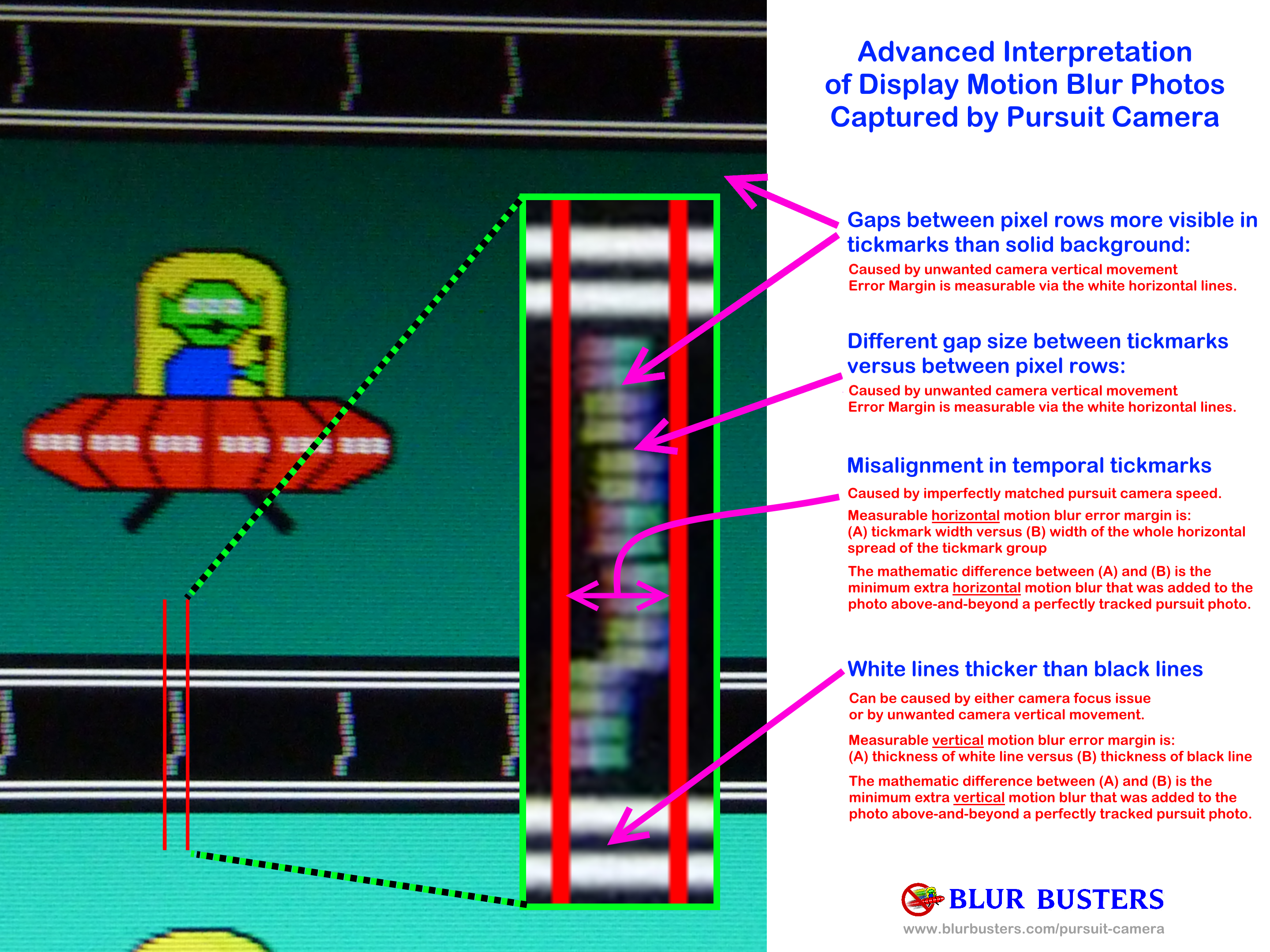

But with an upgraded camera, you'd be surprised: even several university graduates / PhDs now agree with me that there is validity in hand-waves. The great thing is that a properly exposed sync track is, metaphorically, a recorded certificate of error margins.

Some professional reviewers do hand-waves too (no rail), yet their photos look much better than all the hobbyist hand-waves here.

This is because of two factors: the sync track acting as a literal accuracy certificate, and sheer brute-force sampling (remember: the human eye is not on a rail).

The bigger problem is camera quality, and the willingness to practice versus the willingness to spend money. There were situations where a person with 10 hours of hand-wave practice outperformed a person with 10 hours of rail practice, because of various factors like rail vibrations and tripod vibrations (motorized and otherwise). Read on:

Ultimately, how you pursuit doesn't matter as long as it's consistently accurate to your required error margins, and the error margins are measurable in the sync track! Also, motorized setups are sometimes less accurate than a professional manually propelled rail, because of motor vibrations + camera vibrations + granular digital speed settings (slightly too fast versus slightly too slow). I've seen a $100 manually propelled rail be far, far, far more accurate than a $2000 motorized setup (see the peer-reviewed conference paper for proof). What matters is how accurately you set up your rig.
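The cost of a speed mismatch can be put in numbers with a simple idealized model (an assumption for illustration, not taken from the paper): if the camera moves at a constant speed that differs from the on-screen object's speed, the extra smear over one exposure is the speed difference times the exposure time. Inverting that gives the relative speed accuracy a rig needs to stay inside a chosen error margin. The function names and example numbers below are illustrative.

```python
def tracking_drift_px(object_speed_px_s, camera_speed_px_s, exposure_s):
    """Extra horizontal smear (px) from a constant speed mismatch over one exposure."""
    return abs(camera_speed_px_s - object_speed_px_s) * exposure_s


def required_speed_accuracy(object_speed_px_s, exposure_s, max_drift_px):
    """Largest relative speed error (0.01 = 1%) that keeps drift within max_drift_px."""
    return max_drift_px / (object_speed_px_s * exposure_s)


# Example: 960 px/s UFO, exposure of 4 refresh cycles at 120 Hz (1/30 s).
exposure = 4 / 120
print(tracking_drift_px(960, 960 * 1.01, exposure))  # camera 1% too fast
print(required_speed_accuracy(960, exposure, 0.5))   # half-pixel error budget
```

In this model, a motor that is only 1% off already adds about 0.32 px of smear at 960 px/s, and a half-pixel budget requires the speed to be right to within about 1.6%. That is one way to see why dialing in a motor's speed is so fiddly.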

The proof is in the sync track, no matter what your setup is: the accuracy ranges of the different methods overlap, like a Venn diagram. I've seen hand-wave pursuits that were superior to a cheap rail-based setup.

The moral of the story:

1. Prefer 4 tickmarks exposed

2. Photos include multiple sync tracks

3. Lightly processed: not overexposed, not underexposed, not denoised, etc.
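For item 1, assuming each tickmark in the sync track corresponds to one refresh cycle captured during the exposure (my reading of the tickmark convention, stated here as an assumption), the shutter time for a target tickmark count is just tickmarks divided by refresh rate. Exact fractions avoid rounding and make the result easy to match against a camera's 1/x shutter settings. The function names are hypothetical.

```python
from fractions import Fraction


def exposure_for_tickmarks(tickmarks, refresh_hz):
    """Shutter time (s) that captures the given number of refresh cycles.

    Assumes one sync-track tickmark per captured refresh cycle.
    """
    return Fraction(tickmarks) / Fraction(refresh_hz)


def tickmarks_for_exposure(exposure_s, refresh_hz):
    """Inverse: refresh cycles (tickmarks) captured by a given shutter time.

    Pass exposure_s as a Fraction; a float such as 1/30 is not exact.
    """
    return Fraction(exposure_s) * Fraction(refresh_hz)


for hz in (60, 120, 240):
    t = exposure_for_tickmarks(4, hz)
    print(f"{hz} Hz: 4 tickmarks -> {t.numerator}/{t.denominator} s")
```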

How you do the pursuit is up to you. I've seen clever setups.

But, of course, these photos are mainly relevant to eye pursuit (e.g. eye tracking while looking at panning motion in DOTA2, LOL, browser scrolling, FPS turning (looking at objects scrolling past rather than fixing your gaze on crosshairs), and similar situations).

Static photography is a good analog of static eye gaze (e.g. staring at crosshairs).

Pursuit photography is a good analog of pursuit eye gaze (e.g. eyeballs moving to track motion).

(RTings)

(HDTVtest.co.uk)

(Blur Busters Early prototype #1)

Wheeled LEGO device from the 2nd page of this thread.

(Blur Busters Early prototype #2 achieved this near-perfect LightBoost photo. It is a bit underexposed, but that represents the dimness of old LightBoost monitors, and the strobe crosstalk seen is very close to exact WYSIWYG.)

An enlargement of a pursuit photo using the above wood-block rails:

- This is from LightBoost 10% on an old ASUS monitor

- Successful WYSIWYG: The sync track was fully preserved

- Successful WYSIWYG: The sync track inversion artifact was preserved, including the tinting of the pixels (the patterning effect also seen in the sync track). You often saw this with flickering patterns on 6-bit+FRC TN LCDs (e.g. www.testufo.com/blackframes on a LightBoost monitor, where the 2nd UFO often gained amplified inversion artifacts).

- Successful WYSIWYG: The odd misalignment between the sync track and the monitor's inversion pattern, creating the 2-1-1-2-1-1 vertical patterning in the sync track (green-tinted pixels and cyan-tinted pixels), explained by the TestUFO graphics being 1 pixel lower than the monitor's native voltage-inversion pattern.

- Successful WYSIWYG: The vertical screendoor effect was preserved (darn near zero vertical vibration).

- Successful WYSIWYG: The horizontal error margin is so accurate that it preserved the subpixel-level blur artifacts, including the WYSIWYG red fringe along the left edge of the UFO dome; that part is truly WYSIWYG. This happens with monitors in low-MPRT strobe backlight operation: the subpixel color fringes can be preserved for moving objects, not just static objects (because red is the leftmost subpixel cell in RGB, a tiny 1/3-pixel-wide red fringe shows up on yellow objects on black backgrounds, which you notice with a magnifying glass).

- Successful WYSIWYG: The faint strobe crosstalk effect was successfully preserved, as seen on the UFO (LightBoost used to be really good on the VG278H and VG248QE).

- So many WYSIWYG elements were preserved, all the way down to subpixel artifacts.

Assumption: your eyes track the UFOs.

(Yes, that's not the same as real-world stationary gaze on crosshairs in CS:GO. But there are other games and situations, such as reading text while scrolling (web browsers), looking at things during RTS panning, seeing tiny details during fast sports panning, or identifying camouflaged enemies during high-speed, low-altitude helicopter flybys (Battlefield 3). And it is super important in VR, because head movements and shakiness create perma-panning, necessitating zero added blur above and beyond human vision. A slow 30-degree head turn over 1 second can create blurry panning on most older VR screens, producing a distracting world-defocusing effect as you turn your head. Instant nausea. See! Applied necessity.)
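The eye-tracking blur that pursuit photos capture follows a simple relationship on sample-and-hold and strobed displays: blur width in pixels is roughly motion speed (px/s) times persistence (how long each frame's pixels stay lit), so 1 ms of persistence gives about 1 px of blur per 1000 px/s. The VR numbers below assume a hypothetical 20 pixels per degree and a full-persistence 90 Hz panel; real headsets differ.

```python
def motion_blur_px(speed_px_per_s, persistence_ms):
    """Approximate eye-tracking motion blur width (px) = speed x persistence."""
    return speed_px_per_s * persistence_ms / 1000.0


# Desktop: 960 px/s panning on a 60 Hz sample-and-hold display.
print(motion_blur_px(960, 1000 / 60))

# VR: a slow 30 degree/s head turn, assuming a hypothetical 20 px per degree,
# on a full-persistence 90 Hz panel.
head_turn_px_s = 30 * 20
print(motion_blur_px(head_turn_px_s, 1000 / 90))
```

That works out to about 16 px and about 6.7 px of blur respectively. Strobing shrinks the persistence term, which is the point of low-MPRT modes such as LightBoost.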

Anyway, I haven't (so far) seen a motorized pursuit get this accurate in tracking error margin.

Sometimes blur is wanted, but sometimes blur is unwanted. Everybody is picky in different ways. Some hate tearing. Some hate blur. Some hate stutters. We respect it if you prefer blur in exchange for other aspects you value more.

Mind you, it helped that the camera did no noise filtering.

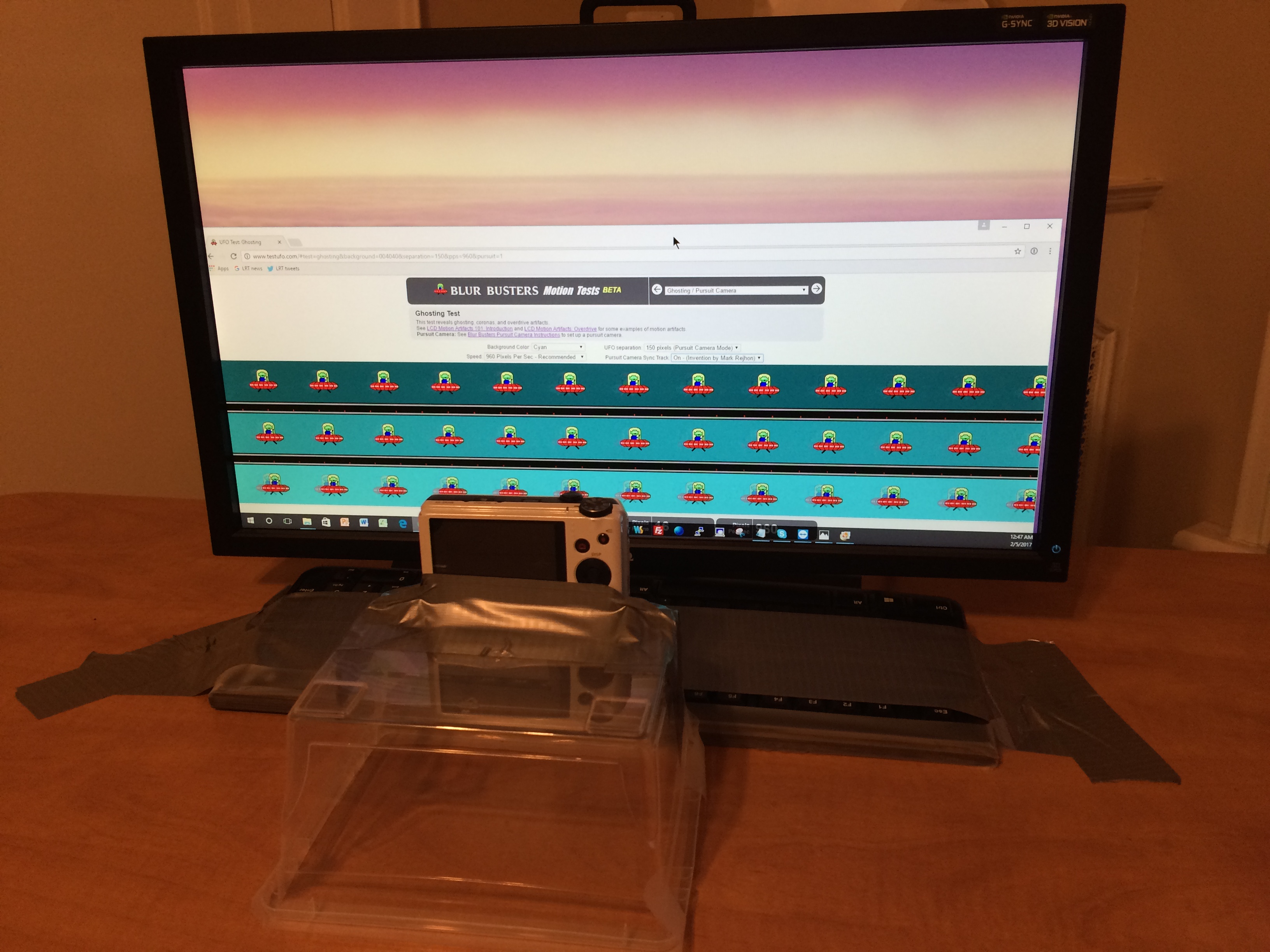

In this situation, I found that vise-mounted wooden blocks stabilized the rail better than camera tripods did, and actually performed better than the $30,000 rig. I've never seen a motorized camera track as well as Blur Busters Prototype #2, due to motor vibration or other factors, like motors running 1/10th of a pixel too fast or 1/10th of a pixel too slow.

It's counter-intuitive. Yes, I thought motorized was better. But with incredible amounts of practice, a manual pursuit plus the sheer number of samples can compensate.

Motorized can still be worth it, and motorized can automate things. Someday, I'd like to see someone invent an auto-compensating motorized setup with real-time feedback (e.g. a camera app that monitors the sync track automatically and sends real-time corrections via BLE or WiFi to an Arduino-controlled pursuit camera motor).
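Here is a minimal sketch of that kind of closed loop, assuming a hypothetical camera app that can read a signed drift (in pixels) off the sync track of each burst frame. There is no real BLE, WiFi or Arduino code here: the measurement is simulated from a known target speed, and the motor speed update is a plain proportional controller. `measure_drift_px`, `run_feedback_loop`, the gain and all numbers are illustrative assumptions.

```python
def measure_drift_px(motor_px_s, target_px_s, exposure_s):
    """Simulated sync-track reading: positive = camera ran ahead of the object."""
    return (motor_px_s - target_px_s) * exposure_s


def run_feedback_loop(target_px_s, start_px_s, exposure_s=1 / 30, gain=0.5, steps=20):
    """Proportional controller: turn each drift reading back into a speed correction."""
    speed = start_px_s
    drifts = []
    for _ in range(steps):
        drift = measure_drift_px(speed, target_px_s, exposure_s)
        drifts.append(drift)
        # drift / exposure is the speed error; remove a fraction (gain) of it per frame.
        speed -= gain * drift / exposure_s
    return speed, drifts


final_speed, drifts = run_feedback_loop(target_px_s=960, start_px_s=1000)
print(round(final_speed, 3), [round(d, 3) for d in drifts[:4]])
```

With a gain of 0.5, the speed error halves on every frame. A real rig would also have to cope with measurement noise and the sign convention of its own sync-track reader.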

Either way, people are inventing pursuit camera methods. It doesn't matter HOW you pursuit. The sync track is almost literally a cryptographic certificate of error margins, no matter how you tracked. That's why I invented the sync track: it embeds proof of tracking accuracy into the photo, and it has revolutionized display motion blur photography industry-wide over the last seven years.

The quality of the camera is more important than the method of pursuiting. You simply keep testing, testing, testing different pursuit methods until the sync track looks more perfect. Amazingly, many motorized setups (more than 90% of them) are far less accurate than the manual method, or take longer to set up, because it's so goddamn difficult to get the motor speed exactly correct. Repeated manual propulsions give you enough speed attempts that you can get a good manual pursuit within 10 minutes, compared to spending 2 hours trying to get the motor speed perfect on a motorized setup, and some motors just have too many issues.

Now, I've seen some excellent motorized setups, and the sync track is a great verifier of motor accuracy. But most motors don't have the real-time feedback on pursuit speed that you get manually with your own eyes: if the sync track tilts forward in the live preview (screen on camera or smartphone), you know you went too fast, and if it tilts backwards, you know you went too slow.
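The live-preview rule can be written down as a tiny classifier. This sketch assumes you have already measured the x-position of one ladder edge at several heights in the sync track (that measurement step is not shown), and it assumes "tilts forward" means the top of the ladder sits further along the direction of motion than the bottom. Image rows count downward from the top. `classify_sync_track_tilt` and its deadband are hypothetical; check the sign convention against your own live preview before trusting it.

```python
import numpy as np


def classify_sync_track_tilt(rows, edge_x, motion_dir=1, deadband=0.02):
    """Return 'too fast', 'too slow' or 'on target' from a fitted ladder tilt.

    rows: image row of each sample (0 = top of the photo).
    edge_x: measured x-position (px) of one ladder edge at each row.
    motion_dir: +1 if the objects move right, -1 if they move left.
    deadband: tilt (px of x per px of height) still treated as straight.
    """
    slope, _intercept = np.polyfit(rows, edge_x, 1)  # least-squares dx/drow
    # Rows grow downward, so a top that is ahead in the motion direction
    # gives a slope with the opposite sign to motion_dir.
    lean_forward = -slope * motion_dir
    if lean_forward > deadband:
        return "too fast"
    if lean_forward < -deadband:
        return "too slow"
    return "on target"


print(classify_sync_track_tilt([0, 10, 20, 30], [103, 102, 101, 100]))
```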

Sufficiently trained, you can dynamically speed up or slow down your pursuit as you manually slide your camera during a shutter-held-down burst shoot (current favourite: Sony Alpha a6000 mirrorless with 11fps 24-megapixel burst shooting on a U3-speed SDXC card; much lighter than a big SLR, and thus fewer vibrations on a sliding rail). So you get good pursuits in fewer attempts than with motorized approaches, because you can do 20 manual propulsion attempts, simply letting the camera slide on its own momentum.

An example of a good manual pursuit with a practically perfect ladder, completed on Blur Busters Prototype #2 (woodblock version). The woodblocks gave better stiffness than tripods, especially when vise-mounted to a desk.


However, I've very rarely seen a motor produce a sync track ladder as accurate as this one from the manual rail technique, and only at 5-figure pricing.

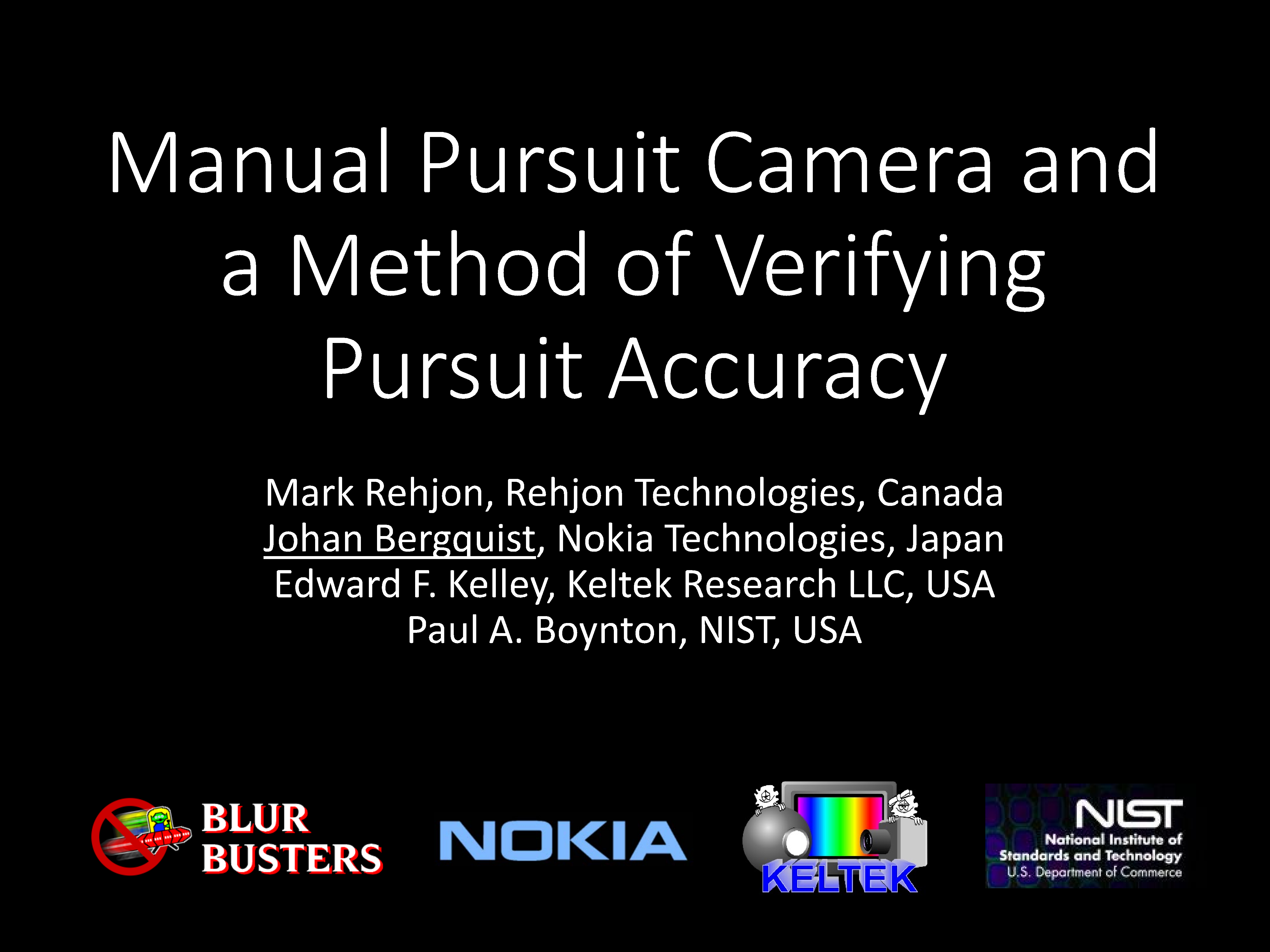

As long-time readers already know, a peer-reviewed paper, done together with NOKIA, NIST.gov and Keltek researchers, confirmed that a manually propelled pursuit camera can get the same accuracy as a $30,000 motorized rig!

A good reviewer can also simply set an error-margin goal and design the rig around it.

That said, many reviewers are bad...

Mind you, compression problems and other issues (denoising, overexposure, underexposure) remain endemic.

This is common in hobbyist pursuits, but also among the worse reviewers (whose pursuits are worse than the best hobbyist pursuits).

So interpretation of hobbyist pursuits may be much more limited because of these problems. That said, they can still reveal nice information like the amount of ghosting, the amount of coronas, strobe crosstalk, and other WYSIWYG effects that aren't filtered out by cheap cameras: the kind of stuff you normally see in the LCD Motion Artifacts FAQ and LCD Overdrive Artifacts FAQ.

I see a lot of problems with pursuit photography. Yes. But the fact that lots of good stuff exists now is great. I love reviewers that show off their sync tracks (pictures of the sync track). You don't see those on all review websites, and without them it's hard to trust a site's accuracy. The sync track, again, is literally like a certificate of camera tracking accuracy. One should not care how someone tracks the camera, as long as the certificate of tracking looks good. Those A+ pursuits are quite obvious when I see the sync track. It's also nice when the EXIF is included with an unretouched photo, so I know what camera and settings were used, and can trust the results even more.

I prefer a rail. The best rails do better.

However... The bottom line is that the best hand-waves are superior to the worst rail-based.

Scientifically, brute-force sampling helps: 30fps video creates 1800 freeze-frames in just 1 minute, meaning you can spray literally 1000+ photo attempts in a single minute of hand-waving. The sheer number of samples compensates for the inaccuracy of hand-waving, because with multiple orders of magnitude more samples, there's a good chance that a sufficiently accurate pursuit occurred.

It might only happen in 1 out of 1000 video freeze-frames that the pursuit falls within your desired error margin. Video acts as a stand-in for burst shooting: it gives 1000 photos instead of 1, simply by waving multiple times, and one file contains all those thousands of "photos". A video player can single-step through them to find the perfect freeze-frame. There is also no cost outlay: no need to buy a rail if you have the time to become a professional hand-waver after 30 minutes of practice.
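The brute-force argument can be sketched as a probability calculation. Assuming each freeze-frame independently has the same small chance p of landing inside your error margin (optimistic, since neighboring video frames are correlated), the chance of at least one usable frame among n frames is 1 - (1 - p)^n. The helper names are illustrative.

```python
import math


def chance_of_usable_frame(p_per_frame, frames):
    """Probability that at least one of `frames` independent tries is usable."""
    return 1.0 - (1.0 - p_per_frame) ** frames


def frames_needed(p_per_frame, confidence):
    """Smallest number of independent frames giving at least `confidence`."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_per_frame))


# 1 good frame in 1000, one minute of 30fps video (1800 frames).
print(chance_of_usable_frame(0.001, 30 * 60))
print(frames_needed(0.001, 0.95))
```

Under these assumptions, one minute of 30fps video gives about an 83% chance of at least one frame inside the margin, and about 3000 frames (under two minutes at 30fps) push that to 95%.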

The worst reviewers' rail-based approaches are much worse than the best hobbyist video-based approaches. There is scientific validity in using a zero-cost pursuit camera, but it's easy to screw up. Eventually I'd like to see an app that automates it intelligently and creates results similar to a rail. (Analog human-brain-driven human eyeballs are not on a rail, after all, and technology has come to a point where video is a suitable stand-in under certain circumstances.)

Now, it's easy for people to do a crappy attempt at video, to the point where the pursuit is useless. You want a recent smartphone, 3rd-party software, the ability to disable as much of the noise filtering as possible, manual control over per-frame video settings, and everything else. Then the video becomes useful.

One can diss video pursuits all they wish. Another option is burst-shooting hand-waves with a Sony Alpha a6000 (it works well too: stiffen your arms while holding the camera and shutter, while spinning a computer chair to track the motion). You can get maybe 100-200 photos in about 5 minutes, then use a good photo viewer to quickly leaf through them. But most people only have smartphones and a video player, and with a good manual app (one that lets you fix exposure, ISO, focus, etc. in the video), that provides the opportunity of a $0 pursuit camera.

Refresh cycles don't even need to be aligned with the shutter, because human eyes don't align with refresh cycles, and stacking multiple exposures compensates. Though for temporally generated tech (e.g. DLP, plasma, etc.), you ideally want exposures that are an exact multiple of the refresh cycle, and that span an integer multiple of the dither cycle (e.g. 2-frame dither cycle, 4-frame dither cycle). That way, you don't get artifacts from truncated dithering, and you more accurately represent the averaging (integration) behaviour of human vision; that's the principle of multi-exposure stacking in a pursuit camera.
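The exposure rule for temporally generated displays can be checked with exact fractions. This sketch assumes you know the panel's refresh rate and its temporal dither cycle in frames (e.g. 2 or 4); an exposure counts as aligned when it spans a whole number of refresh cycles and that number is a multiple of the dither cycle. The function names are illustrative.

```python
from fractions import Fraction


def aligned_exposures(refresh_hz, dither_cycle_frames, count=3):
    """First `count` exposure times (s) that span whole dither cycles."""
    one_cycle = Fraction(dither_cycle_frames) / Fraction(refresh_hz)
    return [one_cycle * k for k in range(1, count + 1)]


def is_aligned(exposure_s, refresh_hz, dither_cycle_frames):
    """True if the exposure covers an integer multiple of the dither cycle.

    Pass exposure_s as a Fraction (e.g. Fraction(1, 15)); floats such as
    1/15 are not exact and would make the integer check unreliable.
    """
    refresh_cycles = Fraction(exposure_s) * Fraction(refresh_hz)
    return (refresh_cycles.denominator == 1
            and refresh_cycles.numerator % dither_cycle_frames == 0)


print([str(t) for t in aligned_exposures(60, 4)])
print(is_aligned(Fraction(1, 30), 60, 4))
```

At 60 Hz with a 4-frame dither cycle, 1/30 s covers only 2 refresh cycles and would truncate the dither pattern, while 1/15 s covers exactly one full cycle.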

To do it professionally, though, you need video that is as good as 30fps burst shooting, and that usually requires expensive cameras. But some newer phones have manual apps that enable an ultra-high-bitrate 1080p or 4K mode (thanks to their 4K and 8K capability) with less filtering than older video. Video has been used on a rail before, too, and that is valid if the video is good enough.

In the long term, apps will choose the perfect freeze-frame automatically, and a rail will ultimately stop mattering, because software plus ever-improving camera sensors can duplicate a human eyeball's stabilization system. Personally, I'd prefer the app to use 10fps burst shooting over 30fps video, for higher-quality photos/freeze-frames, but the ultimate goal is to not require a rail at all.
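As a rough illustration of automatic freeze-frame picking, the sketch below scores a grayscale crop of the sync track from each frame and keeps the highest-scoring one. The score (mean squared difference between neighboring columns) is a hypothetical sharpness proxy chosen for this example, not an established Blur Busters metric: horizontal tracking error smears the ladder edges across more pixels, which shrinks each edge step. Extracting frames and locating the sync track are assumed to happen elsewhere.

```python
import numpy as np


def sync_track_sharpness(crop):
    """Hypothetical sharpness score: mean squared horizontal pixel difference."""
    crop = np.asarray(crop, dtype=float)
    return float(np.mean(np.diff(crop, axis=1) ** 2))


def pick_best_frame(crops):
    """Index of the crop with the sharpest (least smeared) sync track, plus all scores."""
    scores = [sync_track_sharpness(c) for c in crops]
    return int(np.argmax(scores)), scores


sharp_row = [0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 1, 1]
smeared_row = [0, 0, 0.5, 1, 1, 0.5, 0, 0, 0.5, 1, 1, 0.5]
best, scores = pick_best_frame([[smeared_row] * 4, [sharp_row] * 4])
print(best, scores)
```

Squaring matters here: the plain sum of absolute differences across a monotonic blurred edge equals the edge height no matter how wide the blur is, so it would not rank frames by smear.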

The devil is in the details (crappy videos by inexperienced members), but we're not discouraging the practice, because there is validity, and it lets us study the shortcomings of cheap compression codecs too. Even when I compliment the tracking, these videos are not as good as the best video pursuits made with a professional camera by a better-trained hand-waver.

This doesn't mean there aren't major problems with hobbyist pursuits, including all of the recent ones. However, I still encourage them, because they are helping me discover which smartphone cameras do a good job, and helping me brainstorm future apps that simulate the stabilizing nature of an analog human eyeball, making rails less necessary, etc.

Anyway, even Ph.D.s have agreed that the hand-wave method has validity, because of the brute-force sampling factor (a sheer number of photo samples compensates for decreased accuracy, yielding more opportunities for better-than-rail accuracy).

Once graduates/researchers learn more of the details, they stop laughing, in exactly the same way people around here no longer laugh about 1000Hz displays.

P.S. Anyone want to help out in creating a hand-wave pursuit app? Perhaps make it your Ph.D. project? Use various Matlab / ffmpeg / shader / AI algorithms / etc. to replace the rail while automating the analysis and ensuring WYSIWYG effects. Today's camera software and smartphone sensors are crap, but they are rapidly getting better, and emulating a human eyeball without a rail will progressively get easier and easier.

______________

Blur Busters is obsessed about display motion characteristics. Full stop. Mic drop.