thatoneguy wrote: ↑27 Oct 2020, 02:02

From what I've read from one of the earliest articles MicroLEDs have sub-nanosecond switching time. That's picosecond response time.

From what I remember reading somewhere CRT's have picosecond rise and fall though I'm not exactly sure.

The rise and fall time (GtG) is not as important as motion blur (MPRT). Those unaware can also read Pixel Response FAQ: GtG versus MPRT to understand that GtG is the pixel transition time (rise and/or fall), and MPRT is the pixel visibility time, which is more important for display motion blur.

From GtG perspective instead of MPRT perspective, CRT has near-instant rise time but slow fall time, which is why you see CRT phosphor ghosting. Also, CRT naturally is an impulsed (strobed) tech, so it's naturally zero motion blur, despite the fact that real life does not flicker/strobe. So CRT was just assumed as the Natural Way To Display Things. But that's not true (because of the flicker).

The Natural Way to Display Things is really analog motion (aka infinite frame rates) to bypass all the Stroboscopic Effect of Finite Frame Rates from the artificial humankind invention of digitally flipbooking through static images to emulate analog moving images. Instead, the Better way to emulate real life is brute framerates at brute refresh rates (as well as potential future theoretical framerateless displays, which is currently hard for most people to wrap their brain around, much like Quantum Mechanics versus Newtonian Math).

We can be perfectly happy with 0.1ms real-world GtG (100us) as long as it's consistently fast for all 65,536 transitions of an 8-bit panel (see

The Complexity of Measuring GtG), if we're aiming at 1.0ms MPRT. To eliminate human-visibility of rise-fall issues (e.g. 0.1ms GtG100% for all colors, not GtG10-90% for a cherrypicked color) only needs to be a tiny fraction of pixel static time (e.g. the 1ms refreshtime). OLED is very good at doing this; witness 120Hz on an OLED; it follows Blur Busters Law almost exactly mathematically to a near-perfect tee, because Blur Busters Law (MPRT100% simple pixel mathematics) becomes beautifully perfect at GtG=0 about 1ms of pixel visibility time (MPRT100%) translates to exactly 1 pixel of eye-tracking-bsaed motion blur per 1000 pixels/second motion. Those unfamiliar with Blur Busters Law can read

Blur Busters Law: The Amazing Journey To Future 1000Hz Displays as well as

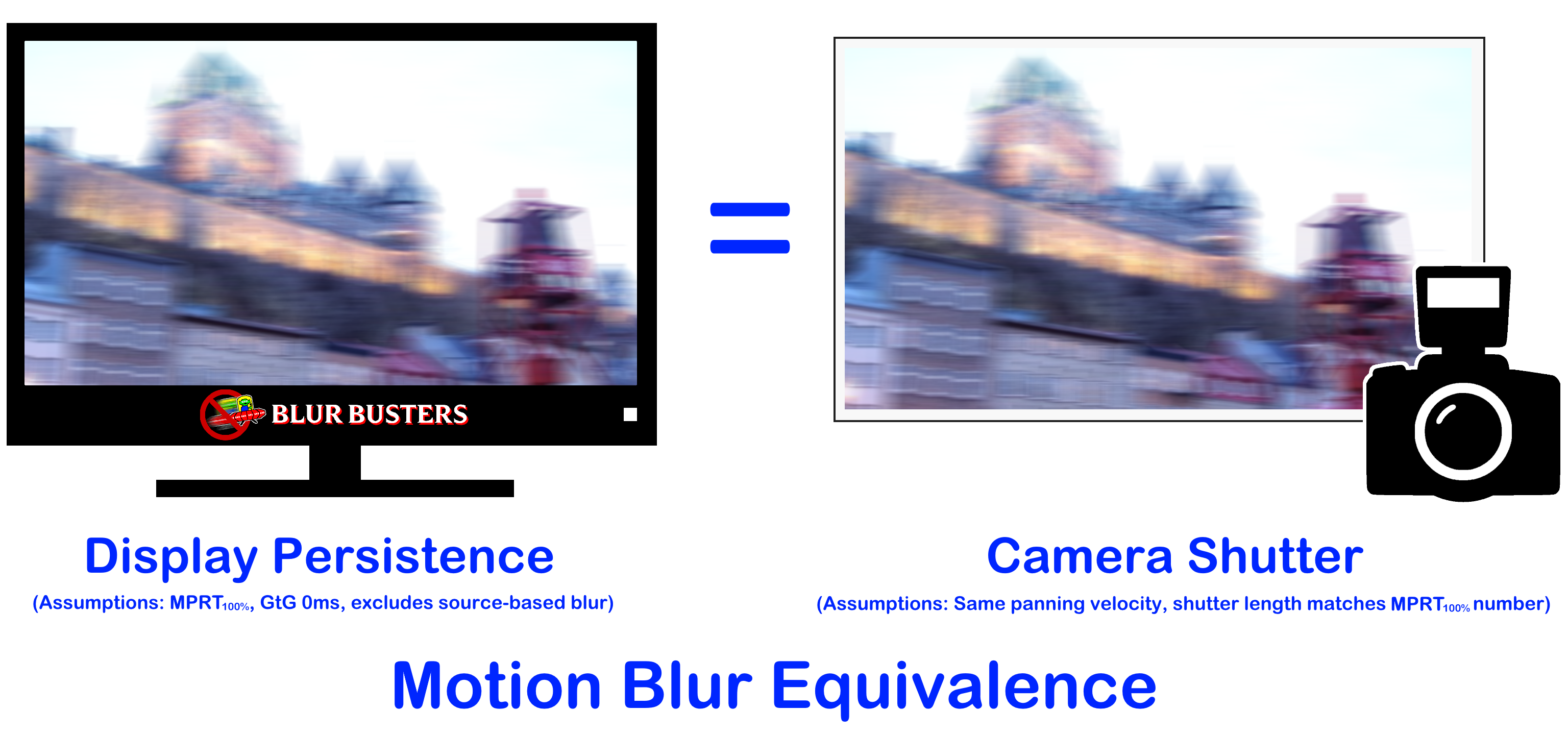

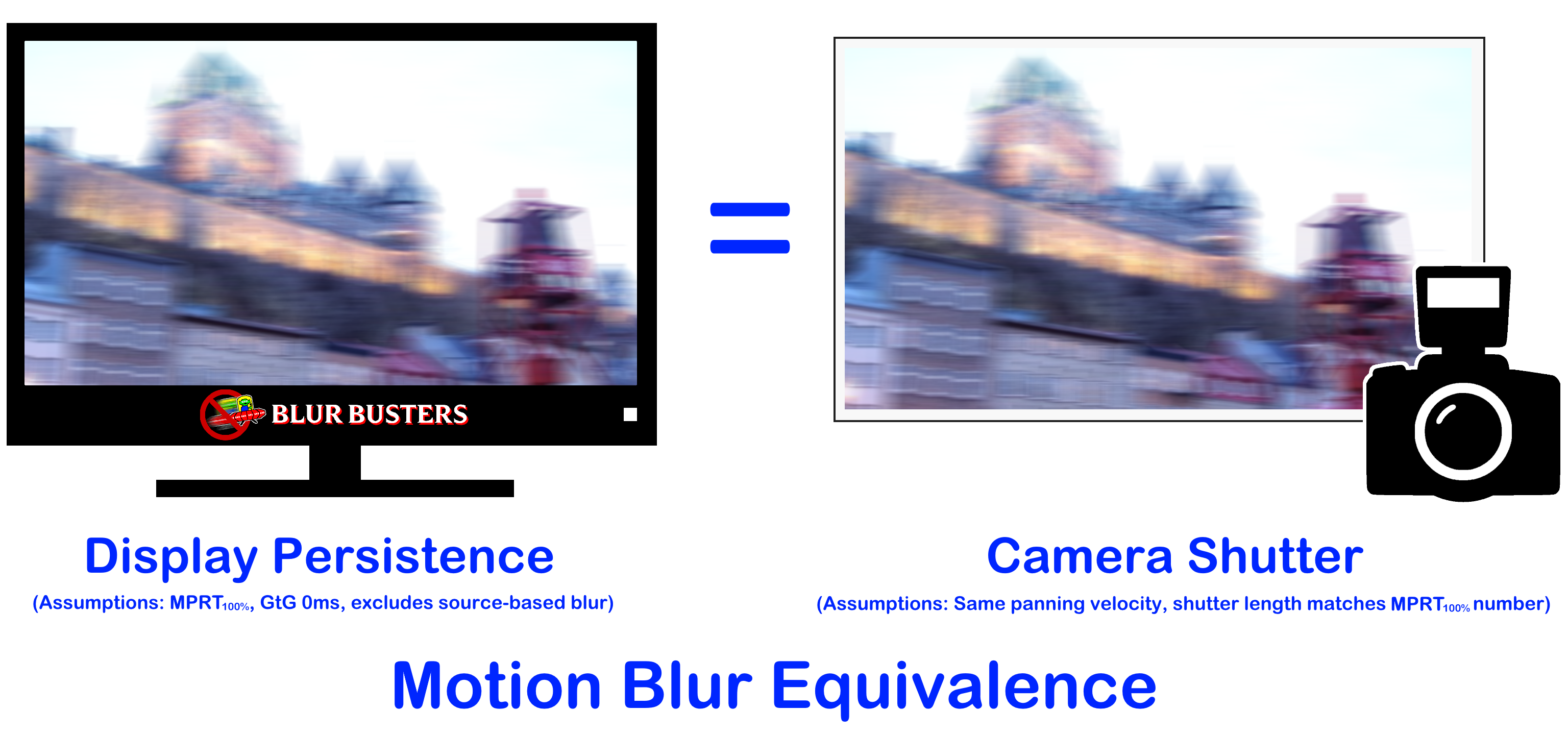

GtG versus MPRT, and understand the MPRT equivalence to a camera's shutter:

Which means 240fps on a 240Hz 0ms GtG display, at the same screen panning speed (turning/scrolling/strafing + headturning in virtual reality), is exactly the same motion blurring as a camera shutter at 1/240sec for the same equivalent physical camera-panning speed.

TL;DR: 240fps on 240Hz screen = same blur as 1/240sec shutter on SLR camera

You know your smartphone photos become blurry if you pan while taking a picture, and it gets sharper if shutter is faster? Same thing with display refresh rates & frametime (pixel visibility time) on sample-and-hold displays. People still see benefits of ever faster and faster camera shutter speeds in fast-paced material, which underscores the need for geometric improvements to frame rates and refresh rates, needed to achieve blurless sample-and-hold.
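For anyone who wants to napkin-math the shutter equivalence, here is a minimal Python sketch. It assumes an ideal sample-and-hold display (GtG = 0, frame rate equal to refresh rate) and perfect eye tracking. The function names and the 960 pixels/second panning speed are just illustrative choices, not anything from the linked articles.

```python
def mprt_ms_sample_and_hold(refresh_hz: float) -> float:
    """Pixel visibility time (MPRT100%) in ms, assuming GtG = 0 and fps == Hz."""
    return 1000.0 / refresh_hz


def motion_blur_px(mprt_ms: float, speed_px_per_s: float) -> float:
    """Blur Busters Law: 1 ms of visibility time = 1 px of blur per 1000 px/s."""
    return mprt_ms * speed_px_per_s / 1000.0


def shutter_blur_px(shutter_s: float, speed_px_per_s: float) -> float:
    """Blur smeared across one camera exposure while panning at the same speed."""
    return shutter_s * speed_px_per_s


if __name__ == "__main__":
    speed = 960.0  # px/s, an arbitrary panning speed picked for the example
    for hz in (60, 120, 240, 1000):
        mprt = mprt_ms_sample_and_hold(hz)
        display_blur = motion_blur_px(mprt, speed)
        camera_blur = shutter_blur_px(1.0 / hz, speed)
        print(f"{hz:>4} Hz: MPRT {mprt:.2f} ms, "
              f"display blur {display_blur:.2f} px, "
              f"1/{hz} sec shutter blur {camera_blur:.2f} px")
```

The display blur and the shutter blur come out the same at every refresh rate, which is the whole point of the camera analogy.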

In other words: [Theoretical napkin-exercise self-question] Who cares about nanosecond GtG times if we're aiming at blurless sample-and-hold with MPRTs in the hundreds of microseconds? The motion blur of MPRT will hide the GtG, and you only need to retina-out MPRT sufficiently. Fast GtG is a convenience; it simplifies strobeless low-MPRT engineering. Low strobeless MPRTs are impossible when GtG overlaps multiple refresh cycles (e.g. 33ms+ 60Hz LCDs).

GtG weaknesses are amplified/hidden depending on how you do it. During direct pixel strobing where rise/fall is GtG-powered (i.e. strobed OLED or panel-based/software BFI), GtG fastness is important, since artifacts from GtG:MPRT ratios are massively amplified in this situation. I'm using GtG(100%) and MPRT(100%) here, all colors, for math simplicity.

GtG-distortions in strobing: Now, a GtG:MPRT ratio of 1:100 can still have human visible artifacts in strobing if strobing is GtG-powered, since a distorted curve in 1% of pixel visibility length may bump color shades of pixels a few shades off (like RGB(128,131,129) inaccuracy versus RGB(128,128,128) on a spectrum of RGB(0,0,0) to RGB(255,255,255)), but consistent GtG can fix that.

GtG-distortions in strobeless blur reduction: But during strobeless blur reduction, GtG simply needs to be below the MPRT noisefloor, and that's it. The GtG:MPRT ratio is far more lenient and GtG is easily pushed below the noisefloor. Focus on retina-ing out MPRT, and put GtG a bit below that noisefloor, and voila! A GtG:MPRT ratio of 1:10 during 1000fps@1000Hz sample and hold creates a 0.1ms:1ms ratio. The blur difference is virtually unnoticeable at these scales, and a lot of color distortions (from GtG asymmetry between different color channels) are better hidden -- the blur of full-persistence MPRT more easily completely hides the GtG limitation once the GtG is a tiny fraction of a refresh cycle.
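A rough way to sanity-check a GtG:MPRT ratio for strobeless sample-and-hold, as a Python sketch. It assumes MPRT100% equals one full refresh period (fps == Hz, no strobing). The `max_ratio=0.1` threshold is a hypothetical stand-in for "a bit below the noisefloor", borrowed from the 1:10 example; it is not a measured visibility threshold.

```python
def gtg_to_mprt_ratio(gtg_ms: float, refresh_hz: float) -> float:
    """GtG as a fraction of full-persistence MPRT (one refresh period)."""
    mprt_ms = 1000.0 / refresh_hz  # sample-and-hold: pixel visible for the whole refresh
    return gtg_ms / mprt_ms


def gtg_below_noisefloor(gtg_ms: float, refresh_hz: float, max_ratio: float = 0.1) -> bool:
    """True if GtG is a small enough fraction of MPRT (threshold is an assumption)."""
    return gtg_to_mprt_ratio(gtg_ms, refresh_hz) <= max_ratio


if __name__ == "__main__":
    cases = [
        (0.1, 1000),   # 0.1 ms GtG at 1000fps@1000Hz
        (1.0, 240),    # 1 ms GtG at 240 Hz
        (33.0, 60),    # old slow LCD: GtG overlaps multiple refresh cycles
    ]
    for gtg_ms, hz in cases:
        ratio = gtg_to_mprt_ratio(gtg_ms, hz)
        verdict = "hidden" if gtg_below_noisefloor(gtg_ms, hz) else "visible"
        print(f"GtG {gtg_ms} ms @ {hz} Hz -> GtG:MPRT = {ratio:.2f} ({verdict})")
```

A ratio above 1.0 means GtG spills past a single refresh cycle, which is the slow-LCD case where low strobeless MPRT is impossible.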

GtG:MPRT ratios are less critical during strobeless blur reduction -- aka using brute frame rates & brute refresh rates. It was a big problem when LCDs were so slow that real-world GtG overlapped refresh cycles. But in the discussion of nanosecond LED switching times, it's meritworthy for a scientist/researcher to understand which nanoseconds are important and which nanoseconds are less important, for specific nuances of display engineering. When you build a LED display, you practically never have to worry about GtG limitations (until you are dealing with long tiny microwires trying to switch distant transistors), and can thus focus on other priorities.

Just like Henry Ford went through the dumps in the 1920s looking at rusty cars to figure out which Model T Ford car parts wore out more than others, to figure out how to make some parts more cheaply and other parts more durably -- sometimes you discover overkill and discover what's important. Realworld GtG100% simply needs to be a tiny fraction of MPRT100%, and it's really game over. That said, GtG inconsistencies can lead to color calibration difficulties (e.g. slower pixel responses for certain colors creating tinted distortion in ghosting/motionblur). But direct-color LED (no white phosphor) generally has none of that problem, so we can consider GtG equal and easily realworldable for all color combos. Now the correct engineering target thus becomes MPRT, and thus raising brute refresh rates and brute frame rates. Prioritization FTW!

It was found that the turn-on time is on the order of our system response (30 ps) and the turn-off time is on the order of 0.2 ns and shows a strong size dependence.

Deliciously fast. But we're still limited by very low pixel refresh rates, though it's actually easier than expected to get kilohertz refresh rates via parallelized scanout logic on MicroLED modules. For example, JumboTron modules (the 32x32 or 64x64 RGB LED matrices that you can buy off Alibaba or AliExpress for under $10 each) are often 600Hz already due to PWM behavior, and a minor modification to those, combined with new logic in a centralized controller, permits 600 frames per second. And I have an algorithm to eliminate zig-zag combing artifacts in panning scenery on concurrent multiscanning.

The great thing is we can mostly forget about discrete LED GtG (unless power-transistor switching is slow) and focus on improving MPRT, which is easy to halve simply by doubling refresh rate & frame rate, because we already know GtG won't be a bottleneck in MPRT progress on MiniLED/MicroLED/etc. OLED GtG is slower only because of the massive number of tiny microwires reaching tiny transistors, which means that gate switching may be too slow. The light emitting element of the OLED pixel is not the limiting factor; the limit is the difficulty of telegraphing a voltage over vast distances over extremely tiny wires in an ultra-brief manner, to get an OLED active matrix transistor switched fast enough.
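To put a number on "halve MPRT by doubling refresh rate", here is a small Python sketch that counts how many doublings it takes to reach a target MPRT. It assumes GtG is negligible and frame rate keeps up with refresh rate; the function name and the starting rates are only examples.

```python
def doublings_to_target(start_hz: float, target_mprt_ms: float) -> tuple[int, float]:
    """Count refresh-rate doublings until sample-and-hold MPRT <= target.

    Returns (number_of_doublings, resulting_refresh_hz).
    Assumes GtG ~ 0 and fps == Hz, so MPRT = 1000 / Hz milliseconds.
    """
    if start_hz <= 0 or target_mprt_ms <= 0:
        raise ValueError("start_hz and target_mprt_ms must be positive")
    doublings = 0
    hz = start_hz
    while 1000.0 / hz > target_mprt_ms:
        hz *= 2  # each doubling halves the pixel visibility time
        doublings += 1
    return doublings, hz


if __name__ == "__main__":
    for start in (60, 240, 1000):
        n, hz = doublings_to_target(start, target_mprt_ms=1.0)
        print(f"{start} Hz -> {n} doublings -> {hz:g} Hz "
              f"(MPRT {1000.0 / hz:.2f} ms)")
```

Starting from 60Hz, four doublings land at 960Hz (about 1.04ms), so it takes a fifth doubling to get strictly under 1.0ms.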

That's really the only reason for OLED GtG being slower than MicroLED/MiniLED GtG -- panel fabrication means creating tens of thousands of micrometers-wide wires in a modern 4K or 8K LCD panel, so it's hard to get electricity from A to B quickly, and even trying to push 10 volts down those matrix microwires (to switch the pixel transistors faster) is like trying to put 5 megavolts into a power transmission corridor -- they'll leak or arc across each other. So you have a sweet spot voltage that may switch a transistor a little slower than we want. Nonetheless, today's panels (LCD and OLED) are an engineering achievement. Even if we bitch and moan about panel imperfections, it's a miracle we pay only $200 for a 24" desktop LCD. I still remember the days in the 1980s when a 3 inch active matrix LCD approached a thousand dollars, before quickly falling to a few hundred dollars in the late 1980s, for portable TVs or airplane cockpits when industry wanted big ultra light weight color displays.

Manufacturing a single LCD or OLED is literally like manufacturing a computer chip these days, with lithography techniques to print millions of transistors onto glass, at integrated circuit densities higher than an Intel 4004 CPU -- across the whole panelglass!!! Some integrated circuits are built into the edges of some modern panels, as part of the same lithography printing -- basically simple ASICs like row-column addressors built into the edges of (some) modern LCDs.

Anyway, kilohertz refresh rates aren't going to be unobtainiumly expensive forever, much as $10,000 4K in 2001 became $299 Walmart specials. There are many cheaper-than-expected engineering paths to the true kilohertz display refresh rate over the next 20 years. This engineering stuff is much easier than SpaceX Starlink cramming billion dollar phased arrays into a $500 consumer UFO-on-a-stick, and has already been done DIY: Do-It-Yourself kilohertz refresh rates (at low resolutions).