PhreakShow wrote: ↑27 Feb 2023, 18:37

What annoys me most is the reboot. I don't know if that is possible, but a tool that could change the values on the fly, as soon as I confirm them, would be nice, but I think that's a driver limitation? Then a lot of possible values could be checked with the click of a button.

Are you aware of ToastyX restart64.exe?

It restarts the GPU driver (1 second screen blackout).

Faster than rebooting the computer.

PhreakShow wrote: ↑27 Feb 2023, 18:37

You describe the mechanism of an EDID in your answer. The EDID has integers for all values, but as you said, this doesn't always work out due to rounding. How does the video card determine what to do in this case? Which values does it prioritize over others, like lowering the refresh rate from 60.000 to 59.995 Hz to match the clock? Or vice versa?

Given the bandwidth budget is not exhausted, why does my Intel not even recognize the display until I start increasing the front/back porch by 1 or 2, also increasing the pixel clock along with it?

Sounds like you know what you are doing for the most part, assuming you structured your EDIDs correctly, or are blind-forcing the custom mode (EDID-less, creating EDIDs only via Windows registry).

But here are some tips that you may have overlooked.

I assume you also tried intentionally decreasing your pixel clock to compensate for the increase in porch (etc). Some GPUs, like Intel, need enough VBI time to function properly, because some PC-based GPUs have bugs that prevent them from working if blanking is too small; this may be happening to your Intel GPU -- I have heard of this happening. The workaround is to use a bigger VBI (porches), like your fix. And you can most definitely fix the refresh rate by lowering your pixel clock to compensate. The manufacturer of your embedded screen may not have tested on the flawed Intel GPU that fails to work with too-small blanking intervals (due to housekeeping that needs to execute between refresh cycles).

Since pixel clock is simply the number of pixels per second, if you're running into rounding errors with larger porches, simply readjust both the HORIZONTAL TOTAL and VERTICAL TOTAL a few times until you're able to get back to an exact 60.000, if that's important to you for any reason (even 60.000 might be 59.998 on one GPU, 60.002 on another GPU, 60.001 on yet another). Try to keep your vertical total an even number for simplicity's sake, and your horizontal total divisible by 8. Focusing on atomic-clock quality 60.000000000000000 is a losing battle. But if it's just EDID tidiness, you can easily get the EDID to math out to 60.00000000000000 (read onwards) if your EDID hygiene is a bigger priority than atomic-clock accuracy of refresh cycle timings. Many monitors ship with weird 60Hz refresh rates, including 59.979 or 60.013, but you can get it to math better if you want.

Embedded screens are usually low resolution, and you are only doing 60.000 Hz, and even a 15-year-old GPU can out-bandwidth many embedded screens. So just spend a higher Pixel Clock to get better compatibility. You MUST have a good reason to stay with tiny porches -- do you? If not, then definitely don't do tiny porches. (I still tell screen manufacturers to support both tiny porches and big porches, with flexible tolerances. But not all GPUs work reliably with tiny porches, sadly).

Now let's imagine you have a 1024x768 embedded display. You could simply fudge (1024 + horizontal blanking) and (768 + vertical blanking) to get numbers that create tidier clocks. Keep the numbers even, which creates cleaner multiplication. You might find that 1080x800 (HTxVT) produces easier refresh rate and pixel clock math for you, and makes it easier to hit 60.000. So the side effect is that increasing the vertical total by 1 may necessitate increasing it by 2 instead, while simultaneously increasing/decreasing HT to reach a tidier number that gives better timings math. Whenever unsure, always err on the side of bigger totals / sync / porches. That gives the display more time to sync between pixel rows, or between refresh cycles.

Ask yourself: Why do you need to keep your blankings so tiny? That is usually an expensive compatibility nightmare, especially if there are too few clock cycles between the last pixel of the previous refresh cycle and the first pixel of the new refresh cycle -- not enough time for some housekeeping tasks that occur on certain GPUs while the GPU is waiting between refresh cycles. Just spend extra GPU bandwidth on bigger porches that are much more compatible, and make sure the math rounds off correctly.
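The fudging described above can be done on paper, but here is a minimal Python sketch of the same search. The helper name `tidy_totals` and the blanking limits are hypothetical placeholders, not recommendations for any particular panel. It assumes the pixel clock has to land on a 10 kHz step, which is the granularity of the pixel clock field in a standard EDID detailed timing descriptor; your EDID editor may expose something different.

```python
def tidy_totals(h_active, v_active, refresh_hz=60,
                min_hblank=16, max_hblank=400,
                min_vblank=8, max_vblank=100,
                clock_step_hz=10_000):
    """List (HT, VT, pixel_clock_hz) candidates with no rounding error.

    Rules of thumb from the post: HT divisible by 8, VT even.
    Assumption: the pixel clock must be an exact multiple of clock_step_hz.
    """
    candidates = []
    for ht in range(h_active + min_hblank, h_active + max_hblank + 1):
        if ht % 8 != 0:
            continue
        for vt in range(v_active + min_vblank, v_active + max_vblank + 1):
            if vt % 2 != 0:
                continue
            pixel_clock_hz = ht * vt * refresh_hz
            if pixel_clock_hz % clock_step_hz == 0:
                candidates.append((ht, vt, pixel_clock_hz))
    return candidates


candidates = tidy_totals(1024, 768)
# The 1080x800 total mentioned above is one of the tidy candidates:
# 1080 * 800 * 60 = 51,840,000 Hz
print((1080, 800, 51_840_000) in candidates)
```

Treat the output as a shortlist to try, biggest blanking first, rather than as a guarantee that a given display or GPU will accept any of the candidates.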

Glossary

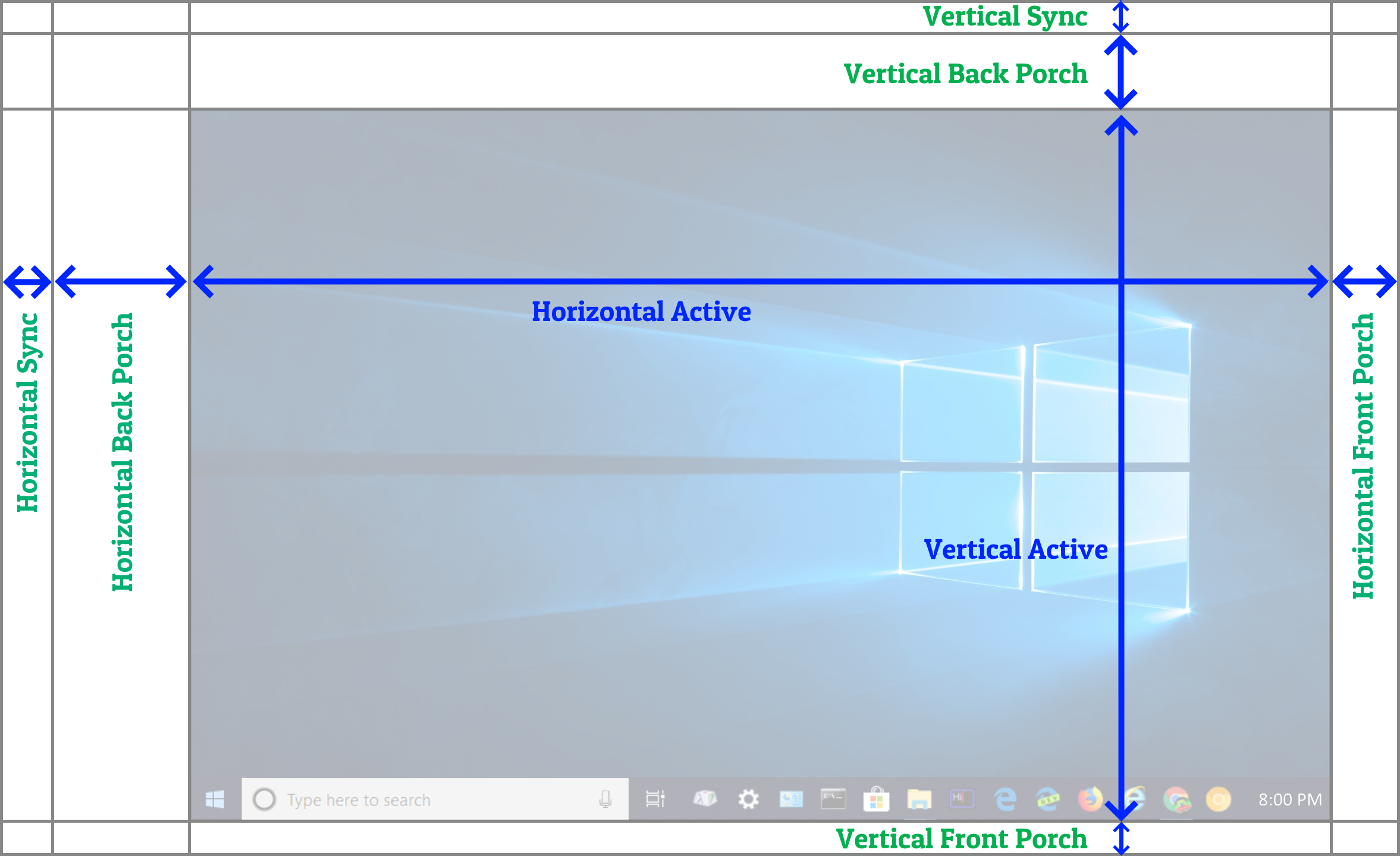

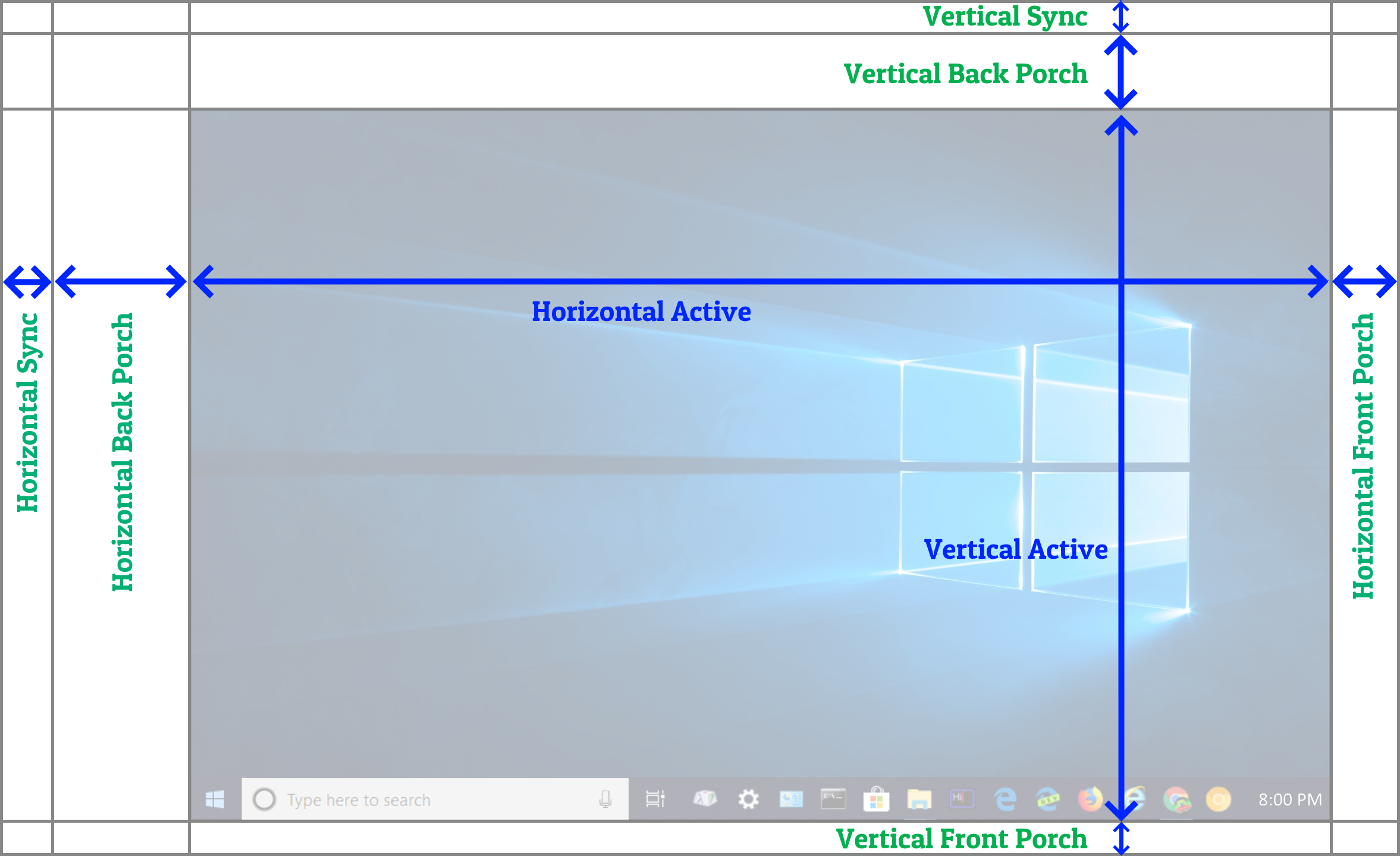

VBI = Vertical Blanking Interval = (Vertical Front Porch + Vertical Sync + Vertical Back Porch)

HBI = Horizontal Blanking Interval = (Horizontal Front Porch + Horizontal Sync + Horizontal Back Porch)

VT = Vertical Total = (All verticals added together, including visible pixels)

HT = Horizontal Total = (All horizontals added together, including visible pixels)

Dot Clock = Pixel Clock = (Horizontal Total) x (Vertical Total) x (Refresh Rate)

Scan Rate = Horizontal Refresh Rate = (Vertical Total) x (Refresh Rate)
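As a rough illustration, the glossary formulas can be written out in Python like this. The `Timing` class and the way the blanking is split into front porch / sync / back porch are hypothetical example values chosen to add up to a 1080x800 total for a 1024x768 screen; they are not taken from any real EDID.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Timing:
    h_active: int
    h_front: int
    h_sync: int
    h_back: int
    v_active: int
    v_front: int
    v_sync: int
    v_back: int

    @property
    def hbi(self):
        # Horizontal Blanking Interval = front porch + sync + back porch
        return self.h_front + self.h_sync + self.h_back

    @property
    def vbi(self):
        # Vertical Blanking Interval = front porch + sync + back porch
        return self.v_front + self.v_sync + self.v_back

    @property
    def ht(self):
        # Horizontal Total = visible pixels + horizontal blanking
        return self.h_active + self.hbi

    @property
    def vt(self):
        # Vertical Total = visible lines + vertical blanking
        return self.v_active + self.vbi

    def pixel_clock_hz(self, refresh_hz):
        # Dot Clock = HT x VT x Refresh Rate
        return self.ht * self.vt * refresh_hz

    def scan_rate_hz(self, refresh_hz):
        # Horizontal scan rate = VT x Refresh Rate
        return self.vt * refresh_hz


# Hypothetical porch split for a 1024x768 panel (totals 1080x800)
example = Timing(1024, 16, 24, 16, 768, 4, 8, 20)
print(example.ht, example.vt)        # 1080 800
print(example.pixel_clock_hz(60))    # 51840000
print(example.scan_rate_hz(60))      # 48000
```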

If you didn't know this by now, you didn't read the links I posted in the earlier message...

Conceptually, signal timings are metaphorically like a virtual resolution with pixels beyond the screen bezels. They had an overscan and sync purpose on CRT tubes; now they're simply dummy (comma-separatoresque) pixels in digital. Analog and digital have a 1:1 timing conversion, which is why passive bufferless 1080p adaptors exist to convert 1080p VGA to 1080p DVI/HDMI (at least in the unpacketized HDMI 1.0 era) and vice versa without needing any buffer in the analog-digital adaptor. We've been using the same signal topology at this signal layer for almost 100 years, from the 1920s Baird/Farnsworth TVs all the way to 2020s DisplayPort. It's just conceptually a serialization of a 2D image into 1D (wire or broadcast), and its timings survived the switch from analog to digital, unmodified!

For example, a 960x960 screen might work better with 1000x1000 including blanking intervals, which makes it 1 million pixels per refresh cycle, and at 60Hz (multiply the virtual resolution by 60.000) that creates a very tidy 60 million pixels per second pixel clock (60.000MHz) that has no rounding errors. And if those porches are not big enough, fudge things around (e.g. 1080x1080, 1000x1080, 1200x1200, etc) until the pixel clock has only zeros after a few significant digits. No rounding errors in your EDID to worry about! So don't do 979x993 totals with a 960x960 specialty LCD; you gotta know how to math all of this out properly to save time. Remember (Horizontal Total) x (Vertical Total) x (Refresh Rate) = (Pixel Clock)? If you didn't know that, now you do, and you can stop testing and simply pick up a pocket calculator first to create some proposed numbers.
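To see the rounding error concretely, here is a small Python sketch comparing the two totals from the example above. The function name `quantized_refresh` is hypothetical, and the 10 kHz clock step is an assumption based on the pixel clock granularity of a standard EDID detailed timing descriptor; how a given GPU actually rounds may differ.

```python
def quantized_refresh(ht, vt, target_hz=60, clock_step_hz=10_000):
    """Return (stored_clock_hz, resulting_refresh_hz).

    Assumption: the pixel clock is rounded to the nearest clock_step_hz
    before being stored, so the refresh rate you actually get is
    stored_clock / (HT x VT).
    """
    pixels_per_refresh = ht * vt
    exact_clock_hz = pixels_per_refresh * target_hz
    steps = (exact_clock_hz + clock_step_hz // 2) // clock_step_hz
    stored_clock_hz = steps * clock_step_hz
    return stored_clock_hz, stored_clock_hz / pixels_per_refresh


# Tidy totals: 1000 x 1000 x 60 = 60,000,000 Hz, exactly representable
print(quantized_refresh(1000, 1000))

# Untidy totals: 979 x 993 x 60 = 58,328,820 Hz, which is not a multiple
# of 10 kHz, so the stored clock and the refresh rate both shift slightly
print(quantized_refresh(979, 993))
```

With the tidy totals the refresh rate comes back as exactly 60; with 979x993 it lands a little over 60.001 Hz under this rounding assumption.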

Imagine it as a virtual resolution bigger than the screen, where pixels are transmitted over the cable one pixel at a time, left-to-right, top-to-bottom (high speed videos of scanout: www.blurbusters.com/scanout ...) ... it's a raster serialization of a 2D image over a 1D cable. Pixel Clock is always exactly every single pixel of this "virtual resolution" times refresh rate. So now you want a tidier Horizontal Total and a tidier Vertical Total (both even numbers that multiply better) that multiply more easily by 60.000 to create an exact pixel clock concurrently with an exact refresh rate, with no rounding error. So you have an incentive to intentionally deviate away from a flawed EDID recommendation by a manufacturer and create your own better, more compatible EDID. But you MUST understand every single number and WHY rounding errors happen.

Remember clock chips and clock crystals are not perfect, so 60.05Hz may be 59.95Hz in realtime (atomic clock). If exact Hz is important to you, you must measure it externally (oscilloscope, logic analyzer, etc). Otherwise, don't worry too much about off-by-a-smidge situations in a nitpicking fashion. Remember, GPU clocks can slew against CPU clocks (temperature), so your timing drift may cause different refresh rates at different temperatures (Example: www.testufo.com/refreshrate#digits=8 after 30 minutes of unattended execution).

This number is very sensitive to slew between CPU clock tickrate and GPU clock tickrate, so even power management (SpeedStep) can affect these numbers. Actual 3rd party measurements also pick up refresh rate drift from mere things like temperature. This happens to all video sources (whether a DVD player or cable box), and you may find that even two adjacent models of television set-top video player boxes play a few milliseconds faster/slower than each other over a period of an hour, even with perfect zero framedrops.

Different GPU vendors do things slightly differently, annoyingly, but most displays tolerate major variations. That's why a cheap $99 LCD such as an HP 60Hz or DELL 60Hz monitor works just fine at 48Hz-72Hz in literally 0.001Hz increments, and some of the units tolerate 3-pixel VBIs (1 pixel front porch, 1 pixel sync, 1 pixel back porch) if the GPU (e.g. NVIDIA) can handle functioning properly at such tight porches. These cheap generic LCDs are still very porch-forgiving (tiny/big HTs and VTs).
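For a sense of scale, here is a tiny Python sketch of how a small refresh rate offset accumulates over time. The function name `drift_ms` and the 60.0001 Hz figure are made-up illustration values, not measurements of any real device.

```python
def drift_ms(nominal_hz, actual_hz, duration_s):
    """Milliseconds gained (+) or lost (-) against the nominal rate."""
    # Extra frames delivered compared to a source running at nominal_hz
    extra_frames = (actual_hz - nominal_hz) * duration_s
    # Convert those frames back into time at the nominal rate
    return extra_frames / nominal_hz * 1000.0


# Hypothetical: a source running 0.0001 Hz fast for one hour
print(drift_ms(60.0, 60.0001, 3600))  # roughly 6 ms ahead
```

So an offset in the fourth decimal place is already enough to put two otherwise identical video sources a few milliseconds apart after an hour.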

And if it wasn't the too-tiny porch issue, why isn't your embedded display more forgiving? Why isn't your display more compatible with bigger porches as a workaround? Etc. I would blame the manufacturer of the embedded display for designing such poor firmware. I wouldn't necessarily use the EDID recommendation that the embedded vendor gives -- I would use an EDID that works on the worst-case Intel/NVIDIA/AMD product.

It seems like your embedded display is astoundingly unusually picky, beyond any reasonable means. I would walk away from it, while lighting it in a dumpster fire.

My humble opinion is that tolerance margins should be +/- 5% at absolute minimum, not +/- 0.1% like your display seems to be.

Unless it's a new technology (e.g. micro OLED or such) that is understandably going through bleeding edge design, LCD panels are very forgiving (sometimes unusually forgiving, such as a generic 60Hz LCD overclocked to 180Hz+ when it didn't have bad firmware code standing in the way).

You may want to buy a different embedded display that is more forgiving. My verdict: If it's just a random ordinary 60Hz LCD from Alibaba, put the embedded display containing sloppy intern-programmed firmware into a dumpster, and light the dumpster on fire as a financial waste of time if you are a business, or a waste of life if this is personal (and you can afford the cost of two or three other random Alibaba embedded displays).

Get a few more candidates. One day of wasted billable hours of work at a business pays for a small fleet of better embedded LCDs to test, one by one.

Now if it's a specialty prototype like a MicroLED or Micro OLED, okay -- fair enough -- but if it's just a mere ordinary 60Hz LCD -- why are you still wasting time with one containing sloppy timing-intolerant firmware? Alibaba offers over 100 models of embedded LCDs, many of which have undocumented >5% forgivingness in their tweakability. Of course, it might be the GPU's fault (porches too tiny for the GPU to properly function -- this can annoyingly happen). If your business product needs GPU-agnostic ability (e.g. an embedded display that works with any GPU), then you have a financial interest in intentionally deviating away from the embedded display manufacturer's recommended EDID.

Or if you're building a custom product (e.g. you are a hardware manufacturer and you specced out an exact size of embedded screen and it is too costly to retool your factory), then you've got a costly pick-your-poison choice in front of you.

Or if it's a DIY box with a custom 3D printed holder for an embedded screen, just get a similar size and print a plastic adaptor to fit your slightly-different-sized (off by a few millimeters or whatever) embedded LCD.

Understandably, my advice may not apply here. But if the screen goes black with smaller AND bigger porches, then ask yourself: How stuck are you with THIS embedded screen if you're unable to deviate away from the manufacturer EDID recommendation?

And if you're hell bent on trying to get the manufacturer EDID recommendation to work, they obviously didn't QA test enough to cover all those edge cases (like certain GPUs that go blank if the VBIs are too tiny because they don't have enough time to execute housekeeping between refresh cycles). Remember, if the horizontal scanrate is 100 Kilohertz, and you have a 50-line VBI (porches + sync), that means you have 50/100,000ths of a second (0.5 milliseconds) between refresh cycles. Your act of increasing by 1 may have given an Intel GPU enough cycles to finish housekeeping between refresh cycles. That may have been what happened. A flaw in a specific GPU that the other GPU did not have. Etc.

So that's why bigger porches are almost always better for compatibility. Yesteryear, big porches were there to give enough time for the momentum of the electron beam to move back to the top edge. But today, big porches are still needed as guard delays for maximal compatibility, to give some signal sources enough time to do between-refresh-cycle processing tasks. I don't know what the minimums are for all GPUs ever manufactured in humankind, but my NVIDIA GPU can do ultra-tiny 3-pixel VBIs just fine (1 pixel each!). But I've seen certain Intel and AMD GPUs utterly and completely fail at that before. Now, if you're failing at much bigger porches too (damned if you do, damned if you don't), then that embedded screen feels like an unnecessary time and money drain.
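The arithmetic in that example can be sketched in a couple of lines of Python. The function name `vbi_duration_us` is hypothetical, and the numbers are just the 100 kHz / 50-line example from above.

```python
def vbi_duration_us(vbi_lines, scan_rate_hz):
    """Time spent in vertical blanking per refresh cycle, in microseconds.

    Each scanline takes 1 / scan_rate_hz seconds, and the VBI is
    vbi_lines scanlines long (front porch + sync + back porch).
    """
    return vbi_lines * 1_000_000 / scan_rate_hz


print(vbi_duration_us(50, 100_000))  # 500.0 microseconds
print(vbi_duration_us(51, 100_000))  # 510.0 microseconds
```

In this example, one extra line of vertical porch buys 10 more microseconds between refresh cycles, which may or may not be what a marginal GPU needed; that part is a guess.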

I have lost count, far beyond my two hands, of the many times I've told manufacturers to fix their flawed EDID recommendation to improve compatibility or fix problems like frameskipping (e.g. one example is the famous 240Hz frameskipping debacle at viewtopic.php?t=3598 ...) and this is big-budget 240Hz, not tiny-budget 60Hz embedded LCDs that might just be repurposed 15-year-old tablet/smartphone screens from an old fab factory they want to keep running, with old inflexible duct tape firmware making them barely work on only the one computer that they tested. Barf.

Hopefully this helps you surgically select the right tree in the forest -- it's all often a learning experience. Exit the side door away from time waste, and just surgically hone in on perfection faster. If the embedded screen won't let you (fails at both significant increase/decrease, e.g. -2 and +2 changes in porches etc), get a different one (with more forgiving tolerances). I have no patience for intolerant LCDs anymore, given that >99% of what I use is very forgiving nowadays.

SUMMARY

- Numero Uno Most Important Rule: manufacturer EDID = only a guideline. The manufacturer EDID recommendation on many specialty screens is sometimes just a "suggestion". Deviate away from it to suit your priorities (e.g. better compatibility), especially if you have the ability to reprogram the EDID.

- Second Rule: Bigger is better for bug-free compatibility. Unless your embedded screen is absurdly high refresh rate (like 1000Hz) or absurdly high resolution (like 8K), please just merely waste your GPU pixel clock on more porch/sync padding for Intel+NVIDIA+AMD compatibility. Thank me later.

- Third Rule: Pick up a pocket calculator more often. If EDID "60.000" tidiness is important, keep inflating the totals on paper (away from testing) until you create a mathematically neater virtual resolution (Horizontal Total) x (Vertical Total) that, when multiplied by the exact refresh rate, creates a very tidy Pixel Clock number that has few significant digits. (Or vice versa, a tidy total number of pixels per refresh cycle that is easy to multiply by 60). This minimizes your rounding errors for EDID editors that give you a limited number of significant digits. Once you've got your HT x VT x Hz = PixelClock correct, split your HT and VT whichever way you want (porches and sync); it doesn't matter as long as your HT and VT are unchanged, and it works on all your GPUs.

- Embedded screen selection flexibility: If this embedded screen won't let you do it on nearly the first try, get a different embedded screen that lets you succeed at (1)(2)(3) on the FIRST TRY.